README-AI

Automated README file generator, powered by large language model APIs

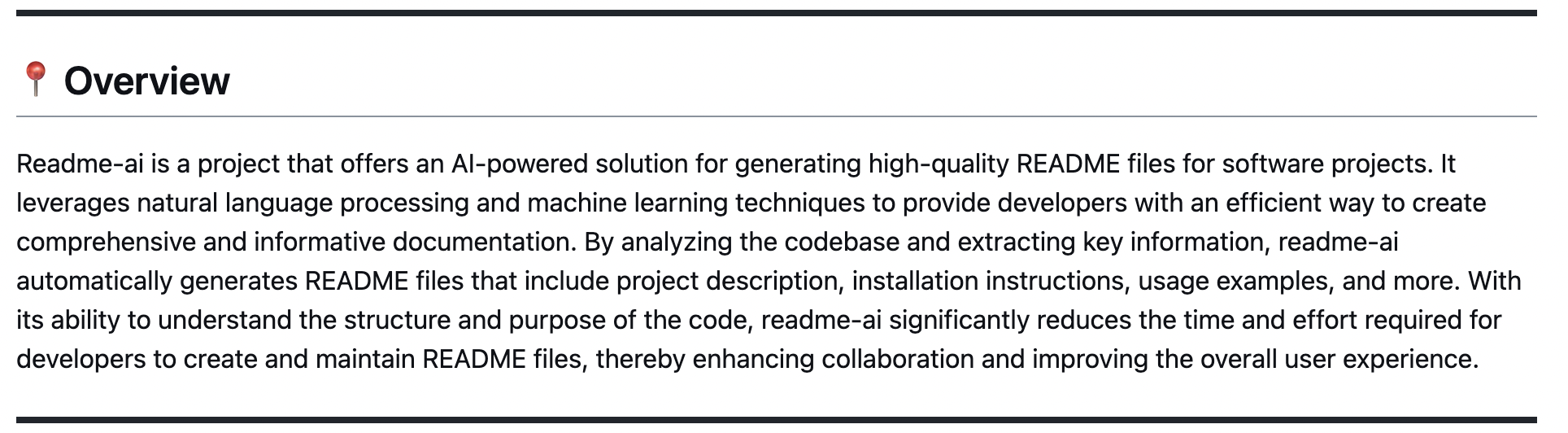

📍 Overview

Objective

Readme-ai is a developer tool that auto-generates README.md files by combining data extraction with generative AI. Provide a repository URL or a local path to your codebase, and a well-structured, detailed README file is generated for you.

Motivation

Readme-ai streamlines documentation creation and maintenance, improving developer productivity. The project aims to help developers of all skill levels, across all domains, better understand, use, and contribute to open-source software.

👾 Demo

CLI

readmeai-cli-demo

Streamlit

readmeai-streamlit-demo

[!TIP]

Check out this YouTube tutorial created by a community member!

🧬 Features

- Flexible README Generation: Robust repository context extraction engine combined with generative AI.

- Customizable Output: Dozens of CLI options for styling/formatting, badges, header designs, and more.

- Language Agnostic: Works across a wide range of programming languages and project types.

- Multi-LLM Support: Compatible with OpenAI, Ollama, Google Gemini and Offline Mode.

- Offline Mode: Generate a boilerplate README without calling an external API.

See a few examples of the README-AI customization options below:

- --emojis --image custom --badge-color DE3163 --header-style compact --toc-style links

- --image cloud --header-style compact --toc-style fold

- --align left --badge-style flat-square --image cloud

- --align left --badge-style flat --image gradient

- --badge-style flat --image custom

- --badge-style skills-light --image grey

- --badge-style flat-square

- --badge-style flat --image black

- --image custom --badge-color 00ffe9 --badge-style flat-square --header-style classic

- --image llm --badge-style plastic --header-style classic

- --image custom --badge-color BA0098 --badge-style flat-square --header-style modern --toc-style fold

See the Configuration section for a complete list of CLI options.

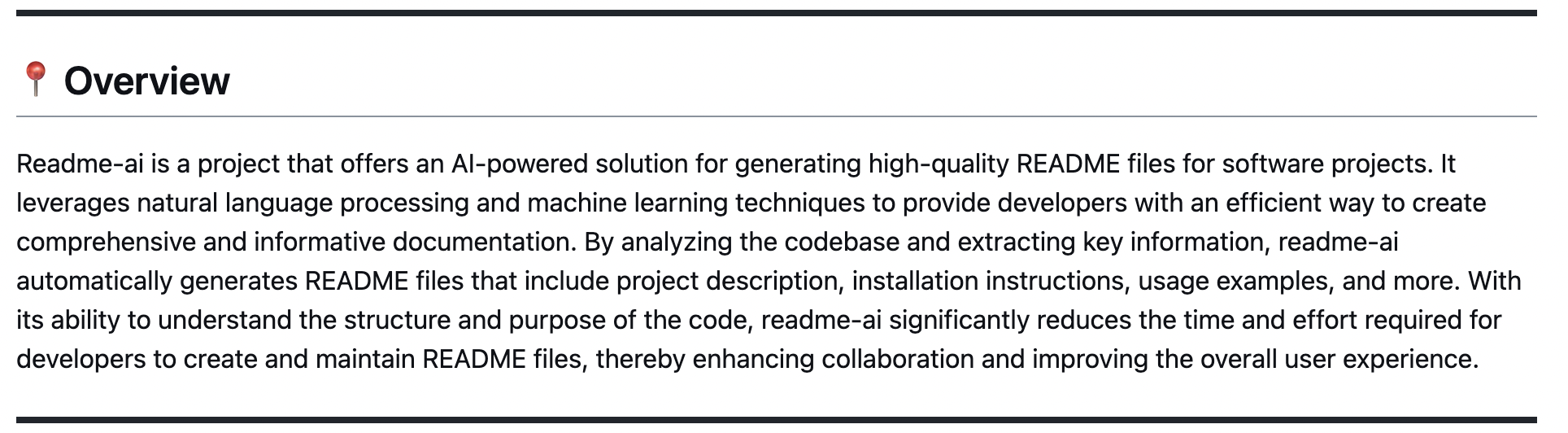

👋 Overview

Overview

|

|

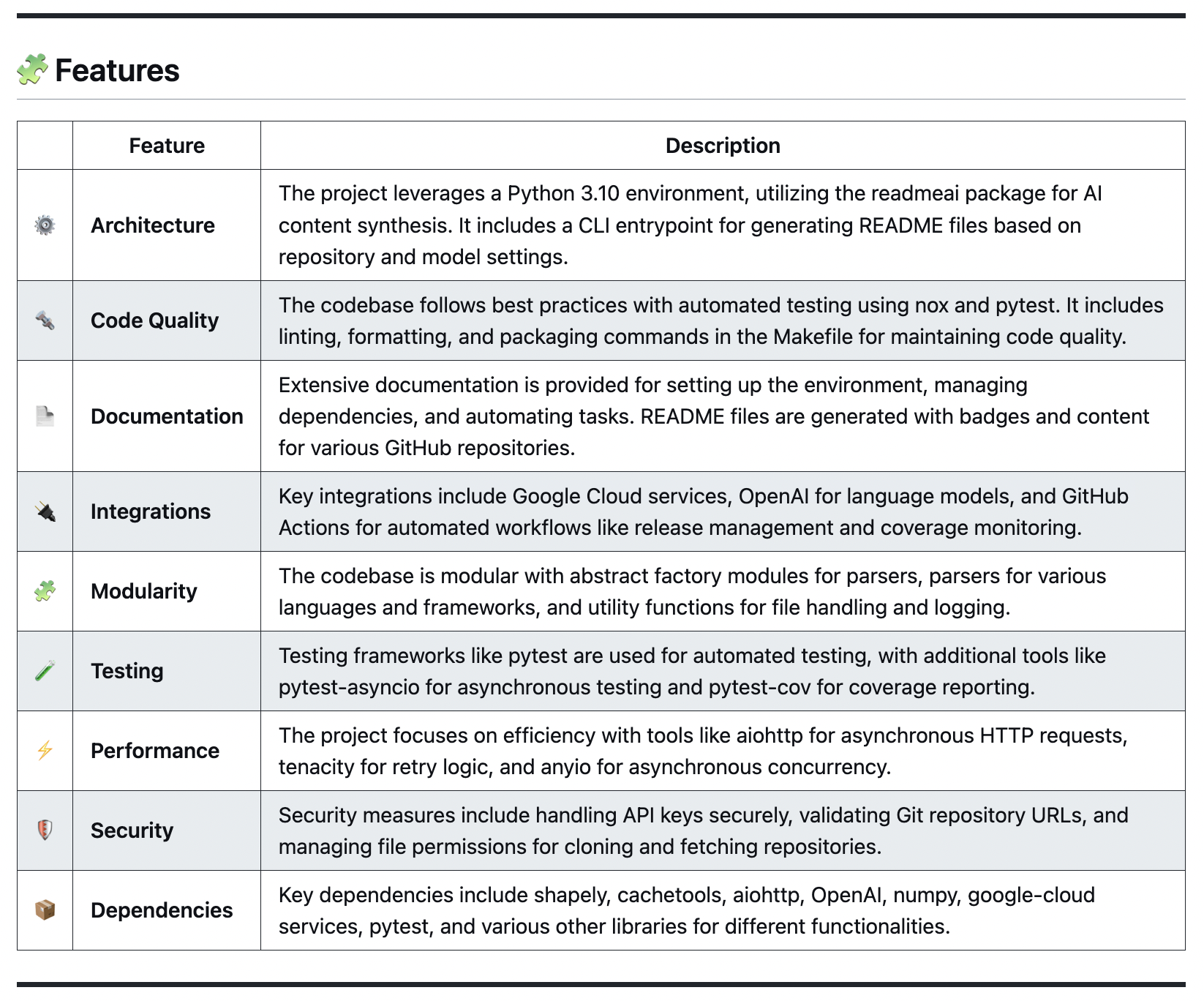

🧩 Features

📄 Codebase Documentation

🚀 Quickstart Commands

Getting Started

languagedependency

InstallUsageTest

parsers

|

|

🔰 Contributing Guidelines

Contributing Guide

|

|

Additional Sections

Project RoadmapContributing GuidelinesLicenseAcknowledgements

|

|

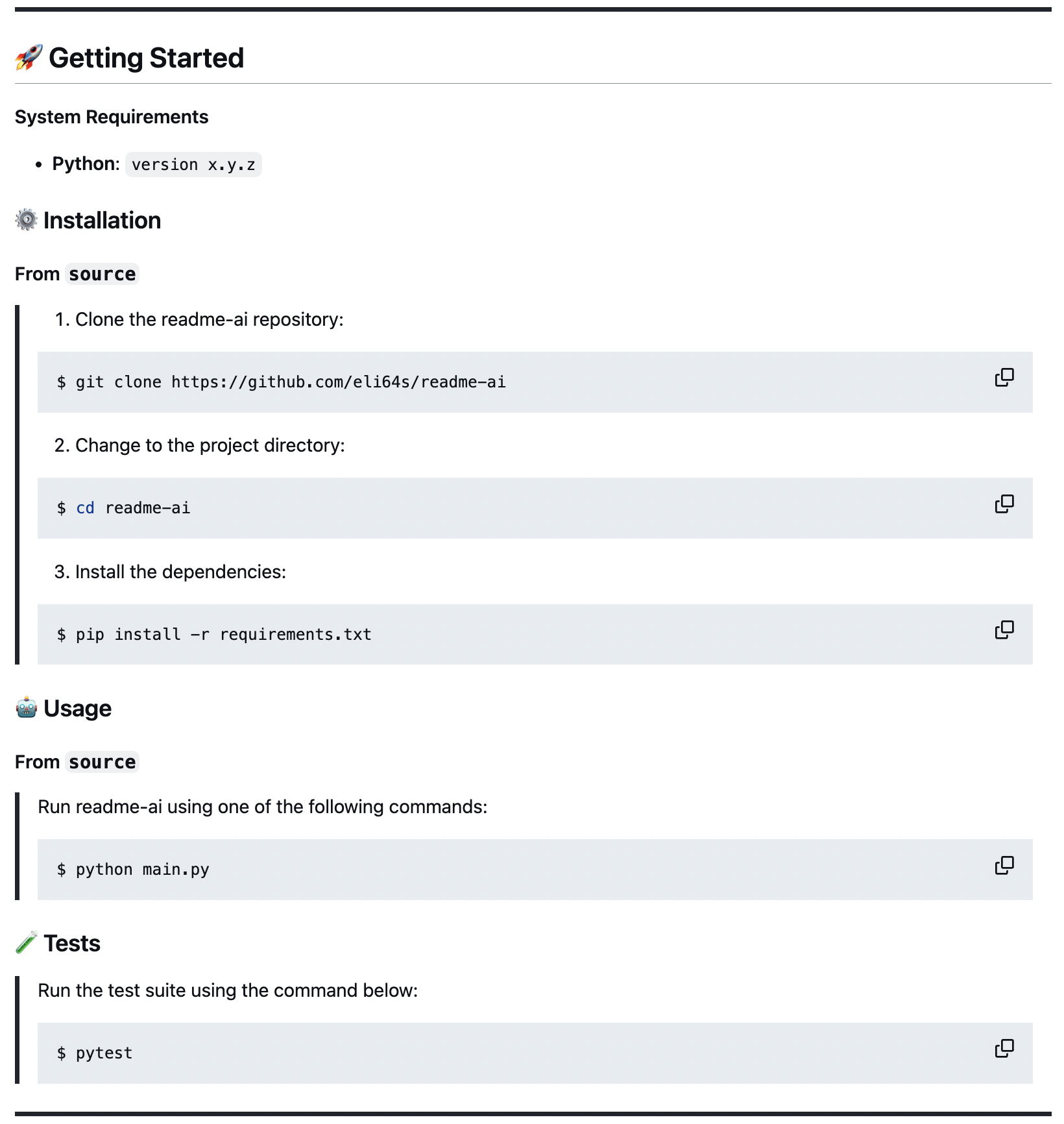

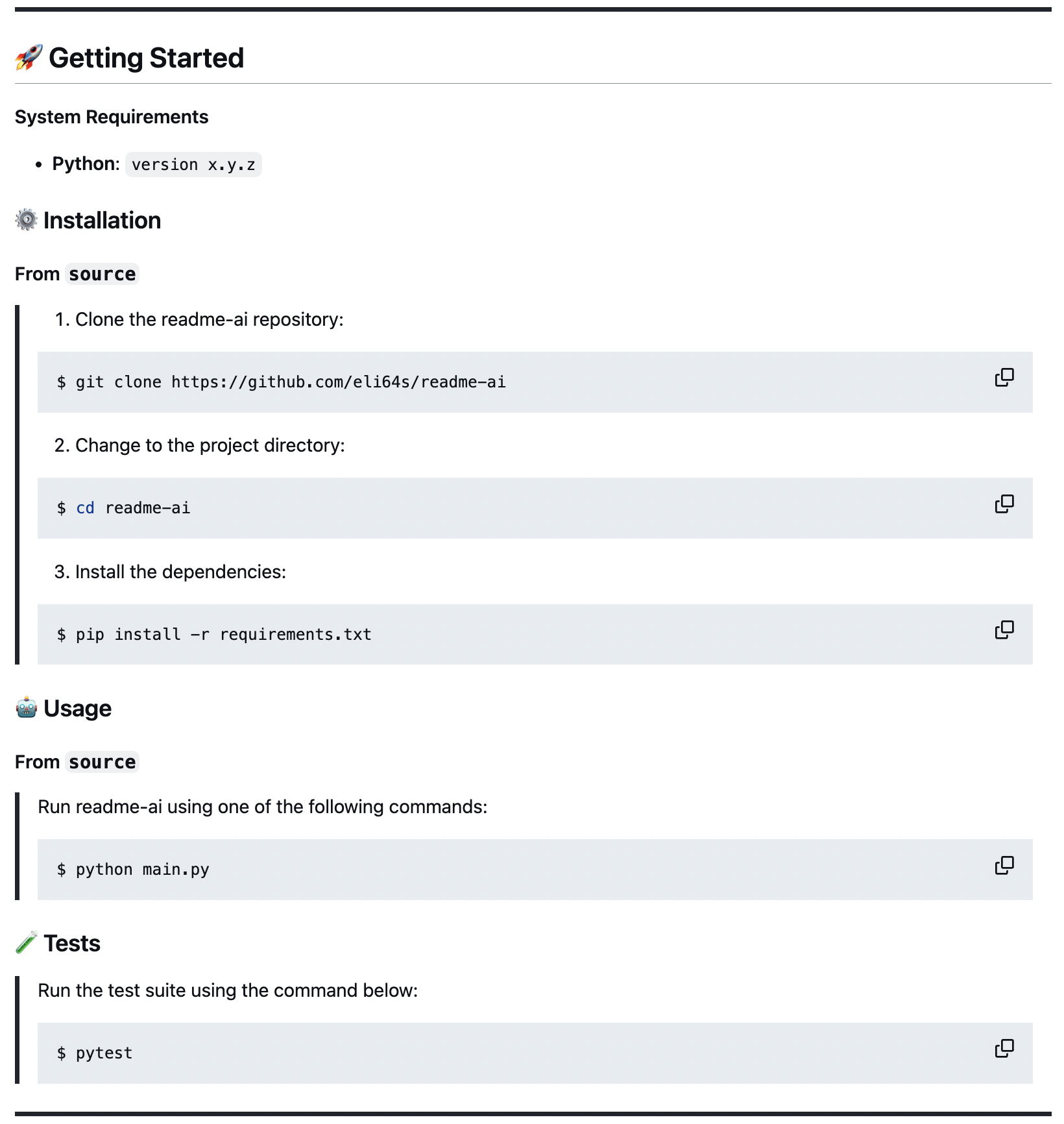

🚀 Getting Started

System Requirements:

- Python 3.9+

- Package manager/Container: pip, pipx, docker

- LLM service: OpenAI, Ollama, Google Gemini, Offline Mode (Anthropic and LiteLLM coming soon!)

Repository URL or Local Path:

Make sure to have a repository URL or local directory path ready for the CLI.

Select an LLM API Service:

- OpenAI: Recommended, requires an account setup and API key.

- Ollama: Free and open-source, potentially slower and more resource-intensive.

- Google Gemini: Requires a Google Cloud account and API key.

- Offline Mode: Generates a boilerplate README without making API calls.

⚙️ Installation

Using pip

❯ pip install readmeai

Using pipx

❯ pipx install readmeai

[!TIP]

Use pipx to install and run Python command-line applications without causing dependency conflicts with other packages!

Using docker

❯ docker pull zeroxeli/readme-ai:latest

From source

Build readme-ai

Clone repository and navigate to the project directory:

❯ git clone https://github.com/eli64s/readme-ai

❯ cd readme-ai

Using bash

❯ bash setup/setup.sh

Using poetry

❯ poetry install

🤖 Usage

Environment Variables

OpenAI

Generate an OpenAI API key and set it as the environment variable OPENAI_API_KEY.

On Linux or macOS:

❯ export OPENAI_API_KEY=<your_api_key>

On Windows:

❯ set OPENAI_API_KEY=<your_api_key>

Ollama

Pull a model of your choice from the Ollama registry:

❯ ollama pull mistral:latest

Start the Ollama server:

❯ export OLLAMA_HOST=127.0.0.1 && ollama serve

For more details, check out the Ollama repository.

Google Gemini

Generate a Google API key and set it as the environment variable GOOGLE_API_KEY.

❯ export GOOGLE_API_KEY=<your_api_key>
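If you wrap readme-ai in a script, it can help to pick the `--api` value from whichever key is set. The following is a minimal sketch of that idea, not part of readme-ai: the helper name `pick_api` is hypothetical, while the variable names and `--api` values are the ones used in this README. Ollama needs no API key, so it is not detected here and would have to be selected explicitly.

```python
import os


def pick_api(env=None):
    """Return an --api value based on which API key is set.

    Hypothetical helper, not part of readme-ai. Falls back to "offline",
    which generates a boilerplate README without API calls.
    """
    env = os.environ if env is None else env
    if env.get("OPENAI_API_KEY"):
        return "openai"
    if env.get("GOOGLE_API_KEY"):
        return "gemini"
    return "offline"


# Uses the real process environment when no mapping is passed in.
print(pick_api())
```

Passing a plain dict instead of `os.environ` keeps the helper easy to test without touching real credentials.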

Running README-AI

Using pip

With OpenAI API:

❯ readmeai --repository https://github.com/eli64s/readme-ai \

--api openai \

--model gpt-3.5-turbo

With Ollama:

❯ readmeai --repository https://github.com/eli64s/readme-ai \

--api ollama \

--model llama3

With Gemini:

❯ readmeai --repository https://github.com/eli64s/readme-ai \

--api gemini \

--model gemini-1.5-flash

Advanced Options:

❯ readmeai --repository https://github.com/eli64s/readme-ai \

--output readmeai.md \

--api openai \

--model gpt-4-turbo \

--badge-color A931EC \

--badge-style flat-square \

--header-style compact \

--toc-style fold \

--temperature 0.1 \

--tree-depth 2 \

--image llm \

--emojis
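Long commands like the one above are easy to get wrong by hand, for example by dropping a line-continuation backslash. As a rough sketch, the argument list can be assembled in Python instead. The helper `build_command` is hypothetical and not part of readme-ai; it only maps keyword names to the flags documented in this README and assumes that boolean options such as `--emojis` take no value.

```python
import shlex


def build_command(repository, **options):
    """Build a readmeai argument list from keyword options.

    Hypothetical helper: each key becomes a flag by replacing "_" with "-".
    True emits a bare flag (e.g. --emojis); False and None are skipped.
    """
    argv = ["readmeai", "--repository", repository]
    for key, value in options.items():
        flag = "--" + key.replace("_", "-")
        if value is True:
            argv.append(flag)
        elif value is not None and value is not False:
            argv.extend([flag, str(value)])
    return argv


cmd = build_command(
    "https://github.com/eli64s/readme-ai",
    api="openai",
    model="gpt-4-turbo",
    badge_style="flat-square",
    tree_depth=2,
    emojis=True,
)
# Print a copy-pasteable, shell-quoted version of the command.
print(shlex.join(cmd))
```

The resulting list can be passed to `subprocess.run(cmd, check=True)` to run the CLI, provided readmeai is installed and the relevant API key is set.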

Using docker

❯ docker run -it \

-e OPENAI_API_KEY=$OPENAI_API_KEY \

-v "$(pwd)":/app zeroxeli/readme-ai:latest \

-r https://github.com/eli64s/readme-ai

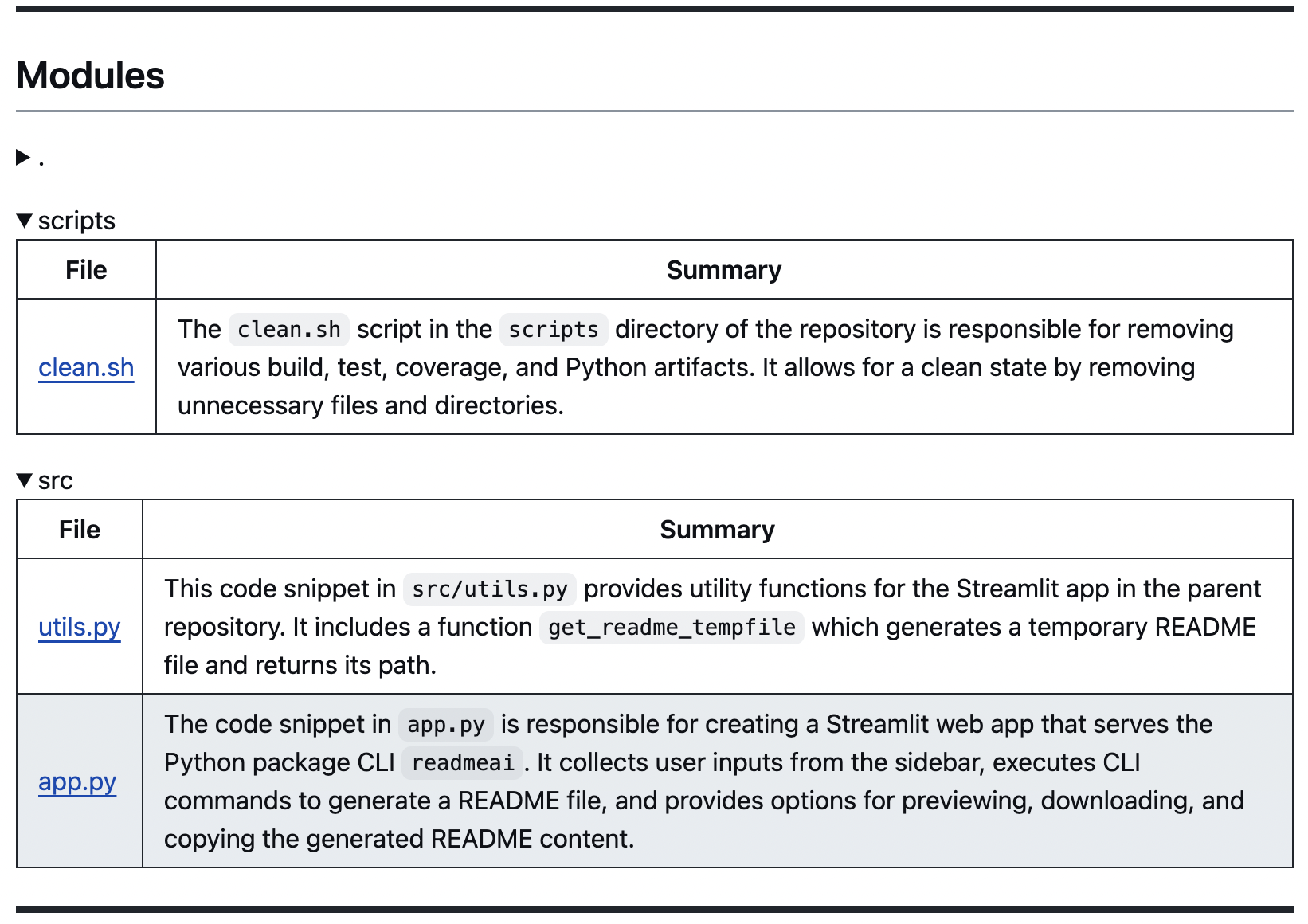

Using streamlit

Try directly in your browser on Streamlit, no installation required! For more details, see the readme-ai-streamlit repository.

From source

Using readme-ai

Using bash

❯ conda activate readmeai

❯ python3 -m readmeai.cli.main -r https://github.com/eli64s/readme-ai

Using poetry

❯ poetry shell

❯ poetry run python3 -m readmeai.cli.main -r https://github.com/eli64s/readme-ai

🧪 Testing

Using pytest

❯ make test

Using nox

❯ make test-nox

[!TIP]

Use nox to test the application against multiple Python environments and dependencies!

🔧 Configuration

Customize your README generation using these CLI options:

| Option | Description | Default |
|---|---|---|
| --align | Text align in header | center |
| --api | LLM API service (openai, ollama, gemini, offline) | offline |
| --badge-color | Badge color name or hex code | 0080ff |
| --badge-style | Badge icon style type | flat |
| --base-url | Base URL for the repository | v1/chat/completions |
| --context-window | Maximum context window of the LLM API | 3999 |
| --emojis | Adds emojis to the README header sections | False |
| --header-style | Header template style | classic |
| --image | Project logo image | blue |
| --model | Specific LLM model to use | gpt-3.5-turbo |
| --output | Output filename | readme-ai.md |
| --rate-limit | Maximum API requests per minute | 5 |
| --repository | Repository URL or local directory path | None |
| --temperature | Creativity level for content generation | 0.9 |
| --toc-style | Table of contents template style | bullet |
| --top-p | Probability of the top-p sampling method | 0.9 |
| --tree-depth | Maximum depth of the directory tree structure | 2 |

[!TIP]

For a full list of options, run readmeai --help in your terminal.
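To illustrate how defaults and overrides combine, here is a small sketch that merges user-supplied options over a subset of the defaults listed in the table above. The `DEFAULTS` dict and the `resolve_options` helper are illustrative assumptions: they are copied by hand from this README and are not read from, or provided by, readme-ai.

```python
# A subset of the defaults from the table above, copied by hand.
# Illustrative only; readme-ai does not expose this dict.
DEFAULTS = {
    "align": "center",
    "api": "offline",
    "badge-color": "0080ff",
    "badge-style": "flat",
    "header-style": "classic",
    "image": "blue",
    "output": "readme-ai.md",
    "toc-style": "bullet",
    "tree-depth": 2,
}


def resolve_options(overrides):
    """Merge overrides over DEFAULTS, rejecting option names not listed."""
    unknown = set(overrides) - set(DEFAULTS)
    if unknown:
        raise KeyError(f"unknown option(s): {sorted(unknown)}")
    # Later keys win, so overrides replace the defaults.
    return {**DEFAULTS, **overrides}


settings = resolve_options({"image": "cloud", "toc-style": "fold"})
print(settings["image"], settings["api"])
```

Rejecting unknown names up front catches typos such as `badge_style` before they reach the command line.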

Project Badges

The --badge-style option lets you select the style of the default badge set.

Supported styles: default, flat, flat-square, for-the-badge, plastic, skills, skills-light, social.

When providing the --badge-style option, readme-ai does two things:

- Formats the default badge set to match the selection (e.g., flat or flat-square).

- Generates an additional badge set representing your project's dependencies and tech stack (e.g., Python or Docker).

Example

❯ readmeai --badge-style flat-square --repository https://github.com/eli64s/readme-ai

Output

{... project logo ...}

{... project name ...}

{...project slogan...}

Developed with the software and tools below.

{... end of header ...}

Project Logo

Select a project logo using the --image option.

Available logos: blue, gradient, black, cloud, purple, grey.

For custom images, see the following options:

- Use --image custom to invoke a prompt for a local image file path or URL.

- Use --image llm to generate a project logo using an LLM API (OpenAI only).

🎨 Examples

[!NOTE]

See additional README file examples here.

📌 Roadmap

📒 Changelog

Changelog

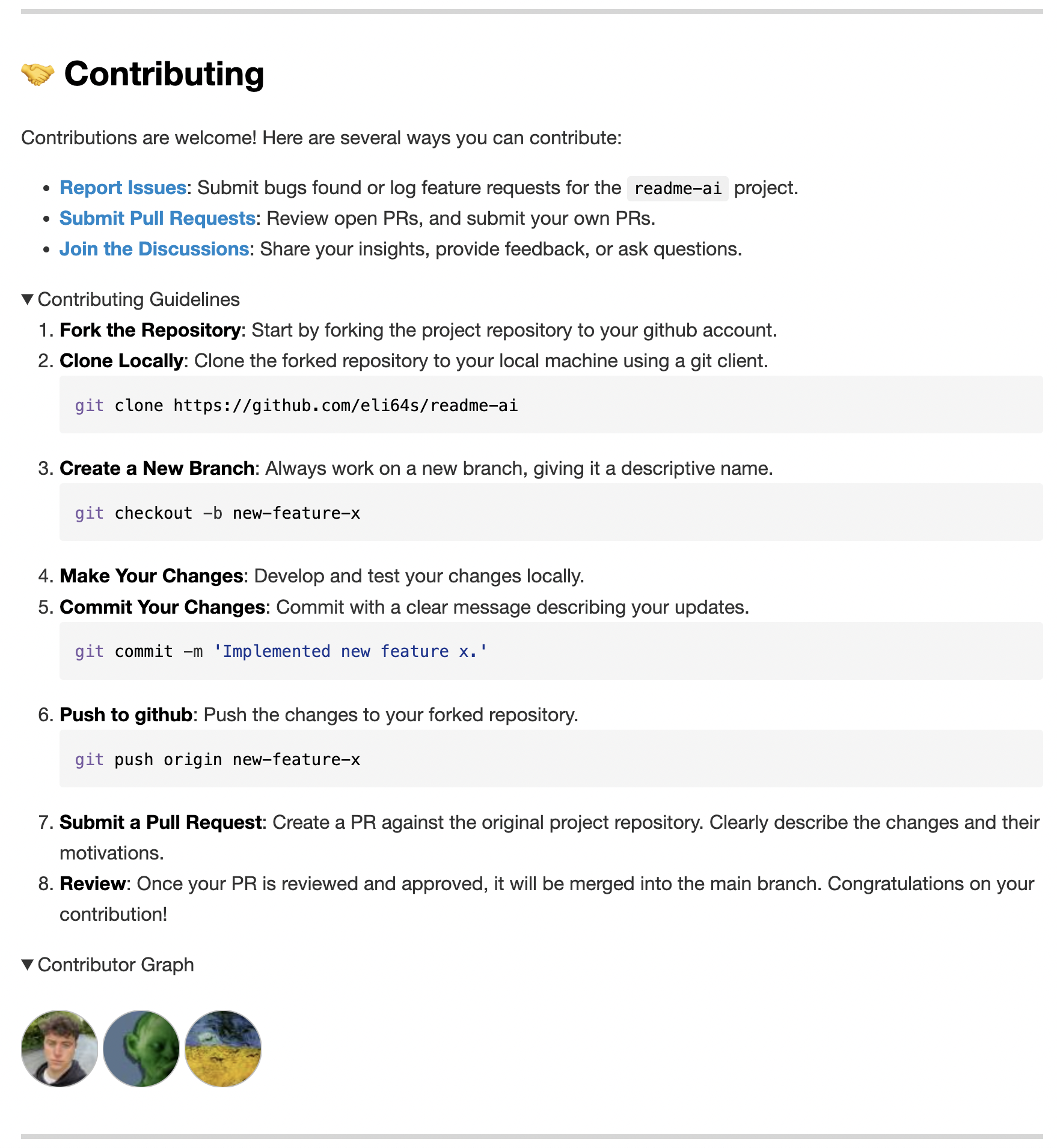

🤝 Contributing

To grow the project, we need your help! See the links below to get started.

🎗 License

MIT

👊 Acknowledgments
