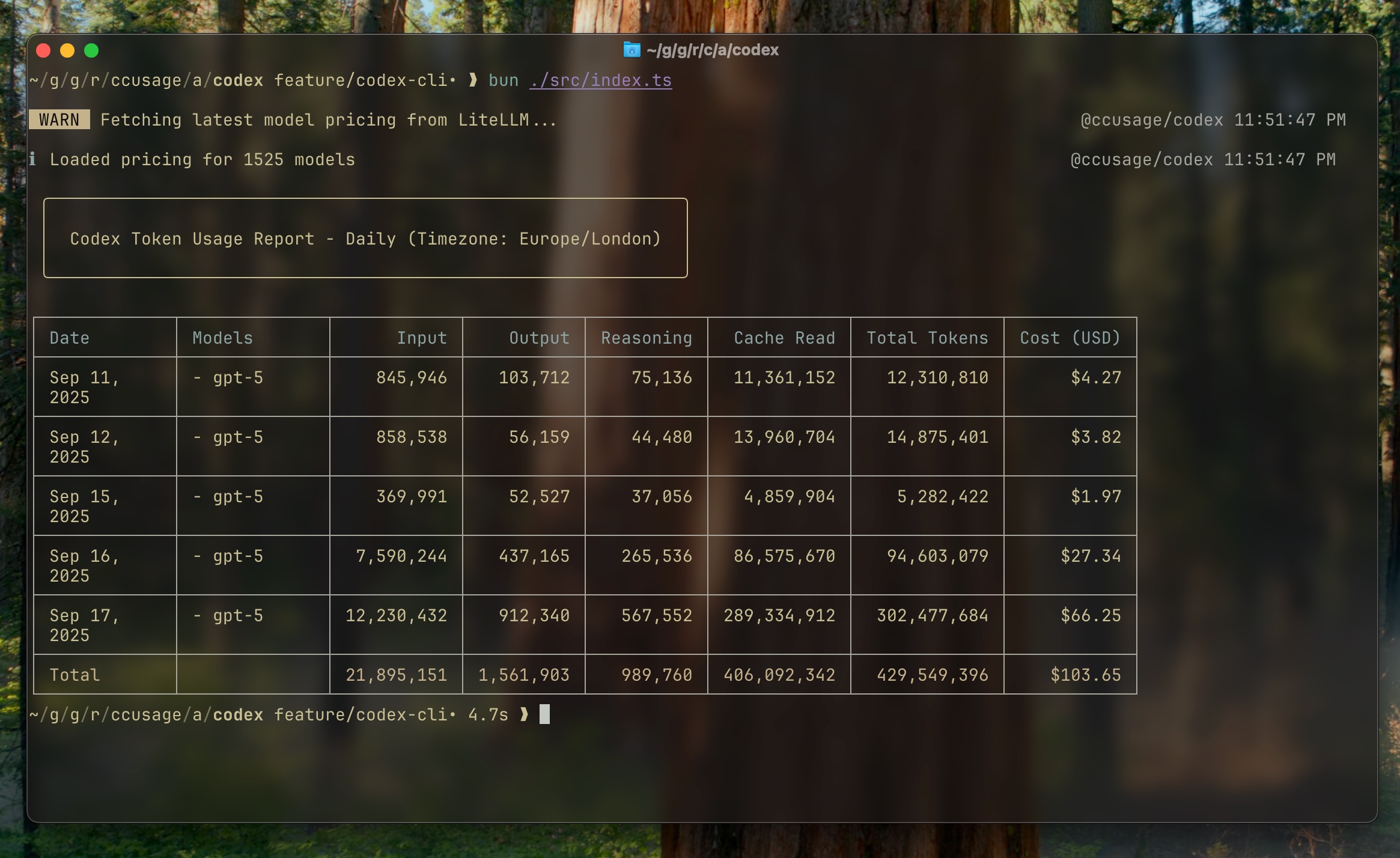

@ccusage/codex

Analyze OpenAI Codex CLI usage logs with the same reporting experience as ccusage.

⚠️ Beta: The Codex CLI support is experimental. Expect breaking changes until the upstream Codex tooling stabilizes.

Quick Start

npx @ccusage/codex@latest --help

bunx @ccusage/codex@latest --help

pnpm dlx @ccusage/codex

pnpx @ccusage/codex

deno run -E -R=$HOME/.codex/ -S=homedir -N='raw.githubusercontent.com:443' npm:@ccusage/codex@latest --help

⚠️ Critical for bunx users: Bun 1.2.x's bunx prioritizes binaries matching the package name suffix when given a scoped package. For @ccusage/codex, it looks for a codex binary in PATH first. If you have an existing codex command installed (e.g., GitHub Copilot's codex), that will be executed instead. Always use bunx @ccusage/codex@latest with the version tag to force bunx to fetch and run the correct package.

Recommended: Shell Alias

Since npx @ccusage/codex@latest is quite long to type repeatedly, we strongly recommend setting up a shell alias:

ccusage-codex daily

ccusage-codex monthly --json
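The two commands above assume the alias has already been defined. A minimal sketch for bash or zsh, assuming you keep the `ccusage-codex` name and use the npx runner (swap in `bunx @ccusage/codex@latest` if you prefer Bun); add the line to `~/.bashrc` or `~/.zshrc`:

```shell
# Assumption: the alias name matches the `ccusage-codex` examples above.
# Keeping @latest in the alias also covers the bunx caveat described earlier.
alias ccusage-codex='npx @ccusage/codex@latest'
```

Open a new shell (or re-source the rc file) before running `ccusage-codex daily`.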

💡 The CLI looks for Codex session JSONL files under CODEX_HOME (defaults to ~/.codex).
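To check what the CLI is likely to find before running a report, you can resolve the same directory yourself. This is only a sketch: the helper name `count_codex_jsonl` is hypothetical, and it counts every `.jsonl` file under the directory without assuming any particular subfolder layout.

```shell
# Hypothetical helper: count JSONL files under CODEX_HOME (or ~/.codex if unset).
count_codex_jsonl() {
  codex_home="${CODEX_HOME:-$HOME/.codex}"
  if [ -d "$codex_home" ]; then
    # tr strips the padding some wc implementations add.
    find "$codex_home" -type f -name '*.jsonl' | wc -l | tr -d ' '
  else
    echo 0
  fi
}

count_codex_jsonl
```

A result of 0 suggests either that `CODEX_HOME` points at the wrong place or that no sessions have been recorded yet.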

Common Commands

npx @ccusage/codex@latest daily

npx @ccusage/codex@latest daily --since 20250911 --until 20250917

npx @ccusage/codex@latest daily --json

npx @ccusage/codex@latest monthly

npx @ccusage/codex@latest monthly --json

npx @ccusage/codex@latest sessions
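The `--since` and `--until` values in the example above are written as `YYYYMMDD`. If you want a rolling window rather than fixed dates, the bounds can be computed in the shell. This is a sketch only: `days_ago` is a hypothetical helper, and it tries GNU `date` first and falls back to the BSD/macOS syntax.

```shell
# Hypothetical helper: print the date N days ago as YYYYMMDD.
days_ago() {
  # GNU date understands -d; BSD/macOS date uses -v instead.
  date -d "$1 days ago" +%Y%m%d 2>/dev/null || date -v-"$1"d +%Y%m%d
}

since="$(days_ago 6)"
until_date="$(date +%Y%m%d)"

# Print the command rather than running it, so you can inspect the dates first.
echo "npx @ccusage/codex@latest daily --since $since --until $until_date"
```

Copy the printed command to run the report for the last seven calendar days.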

Useful environment variables:

- CODEX_HOME – override the root directory that contains Codex session folders

- LOG_LEVEL – control consola log verbosity (0 silent … 5 trace)
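Both variables can be set for a single invocation without exporting them. The wrapper below is a sketch, not part of the package: `codex_daily_json` is a hypothetical name, and it assumes that `LOG_LEVEL=0` (documented above as silent) is what you want when piping JSON to another tool.

```shell
# Hypothetical wrapper: run the daily JSON report against a chosen Codex directory.
# $1 is the directory to read; it defaults to ~/.codex, matching the documented default.
codex_daily_json() {
  CODEX_HOME="${1:-$HOME/.codex}" LOG_LEVEL=0 npx @ccusage/codex@latest daily --json
}

# Example (not executed here):
#   codex_daily_json /path/to/another/codex-home > daily.json
```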

ℹ️ The CLI now relies on the model metadata recorded in each turn_context. Sessions emitted during early September 2025 that lack this metadata are skipped to avoid mispricing. Newer builds of the Codex CLI restore the model field, and aliases such as gpt-5-codex automatically resolve to the correct LiteLLM pricing entry.

📦 For legacy JSONL files that never emitted turn_context metadata, the CLI falls back to treating the tokens as gpt-5 so that usage still appears in reports (pricing is therefore approximate for those sessions). In JSON output you will also see "isFallback": true on those model entries.
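Because fallback pricing is approximate, it can be useful to know how many model entries in a report were affected. The sketch below counts `"isFallback": true` occurrences in JSON read from stdin. It deliberately uses a text match rather than assuming anything about the surrounding JSON structure, which is not described here; `count_fallback_entries` is a hypothetical helper name.

```shell
# Hypothetical helper: count `"isFallback": true` occurrences in JSON on stdin.
count_fallback_entries() {
  # -o prints one line per match; the pattern tolerates any spacing around the colon.
  { grep -Eo '"isFallback"[[:space:]]*:[[:space:]]*true' || true; } | wc -l | tr -d ' '
}

# Example (not executed here):
#   npx @ccusage/codex@latest daily --json | count_fallback_entries
```

A non-zero count means some of the reported costs were priced as gpt-5 and should be read as estimates.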

Features

- 📊 Responsive terminal tables shared with the ccusage CLI

- 💵 Offline-first pricing cache with automatic LiteLLM refresh when needed

- 🤖 Per-model token and cost aggregation, including cached token accounting

- 📅 Daily and monthly rollups with identical CLI options

- 📄 JSON output for further processing or scripting

Documentation

For detailed guides and examples, visit ccusage.com/guide/codex.

Check out ccusage: The Claude Code cost scorecard that went viral

License

MIT © @ryoppippi