Product

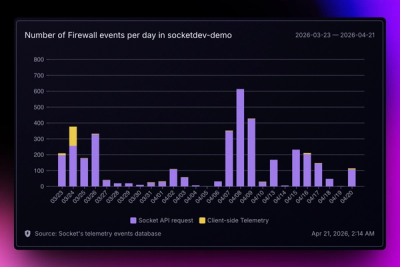

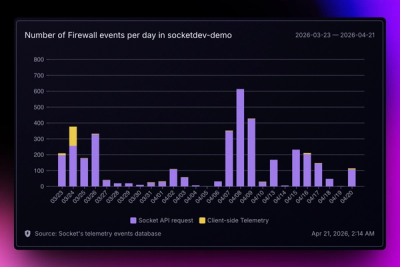

Introducing Reports: An Extensible Reporting Framework for Socket Data

Explore exportable charts for vulnerabilities, dependencies, and usage with Reports, Socket’s new extensible reporting framework.

@portkey-ai/mcp-tool-filter


Ultra-fast semantic tool filtering for MCP (Model Context Protocol) servers using embedding similarity. Reduce your tool context from 1000+ tools down to the most relevant 10-20 tools in under 10ms.

npm install @portkey-ai/mcp-tool-filter

import { MCPToolFilter } from '@portkey-ai/mcp-tool-filter';

// 1. Initialize the filter (choose ONE embedding provider)

// Option A: Local embeddings (RECOMMENDED for low latency, < 5ms)
const filter = new MCPToolFilter({
  embedding: {
    provider: 'local',
  }
});

// Option B: API embeddings (for highest accuracy); use this instead of Option A
// const filter = new MCPToolFilter({
//   embedding: {
//     provider: 'openai',
//     apiKey: process.env.OPENAI_API_KEY,
//   }
// });

// 2. Load your MCP servers (one-time setup)
await filter.initialize(mcpServers);

// 3. Filter tools based on context
const result = await filter.filter(
  "Search my emails for the Q4 budget discussion"
);

// 4. Use the filtered tools in your LLM request
console.log(result.tools); // Top 20 most relevant tools
console.log(result.metrics.totalTime); // Time in ms, e.g., ~2 for local, ~500 for API

Pros:

Cons:

const filter = new MCPToolFilter({
  embedding: {
    provider: 'local',
    model: 'Xenova/all-MiniLM-L6-v2', // Optional: default model
    quantized: true, // Optional: use quantized model for speed (default: true)
  }
});

Available Models:

- Xenova/all-MiniLM-L6-v2 (default) - 384 dimensions, very fast
- Xenova/all-MiniLM-L12-v2 - 384 dimensions, more accurate
- Xenova/bge-small-en-v1.5 - 384 dimensions, good balance
- Xenova/bge-base-en-v1.5 - 768 dimensions, higher quality

Performance:

For highest accuracy, use OpenAI or other API providers:

const filter = new MCPToolFilter({
  embedding: {
    provider: 'openai',
    apiKey: process.env.OPENAI_API_KEY,
    model: 'text-embedding-3-small', // Optional
    dimensions: 384, // Optional: match local model for fair comparison
  }
});

Pros:

Cons:

Performance:

| Aspect | Local | API | Winner |

|---|---|---|---|

| Speed | 1-5ms | 400-800ms | 🏆 Local (200x faster) |

| Accuracy | Good (85-90%) | Best (100%) | 🏆 API |

| Cost | Free | ~$0.02/1M tokens | 🏆 Local |

| Privacy | Fully local | Data sent to API | 🏆 Local |

| Offline | ✅ Works offline | ❌ Needs internet | 🏆 Local |

| Setup | Zero config | Needs API key | 🏆 Local |

📊 See TRADEOFFS.md for detailed analysis

The library expects an array of MCP servers with the following structure:

[

{

"id": "gmail",

"name": "Gmail MCP Server",

"description": "Email management tools",

"categories": ["email", "communication"],

"tools": [

{

"name": "search_gmail_messages",

"description": "Search and find email messages in Gmail inbox. Use when user wants to find, search, look up emails...",

"keywords": ["email", "search", "inbox", "messages"],

"category": "email-search",

"inputSchema": {

"type": "object",

"properties": {

"q": { "type": "string" }

}

}

}

]

}

]
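The structure above can be sketched as TypeScript types with a small validator. This is a minimal sketch, not the library's own type definitions: the names `ToolDefinition`, `ServerDefinition`, and `findServerProblems` are hypothetical, and treating tool names as unique across all servers is an assumption.

```typescript
// Hypothetical type names; the field names follow the JSON structure shown above.
interface ToolDefinition {
  name: string;                          // required
  description: string;                   // required
  keywords?: string[];                   // optional
  category?: string;                     // optional
  inputSchema?: Record<string, unknown>; // optional
}

interface ServerDefinition {
  id: string;              // required
  name: string;            // required
  tools: ToolDefinition[]; // required
  description?: string;    // optional
  categories?: string[];   // optional
}

// Collects human-readable problems; an empty array means every required field is present.
function findServerProblems(servers: ServerDefinition[]): string[] {
  const problems: string[] = [];
  const seenServerIds = new Set<string>();
  const seenToolNames = new Set<string>();

  for (const server of servers) {
    if (!server.id) problems.push("server is missing an id");
    else if (seenServerIds.has(server.id)) problems.push(`duplicate server id: ${server.id}`);
    seenServerIds.add(server.id);

    if (!server.name) problems.push(`server "${server.id}" is missing a name`);

    for (const tool of server.tools) {
      if (!tool.name) problems.push(`server "${server.id}" has a tool without a name`);
      else if (seenToolNames.has(tool.name)) problems.push(`duplicate tool name: ${tool.name}`);
      seenToolNames.add(tool.name);
      if (!tool.description) problems.push(`tool "${tool.name}" is missing a description`);
    }
  }
  return problems;
}

const exampleServers: ServerDefinition[] = [
  {
    id: "gmail",
    name: "Gmail MCP Server",
    tools: [
      { name: "search_gmail_messages", description: "Search and find email messages in Gmail inbox." },
    ],
  },
];
console.log(findServerProblems(exampleServers));
```

Running a check like this before initialization can surface missing descriptions early, since descriptions carry most of the matching signal.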

Required Fields:

- id: Unique identifier for the server
- name: Human-readable server name
- tools: Array of tool definitions

- name: Unique tool name
- description: Rich description of what the tool does and when to use it

Optional but Recommended:

- description: Server-level description
- categories: Array of category tags for hierarchical filtering
- keywords: Array of synonym/related terms for better matching
- category: Tool-level category
- inputSchema: JSON schema for parameters (parameter names are used for matching)

Rich Descriptions: Write detailed descriptions with use cases

"description": "Search emails in Gmail. Use when user wants to find, lookup, or retrieve messages, correspondence, or mail."

Add Keywords: Include synonyms and variations

"keywords": ["email", "mail", "inbox", "messages", "correspondence"]

Mention Use Cases: Explicitly state when to use the tool

"description": "... Use when user wants to draft, compose, write, or prepare an email to send later."

MCPToolFilter

Main class for tool filtering.

new MCPToolFilter(config: MCPToolFilterConfig)

Config Options:

{
  embedding: {
    // Local embeddings (recommended)
    provider: 'local',
    model?: string, // Default: 'Xenova/all-MiniLM-L6-v2'
    quantized?: boolean, // Default: true

    // OR API embeddings (use these fields instead of the local ones above)
    provider: 'openai' | 'voyage' | 'cohere',
    apiKey: string,
    model?: string, // Default: 'text-embedding-3-small'
    dimensions?: number, // Default: 1536 (or 384 for local)
    baseURL?: string, // For custom endpoints
  },
  defaultOptions?: {
    topK?: number, // Default: 20
    minScore?: number, // Default: 0.3
    contextMessages?: number, // Default: 3
    alwaysInclude?: string[], // Always include these tools
    exclude?: string[], // Never include these tools
    maxContextTokens?: number, // Default: 500
  },
  includeServerDescription?: boolean, // Default: false (see below)
  debug?: boolean // Enable debug logging
}

About includeServerDescription:

When enabled, this option includes the MCP server description in the tool embeddings, providing additional context about the domain/category of tools.

// Enable server descriptions in embeddings

const filter = new MCPToolFilter({

embedding: { provider: 'local' },

includeServerDescription: true // Default: false

});
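To make the effect of this option concrete, here is a minimal sketch of how the text that gets embedded for a tool might change when the server description is appended. The function `buildEmbeddingText`, the type names, and the exact text layout are all assumptions for illustration; the library's real embedding text format is not documented here.

```typescript
// Hypothetical shapes, reduced to the fields this sketch needs.
interface ToolInfo {
  name: string;
  description: string;
  keywords?: string[];
}

interface ServerInfo {
  name: string;
  description?: string;
}

// Builds the string that would be sent to the embedding model for one tool.
// The layout (parts joined with ". ") is an assumption, not the library's format.
function buildEmbeddingText(
  server: ServerInfo,
  tool: ToolInfo,
  includeServerDescription: boolean
): string {
  const parts: string[] = [tool.name, tool.description];
  if (tool.keywords && tool.keywords.length > 0) {
    parts.push(tool.keywords.join(", "));
  }
  if (includeServerDescription && server.description) {
    parts.push(`Server: ${server.description}`);
  }
  return parts.join(". ");
}

const gmailServer: ServerInfo = { name: "Gmail MCP Server", description: "Email management tools" };
const searchTool: ToolInfo = {
  name: "search_gmail_messages",
  description: "Search and find email messages in Gmail inbox",
  keywords: ["email", "search"],
};

console.log(buildEmbeddingText(gmailServer, searchTool, false));
console.log(buildEmbeddingText(gmailServer, searchTool, true));
```

With the flag on, every tool from the same server shares the same trailing text, which helps broad intent queries but makes tools within one server look more alike.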

Tradeoffs:

Recommendation: Keep this disabled (default: false) unless your use case primarily involves high-level intent queries. See examples/benchmark-server-description.ts for detailed benchmarks.

initialize(servers: MCPServer[]): Promise<void>

Initialize the filter with MCP servers. This precomputes and caches all tool embeddings.

Note: Call this once during startup. It's an async operation that may take a few seconds depending on the number of tools.

await filter.initialize(servers);

filter(input: FilterInput, options?: FilterOptions): Promise<FilterResult>

Filter tools based on the input context.

Input Types:

// String input

await filter.filter("Search my emails about the project");

// Chat messages

await filter.filter([

{ role: 'user', content: 'What meetings do I have today?' },

{ role: 'assistant', content: 'Let me check your calendar.' }

]);
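Both input types end up as a single context string before embedding. The sketch below shows one plausible way to build that string from the most recent messages, using the documented `contextMessages` and `maxContextTokens` defaults. The function `buildContext`, the `role: content` line format, and the 4-characters-per-token estimate are assumptions, not the library's actual behavior.

```typescript
interface ChatMessage {
  role: string;
  content: string;
}

// Builds a context string from a plain string or the last `contextMessages` chat messages.
// Token budget is approximated as 4 characters per token (a rough heuristic).
function buildContext(
  input: string | ChatMessage[],
  contextMessages: number = 3,
  maxContextTokens: number = 500
): string {
  let text: string;
  if (typeof input === "string") {
    text = input;
  } else {
    const recent = contextMessages > 0 ? input.slice(-contextMessages) : [];
    text = recent.map((m) => `${m.role}: ${m.content}`).join("\n");
  }
  const maxChars = maxContextTokens * 4;
  // When over budget, keep the tail so the most recent text survives.
  return text.length > maxChars ? text.slice(text.length - maxChars) : text;
}

const conversation: ChatMessage[] = [
  { role: "user", content: "What meetings do I have today?" },
  { role: "assistant", content: "Let me check your calendar." },
  { role: "user", content: "Also find the invite email from Dana." },
];
console.log(buildContext(conversation, 2));
```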

Options (all optional, override defaults):

{

topK?: number, // Max tools to return

minScore?: number, // Minimum similarity score (0-1)

contextMessages?: number, // How many recent messages to use

alwaysInclude?: string[], // Tool names to always include

exclude?: string[], // Tool names to exclude

maxContextTokens?: number, // Max context size

}
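As an illustration of how these options could interact, the sketch below applies them to a list of already-scored tools. It is not the library's implementation: `applyFilterOptions` and the type names are hypothetical, and two behaviors are assumptions made for the example, namely that `exclude` wins over `alwaysInclude` and that always-included tools count toward `topK`.

```typescript
interface ScoredCandidate {
  toolName: string;
  score: number; // similarity score in the range 0-1
}

interface SelectionOptions {
  topK?: number;
  minScore?: number;
  alwaysInclude?: string[];
  exclude?: string[];
}

function applyFilterOptions(
  candidates: ScoredCandidate[],
  options: SelectionOptions = {}
): ScoredCandidate[] {
  // Defaults match the documented defaults (topK: 20, minScore: 0.3).
  const { topK = 20, minScore = 0.3, alwaysInclude = [], exclude = [] } = options;
  const excluded = new Set(exclude);
  const pinned = new Set(alwaysInclude);

  // Assumption: an excluded tool is dropped even if it is also in alwaysInclude.
  const allowed = candidates.filter((c) => !excluded.has(c.toolName));
  const pinnedTools = allowed.filter((c) => pinned.has(c.toolName));

  // Remaining tools must pass minScore, then compete for the leftover slots.
  const ranked = allowed
    .filter((c) => !pinned.has(c.toolName) && c.score >= minScore)
    .sort((a, b) => b.score - a.score)
    .slice(0, Math.max(0, topK - pinnedTools.length));

  return [...pinnedTools, ...ranked];
}

const scoredCandidates: ScoredCandidate[] = [
  { toolName: "search_gmail_messages", score: 0.9 },
  { toolName: "create_draft", score: 0.5 },
  { toolName: "list_calendars", score: 0.2 },
  { toolName: "web_search", score: 0.1 },
  { toolName: "delete_account", score: 0.8 },
];

console.log(
  applyFilterOptions(scoredCandidates, {
    topK: 3,
    alwaysInclude: ["web_search"],
    exclude: ["delete_account"],
  }).map((c) => c.toolName)
);
```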

Returns:

{

tools: ScoredTool[], // Filtered and ranked tools

metrics: {

totalTime: number, // Total time in ms

embeddingTime: number, // Time to embed context

similarityTime: number, // Time to compute similarities

toolsEvaluated: number, // Total tools evaluated

}

}

getStats()

Get statistics about the filter state.

const stats = filter.getStats();

// {

// initialized: true,

// toolCount: 25,

// cacheSize: 5,

// embeddingDimensions: 1536

// }

clearCache()

Clear the context embedding cache.

filter.clearCache();
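For readers curious how a context embedding cache of this kind can work, here is a minimal LRU cache sketch keyed by the context string. It relies on the insertion-order guarantee of `Map`. The class `LruCache` and its capacity parameter are hypothetical; the library's internal cache may be structured differently.

```typescript
// Minimal LRU cache: the Map keeps keys in insertion order, so the first key is the
// least recently used as long as every read re-inserts the key it touched.
class LruCache<V> {
  private readonly entries = new Map<string, V>();
  private readonly capacity: number;

  constructor(capacity: number) {
    this.capacity = capacity;
  }

  get(key: string): V | undefined {
    const value = this.entries.get(key);
    if (value === undefined) return undefined;
    // Move the key to the most-recently-used position.
    this.entries.delete(key);
    this.entries.set(key, value);
    return value;
  }

  set(key: string, value: V): void {
    if (this.entries.has(key)) {
      this.entries.delete(key);
    } else if (this.entries.size >= this.capacity) {
      // Evict the least recently used entry (the first key in the Map).
      const oldest = this.entries.keys().next().value;
      if (oldest !== undefined) this.entries.delete(oldest);
    }
    this.entries.set(key, value);
  }

  clear(): void {
    this.entries.clear();
  }

  get size(): number {
    return this.entries.size;
  }
}

const embeddingCache = new LruCache<number[]>(2);
embeddingCache.set("search my emails", [0.1, 0.2]);
embeddingCache.set("check my calendar", [0.3, 0.4]);
embeddingCache.get("search my emails");              // marks this entry as recently used
embeddingCache.set("book a flight", [0.5, 0.6]);     // evicts "check my calendar"
```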

The library includes several performance optimizations out of the box:

These optimizations are automatic and transparent - no configuration needed!

Typical performance for 1000 tools:

Building context: <1ms

Embedding API call: 3-5ms (cached: 0ms)

Similarity computation: 1-2ms (6-8x faster with optimizations)

Sorting/filtering: <1ms (hybrid algorithm)

─────────────────────────────

Total: 5-9ms
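The similarity step in the breakdown above can be sketched as follows. When tool vectors are normalized to unit length once at initialization, cosine similarity at query time reduces to a plain dot product. This is an illustrative sketch under that assumption; `normalize`, `dotProduct`, `rankBySimilarity`, and the toy 2-dimensional vectors are made up for the example.

```typescript
// Scales a vector to unit length; a zero vector is returned unchanged.
function normalize(vector: number[]): number[] {
  const norm = Math.sqrt(vector.reduce((sum, x) => sum + x * x, 0));
  return norm === 0 ? vector.slice() : vector.map((x) => x / norm);
}

function dotProduct(a: number[], b: number[]): number {
  let sum = 0;
  for (let i = 0; i < a.length; i++) sum += a[i] * b[i];
  return sum;
}

interface EmbeddedTool {
  toolName: string;
  unitVector: number[]; // normalized once, ahead of time
}

// For unit vectors, the dot product equals the cosine similarity.
function rankBySimilarity(
  query: number[],
  tools: EmbeddedTool[]
): { toolName: string; score: number }[] {
  const unitQuery = normalize(query);
  return tools
    .map((tool) => ({ toolName: tool.toolName, score: dotProduct(unitQuery, tool.unitVector) }))
    .sort((a, b) => b.score - a.score);
}

const embeddedTools: EmbeddedTool[] = [
  { toolName: "list_calendar_events", unitVector: normalize([0, 1]) },
  { toolName: "search_gmail_messages", unitVector: normalize([3, 4]) },
];
console.log(rankBySimilarity([6, 8], embeddedTools));
```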

Use Smaller Embeddings: 512 or 1024 dimensions for faster computation

embedding: {

provider: 'openai',

model: 'text-embedding-3-small',

dimensions: 512 // Faster than 1536

}

Reduce Context Size: Fewer messages = faster embedding

defaultOptions: {

contextMessages: 2, // Instead of 3-5

maxContextTokens: 300

}

Leverage Caching: Identical contexts reuse cached embeddings (0ms)

Tune topK: Request fewer tools if you don't need 20

await filter.filter(input, { topK: 10 });

Micro-benchmarks showing optimization improvements:

Dot Product (1536 dims): 0.001ms vs 0.006ms (6x faster)

Vector Normalization: 0.003ms vs 0.006ms (2x faster)

Top-K Selection (<500 tools): Uses optimized built-in sort

Top-K Selection (500+ tools): O(n log k) heap-based selection

LRU Cache Access: True access-order tracking
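The heap-based selection mentioned above can be sketched like this: a min-heap of size k holds the best candidates seen so far, so each of the n tools costs at most O(log k). This is a generic illustration of the technique, not the library's code; `topKByHeap` and `Scored` are hypothetical names.

```typescript
interface Scored {
  id: string;
  score: number;
}

// Returns the k highest-scoring items in descending order using a size-k min-heap.
function topKByHeap(items: Scored[], k: number): Scored[] {
  if (k <= 0) return [];
  const heap: Scored[] = []; // heap[0] is always the lowest score currently kept

  const siftUp = (start: number): void => {
    let i = start;
    while (i > 0) {
      const parentIdx = (i - 1) >> 1;
      if (heap[parentIdx].score <= heap[i].score) break;
      [heap[parentIdx], heap[i]] = [heap[i], heap[parentIdx]];
      i = parentIdx;
    }
  };

  const siftDown = (start: number): void => {
    let i = start;
    for (;;) {
      const left = 2 * i + 1;
      const right = left + 1;
      let smallest = i;
      if (left < heap.length && heap[left].score < heap[smallest].score) smallest = left;
      if (right < heap.length && heap[right].score < heap[smallest].score) smallest = right;
      if (smallest === i) break;
      [heap[smallest], heap[i]] = [heap[i], heap[smallest]];
      i = smallest;
    }
  };

  for (const item of items) {
    if (heap.length < k) {
      heap.push(item);
      siftUp(heap.length - 1);
    } else if (item.score > heap[0].score) {
      // Replace the current minimum and restore the heap property.
      heap[0] = item;
      siftDown(0);
    }
  }
  // Final ordering of the k survivors costs O(k log k).
  return heap.slice().sort((a, b) => b.score - a.score);
}

const sampleScores: Scored[] = [
  { id: "a", score: 0.1 },
  { id: "b", score: 0.9 },
  { id: "c", score: 0.5 },
  { id: "d", score: 0.7 },
  { id: "e", score: 0.3 },
];
console.log(topKByHeap(sampleScores, 3).map((s) => s.id));
```

For small inputs a full sort is usually just as fast, which is consistent with the note above about using the built-in sort below 500 tools.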

See the existing benchmark examples for end-to-end performance testing:

npx ts-node examples/benchmark.ts

import Portkey from 'portkey-ai';

import { MCPToolFilter } from '@portkey-ai/mcp-tool-filter';

const portkey = new Portkey({ apiKey: '...' });

const filter = new MCPToolFilter({ /* ... */ });

await filter.initialize(mcpServers);

// Filter tools based on conversation

const { tools } = await filter.filter(messages);

// Convert to OpenAI tool format

const openaiTools = tools.map(t => ({

type: 'function',

function: {

name: t.toolName,

description: t.tool.description,

parameters: t.tool.inputSchema,

}

}));

// Make LLM request with filtered tools

const completion = await portkey.chat.completions.create({

model: 'gpt-4',

messages: messages,

tools: openaiTools,

});

import { ChatOpenAI } from 'langchain/chat_models/openai';

import { MCPToolFilter } from '@portkey-ai/mcp-tool-filter';

const filter = new MCPToolFilter({ /* ... */ });

await filter.initialize(mcpServers);

// Create a custom tool selector

async function selectTools(messages) {

const { tools } = await filter.filter(messages);

return tools.map(t => convertToLangChainTool(t));

}

// Use in your agent

const model = new ChatOpenAI();

const tools = await selectTools(messages);

const response = await model.invoke(messages, { tools });

// Recommended: Initialize once at startup

let filterInstance: MCPToolFilter;

async function getFilter() {

if (!filterInstance) {

filterInstance = new MCPToolFilter({ /* ... */ });

await filterInstance.initialize(mcpServers);

}

return filterInstance;

}

// Use in request handlers

app.post('/chat', async (req, res) => {

const filter = await getFilter();

const result = await filter.filter(req.body.messages);

// ... use filtered tools

});

Performance on various tool counts (M1 Max):

Local Embeddings (Xenova/all-MiniLM-L6-v2):

| Tools | Initialization | Filter (Cold) | Filter (Cached) |

|---|---|---|---|

| 10 | ~100ms | 2ms | <1ms |

| 100 | ~500ms | 3ms | <1ms |

| 500 | ~2s | 4ms | 1ms |

| 1000 | ~4s | 5ms | 1ms |

| 5000 | ~20s | 8ms | 2ms |

API Embeddings (OpenAI text-embedding-3-small):

| Tools | Initialization | Filter (Cold) | Filter (Cached) |

|---|---|---|---|

| 10 | ~200ms | 500ms | 1ms |

| 100 | ~1.5s | 550ms | 2ms |

| 500 | ~6s | 600ms | 2ms |

| 1000 | ~12s | 650ms | 3ms |

| 5000 | ~60s | 800ms | 4ms |

Key Takeaways:

Note: Initialization is a one-time cost. Choose local embeddings for low latency, API embeddings for maximum accuracy.

Use Local Embeddings when:

Use API Embeddings when:

Recommendation: Start with local embeddings. Only switch to API if accuracy is insufficient.

Compare performance for your use case:

npx ts-node examples/test-local-embeddings.ts

This will benchmark both providers and show you:

To see detailed timing logs for each request, enable debug mode:

const filter = new MCPToolFilter({

embedding: { /* ... */ },

debug: true // Enable detailed timing logs

});

This will output detailed logs for each filter request:

=== Starting filter request ===

[1/5] Options merged: 0.12ms

[2/5] Context built (156 chars): 0.34ms

[3/5] Cache MISS (lookup: 0.08ms)

→ Embedding generated: 1247.56ms

[4/5] Similarities computed: 1.23ms (25 tools, 0.049ms/tool)

[5/5] Tools selected & ranked: 0.15ms (5 tools returned)

=== Total filter time: 1249.48ms ===

Breakdown: merge=0.12ms, context=0.34ms, cache=0.08ms, embedding=1247.56ms, similarity=1.23ms, selection=0.15ms

Each filter request logs 5 steps:

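1. Options merged (merge): Merge provided options with defaults
2. Context built (context): Build the context string from input messages
3. Cache lookup and embedding (cache + embedding): Look up the context embedding in the cache; on a miss, generate a new embedding
4. Similarities computed (similarity): Compute cosine similarity for all tools
5. Tools selected and ranked (selection): Filter by score and select top-K tools

See examples/test-timings.ts for a complete example: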

export OPENAI_API_KEY=your-key-here

npx ts-node examples/test-timings.ts

This will run multiple filter requests showing:

Every filter request returns detailed metrics:

const result = await filter.filter(input);

console.log(result.metrics);

// {

// totalTime: 1249.48, // Total request time in ms

// embeddingTime: 1247.56, // Time spent on embedding API

// similarityTime: 1.23, // Time computing similarities

// toolsEvaluated: 25 // Number of tools evaluated

// }

const result = await filter.filter(messages);

// Log metrics for monitoring

logger.info('Tool filter performance', {

totalTime: result.metrics.totalTime,

embeddingTime: result.metrics.embeddingTime,

cached: result.metrics.embeddingTime === 0,

toolsReturned: result.tools.length,

});

// Alert if too slow

if (result.metrics.totalTime > 5000) {

logger.warn('Slow filter request', result.metrics);

}

For very large tool sets, use hierarchical filtering:

// Stage 1: Filter by server categories

const relevantServers = mcpServers.filter(server =>

server.categories?.some(cat => userIntent.includes(cat))

);

// Stage 2: Filter tools within relevant servers

const result = await filter.filter(messages);
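Stage 1 can be made concrete with a small standalone sketch. It narrows the server list by category with a case-insensitive substring match and falls back to all servers when nothing matches. `selectServersByIntent`, the `CategorizedServer` type, and the fallback rule are assumptions for illustration; note also that narrowing only affects results if the filter used in stage 2 was initialized with the narrowed list.

```typescript
interface CategorizedServer {
  id: string;
  categories?: string[];
  tools: { name: string }[];
}

// Keeps servers whose categories appear in the user's intent text.
// Falls back to every server when no category matches, so nothing is lost.
function selectServersByIntent(
  servers: CategorizedServer[],
  userIntent: string
): CategorizedServer[] {
  const intent = userIntent.toLowerCase();
  const matches = servers.filter(
    (server) => server.categories?.some((cat) => intent.includes(cat.toLowerCase())) ?? false
  );
  return matches.length > 0 ? matches : servers;
}

const allServers: CategorizedServer[] = [
  { id: "gmail", categories: ["email", "communication"], tools: [{ name: "search_gmail_messages" }] },
  { id: "gcal", categories: ["calendar"], tools: [{ name: "list_calendar_events" }] },
  { id: "misc", tools: [{ name: "web_search" }] },
];

const narrowed = selectServersByIntent(allServers, "Find the email from Dana");
console.log(narrowed.map((s) => s.id));
```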

Combine embedding similarity with keyword matching:

const { tools } = await filter.filter(input);

// Boost tools with exact keyword matches

const boostedTools = tools.map(tool => {

const hasKeywordMatch = tool.tool.keywords?.some(kw =>

input.toLowerCase().includes(kw.toLowerCase())

);

return {

...tool,

score: hasKeywordMatch ? tool.score * 1.2 : tool.score

};

}).sort((a, b) => b.score - a.score);

Always include certain essential tools:

const filter = new MCPToolFilter({

// ...

defaultOptions: {

alwaysInclude: [

'web_search', // Always useful

'conversation_search', // Access to context

],

}

});

Problem: First filter call is slow.

Solution: The embedding call takes 3-5ms. Subsequent calls with an identical context reuse the cached embedding and are much faster.

// Warm up the cache

await filter.filter("hello"); // ~5ms

await filter.filter("hello"); // ~1ms (cached)

Problem: Wrong tools are being selected.

Solutions:

- Adjust the minScore threshold
- Increase topK to include more tools
- Add essential tools to alwaysInclude

Problem: High memory usage with many tools.

Solution: Use smaller embedding dimensions:

embedding: {

dimensions: 512 // Instead of 1536

}

This reduces memory by ~66% with minimal accuracy loss.
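The ~66% figure follows from simple arithmetic, since embedding storage grows linearly with dimensions. The sketch below assumes each value is stored as a 32-bit float (4 bytes) and uses 1000 tools as an example; both are assumptions, and `embeddingMemoryBytes` is a hypothetical helper.

```typescript
// Raw storage for tool embeddings: tools x dimensions x bytes per value.
// bytesPerValue = 4 assumes Float32 storage.
function embeddingMemoryBytes(
  toolCount: number,
  dimensions: number,
  bytesPerValue: number = 4
): number {
  return toolCount * dimensions * bytesPerValue;
}

const fullBytes = embeddingMemoryBytes(1000, 1536);  // 6,144,000 bytes
const smallBytes = embeddingMemoryBytes(1000, 512);  // 2,048,000 bytes
const savedFraction = 1 - smallBytes / fullBytes;    // 2/3, i.e. about 66.7%

console.log(`${(savedFraction * 100).toFixed(1)}% less memory`);
```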

MIT

Contributions welcome! Please open an issue or PR.


We found that @portkey-ai/mcp-tool-filter demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 3 open source maintainers collaborating on the project.
