Product

Introducing Socket Scanning for OpenVSX Extensions

Socket now scans OpenVSX extensions, giving teams early detection of risky behaviors, hidden capabilities, and supply chain threats in developer tools.

Fork from https://github.com/andrewrk/node-s3-client

npm install @auth0/s3 --save

See also the companion CLI tool which is meant to be a drop-in replacement for s3cmd: s3-cli.

var s3 = require('s3');

var client = s3.createClient({

maxAsyncS3: 20, // this is the default

s3RetryCount: 3, // this is the default

s3RetryDelay: 1000, // this is the default

multipartUploadThreshold: 20971520, // this is the default (20 MB)

multipartUploadSize: 15728640, // this is the default (15 MB)

s3Options: {

accessKeyId: "your s3 key",

secretAccessKey: "your s3 secret",

region: "your region",

// endpoint: 's3.yourdomain.com',

// sslEnabled: false

// any other options are passed to new AWS.S3()

// See: http://docs.aws.amazon.com/AWSJavaScriptSDK/latest/AWS/Config.html#constructor-property

},

});

var s3 = require('s3');

var awsS3Client = new AWS.S3(s3Options);

var options = {

s3Client: awsS3Client,

// more options available. See API docs below.

};

var client = s3.createClient(options);

var params = {

localFile: "some/local/file",

s3Params: {

Bucket: "s3 bucket name",

Key: "some/remote/file",

// other options supported by putObject, except Body and ContentLength.

// See: http://docs.aws.amazon.com/AWSJavaScriptSDK/latest/AWS/S3.html#putObject-property

},

};

var uploader = client.uploadFile(params);

uploader.on('error', function(err) {

console.error("unable to upload:", err.stack);

});

uploader.on('progress', function() {

console.log("progress", uploader.progressMd5Amount,

uploader.progressAmount, uploader.progressTotal);

});

uploader.on('end', function() {

console.log("done uploading");

});

var params = {

localFile: "some/local/file",

s3Params: {

Bucket: "s3 bucket name",

Key: "some/remote/file",

// other options supported by getObject

// See: http://docs.aws.amazon.com/AWSJavaScriptSDK/latest/AWS/S3.html#getObject-property

},

};

var downloader = client.downloadFile(params);

downloader.on('error', function(err) {

console.error("unable to download:", err.stack);

});

downloader.on('progress', function() {

console.log("progress", downloader.progressAmount, downloader.progressTotal);

});

downloader.on('end', function() {

console.log("done downloading");

});

var params = {

localDir: "some/local/dir",

deleteRemoved: true, // default false, whether to remove s3 objects

// that have no corresponding local file.

s3Params: {

Bucket: "s3 bucket name",

Prefix: "some/remote/dir/",

// other options supported by putObject, except Body and ContentLength.

// See: http://docs.aws.amazon.com/AWSJavaScriptSDK/latest/AWS/S3.html#putObject-property

},

};

var uploader = client.uploadDir(params);

uploader.on('error', function(err) {

console.error("unable to sync:", err.stack);

});

uploader.on('progress', function() {

console.log("progress", uploader.progressAmount, uploader.progressTotal);

});

uploader.on('end', function() {

console.log("done uploading");

});

Consider increasing the socket pool size in the http and https global

agents. This will improve bandwidth when using uploadDir and downloadDir

functions. For example:

http.globalAgent.maxSockets = https.globalAgent.maxSockets = 20;

This contains a reference to the aws-sdk module. It is a valid use case to use both this module and the lower level aws-sdk module in tandem.

Creates an S3 client.

options:

s3Client - optional, an instance of AWS.S3. Leave blank if you provide s3Options.s3Options - optional. leave blank if you provide s3Client.

new AWS.S3():

http://docs.aws.amazon.com/AWSJavaScriptSDK/latest/AWS/Config.html#constructor-propertymaxAsyncS3 - maximum number of simultaneous requests this client will

ever have open to S3. defaults to 20.s3RetryCount - how many times to try an S3 operation before giving up.

Default 3.s3RetryDelay - how many milliseconds to wait before retrying an S3

operation. Default 1000.multipartUploadThreshold - if a file is this many bytes or greater, it

will be uploaded via a multipart request. Default is 20MB. Minimum is 5MB.

Maximum is 5GB.multipartUploadSize - when uploading via multipart, this is the part size.

The minimum size is 5MB. The maximum size is 5GB. Default is 15MB. Note that

S3 has a maximum of 10000 parts for a multipart upload, so if this value is

too small, it will be ignored in favor of the minimum necessary value

required to upload the file.bucket S3 bucketkey S3 keybucketLocation string, one of these:

You can find out your bucket location programatically by using this API: http://docs.aws.amazon.com/AWSJavaScriptSDK/latest/AWS/S3.html#getBucketLocation-property

returns a string which looks like this:

https://s3.amazonaws.com/bucket/key

or maybe this if you are not in US Standard:

https://s3-eu-west-1.amazonaws.com/bucket/key

bucket S3 Bucketkey S3 KeyWorks for any region, and returns a string which looks like this:

http://bucket.s3.amazonaws.com/key

See http://docs.aws.amazon.com/AWSJavaScriptSDK/latest/AWS/S3.html#putObject-property

params:

s3Params: params to pass to AWS SDK putObject.localFile: path to the file on disk you want to upload to S3.defaultContentType: Unless you explicitly set the ContentType

parameter in s3Params, it will be automatically set for you based on the

file extension of localFile. If the extension is unrecognized,

defaultContentType will be used instead. Defaults to

application/octet-stream.The difference between using AWS SDK putObject and this one:

ContentType based on file extension if you do not provide it.Returns an EventEmitter with these properties:

progressMd5AmountprogressAmountprogressTotalAnd these events:

'error' (err)'end' (data) - emitted when the file is uploaded successfully

data is the same object that you get from putObject in AWS SDK'progress' - emitted when progressMd5Amount, progressAmount, and

progressTotal properties change. Note that it is possible for progress to

go backwards when an upload fails and must be retried.'fileOpened' (fdSlicer) - emitted when localFile has been opened. The file

is opened with the fd-slicer

module because we might need to read from multiple locations in the file at

the same time. fdSlicer is an object for which you can call

createReadStream(options). See the fd-slicer README for more information.'fileClosed' - emitted when localFile has been closed.And these methods:

abort() - call this to stop the find operation.See http://docs.aws.amazon.com/AWSJavaScriptSDK/latest/AWS/S3.html#getObject-property

params:

localFile - the destination path on disk to write the s3 object intos3Params: params to pass to AWS SDK getObject.The difference between using AWS SDK getObject and this one:

Returns an EventEmitter with these properties:

progressAmountprogressTotalAnd these events:

'error' (err)'end' - emitted when the file is downloaded successfully'progress' - emitted when progressAmount and progressTotal

properties change.http://docs.aws.amazon.com/AWSJavaScriptSDK/latest/AWS/S3.html#getObject-property

s3Params: params to pass to AWS SDK getObject.The difference between using AWS SDK getObject and this one:

Returns an EventEmitter with these properties:

progressAmountprogressTotalAnd these events:

'error' (err)'end' (buffer) - emitted when the file is downloaded successfully.

buffer is a Buffer containing the object data.'progress' - emitted when progressAmount and progressTotal

properties change.http://docs.aws.amazon.com/AWSJavaScriptSDK/latest/AWS/S3.html#getObject-property

s3Params: params to pass to AWS SDK getObject.The difference between using AWS SDK getObject and this one:

If you want retries, progress, or MD5 checking, you must code it yourself.

Returns a ReadableStream with these additional events:

'httpHeaders' (statusCode, headers) - contains the HTTP response

headers and status code.See http://docs.aws.amazon.com/AWSJavaScriptSDK/latest/AWS/S3.html#listObjects-property

params:

s3Params - params to pass to AWS SDK listObjects.recursive - true or false whether or not you want to recurse

into directories. Default false.Note that if you set Delimiter in s3Params then you will get a list of

objects and folders in the directory you specify. You probably do not want to

set recursive to true at the same time as specifying a Delimiter because

this will cause a request per directory. If you want all objects that share a

prefix, leave the Delimiter option null or undefined.

Be sure that s3Params.Prefix ends with a trailing slash (/) unless you

are requesting the top-level listing, in which case s3Params.Prefix should

be empty string.

The difference between using AWS SDK listObjects and this one:

Returns an EventEmitter with these properties:

progressAmountobjectsFounddirsFoundAnd these events:

'error' (err)'end' - emitted when done listing and no more 'data' events will be emitted.'data' (data) - emitted when a batch of objects are found. This is

the same as the data object in AWS SDK.'progress' - emitted when progressAmount, objectsFound, and

dirsFound properties change.And these methods:

abort() - call this to stop the find operation.See http://docs.aws.amazon.com/AWSJavaScriptSDK/latest/AWS/S3.html#deleteObjects-property

s3Params are the same.

The difference between using AWS SDK deleteObjects and this one:

Returns an EventEmitter with these properties:

progressAmountprogressTotalAnd these events:

'error' (err)'end' - emitted when all objects are deleted.'progress' - emitted when the progressAmount or progressTotal properties change.'data' (data) - emitted when a request completes. There may be more.Syncs an entire directory to S3.

params:

localDir - source path on local file system to sync to S3s3Params

Prefix (required)Bucket (required)deleteRemoved - delete s3 objects with no corresponding local file.

default falsegetS3Params - function which will be called for every file that

needs to be uploaded. You can use this to skip some files. See below.defaultContentType: Unless you explicitly set the ContentType

parameter in s3Params, it will be automatically set for you based on the

file extension of localFile. If the extension is unrecognized,

defaultContentType will be used instead. Defaults to

application/octet-stream.followSymlinks - Set this to false to ignore symlinks.

Defaults to true.function getS3Params(localFile, stat, callback) {

// call callback like this:

var err = new Error(...); // only if there is an error

var s3Params = { // if there is no error

ContentType: getMimeType(localFile), // just an example

};

// pass `null` for `s3Params` if you want to skip uploading this file.

callback(err, s3Params);

}

Returns an EventEmitter with these properties:

progressAmountprogressTotalprogressMd5AmountprogressMd5TotaldeleteAmountdeleteTotalfilesFoundobjectsFounddoneFindingFilesdoneFindingObjectsdoneMd5And these events:

'error' (err)'end' - emitted when all files are uploaded'progress' - emitted when any of the above progress properties change.'fileUploadStart' (localFilePath, s3Key) - emitted when a file begins

uploading.'fileUploadEnd' (localFilePath, s3Key) - emitted when a file successfully

finishes uploading.uploadDir works like this:

Prefix. S3 guarantees

returned objects to be in sorted order.localDir.deleteRemoved is set, deleting remote objects whose corresponding local

files are missing.Syncs an entire directory from S3.

params:

localDir - destination directory on local file system to sync tos3Params

Prefix (required)Bucket (required)deleteRemoved - delete local files with no corresponding s3 object. default falsegetS3Params - function which will be called for every object that

needs to be downloaded. You can use this to skip downloading some objects.

See below.followSymlinks - Set this to false to ignore symlinks.

Defaults to true.function getS3Params(localFile, s3Object, callback) {

// localFile is the destination path where the object will be written to

// s3Object is same as one element in the `Contents` array from here:

// http://docs.aws.amazon.com/AWSJavaScriptSDK/latest/AWS/S3.html#listObjects-property

// call callback like this:

var err = new Error(...); // only if there is an error

var s3Params = { // if there is no error

VersionId: "abcd", // just an example

};

// pass `null` for `s3Params` if you want to skip downloading this object.

callback(err, s3Params);

}

Returns an EventEmitter with these properties:

progressAmountprogressTotalprogressMd5AmountprogressMd5TotaldeleteAmountdeleteTotalfilesFoundobjectsFounddoneFindingFilesdoneFindingObjectsdoneMd5And these events:

'error' (err)'end' - emitted when all files are downloaded'progress' - emitted when any of the progress properties above change'fileDownloadStart' (localFilePath, s3Key) - emitted when a file begins

downloading.'fileDownloadEnd' (localFilePath, s3Key) - emitted when a file successfully

finishes downloading.downloadDir works like this:

Prefix. S3 guarantees

returned objects to be in sorted order.localDir.deleteRemoved is set, deleting local files whose corresponding objects

are missing.Deletes an entire directory on S3.

s3Params:

BucketPrefixMFAReturns an EventEmitter with these properties:

progressAmountprogressTotalAnd these events:

'error' (err)'end' - emitted when all objects are deleted.'progress' - emitted when the progressAmount or progressTotal properties change.deleteDir works like this:

See http://docs.aws.amazon.com/AWSJavaScriptSDK/latest/AWS/S3.html#copyObject-property

s3Params are the same. Don't forget that CopySource must contain the

source bucket name as well as the source key name.

The difference between using AWS SDK copyObject and this one:

Returns an EventEmitter with these events:

'error' (err)'end' (data)See http://docs.aws.amazon.com/AWSJavaScriptSDK/latest/AWS/S3.html#copyObject-property

s3Params are the same. Don't forget that CopySource must contain the

source bucket name as well as the source key name.

Under the hood, this uses copyObject and then deleteObjects only if the

copy succeeded.

Returns an EventEmitter with these events:

'error' (err)'copySuccess' (data)'end' (data)Using the AWS SDK, you can send a HEAD request, which will tell you if a file exists at Key.

See http://docs.aws.amazon.com/AWSJavaScriptSDK/latest/AWS/S3.html#headObject-property

var client = require('s3').createClient({ /* options */ });

client.s3.headObject({

Bucket: 's3 bucket name',

Key: 'some/remote/file'

}, function(err, data) {

if (err) {

// file does not exist (err.statusCode == 404)

return;

}

// file exists

});

aws-vault exec <profile> -- S3_KEY=<valid_s3_key> npm test

Tests upload and download large amounts of data to and from S3. The test timeout is set to 40 seconds because Internet connectivity waries wildly.

1.0.0

Forked from https://github.com/andrewrk/node-s3-client

FAQs

Amazon S3 client for upload/download files

We found that @auth0/s3 demonstrated a not healthy version release cadence and project activity because the last version was released a year ago. It has 31 open source maintainers collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

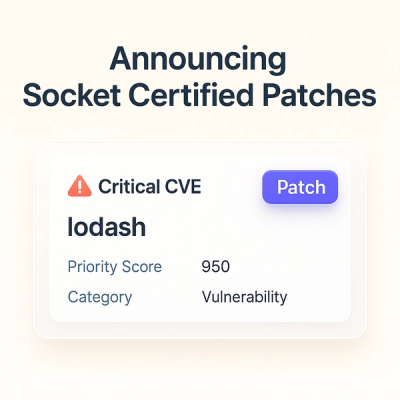

Product

Socket now scans OpenVSX extensions, giving teams early detection of risky behaviors, hidden capabilities, and supply chain threats in developer tools.

Product

Bringing supply chain security to the next generation of JavaScript package managers

Product

A safer, faster way to eliminate vulnerabilities without updating dependencies