Security News

/Research

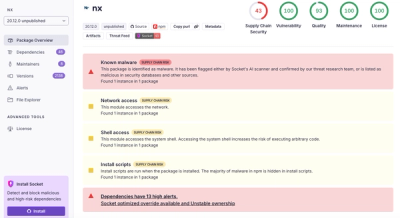

Wallet-Draining npm Package Impersonates Nodemailer to Hijack Crypto Transactions

Malicious npm package impersonates Nodemailer and drains wallets by hijacking crypto transactions across multiple blockchains.

@cjpais/inference


Trying to wrap a bunch of different inference providers' models and rate limit them, as well as getting them to support TypeScript more natively.


My specific application may send many parallel requests to inference models, and I need to rate limit these requests across the application, per provider. This package effectively solves that problem.
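To make the idea concrete, here is a minimal sketch of one way a limiter shared across an application could cap parallel requests to a single provider. It is an illustration only, not this package's implementation: `createConcurrencyLimiter` and `maxInFlight` are hypothetical names, and the sketch assumes the limit is a cap on concurrent requests, which the package does not state.

```typescript
// Hypothetical sketch; NOT the implementation used by @cjpais/inference.
// Assumption: "rate limiting" here means capping the number of requests in flight.
type Task<T> = () => Promise<T>;

function createConcurrencyLimiter(maxInFlight: number) {
  let active = 0; // number of slots currently held
  const waiting: Array<() => void> = []; // callers waiting for a free slot

  const release = (): void => {
    const next = waiting.shift();
    if (next) {
      next(); // hand the slot straight to the next waiter
    } else {
      active--; // nobody is waiting, so free the slot
    }
  };

  return async function limit<T>(task: Task<T>): Promise<T> {
    if (active >= maxInFlight) {
      // Wait until a finishing task hands its slot over.
      await new Promise<void>((resolve) => waiting.push(resolve));
    } else {
      active++;
    }
    try {
      return await task();
    } finally {
      release();
    }
  };
}
```

Creating one such limiter per provider and routing every call for that provider through it keeps the cap global to the application, no matter how many call sites fire requests in parallel.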

This is a major WIP, so a bunch of things are left unimplemented for the time being. However, the basic functionality should be there.

Supported providers:

WIP Stuff:

Check out test/index.test.ts for usage examples.

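Generally speaking, usage looks like this:

    // Import path is an assumption; test/index.test.ts shows the actual imports.
    import { Inference, OpenAIProvider, createRateLimiter, type ChatModel } from "@cjpais/inference";

    const oai = new OpenAIProvider({
      apiKey: process.env.OPENAI_API_KEY!,
    });

    // One limiter, shared by every model served by this provider.
    const oaiLimiter = createRateLimiter(2);

    // Map application-level model names to concrete provider models.
    const CHAT_MODELS: Record<string, ChatModel> = {
      "gpt-3.5": {
        provider: oai,
        name: "gpt-3.5",
        providerModel: "gpt-3.5-turbo-0125",
        rateLimiter: oaiLimiter,
      },
      "gpt-4": {
        provider: oai,
        name: "gpt-4",
        providerModel: "gpt-4-0125-preview",
        rateLimiter: oaiLimiter,
      },
    };

    const inference = new Inference({ chatModels: CHAT_MODELS });

    const result = await inference.chat({ model: "gpt-3.5", prompt: "Hello, world!" });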

To install dependencies:

    bun install

To run:

    bun run index.ts

FAQs


The npm package @cjpais/inference receives a total of 0 weekly downloads. As such, @cjpais/inference popularity was classified as not popular.

We found that @cjpais/inference demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 0 open source maintainers collaborating on the project.
