@llamaindex/autotool

Automatically transpile your JS functions into LLM Agent compatible tools.

First, install the package with npm, pnpm, or yarn:

    npm install @llamaindex/autotool
    pnpm add @llamaindex/autotool
    yarn add @llamaindex/autotool

Second, add the plugin or loader to your configuration.

Next.js:

    import { withNext } from "@llamaindex/autotool/next";

    /** @type {import('next').NextConfig} */
    const nextConfig = {};

    export default withNext(nextConfig);

Node.js:

    node --import @llamaindex/autotool/node ./path/to/your/script.js

Third, add the "use tool" directive at the top of your tool file, or rename the file so that it ends in .tool.ts.

    "use tool";

    export function getWeather(city: string) {
      // ...
    }

    // ...
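To make concrete what "LLM Agent compatible" means for a function such as getWeather, here is a hand-written sketch of the kind of description an agent typically needs alongside a function: a name, a description, and a JSON-schema view of the parameters. The `ToolSpec` type, the `getWeatherSpec` object, the `callTool` helper, and the stub return value are all hypothetical and are not the package's actual output or API; the point of autotool is to spare you from writing this by hand.

```typescript
// Hypothetical shape of a tool description; NOT taken from @llamaindex/autotool.
type ToolSpec = {
  name: string;
  description: string;
  parameters: {
    type: "object";
    properties: Record<string, { type: string; description?: string }>;
    required: string[];
  };
};

// Stub implementation, used only for this illustration.
function getWeather(city: string): string {
  return `The weather in ${city} is sunny.`;
}

// Hand-written metadata describing getWeather to an agent (assumed format).
const getWeatherSpec: ToolSpec = {
  name: "getWeather",
  description: "Get the current weather for a city.",
  parameters: {
    type: "object",
    properties: {
      city: { type: "string", description: "Name of the city" },
    },
    required: ["city"],
  },
};

// Minimal dispatcher: checks required arguments, then calls the function
// with arguments in the order the properties are declared.
function callTool(
  spec: ToolSpec,
  fn: (...args: any[]) => unknown,
  args: Record<string, unknown>,
): unknown {
  for (const key of spec.parameters.required) {
    if (!(key in args)) {
      throw new Error(`Missing argument: ${key}`);
    }
  }
  const ordered = Object.keys(spec.parameters.properties).map((k) => args[k]);
  return fn(...ordered);
}
```

An agent that decides to call the tool would supply the arguments as an object, for example `callTool(getWeatherSpec, getWeather, { city: "Paris" })`.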

Finally, export a chat handler function to the frontend using a llamaindex agent:

    "use server";

    // imports ...

    export async function chatWithAI(message: string): Promise<JSX.Element> {
      const agent = new OpenAIAgent({
        tools: convertTools("llamaindex"),
      });
      const uiStream = createStreamableUI();
      agent
        .chat({
          stream: true,
          message,
        })
        .then(async (responseStream) => {
          return responseStream.pipeTo(
            new WritableStream({
              start: () => {
                uiStream.append("\n");
              },
              write: async (message) => {
                uiStream.append(message.response.delta);
              },
              close: () => {
                uiStream.done();
              },
            }),
          );
        });
      return uiStream.value;
    }
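The handler above relies on the standard Web Streams pattern of piping a readable stream into a `WritableStream` sink. The following is a minimal, self-contained sketch of that pattern only, assuming a runtime with global `ReadableStream` and `WritableStream` (for example, Node.js 18 or later). The `Chunk` type, `fakeResponseStream`, and `collectDeltas` are hypothetical stand-ins; no llamaindex or UI streaming API is used here.

```typescript
// Hypothetical chunk shape, loosely mirroring `message.response.delta` above.
type Chunk = { response: { delta: string } };

// Builds a stream from fixed strings; a stand-in for an agent response stream.
function fakeResponseStream(deltas: string[]): ReadableStream<Chunk> {
  return new ReadableStream<Chunk>({
    start(controller) {
      for (const delta of deltas) {
        controller.enqueue({ response: { delta } });
      }
      controller.close();
    },
  });
}

// Pipes the stream into a sink and resolves with the concatenated text.
// `pipeTo` resolves once the source is exhausted and the sink has closed.
async function collectDeltas(stream: ReadableStream<Chunk>): Promise<string> {
  let text = "";
  await stream.pipeTo(
    new WritableStream<Chunk>({
      write(chunk) {
        text += chunk.response.delta;
      },
    }),
  );
  return text;
}
```

In the chat handler, the `write` callback appends each delta to the UI stream instead of a string, and the `close` callback marks the UI stream as done.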

License: MIT

FAQs

auto transpile your JS function to LLM Agent compatible

We found that @llamaindex/autotool demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 0 open source maintainers collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Security News

This episode explores the hard problem of reachability analysis, from static analysis limits to handling dynamic languages and massive dependency trees.

Security News

/Research

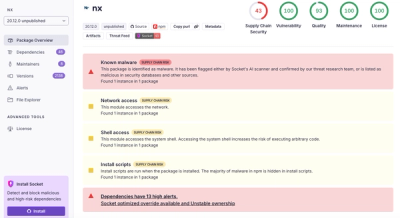

Malicious Nx npm versions stole secrets and wallet info using AI CLI tools; Socket’s AI scanner detected the supply chain attack and flagged the malware.

Security News

CISA’s 2025 draft SBOM guidance adds new fields like hashes, licenses, and tool metadata to make software inventories more actionable.