@ng-web-apis/audio

This is a library for declarative use of the Web Audio API with Angular 7+. It is a complete conversion to declarative Angular directives; if you find any inconsistencies or errors, please file an issue. Watch out for the 💡 emoji in this README for additional features and special use cases.

After you install the package, you must add @ng-web-apis/audio/polyfill to your polyfills.ts. It is required to normalize things like webkitAudioContext; otherwise your code will fail.
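As a rough illustration of what normalizing webkitAudioContext means, the sketch below picks whichever constructor a given global scope exposes. It is not the package's actual polyfill; the resolveAudioContext function and the AudioScope type are hypothetical names used only for this example.

```typescript
// Hypothetical shape of a global scope that may expose either constructor.
type AudioScope = {
    AudioContext?: unknown;
    webkitAudioContext?: unknown;
};

// Returns the standard constructor when present, otherwise the prefixed one
// (older WebKit browsers), otherwise undefined.
function resolveAudioContext(scope: AudioScope): unknown {
    return scope.AudioContext ?? scope.webkitAudioContext;
}

// In a browser this would typically be called with globalThis.
// Plain strings stand in for the constructors here.
const standard = resolveAudioContext({AudioContext: 'standard'});
const prefixed = resolveAudioContext({webkitAudioContext: 'prefixed'});
const missing = resolveAudioContext({});
```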

You can build an audio graph with directives. For example, here's a typical echo feedback loop:

    <audio
        src="/demo.wav"
        waMediaElementAudioSourceNode
    >
        <ng-container
            #node="AudioNode"
            waDelayNode
            [delayTime]="delayTime"
        >
            <ng-container
                waGainNode
                [gain]="gain"
            >
                <ng-container [waOutput]="node"></ng-container>
                <ng-container waAudioDestinationNode></ng-container>
            </ng-container>
        </ng-container>
        <ng-container waAudioDestinationNode></ng-container>
    </audio>
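For comparison, the connections this template describes could be written imperatively roughly as follows. This is only a sketch of the graph topology, not code taken from the library; Connectable and wireEcho are hypothetical names, and Connectable is a minimal stand-in for the native AudioNode.

```typescript
// Minimal structural stand-in for AudioNode: anything that can connect to a target.
interface Connectable {
    connect(target: Connectable): unknown;
}

// Wires the same graph as the template:
// source -> delay -> gain -> destination, plus gain -> delay (feedback)
// and source -> destination (dry signal).
function wireEcho(
    source: Connectable,
    delay: Connectable,
    gain: Connectable,
    destination: Connectable,
): void {
    source.connect(delay);
    delay.connect(gain);
    gain.connect(delay); // feedback loop, [waOutput]="node" in the template
    gain.connect(destination);
    source.connect(destination);
}
```

In a browser, the four arguments would typically come from AudioContext.createMediaElementSource, createDelay, createGain and AudioContext.destination.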

This library has AudioBufferService with a fetch method that returns a Promise, which lets you easily turn your hosted audio file into an AudioBuffer through GET requests. The result is stored in the service's cache, so the same file is not requested again while the application is running.
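The caching behaviour described above can be pictured with a small sketch that stores one Promise per URL. It is an illustration under stated assumptions, not the service's real implementation: CachedLoader and the injected load function are hypothetical, and a string stands in for the decoded AudioBuffer.

```typescript
// Caches one Promise per URL so repeated requests reuse the first result.
class CachedLoader<T> {
    private readonly cache = new Map<string, Promise<T>>();
    private readonly load: (url: string) => Promise<T>;

    constructor(load: (url: string) => Promise<T>) {
        this.load = load;
    }

    fetch(url: string): Promise<T> {
        let pending = this.cache.get(url);
        if (!pending) {
            pending = this.load(url);
            this.cache.set(url, pending);
        }
        return pending;
    }
}

// Stand-in loader that counts calls; a real one would issue a GET request
// and decode the response with BaseAudioContext.decodeAudioData.
let loadCalls = 0;
const loader = new CachedLoader<string>(url => {
    loadCalls++;
    return Promise.resolve('decoded:' + url);
});

const firstRequest = loader.fetch('/demo.wav');
const secondRequest = loader.fetch('/demo.wav'); // served from the cache
```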

This service is also used within directives that have AudioBuffer inputs (such as AudioBufferSourceNode or ConvolverNode), so you can pass either a string URL or an actual AudioBuffer. For example:

    <button
        #source="AudioNode"
        buffer="/demo.wav"
        waAudioBufferSourceNode
        (click)="source.start()"
    >
        Play
        <ng-container waAudioDestinationNode></ng-container>
    </button>

You can use the following audio nodes through directives of the same name (prefixed with wa, standing for Web API):

💡 Not required if you only need one; a global context will be created when needed

💡 Also gives you access to AudioListener parameters such as positionX

💡 Additionally supports empty autoplay attribute similar to audio tag so it would start rendering immediately

💡 Also gives you access to AudioListener parameters such as positionX

💡 Use it to terminate branch of your graph

💡 Can be used multiple times inside a single BaseAudioContext, referencing the same BaseAudioContext.destination

💡 Has a (quiet) output to watch for a particular graph branch going almost silent for 5 seconds straight, so you can remove the branch after all effects have played out to silence and free up resources

💡 Additionally supports setting URL to media file as buffer so it will be fetched and turned into AudioBuffer

💡 Additionally supports empty autoplay attribute similar to audio tag so it would start playing immediately

💡 Additionally supports empty autoplay attribute similar to audio tag so it would start playing immediately

💡 Additionally supports empty autoplay attribute similar to audio tag so it would start playing immediately

💡 Use Channel directive to merge channels, see example in Special cases section

💡 Additionally supports setting URL to media file as buffer so it will be fetched and turned into AudioBuffer

You can use AudioWorkletNode in supporting browsers. To register your AudioWorkletProcessors in a global default AudioContext you can use tokens:

    // Registers the processor script in the global default AudioContext
    @NgModule({
        bootstrap: [AppComponent],
        declarations: [AppComponent],
        providers: [
            {
                provide: AUDIO_WORKLET_PROCESSORS,
                useValue: 'assets/my-processor.js',
                multi: true,
            },
        ],
    })
    export class AppModule {}

    @Component({
        selector: 'app',
        templateUrl: './app.component.html',
    })
    export class AppComponent {
        // Resolves once the provided processors have been loaded
        constructor(@Inject(AUDIO_WORKLET_PROCESSORS_READY) readonly processorsReady: Promise<boolean>) {}

        // ...
    }

You can then instantiate your AudioWorkletNode:

    <ng-container
        *ngIf="processorsReady | async"
        waAudioWorkletNode
        name="my-processor"
    >
        <ng-container waAudioDestinationNode></ng-container>
    </ng-container>

If you need to create your own node with a custom AudioParam and control it declaratively, you can extend the WebAudioWorklet class and add the audioParam decorator to the new directive's inputs:

    @Directive({
        selector: '[my-worklet-node]',
        exportAs: 'AudioNode',
        providers: [asAudioNode(MyWorklet)],
    })
    export class MyWorklet extends WebAudioWorklet {
        @Input()
        @audioParam()
        customParam?: AudioParamInput;

        constructor(
            @Inject(AUDIO_CONTEXT) context: BaseAudioContext,
            @SkipSelf() @Inject(AUDIO_NODE) node: AudioNode | null,
        ) {
            super(context, node, 'my-processor');
        }
    }

Since work with AudioParam is imperative by nature, there are differences from the native API when working with declarative inputs and directives.

NOTE: You can always access directives through template reference variables / @ViewChild and, since they extend native nodes, work with AudioParam in the traditional Web Audio fashion.

AudioParam inputs for directives accept the following arguments:

- number to set it instantly, equivalent to setting AudioParam.value

- AudioParamCurve to set an array of values over a given duration, equivalent to AudioParam.setValueCurveAtTime called with AudioContext.currentTime

      export type AudioParamCurve = {
          readonly value: number[];
          readonly duration: number;
      };

- AudioParamAutomation to linearly or exponentially ramp to a given value starting from AudioContext.currentTime

      export type AudioParamAutomation = {
          readonly value: number;
          readonly duration: number;
          readonly mode: 'instant' | 'linear' | 'exponential';
      };

- AudioParamAutomation[] to schedule multiple changes in value, stacking one after another
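To make the mapping onto native calls more concrete, here is a minimal sketch of how such inputs might be translated into AudioParam scheduling. It is an assumption-laden illustration rather than the library's own code: schedule, ParamLike and the local type names are hypothetical, the way an instant step is anchored at both ends of its duration is a choice made for this sketch, and so is the clamping of exponential targets (the native exponentialRampToValueAtTime rejects a target of 0).

```typescript
type Curve = {readonly value: number[]; readonly duration: number};
type Automation = {
    readonly value: number;
    readonly duration: number;
    readonly mode: 'instant' | 'linear' | 'exponential';
};
type ParamInput = number | Curve | Automation | Automation[];

// Subset of the native AudioParam members used below.
interface ParamLike {
    value: number;
    setValueAtTime(value: number, time: number): unknown;
    linearRampToValueAtTime(value: number, time: number): unknown;
    exponentialRampToValueAtTime(value: number, time: number): unknown;
    setValueCurveAtTime(values: number[], time: number, duration: number): unknown;
}

// Schedules one input on a param, starting at `now` (AudioContext.currentTime).
function schedule(param: ParamLike, input: ParamInput, now: number): void {
    if (typeof input === 'number') {
        param.value = input;
        return;
    }
    if (!Array.isArray(input) && !('mode' in input)) {
        param.setValueCurveAtTime(input.value, now, input.duration);
        return;
    }
    let time = now;
    for (const step of Array.isArray(input) ? input : [input]) {
        const end = time + step.duration;
        if (step.mode === 'instant') {
            param.setValueAtTime(step.value, time);
            // Native ramps start at the previous event, so anchor the end of a hold too.
            if (step.duration > 0) param.setValueAtTime(step.value, end);
        } else if (step.mode === 'linear') {
            param.linearRampToValueAtTime(step.value, end);
        } else {
            // Assumption: clamp to a small positive value instead of ramping to 0.
            param.exponentialRampToValueAtTime(Math.max(step.value, 0.0001), end);
        }
        time = end; // the next step starts where this one ends
    }
}
```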

You can use the waAudioParam pipe to turn your number values into AudioParamAutomation (the default mode is exponential, so the last argument can be omitted) or number arrays into AudioParamCurve (the second argument, duration, is in seconds):

    <ng-container
        waGainNode
        gain="0"
        [gain]="gain | waAudioParam : 0.1 : 'linear'"
    ></ng-container>

This way values change smoothly rather than abruptly, which avoids audio artifacts.

NOTE: You can set an initial value for AudioParam through a static attribute combined with dynamic property binding, as seen above.
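The transformation the pipe performs can be approximated by a plain function. The sketch below is a hypothetical stand-in (toAudioParam and the local types are not the library's exports); it only mirrors the behaviour described above: numbers become an automation with exponential as the default mode, and number arrays become a curve.

```typescript
type CurveInput = {readonly value: number[]; readonly duration: number};
type AutomationMode = 'instant' | 'linear' | 'exponential';
type AutomationInput = {
    readonly value: number;
    readonly duration: number;
    readonly mode: AutomationMode;
};

// Numbers become an automation, number arrays become a curve; duration is in seconds.
function toAudioParam(value: number[], duration: number): CurveInput;
function toAudioParam(value: number, duration: number, mode?: AutomationMode): AutomationInput;
function toAudioParam(
    value: number | number[],
    duration: number,
    mode: AutomationMode = 'exponential',
): CurveInput | AutomationInput {
    return Array.isArray(value) ? {value, duration} : {value, duration, mode};
}

// Same arguments as in the template: gain | waAudioParam : 0.1 : 'linear'
const ramp = toAudioParam(0.8, 0.1, 'linear');
// Mode omitted, so it falls back to exponential.
const defaultRamp = toAudioParam(0.8, 0.1);
// A number array becomes a curve played over 2 seconds.
const curve = toAudioParam([0, 0.5, 1], 2);
```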

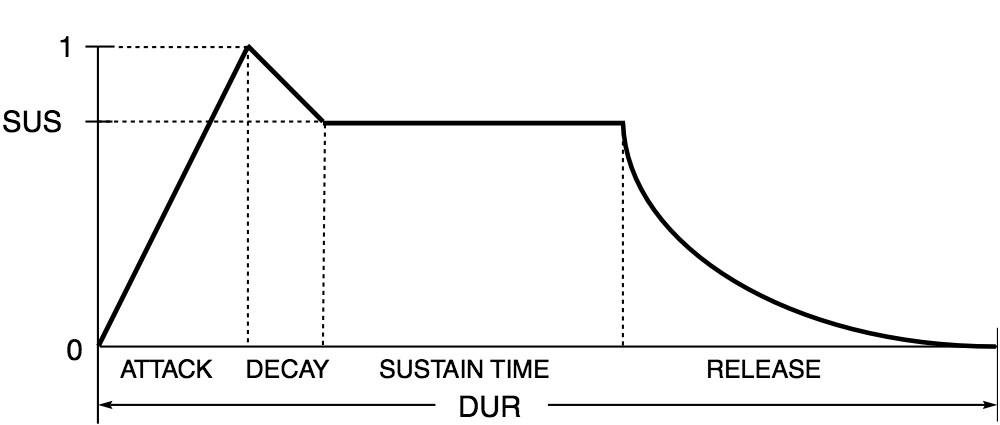

To schedule an audio envelope looking something like this:

You would need to pass the following array of AudioParamAutomation items:

    const envelope = [
        {
            value: 0,
            duration: 0,
            mode: 'instant',
        },
        {
            value: 1,
            duration: ATTACK_TIME,
            mode: 'linear',
        },
        {
            value: SUS,
            duration: DECAY_TIME,
            mode: 'linear',
        },
        {
            value: SUS,
            duration: SUSTAIN_TIME,
            mode: 'instant',
        },
        {
            value: 0,
            duration: RELEASE_TIME,
            mode: 'exponential',
        },
    ];
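Because the items stack one after another, each segment ends at the running sum of the durations before it. The sketch below works this out for made-up constants; the README does not define ATTACK_TIME, DECAY_TIME, SUSTAIN_TIME, RELEASE_TIME or SUS, so the values here are assumptions chosen only for illustration, and endTimes is a hypothetical helper.

```typescript
type EnvelopeStep = {
    readonly value: number;
    readonly duration: number;
    readonly mode: 'instant' | 'linear' | 'exponential';
};

// Hypothetical timings in seconds and a hypothetical sustain level.
const ATTACK_TIME = 0.25;
const DECAY_TIME = 0.25;
const SUSTAIN_TIME = 1;
const RELEASE_TIME = 0.5;
const SUS = 0.5;

const steps: EnvelopeStep[] = [
    {value: 0, duration: 0, mode: 'instant'},
    {value: 1, duration: ATTACK_TIME, mode: 'linear'},
    {value: SUS, duration: DECAY_TIME, mode: 'linear'},
    {value: SUS, duration: SUSTAIN_TIME, mode: 'instant'},
    {value: 0, duration: RELEASE_TIME, mode: 'exponential'},
];

// Returns the time at which each step finishes, relative to `start`.
function endTimes(items: readonly EnvelopeStep[], start: number): number[] {
    let time = start;
    return items.map(item => (time += item.duration));
}

// With the values above the segments end at 0, 0.25, 0.5, 1.5 and 2 seconds.
const ends = endTimes(steps, 0);
```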

Special cases

- Use the waOutput directive when you need a non-linear graph (see the feedback loop example above) or to manually connect an AudioNode to an AudioNode or AudioParam

- Use the waPeriodicWave pipe to create a PeriodicWave for OscillatorNode

- Node directives are exported as AudioNode so you can use them with template reference variables (see the feedback loop example above)

- Use the waChannel directive within ChannelMergerNode and direct the waOutput directive to it in order to perform channel merging:

      <!-- Inverting left and right channel -->
      <audio
          src="/demo.wav"
          waMediaElementAudioSourceNode
      >
          <ng-container waChannelSplitterNode>
              <ng-container [waOutput]="right"></ng-container>
              <ng-container [waOutput]="left"></ng-container>
          </ng-container>
          <ng-container waChannelMergerNode>
              <ng-container
                  #left="AudioNode"
                  waChannel
              ></ng-container>
              <ng-container
                  #right="AudioNode"
                  waChannel
              ></ng-container>
              <ng-container waAudioDestinationNode></ng-container>
          </ng-container>
      </audio>

- WEB_AUDIO_SUPPORT token

- AUDIO_WORKLET_SUPPORT token

- AUDIO_CONTEXT token

- FEEDBACK_COEFFICIENTS and FEEDFORWARD_COEFFICIENTS tokens to be able to create IIRFilterNode

- MEDIA_STREAM token to be able to create MediaStreamAudioSourceNode

- AUDIO_NODE token

- AUDIO_WORKLET_PROCESSORS token to declare an array of AudioWorkletProcessors to be added to the default AudioContext

- AUDIO_WORKLET_PROCESSORS_READY token to initialize loading of the provided AudioWorkletProcessors and watch for Promise resolution before instantiating dependent AudioWorkletNodes

Browser support

|  |  |  |  |
|---|---|---|---|
| 12+ | 31+ | 34+ | 9+ |

Note that some features (AudioWorklet etc.) were added later and are supported only by more recent versions.

IMPORTANT: You must add @ng-web-apis/audio/polyfill to your polyfills.ts, otherwise you will get ReferenceError: X is not defined in browsers for entities they do not support.

💡 StereoPannerNode is emulated with PannerNode in browsers that do not support it yet

💡 positionX (orientationX) and other similar properties of AudioListener and PannerNode fall back to the setPosition (setOrientation) method if the browser does not support them
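The fallback for position properties can be pictured roughly like this. The sketch is not the library's code: ListenerLike and moveListener are hypothetical, and the interface is a minimal stand-in for the native AudioListener, which exposes AudioParam-style positionX/Y/Z in newer browsers and only the older setPosition method in others.

```typescript
// Minimal stand-in for AudioListener in newer and older browsers.
interface ListenerLike {
    positionX?: {value: number};
    positionY?: {value: number};
    positionZ?: {value: number};
    setPosition?(x: number, y: number, z: number): void;
}

// Prefers the AudioParam-style properties and falls back to setPosition.
function moveListener(listener: ListenerLike, x: number, y: number, z: number): void {
    if (listener.positionX && listener.positionY && listener.positionZ) {
        listener.positionX.value = x;
        listener.positionY.value = y;
        listener.positionZ.value = z;
    } else if (listener.setPosition) {
        listener.setPosition(x, y, z);
    }
}
```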

If you want to use this package with SSR, you need to mock native Web Audio API classes on the server:

    import '@ng-web-apis/audio/mocks';

It is recommended to keep the import statement at the top of your server.ts or main.server.ts file.

You can try online demo here

Other Web APIs for Angular by @ng-web-apis

FAQs

The npm package @ng-web-apis/audio receives a total of 41 weekly downloads. As such, @ng-web-apis/audio popularity was classified as not popular.

We found that @ng-web-apis/audio demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 4 open source maintainers collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Security News

Socket CEO Feross Aboukhadijeh discusses the recent npm supply chain attacks on PodRocket, covering novel attack vectors and how developers can protect themselves.

Security News

Maintainers back GitHub’s npm security overhaul but raise concerns about CI/CD workflows, enterprise support, and token management.

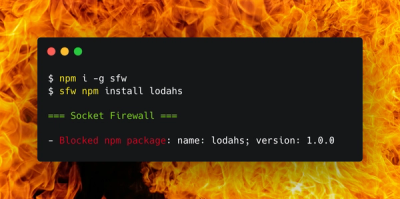

Product

Socket Firewall is a free tool that blocks malicious packages at install time, giving developers proactive protection against rising supply chain attacks.