Security News

/Research

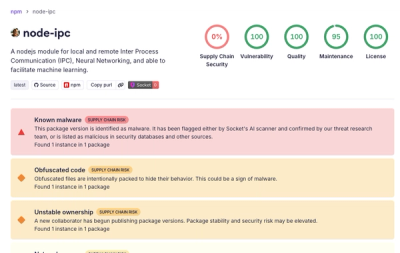

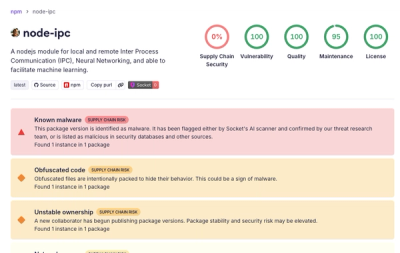

Popular node-ipc npm Package Infected with Credential Stealer

Socket detected malicious node-ipc versions with obfuscated stealer/backdoor behavior in a developing npm supply chain attack.

@prisma/studio-core

@prisma/studio-core is the embeddable Prisma Studio package.

It provides the same core experience as Prisma Studio: a visual way to explore schema, browse table data, edit rows, filter/sort/paginate records, inspect relation data, and run SQL queries with an operation log.

This package is published to npm and consumed by Prisma surfaces such as Console and CLI integrations.

Import the UI entrypoint, include the packaged CSS once, and pass a configured adapter into Studio:

    import {
      Studio,
      type StudioLlmRequest,
      type StudioLlmResponse,
    } from "@prisma/studio-core/ui";
    import "@prisma/studio-core/ui/index.css";
    import { createStudioBFFClient } from "@prisma/studio-core/data/bff";
    import { createPostgresAdapter } from "@prisma/studio-core/data/postgres-core";
    import { isStudioLlmResponse } from "@prisma/studio-core/data";

    const adapter = createPostgresAdapter({
      executor: createStudioBFFClient({
        url: "/api/query",
      }),
    });

    export function EmbeddedStudio() {
      return (
        <Studio
          adapter={adapter}
          llm={async (
            request: StudioLlmRequest,
          ): Promise<StudioLlmResponse> => {
            const response = await fetch("/api/ai", {
              body: JSON.stringify(request),
              headers: { "content-type": "application/json" },
              method: "POST",
            });
            const payload = (await response.json()) as unknown;

            if (isStudioLlmResponse(payload)) {
              return payload;
            }

            return {
              code: "request-failed",
              message: response.ok
                ? "AI response did not match the Studio LLM contract."
                : `AI request failed (${response.status} ${response.statusText})`,
              ok: false,
            };
          }}
        />
      );
    }

adapter is required. llm is optional and is the single supported AI transport hook for all Studio AI features. Studio sends the fully constructed prompt plus a task label, and the host returns either { ok: true, text } or { ok: false, code, message }. When llm is omitted, Studio hides the AI filter, AI SQL generation, and AI visualization affordances entirely.

There are no per-feature AI integration props to wire separately.

Studio no longer renders a built-in fullscreen header button. If your host needs fullscreen behavior, render that control at the host container level, as the local demo does.

Studio handles prompt construction, type-aware validation, correction retries, SQL execution retries, and conversion into the normal filter, SQL, and visualization surfaces. The host transport only needs to forward the prepared request to an LLM provider and return the typed result.

    type StudioLlmRequest = {
      task: "table-filter" | "sql-generation" | "sql-visualization";
      prompt: string;
    };

    type StudioLlmResponse =
      | { ok: true; text: string }
      | {
          ok: false;
          code:
            | "cancelled"
            | "not-configured"
            | "output-limit-exceeded"
            | "request-failed";
          message: string;
        };

Studio treats output-limit-exceeded as a first-class retry signal for SQL generation and visualization correction loops. All prompting and retry behavior live in Studio itself, so host implementations should stay transport-only.
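A transport-only host hook could look roughly like the sketch below. It is not the demo's implementation. `forwardLlmRequest`, `CallProvider`, and the `hitOutputLimit` flag are hypothetical names introduced here for illustration, and the provider call is injected so the sketch stays independent of any particular LLM SDK.

```typescript
type StudioLlmRequest = {
  task: "table-filter" | "sql-generation" | "sql-visualization";
  prompt: string;
};

type StudioLlmResponse =
  | { ok: true; text: string }
  | {
      ok: false;
      code: "cancelled" | "not-configured" | "output-limit-exceeded" | "request-failed";
      message: string;
    };

// Hypothetical provider result: the completion text plus a flag saying whether
// the provider stopped because it ran out of output budget.
type ProviderResult = { text: string; hitOutputLimit: boolean };
type CallProvider = (prompt: string) => Promise<ProviderResult>;

async function forwardLlmRequest(
  request: StudioLlmRequest,
  callProvider: CallProvider | undefined,
): Promise<StudioLlmResponse> {
  if (callProvider === undefined) {
    return { ok: false, code: "not-configured", message: "No LLM provider is configured." };
  }

  try {
    // Forward the prompt unchanged; Studio already built it for request.task.
    const result = await callProvider(request.prompt);

    if (result.hitOutputLimit) {
      // Report truncation with its own code so Studio can decide whether to retry.
      return {
        ok: false,
        code: "output-limit-exceeded",
        message: `Provider output was truncated for task "${request.task}".`,
      };
    }

    return { ok: true, text: result.text };
  } catch (error) {
    const message = error instanceof Error ? error.message : String(error);
    return { ok: false, code: "request-failed", message };
  }
}
```

The function never edits the prompt and never retries on its own, which keeps all prompting and retry decisions inside Studio.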

Studio is an embeddable React surface, not a standalone app shell. A production integration should:

- Render <Studio /> inside the host product's route, panel, or page
- Include @prisma/studio-core/ui/index.css exactly once
- Create an adapter with createPostgresAdapter, createMySQLAdapter, or createSQLiteAdapter
- Give the adapter an executor from createStudioBFFClient({ url: "/api/query" })
- Use customHeaders and/or customPayload to send extra request context to the BFF
- Optionally provide llm for AI-assisted filtering, SQL generation, and SQL result visualization

The simplest supported shape is: host React app -> <Studio /> -> adapter -> createStudioBFFClient(...) -> host BFF route -> database executor.
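To make the client-to-BFF hop in that chain concrete, the sketch below builds the HTTP request a BFF client might send for a single query. `buildQueryRequest` and its option type are hypothetical and are not part of @prisma/studio-core. The sketch only mirrors the behavior this README describes: a JSON POST in which customHeaders travel as HTTP headers and customPayload travels in the body.

```typescript
type Query = { sql: string; parameters: readonly unknown[] };

// Hypothetical option and request shapes, introduced only for this sketch.
type BffClientOptions = {
  url: string;
  customHeaders?: Record<string, string>;
  customPayload?: Record<string, unknown>;
};

type BffHttpRequest = {
  url: string;
  method: "POST";
  headers: Record<string, string>;
  body: string;
};

function buildQueryRequest(options: BffClientOptions, query: Query): BffHttpRequest {
  return {
    url: options.url,
    method: "POST",
    // customHeaders are sent as HTTP headers next to the JSON content type.
    headers: { ...options.customHeaders, "content-type": "application/json" },
    // customPayload is placed in the JSON body unchanged, and only when provided.
    body: JSON.stringify({
      procedure: "query",
      query,
      ...(options.customPayload ? { customPayload: options.customPayload } : {}),
    }),
  };
}
```

On the server, the host route can then read the headers and customPayload to decide which database and which permissions apply before running the query.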

Studio's packaged adapters speak one JSON-over-HTTP contract. The host application is expected to implement a POST endpoint, usually /api/query, that accepts StudioBFFRequest payloads and returns JSON results with serialized errors.

This contract was changed to support staged multi-cell saves. It now includes procedure: "transaction", which commits multiple staged row updates in one database transaction when the backend supports it.

- Method: POST
- Content type: application/json
- createStudioBFFClient forwards customHeaders as HTTP headers
- customPayload is forwarded in the JSON body unchanged

    type Query = {
      sql: string;
      parameters: readonly unknown[];
      transformations?: Partial<Record<string, "json-parse">>;
    };

    type SerializedError = {
      name: string;
      message: string;
      errors?: SerializedError[];
    };

    type SqlLintDiagnostic = {
      code?: string;
      from: number;
      message: string;
      severity: "error" | "warning" | "info" | "hint";
      source?: string;
      to: number;
    };

    type StudioBFFRequest =
      | {
          procedure: "query";
          query: Query;
          customPayload?: Record<string, unknown>;
        }
      | {
          procedure: "sequence";
          sequence: readonly [Query, Query];
          customPayload?: Record<string, unknown>;
        }
      | {
          procedure: "transaction";
          queries: readonly Query[];
          customPayload?: Record<string, unknown>;
        }
      | {
          procedure: "sql-lint";
          sql: string;
          schemaVersion?: string;
          customPayload?: Record<string, unknown>;
        };

    type QueryResponse = [SerializedError, undefined?] | [null, unknown[]];

    type SequenceStepResponse = [SerializedError] | [null, unknown[]];

    type SequenceResponse =
      | [SequenceStepResponse]
      | [[null, unknown[]], SequenceStepResponse];

    type TransactionResponse = [SerializedError, undefined?] | [null, unknown[][]];

    type SqlLintResponse =
      | [SerializedError, undefined?]
      | [
          null,
          {
            diagnostics: SqlLintDiagnostic[];
            schemaVersion?: string;
          },
        ];
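The response types above are error-first tuples. As an illustration of how a caller might consume one, the sketch below unwraps a QueryResponse into rows or a thrown Error. `unwrapQueryResponse` and `toError` are hypothetical helpers written for this example, not exports of the package.

```typescript
type SerializedError = {
  name: string;
  message: string;
  errors?: SerializedError[];
};

type QueryResponse = [SerializedError, undefined?] | [null, unknown[]];

// Rebuild an Error from its serialized form, folding nested errors into the message.
function toError(serialized: SerializedError): Error {
  const nested = (serialized.errors ?? []).map((inner) => `${inner.name}: ${inner.message}`);
  const suffix = nested.length > 0 ? ` (${nested.join("; ")})` : "";
  const error = new Error(serialized.message + suffix);
  error.name = serialized.name;
  return error;
}

// Return the rows on success, or throw the reconstructed error on failure.
function unwrapQueryResponse(response: QueryResponse): unknown[] {
  const [error, rows] = response;
  if (error !== null) {
    throw toError(error);
  }
  return rows ?? [];
}
```

A caller that prefers not to throw can branch on the first tuple element directly; the tuple shape makes both styles straightforward.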

- query: execute one SQL statement. This is required for every Studio adapter.
- sequence: execute exactly two queries in order. This is used by MySQL write flows that update first and refetch second.
- transaction: execute an ordered list of queries inside one database transaction. This is the contract addition that enables atomic staged multi-row saves from the table editor.
- sql-lint: return parse/plan diagnostics for the SQL editor and SQL-backed filter pills.

For sequence, the second query should only run if the first one succeeds. For transaction, the response result array must stay in the same order as body.queries.

sql-lint is optional because adapters can fall back to adapter-local EXPLAIN strategies. transaction is strongly recommended because it gives staged multi-row saves atomic behavior; without it, adapters may fall back to sequential writes.
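One way a server-side executor might honor the transaction rules above is sketched here. The `Connection` interface is a hypothetical stand-in for a real database driver, and the plain BEGIN / COMMIT / ROLLBACK statements are an assumption about how the host opens a transaction; a real driver's transaction API may be a better fit.

```typescript
type Query = { sql: string; parameters: readonly unknown[] };

// Hypothetical minimal driver surface: run one statement and resolve to its rows.
interface Connection {
  run(sql: string, parameters: readonly unknown[]): Promise<unknown[]>;
}

type TransactionResult = [Error, undefined?] | [null, unknown[][]];

async function executeTransaction(
  connection: Connection,
  queries: readonly Query[],
): Promise<TransactionResult> {
  try {
    await connection.run("BEGIN", []);

    const results: unknown[][] = [];
    for (const query of queries) {
      // Awaiting one query at a time keeps results in the same order as the input.
      results.push(await connection.run(query.sql, query.parameters));
    }

    await connection.run("COMMIT", []);
    return [null, results];
  } catch (error) {
    // Best-effort rollback; a failing ROLLBACK must not hide the original error.
    await connection.run("ROLLBACK", []).catch(() => undefined);
    return [error instanceof Error ? error : new Error(String(error))];
  }
}
```

Because the loop stops at the first failing statement and rolls back, either every staged update is committed or none is.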

The demo server in this repo is the reference implementation. A host route can mirror it closely:

    import { serializeError, type StudioBFFRequest } from "@prisma/studio-core/data/bff";

    // `executor` is the host's database executor; its construction is not shown here.
    export async function handleStudioBff(request: Request): Promise<Response> {
      if (request.method !== "POST") {
        return new Response("Method Not Allowed", {
          headers: { Allow: "POST,OPTIONS" },
          status: 405,
        });
      }

      const payload = (await request.json()) as StudioBFFRequest;

      if (payload.procedure === "query") {
        const [error, result] = await executor.execute(payload.query);

        return Response.json([error ? serializeError(error) : null, result]);
      }

      if (payload.procedure === "sequence") {
        const [firstQuery, secondQuery] = payload.sequence;
        const [firstError, firstResult] = await executor.execute(firstQuery);

        // Stop after the first step if it fails; the second query must not run.
        if (firstError) {
          return Response.json([[serializeError(firstError)]]);
        }

        const [secondError, secondResult] = await executor.execute(secondQuery);

        if (secondError) {
          return Response.json([
            [null, firstResult],
            [serializeError(secondError)],
          ]);
        }

        return Response.json([
          [null, firstResult],
          [null, secondResult],
        ]);
      }

      if (payload.procedure === "transaction") {
        // Transaction support is optional on the executor.
        if (typeof executor.executeTransaction !== "function") {
          return new Response("Transaction execution is not supported", {
            status: 501,
          });
        }

        const [error, result] = await executor.executeTransaction(payload.queries);

        return Response.json([error ? serializeError(error) : null, result]);
      }

      if (payload.procedure === "sql-lint") {
        // SQL lint support is optional on the executor.
        if (typeof executor.lintSql !== "function") {
          return new Response("SQL lint is not supported", { status: 501 });
        }

        const [error, result] = await executor.lintSql({
          schemaVersion: payload.schemaVersion,
          sql: payload.sql,
        });

        return Response.json([error ? serializeError(error) : null, result]);
      }

      return new Response("Invalid procedure", { status: 400 });
    }
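The handler above destructures `[error, result]` from every executor call. A minimal sketch of an executor that follows this error-first convention is shown below. `createExecutor` and `RunSql` are hypothetical names for this example, and the real executor shipped with the package may differ.

```typescript
type Query = { sql: string; parameters: readonly unknown[] };

// Error-first tuple: either an error alone, or null followed by the rows.
type ExecuteResult = [Error, undefined?] | [null, unknown[]];

// Hypothetical driver function that throws (rejects) on failure.
type RunSql = (sql: string, parameters: readonly unknown[]) => Promise<unknown[]>;

function createExecutor(runSql: RunSql) {
  return {
    // Convert a throwing driver call into a tuple so callers never need try/catch.
    async execute(query: Query): Promise<ExecuteResult> {
      try {
        return [null, await runSql(query.sql, query.parameters)];
      } catch (error) {
        return [error instanceof Error ? error : new Error(String(error))];
      }
    },
  };
}
```

With this shape, a route handler can pass the error to serializeError and return the tuple as JSON without any additional error handling.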

- customHeaders and customPayload are used for later requests.
- For MySQL, keep sequence support enabled because the adapter depends on ordered write-plus-refetch flows.
- Implement transaction on the BFF and forward it to a real database transaction on the server.
- Without llm, Studio still supports the full manual filtering UI and the standard SQL editor, just without any AI affordances.

This package includes anonymized telemetry to help us improve Prisma Studio.

Set CHECKPOINT_DISABLE=1 to opt out of usage-data collection, following Prisma's documented CLI telemetry opt-out contract. Learn more in our Privacy Policy and the Prisma CLI telemetry docs.

Requirements:

- Node.js ^20.19 || ^22.12 || >=24.0
- pnpm 8
- bun

Install dependencies and start the demo:

    pnpm install
    pnpm demo:ppg

Then open http://localhost:4310.

To enable the demo's AI flows, copy .env.example to .env and set ANTHROPIC_API_KEY.

The demo reads that key server-side and calls Anthropic Haiku 4.5 directly over HTTP through one shared llm hook used by table filtering, SQL generation, and SQL result visualization. Set STUDIO_DEMO_AI_ENABLED=false to hide all AI affordances without removing the key. .env and .env.local are gitignored.

The demo:

- starts Prisma Postgres dev (ppg-dev) programmatically via @prisma/dev

The demo database is intentionally ephemeral: it is pre-seeded when the demo starts and reset when the demo process stops.

- pnpm demo:ppg - run local Studio demo with seeded Prisma Postgres dev
- pnpm typecheck - run TypeScript checks
- pnpm lint - run ESLint (--fix)
- pnpm test - run default vitest suite
- pnpm test:checkpoint - run checkpoint tests
- pnpm test:data - run data-layer tests
- pnpm test:demo - run demo/server tests
- pnpm test:ui - run UI tests
- pnpm test:e2e - run e2e tests
- pnpm demo:ppg:build - bundle the demo server with bun build
- pnpm demo:ppg:bundle - build and run the bundled demo server
- pnpm build - build distributable package with tsup
- pnpm check:exports - validate package export map/types

When bundling the demo with bun build, we use --packages external so @prisma/dev can resolve its PGlite runtime assets (WASM/data/extensions) directly from node_modules at runtime.

For day-to-day development, run the demo locally and verify both terminal logs and browser behavior as you make changes.

Recommended flow:

- Start the demo with pnpm demo:ppg.
- Check behavior in the browser at http://localhost:4310, using Playwright when you want an automated browser check.

Because the demo is pre-seeded and resets between runs, update seed data whenever needed to reproduce richer scenarios.

Seed data lives in demo/ppg-dev/seed-database.ts (seedDatabase).
