@robertsvendsen/node-crawler

Crawls web urls from a list

A very simple wrapper for Puppeteer with the most basic crawler requirements included.

npm install @robertsvendsen/node-crawler

If the browser fails to launch with this error:

Error: Failed to launch the browser process! undefined Fontconfig error: No writable cache directories

see the Puppeteer troubleshooting guide: https://pptr.dev/troubleshooting#could-not-find-expected-browser-locally

If you fixed the problem by setting an environment variable during install, you must keep that variable set every time the crawler runs: PUPPETEER_CACHE_DIR=$(pwd)
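One way to catch a missing variable early is a small preflight check before the crawler starts. The sketch below is only an illustration: `requireCacheDir` is a hypothetical helper, not part of this package, and it only verifies that `PUPPETEER_CACHE_DIR` has a non-empty value in the environment object it is given.

```typescript
// Hypothetical helper (not part of @robertsvendsen/node-crawler).
// Returns the configured Puppeteer cache directory or throws a readable error.
type EnvLike = Record<string, string | undefined>;

function requireCacheDir(env: EnvLike): string {
  const dir = env["PUPPETEER_CACHE_DIR"];
  if (dir === undefined || dir.trim() === "") {
    throw new Error(
      "PUPPETEER_CACHE_DIR is not set; use the same value that was set during npm install"
    );
  }
  return dir;
}
```

Calling `requireCacheDir(process.env)` at the top of the script would fail fast with this message instead of the browser launch error.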

// Note: CrawlerOptions is assumed to be exported alongside CrawlerPageOptions.
import Crawler, { CrawlerOptions, CrawlerPageOptions } from '@robertsvendsen/node-crawler/src/crawler';

const options = new CrawlerOptions({
  name: 'node-crawler-agent',
  concurrency: 1,
  readRobotsTxt: true,
  dataPath: 'data/crawler',
});

const crawler = new Crawler(options);
const links = [{ url: 'https://www.google.com' }];

init().then(async () => {
  console.info('Crawling complete');
  // await delay(10000); // If the script exits before crawling has completed, add a delay here. The queue can be empty while pages are still being crawled.
  await crawler.close();
  process.exit();
});

async function init() {
  const pageOptions = new CrawlerPageOptions({ downloadImages: true });

  for (const link of links) {
    // Queue the URL and log it once the crawl reports a result.
    crawler.add(link.url, pageOptions).then((result) => {
      if (result) {
        console.info('Crawled', link.url);
      }
    });

    // To avoid saturating the CPU immediately on startup, don't fill the queue all the way.
    await crawler.queue.onSizeLessThan(options.concurrency * 2);
  }

  // Resolves once no queued task is waiting to start.
  await crawler.queue.onEmpty();
}
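The commented-out delay in the example exists because an empty queue only means that no task is waiting to start, while pages that have already started may still be loading. One possible alternative to a fixed delay, sketched below, is to keep the promise returned by each `add` call and await them all before closing. This assumes `crawler.add` returns a promise that settles once the page has been crawled, which the `.then` call in the example suggests but the README does not state outright. `fakeAdd` is a hypothetical stand-in so the sketch runs without the package.

```typescript
// Hypothetical stand-in for crawler.add(url, pageOptions): resolves after a short delay.
// The real method opens a browser page; this one only simulates the wait.
function fakeAdd(url: string, ms: number): Promise<string> {
  return new Promise((resolve) => setTimeout(() => resolve(url), ms));
}

// Start every crawl, remember its promise, and wait until all of them have settled.
async function crawlAll(urls: string[]): Promise<string[]> {
  const pending = urls.map((url) =>
    fakeAdd(url, 10).then(
      (crawled): string | null => crawled,
      (): string | null => null // a failed crawl is recorded as null instead of rejecting
    )
  );
  const results = await Promise.all(pending);
  // Keep only the URLs whose crawl succeeded.
  return results.filter((r): r is string => r !== null);
}
```

In the README example, this would mean pushing the return value of `crawler.add(...)` into an array inside the loop and awaiting that array after `crawler.queue.onEmpty()`, before calling `crawler.close()`.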

width = 1920; // 3840
height = 1080; // 2160
isLandscape = false;
isMobile = false;
hasTouch = false;
downloadImages = false;
returnPageInstance = false; // If true, you must close it yourself.
timeout = 10000; // Page load timeout in ms.
waitUntil = 'networkidle2';

concurrency = 1;
readRobotsTxt = true;
name = 'node-crawler'; // This should just be the name, no version or anything.
version = '0.1';
email = ''; // contact email for this crawler.
dataPath = 'data';
saveAsPDF = false; // Enable PDF file generation /printing of the site.
saveFiles = true; // Handle this yourself? set to true.
headless = true;
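The usage example passes only a few fields to `CrawlerOptions` and `CrawlerPageOptions`, so the remaining fields presumably fall back to the defaults listed here. A common way to get that behavior is to merge a partial object over default field values. The sketch below illustrates the pattern with a hypothetical `PageOptionsSketch` class and a subset of the fields; it is not the package's actual implementation.

```typescript
// Hypothetical class mirroring a few of the defaults listed above.
class PageOptionsSketch {
  width = 1920;
  height = 1080;
  downloadImages = false;
  timeout = 10000; // page load timeout in ms

  constructor(overrides: Partial<PageOptionsSketch> = {}) {
    // Copy only the fields the caller supplied; the rest keep their defaults.
    Object.assign(this, overrides);
  }
}

// Override the viewport for a 4K capture and leave everything else untouched.
const uhd = new PageOptionsSketch({ width: 3840, height: 2160 });
console.info(uhd.width, uhd.height, uhd.timeout);
```

With this pattern a caller names only the settings that differ from the defaults, which matches how the usage example sets `downloadImages` alone.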

FAQs

Crawls web urls from a list

We found that @robertsvendsen/node-crawler demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 1 open source maintainer collaborating on the project.

Did you know?

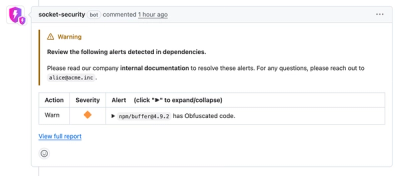

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Security News

The Rust Security Response WG is warning of phishing emails from rustfoundation.dev targeting crates.io users.

Product

Socket now lets you customize pull request alert headers, helping security teams share clear guidance right in PRs to speed reviews and reduce back-and-forth.

Product

Socket's Rust support is moving to Beta: all users can scan Cargo projects and generate SBOMs, including Cargo.toml-only crates, with Rust-aware supply chain checks.