Security News

/Research

Wallet-Draining npm Package Impersonates Nodemailer to Hijack Crypto Transactions

Malicious npm package impersonates Nodemailer and drains wallets by hijacking crypto transactions across multiple blockchains.

@upstash/c7score

context-trace

The context-trace package is used to evaluate the quality of Upstash's Context7 MCP snippets.

context-trace grades quality with five metrics, which correspond to the keys of the weights option: question, llm, formatting, metadata, and initialization. The metrics fall into two groups: LLM analysis and rule-based text analysis.

Requirements:

Create a .env file containing:

    GEMINI_API_TOKEN=...
    GITHUB_API_TOKEN=...
    CONTEXT7_API_TOKEN=...
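A missing token usually only shows up as a failed API call, so it can help to check the three variables up front. The following is a minimal sketch, assuming the .env values have already been loaded into an environment object (for example `process.env`). `missingTokens` is a hypothetical helper for illustration and is not part of context-trace.

```typescript
// Variable names taken from the Requirements list above.
const REQUIRED_TOKENS = [
  "GEMINI_API_TOKEN",
  "GITHUB_API_TOKEN",
  "CONTEXT7_API_TOKEN",
] as const;

// Hypothetical helper (not part of context-trace): returns the names of
// required variables that are absent or blank in the given environment.
function missingTokens(env: Record<string, string | undefined>): string[] {
  return REQUIRED_TOKENS.filter((name) => (env[name] ?? "").trim() === "");
}

// Example with an in-memory environment; a real script would pass process.env.
const missing = missingTokens({ GEMINI_API_TOKEN: "abc", GITHUB_API_TOKEN: "" });
console.log(missing); // [ 'GITHUB_API_TOKEN', 'CONTEXT7_API_TOKEN' ]
```

Failing early with the list of missing names is generally easier to debug than an authentication error from one of the upstream services.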

    import { getScore, compareLibraries } from "@shannonrumsey/context-trace";

    await getScore("/facebook/react", {
      report: {
        console: true,
        folderPath: `${__dirname}/../results`
      },
      weights: {
        question: 0.8,
        llm: 0.05,
        formatting: 0.05,
        metadata: 0.05,
        initialization: 0.05
      },
      prompts: {
        questionEvaluation: `Evaluate ...`
      }
    });

    await compareLibraries(
      "/tailwindlabs/tailwindcss.com",
      "/websites/tailwindcss",
      {
        report: {
          console: true
        },
        llm: {
          temperature: 0.95,
          topP: 0.8,
          topK: 45
        },
        prompts: {
          questionEvaluation: `Evaluate ...`
        }
      }
    );

    {
      report: {
        console: boolean;
        folderPath: string;
        humanReadable: boolean;
        returnScore: boolean;
      };
      weights: {
        question: number;
        llm: number;
        formatting: number;
        metadata: number;
        initialization: number;
      };
      llm: {
        temperature: number;
        topP: number;
        topK: number;
      };
      prompts: {
        searchTopics: string;
        questionEvaluation: string;
        llmEvaluation: string;
      };
    }

Configuration Details

- compareLibraries requires two libraries that cover the same product.
- getScore writes machine-readable results to result.json and human-readable results to result-LIBRARY_NAME.txt in the specified directory.
- compareLibraries writes results to result-compare.json and result-compare-LIBRARY_NAME.txt.

report

- console: true prints results to the console.
- folderPath specifies the folder for human-readable and machine-readable results (the folder must already exist).
- humanReadable writes the results to a txt file.
- returnScore returns the average score as a number for getScore and as an object for compareLibraries.

weights

llm

prompts

| Prompt | For getScore | For compareLibraries |
|---|---|---|
| searchTopics | {{product}}, {{questions}} | – |
| context | {{contexts}}, {{questions}} | {{contexts[0]}}, {{contexts[1]}}, {{questions}} |
| llmEvaluation | {{snippets}}, {{snippetDelimiter}} | {{snippets[0]}}, {{snippets[1]}}, {{snippetDelimiter}} |
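The table lists the placeholders available to each custom prompt. How the library fills them is not documented here, so the following is only a sketch of one way `{{name}}` and `{{name[i]}}` placeholders could be substituted. `fillTemplate` and `TemplateVars` are hypothetical names, not part of the package.

```typescript
// Hypothetical types and helper for illustration; not the library's implementation.
type TemplateVars = Record<string, string | string[]>;

// Replaces {{name}} and {{name[i]}} with values from `vars`.
// Placeholders with no matching value are left untouched.
function fillTemplate(template: string, vars: TemplateVars): string {
  return template.replace(
    /\{\{(\w+)(?:\[(\d+)\])?\}\}/g,
    (match: string, name: string, index?: string) => {
      const value = vars[name];
      if (value === undefined) return match;
      if (index !== undefined) {
        // Indexed form only makes sense for array values.
        return Array.isArray(value) ? value[Number(index)] ?? match : match;
      }
      // Unindexed array values are joined with newlines (an arbitrary choice).
      return Array.isArray(value) ? value.join("\n") : value;
    }
  );
}

const prompt = "Compare {{contexts[0]}} with {{contexts[1]}} for: {{questions}}";
const filled = fillTemplate(prompt, {
  contexts: ["A", "B"],
  questions: "How do I start?",
});
console.log(filled); // Compare A with B for: How do I start?
```

Previewing a prompt this way makes it easy to spot a misspelled placeholder, since it survives in the output unchanged.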

Defaults

    {
      report: {
        console: true,
        humanReadable: false,
        returnScore: false
      },
      weights: {
        question: 0.8,
        llm: 0.05,
        formatting: 0.05,
        metadata: 0.05,
        initialization: 0.05
      },
      llm: {
        temperature: 1.0,
        topP: 0.95,
        topK: 64
      }
    }
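To make the role of the weights concrete, here is a sketch of how per-metric scores could be combined into one number. It assumes that overrides are overlaid on the defaults key by key and that the final score is a weighted average. Neither assumption is confirmed by the documentation above, and `resolveWeights` and `weightedScore` are hypothetical names.

```typescript
interface Weights {
  question: number;
  llm: number;
  formatting: number;
  metadata: number;
  initialization: number;
}

// Default weights copied from the Defaults section above.
const DEFAULT_WEIGHTS: Weights = {
  question: 0.8,
  llm: 0.05,
  formatting: 0.05,
  metadata: 0.05,
  initialization: 0.05,
};

// Assumed behaviour: user-supplied keys replace the matching defaults.
function resolveWeights(overrides: Partial<Weights> = {}): Weights {
  return { ...DEFAULT_WEIGHTS, ...overrides };
}

// Weighted average of per-metric scores. Dividing by the total weight keeps
// the result on the same scale even if the weights do not sum to exactly 1.
function weightedScore(scores: Weights, weights: Weights): number {
  const keys = Object.keys(weights) as (keyof Weights)[];
  const total = keys.reduce((sum, k) => sum + weights[k], 0);
  if (total <= 0) throw new Error("weights must sum to a positive number");
  return keys.reduce((sum, k) => sum + scores[k] * weights[k], 0) / total;
}

// Illustrative scores on an arbitrary 0-100 scale.
const exampleScores: Weights = {
  question: 90,
  llm: 50,
  formatting: 50,
  metadata: 50,
  initialization: 50,
};
console.log(weightedScore(exampleScores, resolveWeights())); // approximately 82
```

With the defaults, the question metric accounts for 80% of the result, so the other four metrics together can shift the score by at most a fifth of the scale.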

context-trace getscore "/facebook/react" -c '{"report": {"console": true}}'

context-trace comparelibraries "/tailwindlabs/tailwindcss.com" "/websites/tailwindcss-com_vercel_app" -c '{"report": {"console": true}}'
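In both commands the `-c` value is a JSON string, so keys and string values need double quotes and the whole argument is wrapped in single quotes for the shell. The sketch below shows one way to validate such a string before passing it to the CLI. `parseConfigArg` is a hypothetical helper and does not reflect how the CLI itself parses the flag.

```typescript
// Hypothetical helper: parses a -c style argument and checks that it is a JSON object.
function parseConfigArg(raw: string): Record<string, unknown> {
  let parsed: unknown;
  try {
    parsed = JSON.parse(raw);
  } catch (err) {
    throw new Error(`-c expects valid JSON: ${(err as Error).message}`);
  }
  if (typeof parsed !== "object" || parsed === null || Array.isArray(parsed)) {
    throw new Error("-c expects a JSON object");
  }
  return parsed as Record<string, unknown>;
}

// The same string that appears after -c in the commands above.
const cliConfig = parseConfigArg('{"report": {"console": true}}');
console.log(cliConfig); // { report: { console: true } }
```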

FAQs

Evaluates the quality of code snippets.

We found that @upstash/c7score demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 5 open source maintainers collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Security News

/Research

Malicious npm package impersonates Nodemailer and drains wallets by hijacking crypto transactions across multiple blockchains.

Security News

This episode explores the hard problem of reachability analysis, from static analysis limits to handling dynamic languages and massive dependency trees.

Security News

/Research

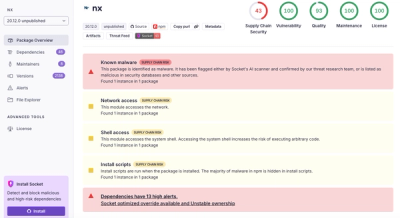

Malicious Nx npm versions stole secrets and wallet info using AI CLI tools; Socket’s AI scanner detected the supply chain attack and flagged the malware.