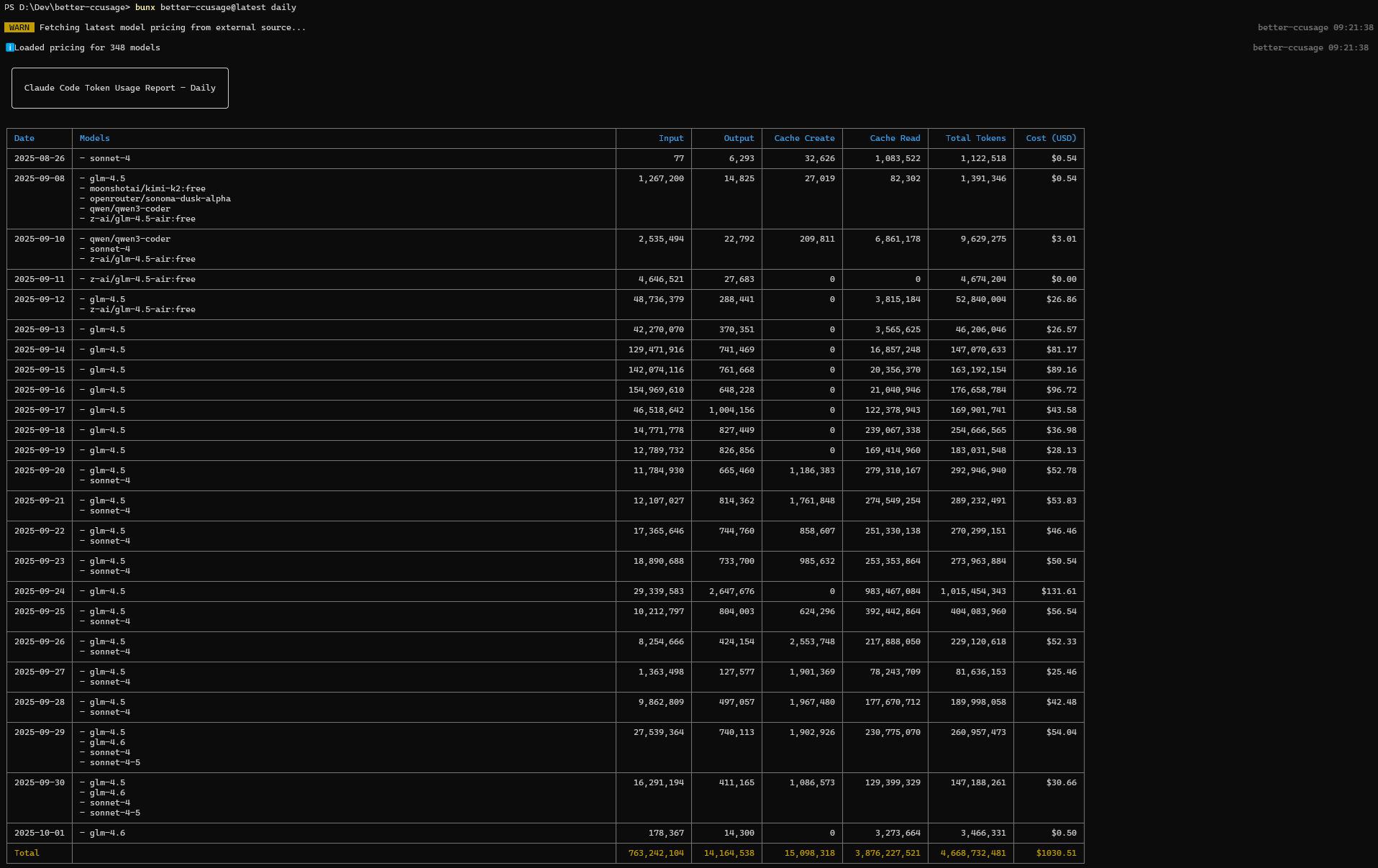

better-ccusage

Advanced tools

Enhanced usage analysis tool for Claude Code with multi-provider support

Analyze your Claude Code or Droid token usage and costs from local JSONL files with multi-provider support — incredibly fast and informative!

better-ccusage is a fork of the original ccusage project that addresses a critical limitation: ccusage focuses exclusively on Claude Code usage with Anthropic models, whereas better-ccusage also supports Claude Code running against other providers, such as Zai and Dashscope alongside Anthropic, and many more models, including the GLM series from Zai, kat-coder from Kwaipilot, kimi from Moonshot, MiniMax, sonnet-4, sonnet-4.5, and Qwen-Max.

The original ccusage project is designed specifically for Anthropic's Claude Code and doesn't account for these additional providers and models. better-ccusage maintains full compatibility with ccusage while adding comprehensive support for them.

better-ccusage is the main CLI tool for analyzing Claude Code and Droid usage from local JSONL files, with support for multiple AI providers and models, including Anthropic, Zai, all GLM models (including GLM-5-Turbo), and kat-coder models. Track daily, monthly, and session-based usage with beautiful tables and live monitoring.

@better-ccusage/codex is the companion tool for analyzing OpenAI Codex usage. It offers the same powerful features as better-ccusage, tailored for Codex users, including GPT-5 support and 1M token context windows.

@better-ccusage/mcp is a Model Context Protocol server that exposes better-ccusage data to Claude Desktop and other MCP-compatible tools. It enables real-time usage tracking directly in your AI workflows.
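To make the idea of analyzing local JSONL files concrete, here is a minimal sketch of summing tokens per day from JSONL text. The record shape (`timestamp`, `model`, `inputTokens`, `outputTokens`) and the function names are assumptions made for illustration; the real log fields and the way better-ccusage reads them may differ.

```typescript
// Hypothetical shape of one usage record; real JSONL fields may differ.
interface UsageRecord {
  timestamp: string; // ISO 8601, e.g. "2025-05-25T10:15:00Z"
  model: string;
  inputTokens: number;
  outputTokens: number;
}

// Parse JSONL text: one JSON object per non-empty line.
// Lines that are not valid JSON are skipped.
function parseJsonl(text: string): UsageRecord[] {
  const records: UsageRecord[] = [];
  for (const line of text.split("\n")) {
    const trimmed = line.trim();
    if (trimmed === "") continue;
    try {
      records.push(JSON.parse(trimmed) as UsageRecord);
    } catch {
      // Ignore malformed lines.
    }
  }
  return records;
}

// Sum input and output tokens per UTC calendar day (YYYY-MM-DD).
function totalTokensByDay(records: UsageRecord[]): Record<string, number> {
  const totals: Record<string, number> = {};
  for (const r of records) {
    const day = new Date(r.timestamp).toISOString().slice(0, 10);
    totals[day] = (totals[day] ?? 0) + r.inputTokens + r.outputTokens;
  }
  return totals;
}

// Made-up sample data using model names mentioned in this README.
const sample = [
  '{"timestamp":"2025-05-25T10:15:00Z","model":"MiniMax-M2","inputTokens":1200,"outputTokens":300}',
  '{"timestamp":"2025-05-25T18:40:00Z","model":"kimi-for-coding","inputTokens":800,"outputTokens":200}',
  "not json",
  '{"timestamp":"2025-05-26T09:00:00Z","model":"claude-sonnet-4-20250514","inputTokens":500,"outputTokens":100}',
].join("\n");

const dailyTotals = totalTokensByDay(parseJsonl(sample));
console.log(dailyTotals);
```

Grouping by UTC day keeps the sketch deterministic; a real report would apply the timezone chosen by the user.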

Thanks to better-ccusage's incredibly small bundle size, you can run it directly without installation:

# Recommended - always include @latest to ensure you get the newest version

npx better-ccusage@latest

bunx better-ccusage

# Alternative package runners

pnpm dlx better-ccusage

pnpx better-ccusage

# Using deno (with security flags)

deno run -E -R=$HOME/.claude/projects/ -S=homedir -N='raw.githubusercontent.com:443' npm:better-ccusage@latest

💡 Important: We strongly recommend using the @latest suffix with npx (e.g., npx better-ccusage@latest) to ensure you're running the most recent version with the latest features and bug fixes.

Analyze OpenAI Codex usage with our companion tool @better-ccusage/codex:

# Recommended - always include @latest

npx @better-ccusage/codex@latest

bunx @better-ccusage/codex@latest # ⚠️ MUST include @latest with bunx

# Alternative package runners

pnpm dlx @better-ccusage/codex

pnpx @better-ccusage/codex

# Using deno (with security flags)

deno run -E -R=$HOME/.codex/ -S=homedir -N='raw.githubusercontent.com:443' npm:@better-ccusage/codex@latest

⚠️ Critical for bunx users: Bun 1.2.x's bunx prioritizes binaries matching the package name suffix when given a scoped package. For @better-ccusage/codex, it looks for a codex binary in PATH first. If you have an existing codex command installed (e.g., GitHub Copilot's codex), that will be executed instead. Always use bunx @better-ccusage/codex@latest with the version tag to force bunx to fetch and run the correct package.

Integrate better-ccusage with Claude Desktop using @better-ccusage/mcp:

# Start MCP server for Claude Desktop integration

npx @better-ccusage/mcp@latest --type http --port 8080

This enables real-time usage tracking and analysis directly within Claude Desktop conversations.

# Basic usage

npx better-ccusage # Show daily report (default)

npx better-ccusage daily # Daily token usage and costs

npx better-ccusage monthly # Monthly aggregated report

npx better-ccusage session # Usage by conversation session

npx better-ccusage blocks # 5-hour billing windows

npx better-ccusage statusline # Compact status line for hooks (Beta)

# Live monitoring

npx better-ccusage blocks --live # Real-time usage dashboard

# Filters and options

npx better-ccusage daily --since 20250525 --until 20250530

npx better-ccusage daily --json # JSON output

npx better-ccusage daily --breakdown # Per-model cost breakdown

npx better-ccusage daily --timezone UTC # Use UTC timezone

npx better-ccusage daily --locale ja-JP # Use Japanese locale for date/time formatting

# Project analysis

npx better-ccusage daily --instances # Group by project/instance

npx better-ccusage daily --project myproject # Filter to specific project

npx better-ccusage daily --instances --project myproject --json # Combined usage

# Compact mode for screenshots/sharing

npx better-ccusage --compact # Force compact table mode

npx better-ccusage monthly --compact # Compact monthly report
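The blocks command above is described only as reporting 5-hour billing windows. As a rough illustration of what such grouping could look like, the sketch below opens a new window at the first entry that falls outside the previous one. The `UsageEntry` and `Block` types, the `groupIntoBlocks` name, and the windowing rule itself are assumptions, not the tool's documented behavior.

```typescript
const BLOCK_MS = 5 * 60 * 60 * 1000; // five hours in milliseconds

// Hypothetical input record: a timestamp plus a token count.
interface UsageEntry {
  timestamp: string; // ISO 8601
  tokens: number;
}

interface Block {
  start: Date; // when the window opens
  end: Date; // start + 5 hours
  tokens: number; // tokens used inside the window
}

// Assumed rule: a window opens at the first entry that is not
// covered by the previous window and lasts exactly five hours.
function groupIntoBlocks(entries: UsageEntry[]): Block[] {
  const sorted = entries
    .slice()
    .sort((a, b) => Date.parse(a.timestamp) - Date.parse(b.timestamp));
  const blocks: Block[] = [];
  for (const entry of sorted) {
    const t = Date.parse(entry.timestamp);
    const current = blocks[blocks.length - 1];
    if (current !== undefined && t < current.end.getTime()) {
      current.tokens += entry.tokens; // still inside the open window
    } else {
      blocks.push({
        start: new Date(t),
        end: new Date(t + BLOCK_MS),
        tokens: entry.tokens,
      });
    }
  }
  return blocks;
}

// Made-up sample entries.
const blocks = groupIntoBlocks([
  { timestamp: "2025-05-25T12:30:00Z", tokens: 50 },
  { timestamp: "2025-05-25T09:00:00Z", tokens: 100 },
  { timestamp: "2025-05-25T14:30:00Z", tokens: 25 },
  { timestamp: "2025-05-25T20:00:00Z", tokens: 10 },
]);
for (const b of blocks) {
  console.log(b.start.toISOString(), b.end.toISOString(), b.tokens);
}
```

Other rules are equally plausible, for example aligning window starts to the top of the hour, so treat this only as a way to reason about the report.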

better-ccusage extends the original ccusage functionality with automatic support for multiple AI providers:

How It Works:

- "kimi-for-coding" ✓
- "moonshot/kimi-for-coding" ✓
- $0.00 costs from unfound models

Moonshot AI (kimi-* models):

- kimi-k2-0905-preview, kimi-k2-0711-preview, kimi-k2-turbo-preview
- kimi-k2-thinking, kimi-k2-thinking-turbo, kimi-for-coding

MiniMax:

- MiniMax-M2

All GLM Models

Anthropic (Claude models):

- claude-sonnet-4-20250514, claude-sonnet-4-5-20250929, etc.

Zai Provider:

And More:

- blocks --live
- --breakdown flag
- --since and --until
- --compact flag to force compact table layout, perfect for screenshots and sharing
- --json
- --instances flag and filter by specific projects
- --timezone option
- --locale option (e.g., en-US, ja-JP, de-DE)

| Feature | ccusage | better-ccusage |
|---|---|---|
| Anthropic Models | ✅ | ✅ |
| Moonshot (kimi) Models | ❌ | ✅ |
| MiniMax Models | ❌ | ✅ |
| GLM* Models | ❌ | ✅ |
| Zai Provider | ❌ | ✅ |
| kat-coder | ❌ | ✅ |
| Automatic Provider Detection | ❌ | ✅ |
| Multi-Provider Support | ❌ | ✅ |
| Cost Calculation by Provider | ❌ | ✅ |
| Original ccusage Features | ✅ | ✅ |
| Show prompt usage for Coding | ❌ | ✅ |
| Droid usage | ❌ | ✅ |
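The How It Works examples show both "kimi-for-coding" and "moonshot/kimi-for-coding" being recognized, and the table lists cost calculation by provider. One way such matching could work is to strip an optional provider prefix before the pricing lookup. The sketch below assumes exactly that; the `PRICES` table, its numbers, and all function names are hypothetical and are not taken from better-ccusage.

```typescript
// Hypothetical per-million-token prices in USD; not real pricing data.
interface Price {
  inputPerMTok: number;
  outputPerMTok: number;
}

const PRICES: { [model: string]: Price | undefined } = {
  "kimi-for-coding": { inputPerMTok: 1, outputPerMTok: 2 },
};

// Drop an optional "provider/" prefix so both spellings share one key.
function normalizeModelName(model: string): string {
  const slash = model.lastIndexOf("/");
  return slash === -1 ? model : model.slice(slash + 1);
}

// Try the exact name first, then the name without its provider prefix.
function lookupPrice(model: string): Price | undefined {
  return PRICES[model] ?? PRICES[normalizeModelName(model)];
}

// Return undefined for unknown models so the caller can flag them
// instead of silently reporting a $0.00 cost.
function costUsd(
  model: string,
  inputTokens: number,
  outputTokens: number,
): number | undefined {
  const price = lookupPrice(model);
  if (price === undefined) return undefined;
  return (
    (inputTokens * price.inputPerMTok + outputTokens * price.outputPerMTok) / 1e6
  );
}

const bareCost = costUsd("kimi-for-coding", 1000000, 500000);
const prefixedCost = costUsd("moonshot/kimi-for-coding", 1000000, 500000);
const unknownCost = costUsd("some-unknown-model", 1000, 1000);
console.log(bareCost, prefixedCost, unknownCost);
```

Returning `undefined` for an unknown model, rather than zero, keeps a missing pricing entry visible in the report.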

FAQs

The npm package better-ccusage receives a total of 37 weekly downloads, so its popularity is classified as not popular.

We found that better-ccusage demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 1 open source maintainer collaborating on the project.
