Research

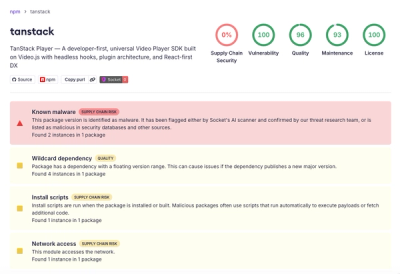

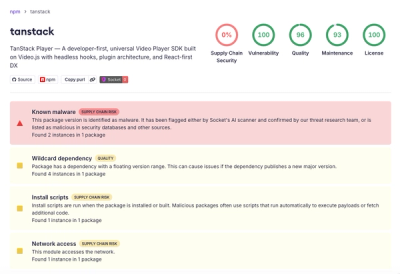

Malicious npm Package Brand-Squats TanStack to Exfiltrate Environment Variables

A brand-squatted TanStack npm package used postinstall scripts to steal .env files and exfiltrate developer secrets to an attacker-controlled endpoint.

jstextfromimage


Get descriptions of images from OpenAI, Azure OpenAI and Anthropic Claude models. Supports both URLs and local files with batch processing capabilities.

A TypeScript/JavaScript library for obtaining detailed descriptions of images from AI models, including OpenAI's GPT-4 Vision, Azure OpenAI, and Anthropic Claude. It accepts image URLs and local file paths, and supports batch processing.

npm install jstextfromimage

You can use the services either with environment variables or direct initialization.

import { openai, claude, azureOpenai } from 'jstextfromimage';

// Services will automatically use environment variables
const description = await openai.getDescription('https://example.com/image.jpg');

import { OpenAIService, ClaudeService, AzureOpenAIService } from 'jstextfromimage';

// OpenAI custom instance
const customOpenAI = new OpenAIService('your-openai-api-key');

// Claude custom instance
const customClaude = new ClaudeService('your-claude-api-key');

// Azure OpenAI custom instance
const customAzure = new AzureOpenAIService({
  apiKey: 'your-azure-api-key',
  endpoint: 'your-azure-endpoint',
  deploymentName: 'your-deployment-name'
});

import { openai } from 'jstextfromimage';

// Single image analysis
const description = await openai.getDescription('https://example.com/image.jpg', {
  prompt: "Describe the main elements of this image",
  maxTokens: 500,
  model: 'gpt-4o'
});

// Batch processing
const imageUrls = [
  'https://example.com/image1.jpg',
  'https://example.com/image2.jpg',
  'https://example.com/image3.jpg'
];

const results = await openai.getDescriptionBatch(imageUrls, {
  prompt: "Analyze this image in detail",
  maxTokens: 300,
  concurrency: 2,
  model: 'gpt-4o'
});

// Process results
results.forEach(result => {
  if (result.error) {
    console.error(`Error processing ${result.imageUrl}: ${result.error}`);
  } else {
    console.log(`Description for ${result.imageUrl}: ${result.description}`);
  }
});
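The `concurrency` option caps how many images are processed at the same time. The library's internals are not shown in this README, so the following is only a minimal sketch of how such a limit can work. `mapWithConcurrency` is a hypothetical helper, not part of jstextfromimage.

```typescript
// Hypothetical helper: run `worker` over `items`, at most `limit` at a time.
// Results keep the input order. Not the library's actual implementation.
async function mapWithConcurrency<T, R>(
  items: T[],
  limit: number,
  worker: (item: T) => Promise<R>
): Promise<R[]> {
  const results: R[] = new Array(items.length);
  let next = 0; // index of the next unclaimed item

  // Each runner keeps claiming items until none remain.
  async function runner(): Promise<void> {
    while (next < items.length) {
      const index = next++;
      results[index] = await worker(items[index]);
    }
  }

  const runnerCount = Math.max(1, Math.min(limit, items.length));
  await Promise.all(Array.from({ length: runnerCount }, () => runner()));
  return results;
}
```

With `limit` set to 2, as in the batch call above, no more than two `worker` calls are in flight at any moment, which is one way to stay under a provider's rate limits.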

import { claude } from 'jstextfromimage';

// Single image analysis
const description = await claude.getDescription('https://example.com/artwork.jpg', {
  prompt: "Analyze this artwork, including style and composition",
  maxTokens: 1000,
  model: 'claude-3-sonnet-20240229'
});

// Batch processing
const artworkUrls = [
  'https://example.com/artwork1.jpg',
  'https://example.com/artwork2.jpg'
];

const analyses = await claude.getDescriptionBatch(artworkUrls, {
  prompt: "Provide a detailed art analysis",
  maxTokens: 800,
  concurrency: 2,
  model: 'claude-3-sonnet-20240229'
});

import { azureOpenai } from 'jstextfromimage';

// Single image analysis
const description = await azureOpenai.getDescription('https://example.com/scene.jpg', {
  prompt: "Describe this scene in detail",
  maxTokens: 400,
  systemPrompt: "You are an expert in visual analysis."
});

// Batch processing
const sceneUrls = [
  'https://example.com/scene1.jpg',
  'https://example.com/scene2.jpg'
];

const analyses = await azureOpenai.getDescriptionBatch(sceneUrls, {
  prompt: "Analyze the composition and mood",
  maxTokens: 500,
  concurrency: 3,
  systemPrompt: "You are an expert cinematographer."
});

// OpenAI defaults
{
  model: 'gpt-4o',
  maxTokens: 300,
  prompt: "What's in this image?",
  concurrency: 3 // for batch processing
}

// Claude defaults
{
  model: 'claude-3-sonnet-20240229',
  maxTokens: 300,
  prompt: "What's in this image?",
  concurrency: 3
}

// Azure OpenAI defaults
{
  maxTokens: 300,
  prompt: "What's in this image?",
  systemPrompt: "You are a helpful assistant.",
  concurrency: 3
}
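How caller options combine with these defaults is not spelled out here. A common pattern, shown only as an assumption about the behavior, is to spread the defaults first and the caller's options second, so any supplied field overrides its default. `OPENAI_DEFAULTS` and `resolveOptions` are illustrative names, not library exports.

```typescript
interface OpenAIOptions {
  prompt?: string;
  maxTokens?: number;
  concurrency?: number;
  model?: string;
}

// Values copied from the OpenAI defaults listed above.
const OPENAI_DEFAULTS: Required<OpenAIOptions> = {
  model: 'gpt-4o',
  maxTokens: 300,
  prompt: "What's in this image?",
  concurrency: 3,
};

// Hypothetical merge: caller-supplied fields win over defaults.
function resolveOptions(options: OpenAIOptions = {}): Required<OpenAIOptions> {
  return { ...OPENAI_DEFAULTS, ...options };
}

const resolved = resolveOptions({ maxTokens: 500 });
console.log(resolved.maxTokens); // 500
console.log(resolved.model);     // gpt-4o
```

Under this pattern, passing only `maxTokens` leaves `model`, `prompt`, and `concurrency` at their default values.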

import { openai } from 'jstextfromimage';

// Single local file
const description = await openai.getDescription('/path/to/local/image.jpg', {
  prompt: "Describe this image",
  maxTokens: 300,
  model: 'gpt-4o'
});

// Mix of local files and URLs in batch processing
const images = [
  '/path/to/local/image1.jpg',
  'https://example.com/image2.jpg',
  '/path/to/local/image3.png'
];

const results = await openai.getDescriptionBatch(images, {
  prompt: "Analyze each image",
  maxTokens: 300,
  concurrency: 2
});
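Because the same argument accepts both URLs and file paths, the input has to be classified at some point. How jstextfromimage does this is not stated, so the sketch below assumes a simple rule: anything starting with `http://` or `https://` is remote, and everything else is treated as a local path. `classifyImageSource` is a hypothetical helper.

```typescript
type ImageSource =
  | { kind: 'url'; value: string }
  | { kind: 'file'; value: string };

// Assumed rule: http(s) prefixes are remote, everything else is a local path.
function classifyImageSource(input: string): ImageSource {
  return /^https?:\/\//i.test(input)
    ? { kind: 'url', value: input }
    : { kind: 'file', value: input };
}

const mixedInputs = [
  '/path/to/local/image1.jpg',
  'https://example.com/image2.jpg',
  '/path/to/local/image3.png',
];

for (const input of mixedInputs) {
  const source = classifyImageSource(input);
  console.log(`${source.kind}: ${source.value}`);
}
```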

# OpenAI
OPENAI_API_KEY=your-openai-api-key

# Claude
ANTHROPIC_API_KEY=your-claude-api-key

# Azure OpenAI
AZURE_OPENAI_API_KEY=your-azure-api-key
AZURE_OPENAI_ENDPOINT=your-azure-endpoint
AZURE_OPENAI_DEPLOYMENT=your-deployment-name
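A missing variable typically surfaces only when the first request fails, so it can help to check the environment up front. The following sketch uses the variable names listed above. `REQUIRED_ENV` and `missingEnv` are hypothetical names, and the grouping by service is an assumption based on that list.

```typescript
type Env = Record<string, string | undefined>;
type ServiceName = 'openai' | 'claude' | 'azureOpenai';

// Variable names taken from the list above, grouped by service.
const REQUIRED_ENV: Record<ServiceName, readonly string[]> = {
  openai: ['OPENAI_API_KEY'],
  claude: ['ANTHROPIC_API_KEY'],
  azureOpenai: [
    'AZURE_OPENAI_API_KEY',
    'AZURE_OPENAI_ENDPOINT',
    'AZURE_OPENAI_DEPLOYMENT',
  ],
};

// Hypothetical helper: return the names of variables that are unset or empty.
function missingEnv(service: ServiceName, env: Env): string[] {
  return REQUIRED_ENV[service].filter((name) => !env[name]);
}

const missing = missingEnv('azureOpenai', {
  AZURE_OPENAI_API_KEY: 'your-azure-api-key',
});
console.log(missing.join(', ')); // AZURE_OPENAI_ENDPOINT, AZURE_OPENAI_DEPLOYMENT
```

In a Node.js program the second argument would normally be `process.env`.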

// Base options for all services
interface BaseOptions {
  prompt?: string;
  maxTokens?: number;
  concurrency?: number; // For batch processing
}

// OpenAI specific options
interface OpenAIOptions extends BaseOptions {
  model?: string;
}

// Claude specific options
interface ClaudeOptions extends BaseOptions {
  model?: string;
}

// Azure OpenAI specific options
interface AzureOpenAIOptions extends BaseOptions {
  systemPrompt?: string;
}

// Azure OpenAI configuration
interface AzureOpenAIConfig {
  apiKey?: string;
  endpoint?: string;
  deploymentName?: string;
  apiVersion?: string;
}

// Batch processing results
interface BatchResult {
  imageUrl: string;
  description: string;
  error?: string;
}
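Since each `BatchResult` carries an optional `error`, a batch can be split into successes and failures before further processing. The sketch below redeclares the interface so it is self-contained. `partitionResults` is a hypothetical helper and the sample data is made up for illustration.

```typescript
interface BatchResult {
  imageUrl: string;
  description: string;
  error?: string;
}

// Hypothetical helper: split batch output into successes and failures.
function partitionResults(results: BatchResult[]): {
  succeeded: BatchResult[];
  failed: BatchResult[];
} {
  const succeeded: BatchResult[] = [];
  const failed: BatchResult[] = [];
  for (const result of results) {
    (result.error ? failed : succeeded).push(result);
  }
  return { succeeded, failed };
}

// Illustrative data only; real results come from getDescriptionBatch.
const sample: BatchResult[] = [
  { imageUrl: 'https://example.com/image1.jpg', description: 'A red bicycle.' },
  { imageUrl: 'https://example.com/image2.jpg', description: '', error: 'Request timed out' },
];

const { succeeded, failed } = partitionResults(sample);
console.log(succeeded.length, failed.length); // 1 1
```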

import { openai } from 'jstextfromimage';

// Single image with error handling
try {
  const description = await openai.getDescription(imageUrl, {
    maxTokens: 300
  });
  console.log(description);
} catch (error) {
  console.error('Failed to process image:', error);
}

// Batch processing with retry
async function processWithRetry(imageUrls: string[], maxRetries = 3) {
  const results = await openai.getDescriptionBatch(imageUrls, {
    maxTokens: 300,
    concurrency: 2
  });

  // Retry only the items that are still failing
  let failedItems = results.filter(r => r.error);
  let retryCount = 0;

  while (failedItems.length > 0 && retryCount < maxRetries) {
    const retryUrls = failedItems.map(item => item.imageUrl);
    const retryResults = await openai.getDescriptionBatch(retryUrls, {
      maxTokens: 300,
      concurrency: 1 // Lower concurrency for retries
    });

    // Update results with successful retries
    retryResults.forEach(result => {
      if (!result.error) {
        const index = results.findIndex(r => r.imageUrl === result.imageUrl);
        if (index !== -1) {
          results[index] = result;
        }
      }
    });

    // Recompute the failure list so items that succeeded are not retried again
    failedItems = results.filter(r => r.error);
    retryCount++;
  }

  return results;
}

# Install dependencies
npm install

# Run tests
npm test

# Build the project
npm run build

# Run linting
npm run lint

To contribute, create a feature branch, commit your changes, and push the branch:

git checkout -b feature/amazing-feature
git commit -am 'feat: add amazing feature'
git push origin feature/amazing-feature

This project is licensed under the MIT License; see the LICENSE file for details.

For support, please open an issue on GitHub.

FAQs


The npm package jstextfromimage receives 1 weekly download, so its popularity is classified as not popular.

jstextfromimage has an unhealthy release cadence and low project activity: the last version was released a year ago. It has 0 open source maintainers collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Research

A brand-squatted TanStack npm package used postinstall scripts to steal .env files and exfiltrate developer secrets to an attacker-controlled endpoint.

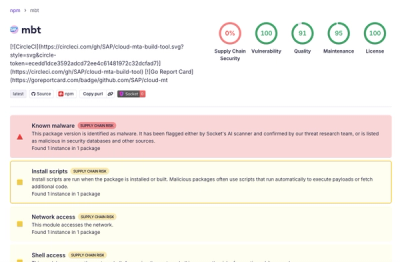

Research

Compromised SAP CAP npm packages download and execute unverified binaries, creating urgent supply chain risk for affected developers and CI/CD environments.

Company News

Socket has acquired Secure Annex to expand extension security across browsers, IDEs, and AI tools.