kappa-view-query

kappa-view-query is a materialised view to be used with kappa-core. It provides an API that allows you to define your own indexes and execute custom map-filter-reduce queries over your indexes.

kappa-view-query is inspired by flumeview-query. It uses the same scoring system for determining the most efficient index relevant to the provided query.

kappa-view-query uses a key / value store to compose a single index either in memory (using level-mem) or stored as a file (using level). Each time a message is published to a feed, or is received via replication, kappa-view-query checks to see if any of the message's fields match any of the indexes.

We can define an index like this:

{

key: 'typ',

value: [

['value', 'type'],

['value', 'timestamp']

]

}

The index above tells the view to store every message that has both a value.type and a value.timestamp field. When a matching message reaches the view, the view saves a reference to it in the key / value store. The key is a single string compiled from the name of the index and the values of the matching fields. The value is a reference to the feed key and the sequence number, so we can retrieve that message from the correct hypercore later when we perform a query.

For example:

{

key: 'typ!chat/message!1566481592277',

value: 'f38b5a5e9603ffc6c24f4431c271999f08f43fc67379faf13c9d75adda01e63c@3'

}
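As a rough illustration of how such an entry could be derived, here is a minimal sketch. The helper names (getPath, toIndexEntry) and the message shape ({ key, seq, value }) are assumptions made for this example, not part of the kappa-view-query API; only the '!' separator and the '@' reference format are taken from the entry shown above.

```javascript
// Hypothetical helper: read a nested field by path, e.g. ['value', 'type']
function getPath (obj, path) {
  return path.reduce((acc, k) => (acc == null ? undefined : acc[k]), obj)
}

// Hypothetical helper: compile an index definition and a message into a
// key / value pair. Returns null when the message lacks an indexed field.
function toIndexEntry (index, msg) {
  const fields = index.value.map((path) => getPath(msg, path))
  if (fields.some((f) => f === undefined)) return null
  return {
    key: [index.key, ...fields].join('!'),
    value: `${msg.key}@${msg.seq}`
  }
}

const typ = { key: 'typ', value: [['value', 'type'], ['value', 'timestamp']] }

// Assumed message shape: feed key, sequence number and the stored value
const msg = {
  key: 'f38b5a5e9603ffc6c24f4431c271999f08f43fc67379faf13c9d75adda01e63c',
  seq: 3,
  value: { type: 'chat/message', timestamp: 1566481592277 }
}

const entry = toIndexEntry(typ, msg)
console.log(entry)
```

A message without a value.timestamp field would produce null here, which corresponds to the view skipping messages that do not match the index.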

Let's write a query. Say we want all messages of type chat/message published between 13:00 and 15:00 (UTC) on 22-08-2019. Here's what our query would look like:

var query = [{

$filter: {

value: {

type: 'chat/message',

timestamp: { $gte: 1566478800000, $lte: 1566486000000 }

}

}

}]

When we execute this query, our scoring system will first determine which index we previously provided gives us the best lens on the data. It does this by matching the requested fields, in this case, value.type and value.timestamp. The scoring system can be found at query.js.
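To make the idea of index selection concrete, here is a deliberately simplified sketch. It is not the scoring code from query.js or flumeview-query; it only assumes that an index scores one point for each leading field path that the query's $filter constrains. The names hasPath, score and selectIndex are hypothetical.

```javascript
// True when the filter object constrains the given path
function hasPath (obj, path) {
  let cur = obj
  for (const k of path) {
    if (cur === null || typeof cur !== 'object' || !(k in cur)) return false
    cur = cur[k]
  }
  return true
}

// Count how many leading index paths the filter constrains
function score (index, filter) {
  let n = 0
  for (const path of index.value) {
    if (!hasPath(filter, path)) break
    n++
  }
  return n
}

// Pick the index with the highest score, or null when none match
function selectIndex (indexes, query) {
  const stage = query.find((s) => s.$filter)
  const filter = stage ? stage.$filter : {}
  let best = null
  let bestScore = 0
  for (const index of indexes) {
    const s = score(index, filter)
    if (s > bestScore) {
      best = index
      bestScore = s
    }
  }
  return best
}

const indexes = [
  { key: 'log', value: [['value', 'timestamp']] },
  { key: 'typ', value: [['value', 'type'], ['value', 'timestamp']] }
]

const byTypeAndTime = [{
  $filter: {
    value: {
      type: 'chat/message',
      timestamp: { $gte: 1566478800000, $lte: 1566486000000 }
    }
  }
}]

const chosen = selectIndex(indexes, byTypeAndTime)
console.log(chosen.key)
```

Under this simplified rule, a query that filters only on value.timestamp would fall back to the log index, because typ leads with value.type.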

In the case of the above dataset and query, the closest matching index is the one we provided above, named typ. kappa-view-query can then reduce the scope of the index significantly by reading only the references in level or level-mem whose keys are greater than or equal to typ!chat/message!1566478800000 and less than or equal to typ!chat/message!1566486000000. This gives us a subset of references with which we can fetch the actual messages from our hypercore feeds.
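The range scan can be pictured with a plain sorted array standing in for the level store. This is only a sketch: the keys below are made-up sample data, and the range helper is hypothetical. It relies on the fact that the timestamps all have the same number of digits, so string comparison orders them the same way as numeric comparison.

```javascript
// Stand-in for the sorted keys held in level or level-mem (sample data)
const keys = [
  'log!1566470000000',
  'typ!chat/message!1566470000000',
  'typ!chat/message!1566481592277',
  'typ!chat/message!1566485000000',
  'typ!chat/message!1566490000000',
  'typ!user/about!1566481000000'
].sort()

// Hypothetical helper: keep keys within an inclusive lexicographic range
function range (sortedKeys, { gte, lte }) {
  return sortedKeys.filter((k) => k >= gte && k <= lte)
}

// 13:00 to 15:00 UTC on 22-08-2019, for messages of type chat/message
const matches = range(keys, {
  gte: 'typ!chat/message!1566478800000',
  lte: 'typ!chat/message!1566486000000'
})

console.log(matches)
```

Only the two chat/message keys inside the time window survive; the log entry, the user/about entry and the chat messages outside the window are never touched.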

This example uses a single hypercore and collects all messages at a given point in time.

const Kappa = require('kappa-core')

const hypercore = require('hypercore')

const ram = require('random-access-memory')

const collect = require('collect-stream')

const memdb = require('level-mem')

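const sub = require('subleveldown')

// assumption: createHypercoreSource is used below but never required in the original; this path is a guess based on kappa-core's sources directory
const createHypercoreSource = require('kappa-core/sources/hypercore')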

const Query = require('./')

const { validator, fromHypercore } = require('./util')

const { cleanup, tmp } = require('./test/util')

const seedData = require('./test/seeds.json')

const core = new Kappa()

const feed = hypercore(ram, { valueEncoding: 'json' })

const db = memdb()

core.use('query', createHypercoreSource({ feed, db: sub(db, 'state') }), Query(sub(db, 'view'), {

indexes: [

{ key: 'log', value: [['value', 'timestamp']] },

{ key: 'typ', value: [['value', 'type'], ['value', 'timestamp']] }

],

// you can pass a custom validator function to ensure all messages entering a feed match a specific format

validator,

// implement your own getMessage function, and perform any desired validation on each message returned by the query

getMessage: fromHypercore(feed)

}))

feed.append(seedData, (err, _) => {

core.ready('query', () => {

const query = [{ $filter: { value: { type: 'chat/message' } } }]

// grab then log all chat/message message types up until this point

collect(core.view.query.read({ query }), (err, chats) => {

if (err) return console.error(err)

console.log(chats)

// grab then log all user/about message types up until this point

collect(core.view.query.read({ query: [{ $filter: { value: { type: 'user/about' } } }] }), (err, users) => {

if (err) return console.error(err)

console.log(users)

})

})

})

})

This example uses a multifeed instance for managing hypercores and sets up two live streams to dump messages to the console as they arrive.

const Kappa = require('kappa-core')

const multifeed = require('multifeed')

const ram = require('random-access-memory')

const collect = require('collect-stream')

const memdb = require('level-mem')

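const sub = require('subleveldown')

// assumption: createMultifeedSource is used below but never required in the original; this path is a guess based on kappa-core's sources directory
const createMultifeedSource = require('kappa-core/sources/multifeed')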

const Query = require('./')

const { validator, fromMultifeed } = require('./util')

const { cleanup, tmp } = require('./test/util')

const seedData = require('./test/seeds.json')

const core = new Kappa()

const feeds = multifeed(ram, { valueEncoding: 'json' })

const db = memdb()

core.use('query', createMultifeedSource({ feeds, db: sub(db, 'state') }), Query(sub(db, 'view'), {

indexes: [

{ key: 'log', value: [['value', 'timestamp']] },

{ key: 'typ', value: [['value', 'type'], ['value', 'timestamp']] }

],

validator,

// make sure you define your own getMessage function, otherwise nothing will be returned by your queries

getMessage: fromMultifeed(feeds)

}))

core.ready('query', () => {

// setup a live query to first log all chat/message

core.view.query.read({

query: [{ $filter: { value: { type: 'chat/message' } } }],

live: true,

old: false

}).on('data', (msg) => {

if (msg.sync) return next()

console.log(msg)

})

function next () {

// then set up a second live query to log all user/about messages

core.view.query.read({

query: [{ $filter: { value: { type: 'user/about' } } }],

live: true,

old: false

}).on('data', (msg) => {

console.log(msg)

})

}

})

// then append a bunch of data to two different feeds in a multifeed

feeds.writer('one', (err, one) => {

feeds.writer('two', (err, two) => {

one.append(seedData.slice(0, 3))

two.append(seedData.slice(3, 5))

})

})

const View = require('kappa-view-query')

The first argument, leveldb, must be a LevelUP or LevelDOWN instance.

The options must include a getMessage function, which the view calls with a stored index reference to fetch the corresponding message from its feed.
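A custom getMessage could look roughly like the sketch below, which uses an in-memory Map in place of real hypercore feeds. The (entry, callback) signature, the entry shape and the returned { key, seq, value } object are assumptions made for illustration; check the fromHypercore and fromMultifeed helpers in util for the actual contract.

```javascript
// In-memory stand-in for a set of feeds: feed key -> array of messages
const feeds = new Map([
  ['abc123', [{ type: 'user/about' }, { type: 'chat/message', content: 'hi' }]]
])

// Hypothetical factory: returns a getMessage function bound to the feeds
function fromMemory (feeds) {
  return function getMessage (entry, cb) {
    // entry.value is assumed to be a '<feed key>@<sequence number>' reference
    const at = entry.value.lastIndexOf('@')
    const key = entry.value.slice(0, at)
    const seq = Number(entry.value.slice(at + 1))
    const feed = feeds.get(key)
    if (!feed || !Number.isInteger(seq) || seq < 0 || seq >= feed.length) {
      return cb(new Error('message not found: ' + entry.value))
    }
    // This is also the place to validate the message before returning it
    cb(null, { key, seq, value: feed[seq] })
  }
}

const getMessage = fromMemory(feeds)

let result = null
getMessage({ key: 'typ!chat/message!1566481592277', value: 'abc123@1' }, (err, msg) => {
  if (err) return console.error(err)
  result = msg
  console.log(msg)
})
```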

// returns a readable stream

core.api.query.read(opts)

// returns information about index performance

core.api.query.explain(opts)

// append an index onto existing set

core.api.query.add(opts)

$ npm install kappa-view-query

kappa-view-query was built by @kyphae with assistance from @dominictarr. It uses @dominictarr's scoring system and query interface from flumeview-query.

pull-stream and flumeview-query as an external dependency, providing better compatibility with the rest of the kappa-db ecosystem.

core.api.query.read returns a regular readable node stream.

{ live: true } setup will now properly pipe messages through as they are indexed.

execute map-filter-reduce queries over a kappa-core

We found that kappa-view-query demonstrated a not healthy version release cadence and project activity because the last version was released a year ago. It has 1 open source maintainer collaborating on the project.

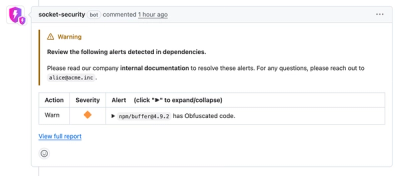

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Security News

The Rust Security Response WG is warning of phishing emails from rustfoundation.dev targeting crates.io users.

Product

Socket now lets you customize pull request alert headers, helping security teams share clear guidance right in PRs to speed reviews and reduce back-and-forth.

Product

Socket's Rust support is moving to Beta: all users can scan Cargo projects and generate SBOMs, including Cargo.toml-only crates, with Rust-aware supply chain checks.