Security News

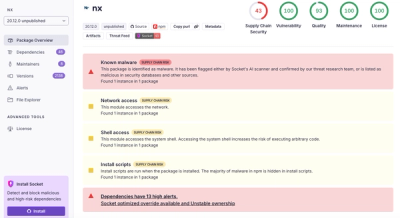

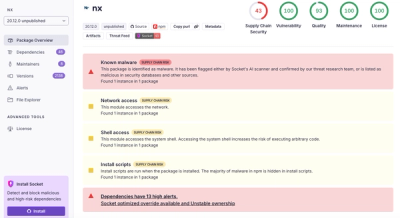

Nx npm Packages Compromised in Supply Chain Attack Weaponizing AI CLI Tools

Malicious Nx npm versions stole secrets and wallet info using AI CLI tools; Socket’s AI scanner detected the supply chain attack and flagged the malware.

lock_and_cache


Most caching libraries don't do locking, meaning that >1 process can be calculating a cached value at the same time. Since you presumably cache things because they cost CPU, database reads, or money, doesn't it make sense to lock while caching?

Lock and cache using Redis!


import { LockAndCache } from "lock_and_cache";

const cache = new LockAndCache();

async function computeStockQuote(symbol: string) {
  // Fetch stock price from remote source, cache for one minute.
  //
  // Calling this multiple times in parallel will only run it once. The cache
  // key is based on the function name and arguments.
  return 100;
}

const stockQuote = cache.wrap({ ttl: 60 }, computeStockQuote);

// If you forget this, your process will never exit.
cache.close();

npm install --save lock_and_cache

lock_and_cache...

As you can see, most caching libraries only take care of (1) and (4) (well, and (5) of course).
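To make the locking idea concrete, here is a minimal in-memory sketch of the general lock-then-cache pattern: check the cache, wait for the lock, check the cache again, compute, store, release. It is not this library's implementation. The class name `InMemoryLockAndCache` and its `Map`-based stores are hypothetical stand-ins for the Redis cache and lock, and the step order is an assumption based on the common form of this pattern.

```typescript
// Hypothetical in-memory stand-in; the real library uses Redis for both stores.
type Entry = { value: unknown; expiresAt: number };

class InMemoryLockAndCache {
  private cache = new Map<string, Entry>();
  private locks = new Map<string, Promise<void>>();

  // Return a live cache entry, or undefined if missing or expired.
  private lookup(key: string): Entry | undefined {
    const entry = this.cache.get(key);
    if (entry !== undefined && entry.expiresAt > Date.now()) return entry;
    this.cache.delete(key);
    return undefined;
  }

  async get<T>(key: string, ttlSeconds: number, work: () => Promise<T>): Promise<T> {
    // Check the cache before touching any lock.
    const hit = this.lookup(key);
    if (hit !== undefined) return hit.value as T;

    // Wait until nobody else holds the lock for this key.
    while (this.locks.has(key)) {
      await this.locks.get(key);
    }

    // Check again: the value may have been computed while we waited.
    const hitAfterWait = this.lookup(key);
    if (hitAfterWait !== undefined) return hitAfterWait.value as T;

    // Take the lock, compute and store the value, then release the lock.
    let release!: () => void;
    this.locks.set(key, new Promise<void>((resolve) => { release = resolve; }));
    try {
      const value = await work();
      this.cache.set(key, { value, expiresAt: Date.now() + ttlSeconds * 1000 });
      return value;
    } finally {
      this.locks.delete(key);
      release();
    }
  }
}
```

The second cache check is the part that a cache without locking cannot offer: callers that waited on the lock reuse the freshly stored value instead of repeating the work.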

By default, you probably want to put locks and caches in separate databases:

export CACHE_URL=redis://redis:6379/2
export LOCK_URL=redis://redis:6379/3
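In these URLs, the trailing path segment selects the Redis logical database, so the two settings above point at databases 2 and 3 on the same server. The sketch below shows how such a URL breaks down. `parseRedisUrl` and `RedisTarget` are hypothetical names used only for illustration; they are not part of this library, and the URLs are hard-coded rather than read from the environment.

```typescript
// Hypothetical helper for illustration; not part of lock_and_cache.
type RedisTarget = { host: string; port: number; db: number };

function parseRedisUrl(url: string): RedisTarget {
  const parsed = new URL(url);
  if (parsed.protocol !== "redis:") {
    throw new Error(`Expected a redis:// URL, got ${url}`);
  }
  // The path ("/2") names the logical database; an empty path means database 0.
  const dbText = parsed.pathname.replace(/^\//, "");
  const db = dbText === "" ? 0 : Number(dbText);
  if (!Number.isInteger(db) || db < 0) {
    throw new Error(`Invalid database index in ${url}`);
  }
  // 6379 is the conventional Redis port when none is given.
  const port = parsed.port === "" ? 6379 : Number(parsed.port);
  return { host: parsed.hostname, port, db };
}

const cacheTarget = parseRedisUrl("redis://redis:6379/2");
const lockTarget = parseRedisUrl("redis://redis:6379/3");
```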

We have full JSDoc for this library, but these are the highlights at the time of writing.

/**
 * Valid cache keys.
 *
 * These may only contain values that we can serialize consistently.
 */
export type CacheKey = string | number | boolean | null | CacheKey[];
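As a rough illustration of what "serialize consistently" can mean for this type, the sketch below turns a `CacheKey` into a string with `JSON.stringify`. Whether the library serializes keys this way is an assumption; `serializeKey` is a hypothetical helper, not part of its API.

```typescript
type CacheKey = string | number | boolean | null | CacheKey[];

// Hypothetical helper: equal keys always produce the same string, and keys of
// different types (the string "1" versus the number 1) stay distinct.
function serializeKey(key: CacheKey): string {
  return JSON.stringify(key);
}

const quoteKey = serializeKey(["computeStockQuote", "AAPL"]);
const nestedKey = serializeKey(["report", [2020, 8], true, null]);
```

One caveat with this approach: `JSON.stringify` writes `NaN` and `Infinity` as `null`, so such numbers would collide with a real `null` key.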

/** Options that can be passed to `get` and `wrap`. */
export type GetOptions = {
  /**
   * "Time to live." The time to cache a value, in seconds.
   *
   * Defaults to `DEFAULT_TTL`.
   */
  ttl?: number;
};

/**
 * A user-supplied function that computes a value to cache.
 *
 * This function may have an optional `displayName` property, which may be used
 * as part of the cache key if this function is passed to `wrap`.
 *
 * By default, `WorkFn` functions do not accept arguments, but if you're using
 * the `wrap` feature, you may want to supply an optional argument list.
 */
type WorkFn<T, Args extends CacheKey[] = []> = ((
  ...args: Args
) => Promise<T>) & {
  displayName?: string;
};

class LockAndCache {
  /**
   * Create a new `LockAndCache`.
   *
   * @param caches Caches to use. Defaults to `tieredCache()`.
   * @param lockClient Redis client to use for locking. Defaults to using
   *   `DEFAULT_REDIS_OPTIONS`.
   */
  constructor({
    lockClient = defaultRedisLockClient(),
    cacheClient = defaultRedisCacheClient(),
  } = {});

  /**
   * Shut down this cache manager. No other functions may be called after this.
   */
  close(): void;

  /**
   * Either fetch a value from our cache, or compute it, cache it and return it.
   *
   * @param key The cache key to use.
   * @param options Cache options. `ttl` is in seconds.
   * @param work A function which performs an expensive calculation that we want
   *   to cache.
   */
  async get<T>(key: CacheKey, options: GetOptions, work: WorkFn<T>): Promise<T>;

  /**
   * Given a work function, wrap it in a `cache.get`, using the function's
   * arguments as part of our cache key.
   *
   * @param options Cache options. `name` is the base name of our cache key.
   *   `ttl` is in seconds, and it defaults to `DEFAULT_TTL`.
   * @param work The work function to wrap. If `options.name` is not specified,
   *   either `work.displayName` or `work.name` must be a non-empty string.
   */
  wrap<T, Args extends CacheKey[] = []>(
    options: GetOptions & { name?: string },
    work: WorkFn<T, Args>
  ): WorkFn<T, Args>;
}
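The `wrap` documentation above says the cache key combines a base name with the function's arguments. The sketch below shows one way such a key could be derived. `keyFor` is a hypothetical helper, and the precedence it uses (`options.name`, then `displayName`, then the function's own `name`) and the array layout of the key are assumptions rather than documented behavior.

```typescript
type CacheKey = string | number | boolean | null | CacheKey[];

type WorkFn<T, Args extends CacheKey[] = []> = ((
  ...args: Args
) => Promise<T>) & {
  displayName?: string;
};

// Hypothetical helper: builds a key from a base name plus the call arguments.
function keyFor<T, Args extends CacheKey[]>(
  options: { name?: string },
  work: WorkFn<T, Args>,
  args: Args
): CacheKey {
  // Assumed precedence: explicit option, then displayName, then function name.
  const name = options.name || work.displayName || work.name;
  if (!name) {
    throw new Error("Supply options.name, or give the function a name");
  }
  return [name, ...args];
}

async function computeStockQuote(symbol: string): Promise<number> {
  return 100; // Placeholder value, as in the example near the top.
}

const defaultKey = keyFor({}, computeStockQuote, ["AAPL"]);
const namedKey = keyFor({ name: "quotes" }, computeStockQuote, ["AAPL"]);
```

Under these assumptions, two wrapped functions that share a name also share cache entries, which is one reason an explicit `name` option is useful.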

Please send us your pull requests!

[6.0.0-beta.5] - 2020-08-26

undefined fields to be used as CacheKey values. Any fields containing undefined will be omitted during serialization.

