Security News

/Research

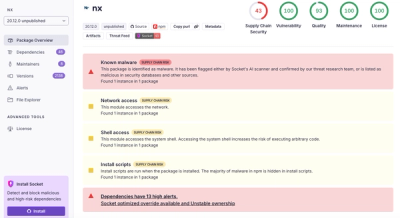

Wallet-Draining npm Package Impersonates Nodemailer to Hijack Crypto Transactions

Malicious npm package impersonates Nodemailer and drains wallets by hijacking crypto transactions across multiple blockchains.

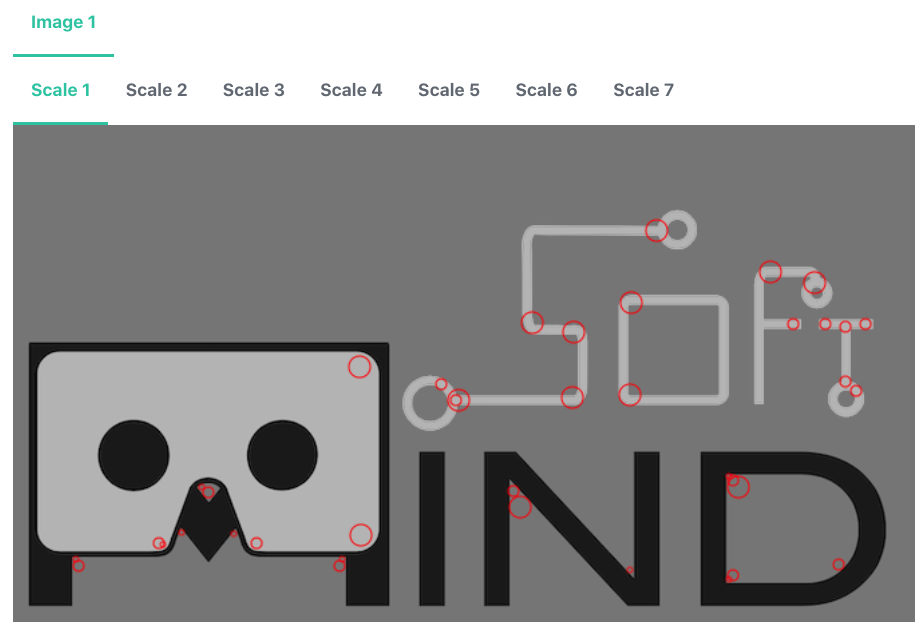

MindAR is a web augmented reality library. Highlighted features include:

:star: Supports image tracking and face tracking. For location or fiducial-marker tracking, check out AR.js

:star: Written in pure JavaScript, end to end, from the underlying computer vision engine to the frontend

:star: Uses the GPU (through WebGL) and web workers for performance

:star: Developer friendly and easy to set up. With the AFRAME extension, you can create an app with only 10 lines of code

MindAR is the only actively maintained web AR SDK that offers features comparable to commercial alternatives. This library is currently maintained by me as an individual developer. To raise funds for continued development, and to provide timely support and responses to issues, here is a list of related projects and services that you can support.

Unity WebAR Foundation

WebAR Foundation is a Unity package that allows Unity developers to build WebGL-platform AR applications. It acts as a Unity plugin that wraps around popular web SDKs. If you are a Unity developer, check it out! https://github.com/hiukim/unity-webar-foundation

Web AR Development Course

I'm offering a Web AR development course on Udemy. It's a comprehensive guide to Web AR development, not limited to MindAR. Check it out if you are interested: https://www.udemy.com/course/introduction-to-web-ar-development/?referralCode=D2565F4CA6D767F30D61

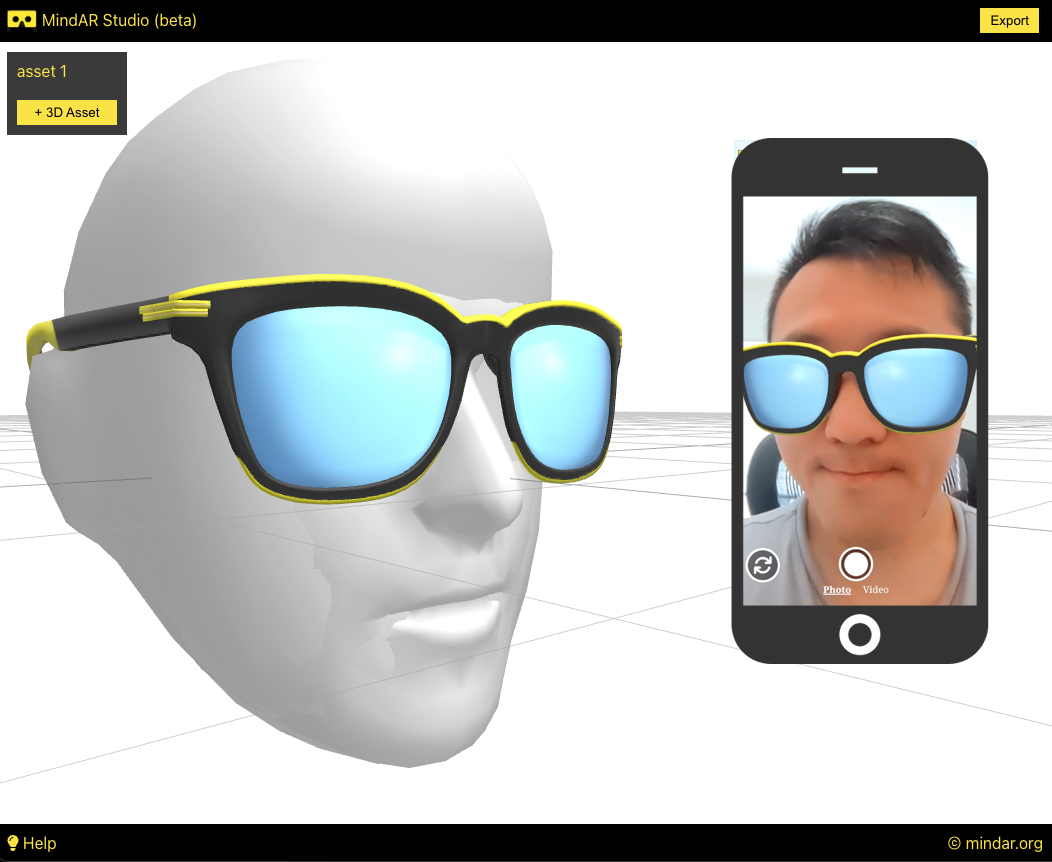

MindAR Studio

MindAR Studio allows you to build Face Tracking AR without coding. You can build AR effects through a drag-and-drop editor and export static webpages for self-hosting. Free to use! Check it out if you are interested! https://studio.mindar.org

Pictarize

Pictarize is a hosted platform for creating and publishing Image Tracking AR applications. Free to use! Check it out if you are interested! https://pictarize.com

Official Documentation: https://hiukim.github.io/mind-ar-js-doc

Image Tracking - Basic Example

Demo video: https://youtu.be/hgVB9HpQpqY

Try it yourself: https://hiukim.github.io/mind-ar-js-doc/examples/basic/

Image Tracking - Multiple Targets Example

Try it yourself: https://hiukim.github.io/mind-ar-js-doc/examples/multi-tracks

Image Tracking - Interactive Example

Demo video: https://youtu.be/gm57gL1NGoQ

Try it yourself: https://hiukim.github.io/mind-ar-js-doc/examples/interative

Face Tracking - Virtual Try-On Example

Try it yourself: https://hiukim.github.io/mind-ar-js-doc/face-tracking-examples/tryon

Face Tracking - Face Mesh Effect

Try it yourself: https://hiukim.github.io/mind-ar-js-doc/more-examples/threejs-face-facemesh

More examples can be found here: https://hiukim.github.io/mind-ar-js-doc/examples/summary

Learn how to build the Basic example above in 5 minutes with a plain text editor!

Quick Start Guide: https://hiukim.github.io/mind-ar-js-doc/quick-start/overview

To give you a quick idea, this is the complete source code for the Basic example. It's a static HTML page, so you can host it anywhere.

    <html>
      <head>
        <meta name="viewport" content="width=device-width, initial-scale=1" />
        <script src="https://cdn.jsdelivr.net/gh/hiukim/mind-ar-js@1.1.4/dist/mindar-image.prod.js"></script>
        <script src="https://aframe.io/releases/1.2.0/aframe.min.js"></script>
        <script src="https://cdn.jsdelivr.net/gh/hiukim/mind-ar-js@1.1.4/dist/mindar-image-aframe.prod.js"></script>
      </head>
      <body>
        <a-scene mindar-image="imageTargetSrc: https://cdn.jsdelivr.net/gh/hiukim/mind-ar-js@1.1.4/examples/image-tracking/assets/card-example/card.mind;" color-space="sRGB" renderer="colorManagement: true, physicallyCorrectLights" vr-mode-ui="enabled: false" device-orientation-permission-ui="enabled: false">
          <a-assets>
            <img id="card" src="https://cdn.jsdelivr.net/gh/hiukim/mind-ar-js@1.1.4/examples/image-tracking/assets/card-example/card.png" />
            <a-asset-item id="avatarModel" src="https://cdn.jsdelivr.net/gh/hiukim/mind-ar-js@1.1.4/examples/image-tracking/assets/card-example/softmind/scene.gltf"></a-asset-item>
          </a-assets>
          <a-camera position="0 0 0" look-controls="enabled: false"></a-camera>
          <a-entity mindar-image-target="targetIndex: 0">
            <a-plane src="#card" position="0 0 0" height="0.552" width="1" rotation="0 0 0"></a-plane>
            <a-gltf-model rotation="0 0 0" position="0 0 0.1" scale="0.005 0.005 0.005" src="#avatarModel" animation="property: position; to: 0 0.1 0.1; dur: 1000; easing: easeInOutQuad; loop: true; dir: alternate"></a-gltf-model>
          </a-entity>
        </a-scene>
      </body>
    </html>
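To react when the card enters or leaves the camera view, a script can listen on the entity that carries the mindar-image-target attribute. The sketch below assumes the targetFound and targetLost events described in the MindAR documentation; the watchTarget helper and its onChange callback are hypothetical names introduced only for this illustration. In the page above, it would go in a script tag at the end of the body.

```javascript
// Report tracking state changes for one MindAR image target.
// `targetEl` is the element carrying the mindar-image-target attribute.
// `watchTarget` and `onChange` are hypothetical names used for this sketch.
function watchTarget(targetEl, onChange) {
  const onFound = () => onChange(true);
  const onLost = () => onChange(false);
  targetEl.addEventListener("targetFound", onFound);
  targetEl.addEventListener("targetLost", onLost);

  // Return a cleanup function so callers can stop listening later.
  return () => {
    targetEl.removeEventListener("targetFound", onFound);
    targetEl.removeEventListener("targetLost", onLost);
  };
}

// Browser-only wiring: skipped when no DOM is available.
if (typeof document !== "undefined") {
  const targetEl = document.querySelector("[mindar-image-target]");
  if (targetEl) {
    watchTarget(targetEl, (visible) => {
      console.log(visible ? "target found" : "target lost");
    });
  }
}
```

Returning a cleanup function keeps the helper usable in pages that add and remove targets dynamically.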

You can compile your own target images right in the browser using this friendly compiler tool. If you don't know what a target image is, go through the Quick Start guide first.

https://hiukim.github.io/mind-ar-js-doc/tools/compile

Support more augmented reality features, such as hand tracking, body tracking, and plane tracking

Research different state-of-the-art algorithms to improve tracking accuracy and performance

More educational references.

I personally don't come from a strong computer vision background, and I'm having a hard time improving the tracking accuracy. I could really use some help from computer vision experts. Please reach out to discuss.

JavaScript experts are also welcome to help with the non-engine parts, such as improving the APIs.

If you are a graphic designer or 3D artist, you can contribute to the visuals. Even if you just use MindAR to build some cool applications, please show us!

Whatever you can think of. It's an open-source web AR framework for everyone!

The /src folder contains the majority of the source code.

The /examples folder contains examples to test out during development.

To build, run > npm run build. The build will be generated in the dist folder.

To develop the threeJS version, run > npm run watch. This watches for file changes in the src folder and continuously builds the artefacts into dist-dev.

To develop the AFRAME version, you will need to run > npm run build-dev every time you make changes. The --watch parameter currently fails to generate mindar-XXX-aframe.js automatically.

All the examples in the examples folder are configured to use this development build, so you can open them in a browser to start debugging or developing.

The examples should run in a desktop browser, and they are just HTML files, so it's easy to start development. However, because they require camera access, you need a webcam. You also need to serve the HTML files from a localhost web server. Simply opening the files won't work.

For example, you can install this chrome plugin to start a local server: https://chrome.google.com/webstore/detail/web-server-for-chrome/ofhbbkphhbklhfoeikjpcbhemlocgigb?hl=en
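One alternative to a browser extension is a small Node.js script. The sketch below is not part of MindAR: the file name serve.js, the helper names, and the content-type list are assumptions made for illustration. It serves the current directory over http://localhost, which browsers generally treat as a secure context, so camera access should be available without HTTPS.

```javascript
// serve.js (hypothetical file name): a minimal static file server sketch.
const http = require("http");
const fs = require("fs");
const path = require("path");

// Content types for file kinds the examples are likely to load (assumed list).
const CONTENT_TYPES = {
  ".html": "text/html",
  ".js": "text/javascript",
  ".css": "text/css",
  ".json": "application/json",
  ".png": "image/png",
  ".jpg": "image/jpeg",
  ".gltf": "model/gltf+json",
};

function contentTypeFor(filePath) {
  const ext = path.extname(filePath).toLowerCase();
  return CONTENT_TYPES[ext] || "application/octet-stream";
}

// Map a request URL to a file under rootDir, or null if it would escape rootDir.
function resolveRequestPath(rootDir, requestUrl) {
  const root = path.resolve(rootDir);
  let pathname;
  try {
    pathname = decodeURIComponent(new URL(requestUrl, "http://localhost").pathname);
  } catch (err) {
    return null; // malformed URL or percent-encoding
  }
  const target = path.resolve(root, "." + pathname);
  const insideRoot = target === root || target.startsWith(root + path.sep);
  return insideRoot ? target : null;
}

function createStaticServer(rootDir) {
  return http.createServer((req, res) => {
    let filePath = resolveRequestPath(rootDir, req.url);
    if (filePath === null) {
      res.writeHead(403);
      res.end("Forbidden");
      return;
    }
    fs.stat(filePath, (statErr, stats) => {
      // Serve index.html when the request points at a directory.
      if (!statErr && stats.isDirectory()) {
        filePath = path.join(filePath, "index.html");
      }
      fs.readFile(filePath, (readErr, data) => {
        if (readErr) {
          res.writeHead(404);
          res.end("Not found");
          return;
        }
        res.writeHead(200, { "Content-Type": contentTypeFor(filePath) });
        res.end(data);
      });
    });
  });
}

// Start only when a port is passed on the command line: node serve.js 8080
const port = Number(process.argv[2]);
if (port) {
  createStaticServer(process.cwd()).listen(port, () => {
    console.log("Serving " + process.cwd() + " at http://localhost:" + port);
  });
}
```

Run from the repository root with > node serve.js 8080, this would make the examples reachable under http://localhost:8080/examples/. The path check rejects requests that try to climb out of the served folder, which matters once the server is shared with other devices on the network.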

You will most likely want to test on mobile devices as well. In that case, it's better to set up your development environment so that your localhost web server is reachable from your mobile devices. If that is difficult, for example because you are behind a firewall, you could use something like ngrok (https://ngrok.com/) to tunnel the requests. This is not an ideal solution, though, because the development build of MindAR is not small (>10Mb), and tunneling with the free version of ngrok can be slow.

This library uses tensorflowjs (https://github.com/tensorflow/tfjs) for its WebGL backend. Yes, tensorflow is a machine learning library, but we don't use it for machine learning! :) Tensorflowjs has a very solid WebGL engine, which allows us to write general-purpose GPU applications (in this case, our AR application).

The core detection and tracking algorithm is written as custom operations in tensorflowjs. They are like shader programs. It might look intimidating at first, but it's actually not that difficult to understand.

The computer vision idea is borrowed from artoolkit (i.e. https://github.com/artoolkitx/artoolkit5). Unfortunately, that library doesn't seem to be maintained anymore.

Face Tracking is based on the mediapipe face mesh model (i.e. https://google.github.io/mediapipe/solutions/face_mesh.html)

