Research

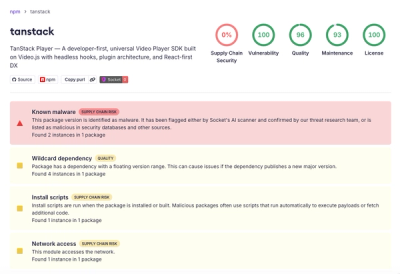

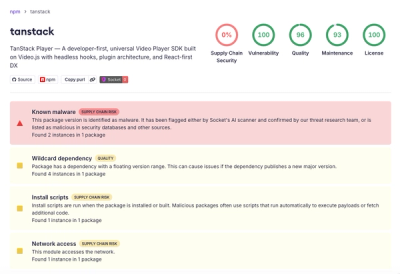

Malicious npm Package Brand-Squats TanStack to Exfiltrate Environment Variables

A brand-squatted TanStack npm package used postinstall scripts to steal .env files and exfiltrate developer secrets to an attacker-controlled endpoint.

sitemap-stream-parser

A streaming parser for sitemap files. It can handle gigabytes of deeply nested sitemaps containing hundreds of URLs, and its memory usage never goes much above 100 MB.
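To illustrate why a streaming approach keeps memory bounded, here is a minimal sketch in plain JavaScript. It assumes the sitemap XML arrives as a series of string chunks. `createLocExtractor` is a hypothetical name, and this is not how sitemap-stream-parser is implemented internally. The sketch reports each `<loc>` value as soon as it is complete and carries over only the unfinished tail between chunks, so it never holds the whole file. It skips CDATA sections and does not decode XML entities.

```javascript
// Hypothetical sketch, NOT the library's implementation: pull <loc> values
// out of sitemap XML that arrives in chunks, carrying over only the
// unfinished tail between chunks instead of buffering the whole file.
// Limitations: no CDATA handling and no XML entity decoding.
function createLocExtractor(onLoc) {
  let pending = ''; // text carried over from earlier chunks

  return function write(chunk) {
    pending += chunk;
    const re = /<loc>\s*([^<]*?)\s*<\/loc>/g;
    let consumed = 0;
    let match;
    while ((match = re.exec(pending)) !== null) {
      onLoc(match[1]); // report each complete <loc> as soon as it is seen
      consumed = re.lastIndex;
    }
    const rest = pending.slice(consumed);
    // An unfinished <loc> element may be completed by the next chunk, so keep
    // it. Otherwise keep just enough characters (4) to catch a "<loc>" tag
    // that is split across a chunk boundary.
    const open = rest.lastIndexOf('<loc>');
    pending = open !== -1 ? rest.slice(open) : rest.slice(-4);
  };
}

// Usage: feed the XML in deliberately awkward pieces.
const found = [];
const write = createLocExtractor((url) => found.push(url));
[
  '<urlset><url><lo',
  'c>http://example.com/a</loc></url><url><loc>http://exa',
  'mple.com/b</l',
  'oc></url></urlset>',
].forEach(write);
// found → ['http://example.com/a', 'http://example.com/b']
```

The carried-over buffer is at most one partial element plus one chunk, which is the property that lets a streaming parser's memory stay flat as input size grows.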

The main way to extract a site's URLs is the parseSitemaps(urls, url_cb, done) method. You can call it with either a single URL or an array of URLs. url_cb is called for every URL that is found, and done is passed an error and/or a list of all the sitemaps that were checked.

```javascript
var sitemaps = require('sitemap-stream-parser');

sitemaps.parseSitemaps('http://example.com/sitemap.xml', console.log, function(err, sitemaps) {
  console.log('All done!');
});
```

Or, with an array of URLs:

```javascript
var sitemaps = require('sitemap-stream-parser');

var urls = ['http://example.com/sitemap-posts.xml', 'http://example.com/sitemap-pages.xml'];
var all_urls = []; // declared with var to avoid creating an implicit global

sitemaps.parseSitemaps(urls, function(url) { all_urls.push(url); }, function(err, sitemaps) {
  console.log(all_urls);
  console.log('All done!');
});
```
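The examples above collect URLs through callbacks. If you prefer Promises or async/await, the callback API can be wrapped. Below is a minimal sketch. `collectUrls` is a hypothetical helper, not part of the package, and it relies only on the parseSitemaps(urls, url_cb, done) signature documented above. The parser object is passed in so the sketch runs without network access. With the real package you would pass `require('sitemap-stream-parser')`. Here it is exercised with a stub that mimics the documented callback contract.

```javascript
// Hypothetical helper (not part of sitemap-stream-parser): wrap the
// documented parseSitemaps(urls, url_cb, done) callback API in a Promise.
// `parser` is injected; in real code pass require('sitemap-stream-parser').
function collectUrls(parser, urls) {
  return new Promise((resolve, reject) => {
    const found = [];
    parser.parseSitemaps(
      urls,
      (url) => { found.push(url); }, // url_cb: called once per URL found
      (err, checked) => {
        // done: receives an error and/or the list of sitemaps that were checked
        if (err) return reject(err);
        resolve({ urls: found, sitemaps: checked });
      }
    );
  });
}

// Usage with a stub parser that follows the same callback contract.
const stubParser = {
  parseSitemaps(urls, urlCb, done) {
    urlCb('http://example.com/a');
    urlCb('http://example.com/b');
    done(null, [].concat(urls));
  },
};

collectUrls(stubParser, 'http://example.com/sitemap.xml')
  .then((result) => {
    // result.urls → ['http://example.com/a', 'http://example.com/b']
    console.log(result.urls);
  })
  .catch(console.error);
```

This wrapper rejects whenever an error is reported, even if a partial list of sitemaps is passed alongside it. If you need partial results on failure, resolve with both values instead.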

Sometimes sites advertise their sitemaps in their robots.txt file. To check whether that is the case, use the sitemapsInRobots(url, cb) method, which parses robots.txt and passes any sitemap URLs it finds to cb. The two methods are easy to combine:

```javascript
var sitemaps = require('sitemap-stream-parser');

sitemaps.sitemapsInRobots('http://example.com/robots.txt', function(err, urls) {
  // Stop if robots.txt could not be read or lists no sitemaps
  if (err || !urls || urls.length === 0)
    return;

  sitemaps.parseSitemaps(urls, console.log, function(err, sitemaps) {
    console.log(sitemaps);
  });
});
```
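To show what "advertising sitemaps in robots.txt" means in practice, here is a minimal sketch that extracts `Sitemap:` lines from robots.txt text. `sitemapsFromRobotsTxt` is a hypothetical function, not the library's code. It assumes the robots.txt body has already been downloaded as a string, matches the field name case-insensitively, and treats anything after `#` as a comment.

```javascript
// Hypothetical sketch (not the library's code): list the sitemap URLs that a
// robots.txt body advertises via "Sitemap:" lines.
function sitemapsFromRobotsTxt(robotsText) {
  const urls = [];
  for (const rawLine of robotsText.split(/\r?\n/)) {
    // Drop comments ('#' to end of line) and surrounding whitespace
    const line = rawLine.replace(/#.*$/, '').trim();
    // Field name is matched case-insensitively; the value is the first token
    const match = /^sitemap\s*:\s*(\S+)/i.exec(line);
    if (match) urls.push(match[1]);
  }
  return urls;
}

// Usage
const robots = [
  'User-agent: *',
  'Disallow: /private/',
  'Sitemap: http://example.com/sitemap-posts.xml',
  'sitemap: http://example.com/sitemap-pages.xml # pages',
].join('\n');
console.log(sitemapsFromRobotsTxt(robots));
// → ['http://example.com/sitemap-posts.xml', 'http://example.com/sitemap-pages.xml']
```

The resulting array has the same shape as the `urls` argument that parseSitemaps accepts.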

Get a list of URLs from one or more sitemaps

The npm package sitemap-stream-parser receives a total of 467 weekly downloads, so its popularity is classified as not popular.

We found that sitemap-stream-parser has an unhealthy version release cadence and level of project activity, because the last version was released a year ago. It has one open source maintainer collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Research

A brand-squatted TanStack npm package used postinstall scripts to steal .env files and exfiltrate developer secrets to an attacker-controlled endpoint.

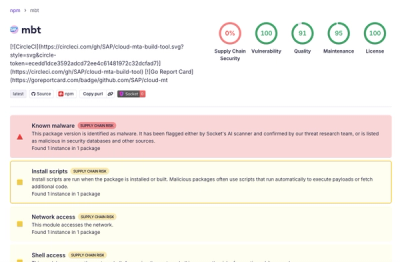

Research

Compromised SAP CAP npm packages download and execute unverified binaries, creating urgent supply chain risk for affected developers and CI/CD environments.

Company News

Socket has acquired Secure Annex to expand extension security across browsers, IDEs, and AI tools.