aioscpy

A powerful, high-performance asynchronous web crawling and scraping framework built on Python's asyncio ecosystem.

Aioscpy is a fast high-level web crawling and web scraping framework, used to crawl websites and extract structured data from their pages. It draws inspiration from Scrapy and scrapy_redis but is designed from the ground up to leverage the full power of asynchronous programming.

pip install aioscpy

pip install aioscpy[all]

pip install aioscpy[aiohttp,httpx]

pip install git+https://github.com/ihandmine/aioscpy

aioscpy startproject myproject

cd myproject

aioscpy genspider myspider

This will create a basic spider in the spiders directory.

from aioscpy.spider import Spider


class QuotesSpider(Spider):
    name = 'quotes'
    custom_settings = {
        "SPIDER_IDLE": False
    }
    start_urls = [
        'https://quotes.toscrape.com/tag/humor/',
    ]

    async def parse(self, response):
        for quote in response.css('div.quote'):
            yield {
                'author': quote.xpath('span/small/text()').get(),
                'text': quote.css('span.text::text').get(),
            }

        next_page = response.css('li.next a::attr("href")').get()
        if next_page is not None:
            yield response.follow(next_page, self.parse)
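Because `parse` is an async generator, whatever drives the spider has to iterate it with `async for` and separate scraped items from follow-up requests. The snippet below is a minimal standard-library sketch of that idea only; it is not aioscpy's engine. `FakeRequest`, `consume`, and the dictionary-shaped `page` are hypothetical stand-ins introduced for illustration.

```python
import asyncio


class FakeRequest:
    """Hypothetical stand-in for a follow-up request object."""

    def __init__(self, url):
        self.url = url


async def parse(page):
    # Same shape as a spider callback: yield items first, then a follow-up.
    for quote in page["quotes"]:
        yield {"author": quote["author"], "text": quote["text"]}
    if page.get("next") is not None:
        yield FakeRequest(page["next"])


async def consume(page):
    # Drive the async generator and sort its output by type.
    items, requests = [], []
    async for result in parse(page):
        if isinstance(result, dict):
            items.append(result)      # scraped data
        else:
            requests.append(result)   # more pages to fetch
    return items, requests


page = {
    "quotes": [{"author": "Jane Austen", "text": "A quote."}],
    "next": "/page/2/",
}
items, requests = asyncio.run(consume(page))
```

Here `items` ends up holding the yielded dictionaries and `requests` the single follow-up, which is the split a real scheduler and item pipeline would act on.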

aioscpy onespider single_quotes

from aioscpy.spider import Spider
from anti_header import Header
from pprint import pprint, pformat


class SingleQuotesSpider(Spider):
    name = 'single_quotes'
    custom_settings = {
        "SPIDER_IDLE": False
    }
    start_urls = [
        'https://quotes.toscrape.com/',
    ]

    async def process_request(self, request):
        request.headers = Header(url=request.url, platform='windows', connection=True).random
        return request

    async def process_response(self, request, response):
        if response.status in [404, 503]:
            return request
        return response

    async def process_exception(self, request, exc):
        raise exc

    async def parse(self, response):
        for quote in response.css('div.quote'):
            yield {
                'author': quote.xpath('span/small/text()').get(),
                'text': quote.css('span.text::text').get(),
            }

        next_page = response.css('li.next a::attr("href")').get()
        if next_page is not None:
            yield response.follow(next_page, callback=self.parse)

    async def process_item(self, item):
        self.logger.info("{item}", **{'item': pformat(item)})


if __name__ == '__main__':
    quotes = SingleQuotesSpider()
    quotes.start()

# Run a spider from a project

aioscpy crawl quotes

# Run a single spider script

aioscpy runspider quotes.py

from aioscpy.crawler import call_grace_instance
from aioscpy.utils.tools import get_project_settings


# Method 1: Load all spiders from a directory
def load_spiders_from_directory():
    process = call_grace_instance("crawler_process", get_project_settings())
    process.load_spider(path='./spiders')
    process.start()


# Method 2: Run a specific spider by name
def run_specific_spider():
    process = call_grace_instance("crawler_process", get_project_settings())
    process.crawl('myspider')
    process.start()


if __name__ == '__main__':
    run_specific_spider()

Aioscpy can be configured through the settings.py file in your project. Here are the most important settings:

# Maximum number of concurrent items being processed

CONCURRENT_ITEMS = 100

# Maximum number of concurrent requests

CONCURRENT_REQUESTS = 16

# Maximum number of concurrent requests per domain

CONCURRENT_REQUESTS_PER_DOMAIN = 8

# Maximum number of concurrent requests per IP

CONCURRENT_REQUESTS_PER_IP = 0

# Delay between requests (in seconds)

DOWNLOAD_DELAY = 0

# Timeout for requests (in seconds)

DOWNLOAD_TIMEOUT = 20

# Whether to randomize the download delay

RANDOMIZE_DOWNLOAD_DELAY = True

# HTTP backend to use

DOWNLOAD_HANDLER = "aioscpy.core.downloader.handlers.httpx.HttpxDownloadHandler"

# Other options:

# DOWNLOAD_HANDLER = "aioscpy.core.downloader.handlers.aiohttp.AioHttpDownloadHandler"

# DOWNLOAD_HANDLER = "aioscpy.core.downloader.handlers.requests.RequestsDownloadHandler"

# Scheduler to use (memory-based or Redis-based)

SCHEDULER = "aioscpy.core.scheduler.memory.MemoryScheduler"

# For distributed crawling:

# SCHEDULER = "aioscpy.core.scheduler.redis.RedisScheduler"

# Redis connection settings (for Redis scheduler)

REDIS_URI = "redis://localhost:6379"

QUEUE_KEY = "%(spider)s:queue"
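To give a feel for what a limit such as `CONCURRENT_REQUESTS` means in an asyncio program, the sketch below caps the number of in-flight downloads with `asyncio.Semaphore`. It illustrates the general technique using only the standard library and is not a description of how aioscpy enforces the setting internally. `fake_download`, `crawl`, and the `example.com` URLs are hypothetical.

```python
import asyncio

CONCURRENT_REQUESTS = 16  # same value as the setting above


async def fake_download(url, limiter, stats):
    # Only `limit` coroutines can be inside this block at once.
    async with limiter:
        stats["active"] += 1
        stats["peak"] = max(stats["peak"], stats["active"])
        await asyncio.sleep(0.01)  # stands in for network I/O
        stats["active"] -= 1
    return url


async def crawl(urls, limit=CONCURRENT_REQUESTS):
    limiter = asyncio.Semaphore(limit)
    stats = {"active": 0, "peak": 0}
    results = await asyncio.gather(
        *(fake_download(url, limiter, stats) for url in urls)
    )
    return results, stats["peak"]


urls = [f"https://example.com/page/{i}" for i in range(50)]
results, peak = asyncio.run(crawl(urls))
```

All 50 URLs complete, but `peak` never exceeds the limit, which is the trade-off these settings control: higher values finish sooner at the cost of more load on the target site.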

Aioscpy provides a rich API for working with responses:

# Using CSS selectors

title = response.css('title::text').get()

all_links = response.css('a::attr(href)').getall()

# Using XPath

title = response.xpath('//title/text()').get()

all_links = response.xpath('//a/@href').getall()

# Follow a link, reusing the current callback

yield response.follow('next-page.html', self.parse)

# Follow a link with a different callback

yield response.follow('details.html', self.parse_details)

# Follow all links matching a CSS selector

yield from response.follow_all(css='a.product::attr(href)', callback=self.parse_product)
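The `follow` calls above are given relative links such as `'next-page.html'`. In Scrapy, `response.follow` resolves such links against the URL of the current response; assuming aioscpy mirrors that behavior, the resolution step can be reproduced with the standard library's `urljoin`. The `resolve` helper is hypothetical and only wraps that call.

```python
from urllib.parse import urljoin


def resolve(base_url, href):
    # Join a link found on a page with the URL the page was fetched from.
    return urljoin(base_url, href)


base = "https://quotes.toscrape.com/tag/humor/"

absolute_path = resolve(base, "/tag/humor/page/2/")
relative_file = resolve(base, "next-page.html")
full_url = resolve(base, "https://example.com/a")

print(absolute_path)  # https://quotes.toscrape.com/tag/humor/page/2/
print(relative_file)  # https://quotes.toscrape.com/tag/humor/next-page.html
print(full_url)       # https://example.com/a
```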

aioscpy -h

To enable distributed crawling with Redis:

SCHEDULER = "aioscpy.core.scheduler.redis.RedisScheduler"

REDIS_URI = "redis://localhost:6379"

QUEUE_KEY = "%(spider)s:queue"
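The `QUEUE_KEY` value is a Python `%`-style template with a named `spider` placeholder. A plausible reading, following the scrapy_redis convention that aioscpy draws on, is that the spider's name is substituted in so each spider gets its own Redis key. The sketch below shows only the string expansion; `queue_key_for` is a hypothetical helper, and the exact point where aioscpy performs this substitution is an assumption.

```python
QUEUE_KEY = "%(spider)s:queue"


def queue_key_for(spider_name, template=QUEUE_KEY):
    # Expand the named placeholder with the spider's name.
    return template % {"spider": spider_name}


quotes_key = queue_key_for("quotes")
print(quotes_key)  # quotes:queue
```

Under that reading, every worker process running the `quotes` spider against the same `REDIS_URI` would share the `quotes:queue` key.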

Please submit your suggestions to the owner by creating an issue.

FAQs

An asyncio + aiolibs crawler framework that imitates Scrapy.

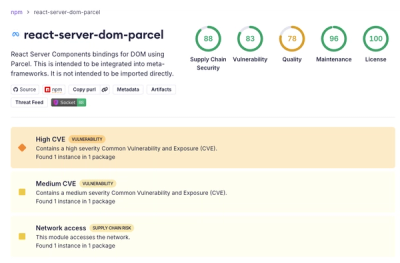

We found that aioscpy demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 1 open source maintainer collaborating on the project.
