
apache-airflow-providers-fastetl


FastETL framework, modern, versatile, does almost everything.

This text is also available in Portuguese: 🇧🇷LEIAME.md.

FastETL is a plugins package for Airflow for building data pipelines for a number of common scenarios.

Main features:

This framework is maintained by a network of developers from many teams at the Ministry of Management and Innovation in Public Services and is the cumulative result of using Apache Airflow, a free and open source tool, starting in 2019.

For government: FastETL is widely used for replication of data queried via Quartzo (DaaS) from Serpro.

FastETL implements the standards for Airflow plugins. To install it, simply add the apache-airflow-providers-fastetl package to the Python dependencies of your Airflow environment.

Or install it with:

pip install apache-airflow-providers-fastetl
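To confirm that the package is visible to the Python interpreter your Airflow workers use, a quick check with the standard library's importlib.metadata can help. This is a minimal sketch and not part of FastETL; the helper name installed_version is hypothetical.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def installed_version(dist_name: str = "apache-airflow-providers-fastetl") -> Optional[str]:
    """Return the installed version of dist_name, or None if it is not installed.

    Hypothetical helper: it only queries the distribution metadata of the
    current Python environment and does not import FastETL itself.
    """
    try:
        return version(dist_name)
    except PackageNotFoundError:
        return None


if __name__ == "__main__":
    v = installed_version()
    print(f"FastETL provider version: {v}" if v else "FastETL provider is not installed")
```

Run it with the same interpreter that Airflow uses; a different virtualenv may report that the package is missing.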

To see an example of an Apache Airflow container that uses FastETL, check out the airflow2-docker repository.

To ensure appropriate results, please make sure to install the msodbcsql17 and unixodbc-dev libraries on your Apache Airflow workers.
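A rough way to verify these prerequisites on a worker is sketched below. It assumes that unixODBC keeps its driver registry in /etc/odbcinst.ini (the usual default on Linux) and that msodbcsql17 registers itself under the name "ODBC Driver 17 for SQL Server"; both are assumptions about a typical installation, and the helper names are hypothetical, not part of FastETL.

```python
import configparser
import ctypes.util
from pathlib import Path

# Assumption: the driver name registered by Microsoft's msodbcsql17 package.
MSSQL_DRIVER_17 = "ODBC Driver 17 for SQL Server"


def has_odbc_driver(odbcinst_text: str, driver_name: str = MSSQL_DRIVER_17) -> bool:
    """Return True if driver_name is a section in odbcinst.ini-style content."""
    # interpolation=None: driver paths may contain '%'; strict=False tolerates
    # duplicate sections, which some hand-edited registries contain.
    parser = configparser.ConfigParser(interpolation=None, strict=False)
    parser.read_string(odbcinst_text)
    return parser.has_section(driver_name)


def check_workers_odbc(path: str = "/etc/odbcinst.ini") -> bool:
    """Return True if the registry file exists and lists the SQL Server driver."""
    registry = Path(path)
    if not registry.is_file():
        return False
    return has_odbc_driver(registry.read_text())


def has_unixodbc() -> bool:
    """Return True if the dynamic linker can locate libodbc (from unixODBC)."""
    return ctypes.util.find_library("odbc") is not None


if __name__ == "__main__":
    print("unixODBC library found:", has_unixodbc())
    print("msodbcsql17 registered:", check_workers_odbc())
```

If the registry lives elsewhere on your workers, `odbcinst -j` (from unixODBC) prints the paths it actually uses.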

The test suite uses Docker containers to simulate a complete usage environment, including Airflow and the databases. For that reason, to run the tests you first need to install Docker and docker-compose.

For instructions on how to do this, see the official Docker documentation.

To build the containers:

make setup

To run the tests, use:

make setup && make tests

To shut down the environment, use:

make down

The main FastETL feature is the DbToDbOperator. It copies data between PostgreSQL and MSSQL databases. MySQL is also supported as a source.

Here is an example:

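from datetime import datetime

from airflow import DAG
from fastetl.operators.db_to_db_operator import DbToDbOperator

# Placeholder values (illustrative only): replace them with your own
# Airflow connection IDs, schemas and table name.
airflow_source_conn_id = "source_db_conn"
airflow_dest_conn_id = "dest_db_conn"
source_schema = "public"
dest_schema = "dbo"
table_name = "my_table"

default_args = {
    "start_date": datetime(2023, 4, 1),
}

dag = DAG(
    "copy_db_to_db_example",
    default_args=default_args,
    schedule_interval=None,  # run only when triggered manually
)

t0 = DbToDbOperator(
    task_id="copy_data",
    source={
        "conn_id": airflow_source_conn_id,
        "schema": source_schema,
        "table": table_name,
    },
    destination={
        "conn_id": airflow_dest_conn_id,
        "schema": dest_schema,
        "table": table_name,
    },
    destination_truncate=True,  # empty the destination table before copying
    copy_table_comments=True,   # also copy table and column comments
    chunksize=10000,            # number of rows transferred per batch
    dag=dag,
)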
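The destination_truncate and chunksize parameters above suggest a truncate-then-copy-in-batches pattern. The sketch below illustrates that general pattern with two in-memory SQLite databases from the standard library; it is not FastETL's implementation (which targets PostgreSQL, MSSQL and MySQL through Airflow connections), and the function copy_table is hypothetical.

```python
import sqlite3


def copy_table(
    src: sqlite3.Connection,
    dst: sqlite3.Connection,
    table: str,
    destination_truncate: bool = True,
    chunksize: int = 10000,
) -> int:
    """Copy every row of `table` from src to dst in batches; return rows copied.

    Hypothetical illustration of a chunked table copy. Assumes the table
    already exists in both databases with the same column order.
    """
    if destination_truncate:
        # SQLite has no TRUNCATE; an unqualified DELETE has the same effect.
        dst.execute(f'DELETE FROM "{table}"')
    cur = src.execute(f'SELECT * FROM "{table}"')
    placeholders = ", ".join("?" * len(cur.description))
    insert_sql = f'INSERT INTO "{table}" VALUES ({placeholders})'
    copied = 0
    while True:
        rows = cur.fetchmany(chunksize)  # read at most `chunksize` rows
        if not rows:
            break
        dst.executemany(insert_sql, rows)
        copied += len(rows)
    dst.commit()  # the delete and all inserts are committed together
    return copied


if __name__ == "__main__":
    src = sqlite3.connect(":memory:")
    dst = sqlite3.connect(":memory:")
    for conn in (src, dst):
        conn.execute("CREATE TABLE people (id INTEGER, name TEXT)")
    src.executemany("INSERT INTO people VALUES (?, ?)", [(i, f"p{i}") for i in range(25)])
    src.commit()
    dst.execute("INSERT INTO people VALUES (999, 'stale')")
    dst.commit()
    print(copy_table(src, dst, "people", chunksize=10))
```

Reading in batches keeps memory use bounded by chunksize rather than by the table size, which is the usual reason to expose such a parameter.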

More details about the parameters and the workings of DbToDbOperator can be found in the following files:

To be written in the CONTRIBUTING.md document (issue #4).

FAQs

FastETL custom package Apache Airflow provider.

We found that apache-airflow-providers-fastetl demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 5 open source maintainers collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Product

Automatically fix and test dependency updates with socket fix—a new CLI tool that turns CVE alerts into safe, automated upgrades.

Security News

CISA denies CVE funding issues amid backlash over a new CVE foundation formed by board members, raising concerns about transparency and program governance.

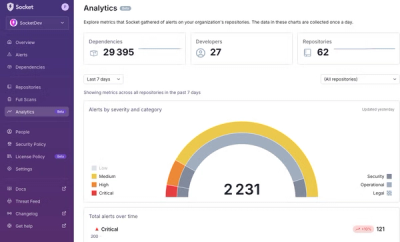

Product

We’re excited to announce a powerful new capability in Socket: historical data and enhanced analytics.