dd-import

A utility to (re-)import findings and language data into DefectDojo

Findings and languages can be imported into DefectDojo via an API. To make automated build and deploy pipelines easier to implement, dd-import provides some convenience functions:

- build_id, commit_hash and branch_tag can be updated when uploading findings.
- There is no need to deal with curl and its syntax within the pipeline. This makes pipelines shorter and more readable.

Python

dd-import can be installed with pip. Only Python 3.8 and up is supported.

pip install dd-import

The command dd-reimport-findings re-imports findings into DefectDojo. Even though the name suggests otherwise, you do not need to do an initial import first.

The command dd-import-languages imports language data that has been gathered with the tool cloc; see Languages and lines of code for more details.
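The pip-installed commands read their parameters from environment variables (listed in the parameter table further down), so they can also be driven from a Python script. The following is a minimal sketch, not part of dd-import itself: `run_dd_command` and `dry_run` are hypothetical helper names, the `DD_URL` value is a placeholder, and `DD_API_KEY` is assumed to be present in the surrounding environment already.

```python
import os
import subprocess


def run_dd_command(command, settings, runner=subprocess.run):
    """Run a dd-import console command with `settings` merged into the environment.

    `runner` defaults to subprocess.run; it can be swapped for a stand-in
    to inspect the call without executing anything.
    """
    env = dict(os.environ)   # keep PATH, DD_API_KEY and other inherited values
    env.update(settings)     # add or override the DD_* parameters
    return runner([command], env=env, check=True)


# Example values; the URL is a placeholder, not a real instance.
settings = {
    "DD_URL": "https://defectdojo.example.com",
    "DD_PRODUCT_TYPE_NAME": "Showcase",
    "DD_PRODUCT_NAME": "DefectDojo Importer",
    "DD_ENGAGEMENT_NAME": "GitLab",
    "DD_TEST_NAME": "Trivy",
    "DD_TEST_TYPE_NAME": "Trivy Scan",
    "DD_FILE_NAME": "trivy.json",
}


def dry_run(cmd, env, check):
    """Stand-in runner: report the command and the DD_* names it would receive."""
    return cmd, sorted(name for name in env if name.startswith("DD_"))


print(run_dd_command("dd-reimport-findings", settings, runner=dry_run))
```

Dropping the `runner=dry_run` argument would execute the real command, which requires dd-import to be installed and a reachable DefectDojo instance.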

Docker

Docker images can be found in https://hub.docker.com/r/maibornwolff/dd-import.

A re-import of findings can be started with

docker run --rm dd-import:latest dd-reimport-findings.sh

Importing languages data can be started with

docker run --rm dd-import:latest dd-import-languages.sh

Please note you have to set the environment variables as described below and mount a folder containing the file with scan results when running the docker container.

/usr/local/dd-import is the working directory of the docker image. All commands are located in the /usr/local/dd-import/bin folder.
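To illustrate how the environment variables and the mounted results folder fit together, here is a hedged sketch that only assembles the `docker run` argument list. The helper name `docker_run_args`, the host folder and the `/scans` mount point are assumptions chosen for illustration; with this mount, `DD_FILE_NAME` would have to point into the mounted folder, for example `/scans/trivy.json`. Passing `--env NAME` without a value asks Docker to forward the variable from the calling environment, which keeps the API key off the command line.

```python
import shlex


def docker_run_args(env_names, host_dir, script,
                    image="dd-import:latest", mount_point="/scans"):
    """Assemble a `docker run` argument list for one of the dd-import scripts.

    env_names   -- names of variables to forward from the calling environment
    host_dir    -- host folder containing the scan results
    mount_point -- hypothetical container path where host_dir is mounted read-only
    """
    args = ["docker", "run", "--rm"]
    for name in sorted(env_names):
        args += ["--env", name]  # value is taken from the caller's environment
    args += ["--volume", f"{host_dir}:{mount_point}:ro", image, script]
    return args


args = docker_run_args(
    ["DD_URL", "DD_API_KEY", "DD_PRODUCT_TYPE_NAME", "DD_PRODUCT_NAME",
     "DD_ENGAGEMENT_NAME", "DD_TEST_NAME", "DD_TEST_TYPE_NAME", "DD_FILE_NAME"],
    host_dir="/builds/scan-results",   # example host path
    script="dd-reimport-findings.sh",
)
print(shlex.join(args))  # shell-quoted command line for inspection
```

The list can be passed to `subprocess.run(args, check=True)` once Docker and the image are available.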

All parameters need to be provided as environment variables:

| Parameter | Re-import findings | Import languages | Remark |
|---|---|---|---|
| DD_URL | Mandatory | Mandatory | Base URL of the DefectDojo instance |
| DD_API_KEY | Mandatory | Mandatory | Shall be defined as a secret, e.g. a protected variable in GitLab or an encrypted secret in GitHub |
| DD_PRODUCT_TYPE_NAME | Mandatory | Mandatory | If a product type with this name does not exist, it will be created |
| DD_PRODUCT_NAME | Mandatory | Mandatory | If a product with this name does not exist, it will be created |
| DD_ENGAGEMENT_NAME | Mandatory | - | If an engagement with this name does not exist for the given product, it will be created |
| DD_ENGAGEMENT_TARGET_START | Optional | - | Format: YYYY-MM-DD, default: today. The target start date for a newly created engagement. |
| DD_ENGAGEMENT_TARGET_END | Optional | - | Format: YYYY-MM-DD, default: 2999-12-31. The target end date for a newly created engagement. |
| DD_TEST_NAME | Mandatory | - | If a test with this name does not exist for the given engagement, it will be created |
| DD_TEST_TYPE_NAME | Mandatory | - | From DefectDojo's list of test types, e.g. Trivy Scan |
| DD_FILE_NAME | Optional | Mandatory | |
| DD_ACTIVE | Optional | - | Default: true |
| DD_VERIFIED | Optional | - | Default: true |
| DD_MINIMUM_SEVERITY | Optional | - | |
| DD_GROUP_BY | Optional | - | Group by file path, component name, component name + version |
| DD_PUSH_TO_JIRA | Optional | - | Default: false |
| DD_CLOSE_OLD_FINDINGS | Optional | - | Default: true |
| DD_CLOSE_OLD_FINDINGS_PRODUCT_SCOPE | Optional | - | Default: false |
| DD_DO_NOT_REACTIVATE | Optional | - | Default: false |
| DD_VERSION | Optional | - | |
| DD_ENDPOINT_ID | Optional | - | |
| DD_SERVICE | Optional | - | |
| DD_BUILD_ID | Optional | - | |
| DD_COMMIT_HASH | Optional | - | |
| DD_BRANCH_TAG | Optional | - | |
| DD_API_SCAN_CONFIGURATION_ID | Optional | - | ID of the API scan configuration for API-based parsers, e.g. SonarQube |
| DD_SOURCE_CODE_MANAGEMENT_URI | Optional | - | |
| DD_SSL_VERIFY | Optional | Optional | Disable SSL verification by setting to false or 0. Default: true |
| DD_EXTRA_HEADER_1 | Optional | Optional | Key of an extra header, if one is needed for authentication in WAFs or similar |
| DD_EXTRA_HEADER_1_VALUE | Optional | Optional | The corresponding value for the extra header key |
| DD_EXTRA_HEADER_2 | Optional | Optional | Key of an extra header, if one is needed for authentication in WAFs or similar |
| DD_EXTRA_HEADER_2_VALUE | Optional | Optional | The corresponding value for the extra header key |
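Because the two commands require different subsets of these variables, a pipeline may want to fail early when one is missing. The sketch below encodes the "Mandatory" columns of the table above as plain data. It is an illustration only: `MANDATORY`, `missing_mandatory` and the mode names are hypothetical and not part of dd-import, and treating an empty value as missing is an assumption.

```python
import os

# Variables marked "Mandatory" for both commands in the table above.
COMMON = ("DD_URL", "DD_API_KEY", "DD_PRODUCT_TYPE_NAME", "DD_PRODUCT_NAME")

# Mandatory variables per command, taken from the two "Mandatory" columns.
MANDATORY = {
    "reimport-findings": COMMON + ("DD_ENGAGEMENT_NAME", "DD_TEST_NAME", "DD_TEST_TYPE_NAME"),
    "import-languages": COMMON + ("DD_FILE_NAME",),
}


def missing_mandatory(mode, env=None):
    """Return the mandatory variable names that are unset or empty for `mode`."""
    env = os.environ if env is None else env
    return [name for name in MANDATORY[mode] if not env.get(name)]


# Example: only two variables are set, so the remaining ones are reported.
example_env = {"DD_URL": "https://defectdojo.example.com", "DD_FILE_NAME": "cloc.json"}
print(missing_mandatory("import-languages", example_env))
```

Calling `missing_mandatory("reimport-findings")` without an `env` argument checks the real process environment, which suits a pre-flight step in a CI job.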

This snippet from a GitLab CI pipeline shows how dd-import can be integrated to upload data during build and deploy using the docker image:

variables:
  DD_PRODUCT_TYPE_NAME: "Showcase"
  DD_PRODUCT_NAME: "DefectDojo Importer"
  DD_ENGAGEMENT_NAME: "GitLab"

...

trivy:
  stage: test
  tags:
    - build
  variables:
    GIT_STRATEGY: none
  before_script:
    - export TRIVY_VERSION=$(wget -qO - "https://api.github.com/repos/aquasecurity/trivy/releases/latest" | grep '"tag_name":' | sed -E 's/.*"v([^"]+)".*/\1/')
    - echo $TRIVY_VERSION
    - wget --no-verbose https://github.com/aquasecurity/trivy/releases/download/v${TRIVY_VERSION}/trivy_${TRIVY_VERSION}_Linux-64bit.tar.gz -O - | tar -zxvf -
  allow_failure: true
  script:
    - ./trivy --exit-code 0 --no-progress -f json -o trivy.json maibornwolff/dd-import:latest
  artifacts:
    paths:
      - trivy.json
    when: always
    expire_in: 1 day

cloc:
  stage: test
  image: node:16
  tags:
    - build
  before_script:
    - npm install -g cloc
  script:
    - cloc src --json -out cloc.json
  artifacts:
    paths:
      - cloc.json
    when: always
    expire_in: 1 day

upload_trivy:
  stage: upload
  image: maibornwolff/dd-import:latest
  needs:
    - job: trivy
      artifacts: true
  variables:
    GIT_STRATEGY: none
    DD_TEST_NAME: "Trivy"
    DD_TEST_TYPE_NAME: "Trivy Scan"
    DD_FILE_NAME: "trivy.json"
  script:
    - dd-reimport-findings.sh

upload-cloc:
  image: maibornwolff/dd-import:latest
  needs:
    - job: cloc
      artifacts: true
  stage: upload
  tags:
    - build
  variables:
    DD_FILE_NAME: "cloc.json"
  script:
    - dd-import-languages.sh

- variables: DD_URL and DD_API_KEY are not defined here because they are protected variables for the GitLab project.
- trivy: The scan output is stored in JSON format (trivy.json).
- cloc: The output is stored in JSON format (cloc.json).
- upload_trivy: This step is executed after the trivy step, gets its output file and sets some variables specific to this step. Then the script to import the findings from this scan is executed.
- upload-cloc: This step is executed after the cloc step, gets its output file and sets some variables specific to this step. Then the script to import the language data is executed.

Another example, showing how to use dd-import within a GitHub Action, can be found in dd-import_example.yml.

./bin/runUnitTests.sh - Runs the unit tests and reports the test coverage.

./bin/runDockerUnitTests.sh - First creates the docker image and then starts a docker container in which the unit tests are executed.

Licensed under the 3-Clause BSD License

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Research

/Security News

Compromised intercom-client@7.0.4 npm package is tied to the ongoing Mini Shai-Hulud worm attack targeting developer and CI/CD secrets.

Research

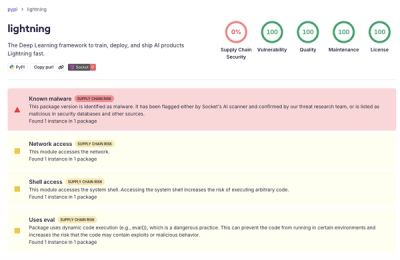

Socket detected a malicious supply chain attack on PyPI package lightning versions 2.6.2 and 2.6.3, which execute credential-stealing malware on import.

Research

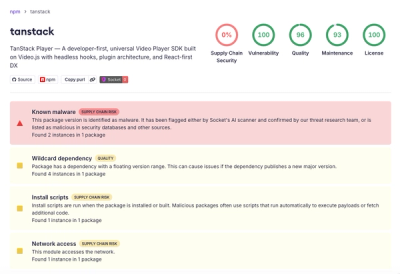

A brand-squatted TanStack npm package used postinstall scripts to steal .env files and exfiltrate developer secrets to an attacker-controlled endpoint.