Research

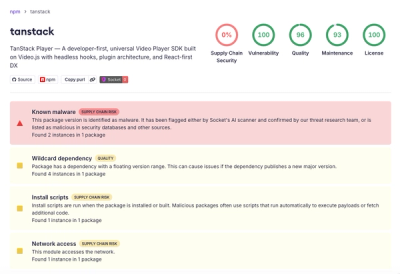

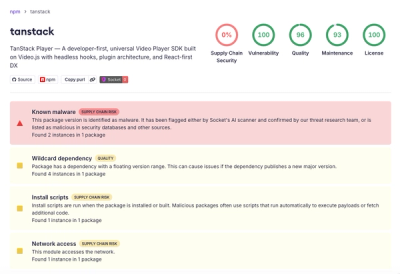

Malicious npm Package Brand-Squats TanStack to Exfiltrate Environment Variables

A brand-squatted TanStack npm package used postinstall scripts to steal .env files and exfiltrate developer secrets to an attacker-controlled endpoint.

esperanto

Esperanto is a Python library that provides a unified interface for interacting with various Large Language Model (LLM) providers. It simplifies working with the APIs of different AI models (LLMs, embedders, transcribers, and TTS) by offering a consistent interface while maintaining provider-specific optimizations.

🪶 Ultra-Lightweight Architecture

httpx - no bulky vendor SDKs required

🔄 True Provider Flexibility

⚡ Perfect for Production

Whether you're building a quick prototype or a production application serving millions of requests, Esperanto gives you the performance of direct API calls with the convenience of a unified interface.

CHANGELOG - Version history and migration guides

Install Esperanto using pip:

pip install esperanto

Transformers Provider

If you plan to use the transformers provider, install with the transformers extra:

pip install "esperanto[transformers]"

This installs:

transformers - Core Hugging Face library
torch - PyTorch framework
tokenizers - Fast tokenization
sentence-transformers - CrossEncoder support
scikit-learn - Advanced embedding features
numpy - Numerical computations

LangChain Integration

If you plan to use any of the .to_langchain() methods, you need to install the correct LangChain SDKs manually:

# Core LangChain dependencies (required)

pip install "langchain>=0.3.8,<0.4.0" "langchain-core>=0.3.29,<0.4.0"

# Provider-specific LangChain packages (install only what you need)

pip install "langchain-openai>=0.2.9"

pip install "langchain-anthropic>=0.3.0"

pip install "langchain-google-genai>=2.1.2"

pip install "langchain-ollama>=0.2.0"

pip install "langchain-groq>=0.2.1"

pip install "langchain_mistralai>=0.2.1"

pip install "langchain_deepseek>=0.1.3"

| Provider | LLM Support | Embedding Support | Reranking Support | Speech-to-Text | Text-to-Speech | JSON Mode |

|---|---|---|---|---|---|---|

| OpenAI | ✅ | ✅ | ❌ | ✅ | ✅ | ✅ |

| OpenAI-Compatible | ✅ | ✅ | ❌ | ✅ | ✅ | ⚠️* |

| Anthropic | ✅ | ❌ | ❌ | ❌ | ❌ | ✅ |

| Groq | ✅ | ❌ | ❌ | ✅ | ❌ | ✅ |

| Google (GenAI) | ✅ | ✅ | ❌ | ✅ | ✅ | ✅ |

| Vertex AI | ✅ | ✅ | ❌ | ❌ | ✅ | ❌ |

| Ollama | ✅ | ✅ | ❌ | ❌ | ❌ | ❌ |

| Perplexity | ✅ | ❌ | ❌ | ❌ | ❌ | ✅ |

| Transformers | ❌ | ✅ | ✅ | ❌ | ❌ | ❌ |

| ElevenLabs | ❌ | ❌ | ❌ | ✅ | ✅ | ❌ |

| Azure OpenAI | ✅ | ✅ | ❌ | ✅ | ✅ | ✅ |

| Mistral | ✅ | ✅ | ❌ | ❌ | ❌ | ✅ |

| DeepSeek | ✅ | ❌ | ❌ | ❌ | ❌ | ✅ |

| Voyage | ❌ | ✅ | ✅ | ❌ | ❌ | ❌ |

| Jina | ❌ | ✅ | ✅ | ❌ | ❌ | ❌ |

| xAI | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ |

| DashScope | ✅ | ❌ | ❌ | ❌ | ❌ | ✅ |

| MiniMax | ✅ | ❌ | ❌ | ❌ | ❌ | ✅ |

| OpenRouter | ✅ | ❌ | ❌ | ❌ | ❌ | ✅ |

*⚠️ OpenAI-Compatible: JSON mode support depends on the specific endpoint implementation

You can use Esperanto in two ways: directly with provider-specific classes or through the AI Factory.

The AI Factory provides a convenient way to create model instances and discover available providers:

from esperanto.factory import AIFactory

# Get available providers for each model type

providers = AIFactory.get_available_providers()

print(providers)

# Output:

# {

# 'language': ['anthropic', 'azure', 'dashscope', 'deepseek', 'google', 'groq', 'minimax', 'mistral', 'ollama', 'openai', 'openai-compatible', 'openrouter', 'perplexity', 'vertex', 'xai'],

# 'embedding': ['openai', 'openai-compatible', 'google', 'ollama', 'vertex', 'transformers', 'voyage', 'mistral', 'azure', 'jina', 'openrouter'],

# 'reranker': ['jina', 'voyage', 'transformers'],

# 'speech_to_text': ['openai', 'openai-compatible', 'groq', 'elevenlabs', 'azure', 'google'],

# 'text_to_speech': ['openai', 'openai-compatible', 'elevenlabs', 'google', 'vertex', 'azure']

# }

# Create model instances

model = AIFactory.create_language(

"openai",

"gpt-3.5-turbo",

config={"structured": {"type": "json"}}

) # Language model

embedder = AIFactory.create_embedding("openai", "text-embedding-3-small") # Embedding model

reranker = AIFactory.create_reranker("transformers", "cross-encoder/ms-marco-MiniLM-L-6-v2") # Universal reranker model

transcriber = AIFactory.create_speech_to_text("openai", "whisper-1") # Speech-to-text model

speaker = AIFactory.create_text_to_speech("openai", "tts-1") # Text-to-speech model

messages = [

{"role": "system", "content": "You are a helpful assistant."},

{"role": "user", "content": "What's the capital of France?"},

]

response = model.chat_complete(messages)

# Create an embedding instance

texts = ["Hello, world!", "Another text"]

# Synchronous usage

embeddings = embedder.embed(texts)

# Async usage (run this inside an async function or a running event loop)

embeddings = await embedder.aembed(texts)
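The dictionary returned by `get_available_providers()` can also be used to pick a provider that covers several model types at once. The sketch below works on a copy of the example output shown above rather than on a live call; `providers_supporting` is a hypothetical helper written for this illustration, not part of Esperanto.

```python
# Copied from the example output of AIFactory.get_available_providers() above.
providers = {
    "language": ["anthropic", "azure", "dashscope", "deepseek", "google", "groq",
                 "minimax", "mistral", "ollama", "openai", "openai-compatible",
                 "openrouter", "perplexity", "vertex", "xai"],
    "embedding": ["openai", "openai-compatible", "google", "ollama", "vertex",
                  "transformers", "voyage", "mistral", "azure", "jina", "openrouter"],
    "reranker": ["jina", "voyage", "transformers"],
    "speech_to_text": ["openai", "openai-compatible", "groq", "elevenlabs",
                       "azure", "google"],
    "text_to_speech": ["openai", "openai-compatible", "elevenlabs", "google",
                       "vertex", "azure"],
}


def providers_supporting(available, *model_types):
    """Hypothetical helper: providers listed under every requested model type."""
    sets = [set(available.get(model_type, [])) for model_type in model_types]
    return sorted(set.intersection(*sets)) if sets else []


# Providers that can both embed and rerank
both = providers_supporting(providers, "embedding", "reranker")
print(both)

# Providers that cover chat, transcription, and speech synthesis
full_stack = providers_supporting(
    providers, "language", "speech_to_text", "text_to_speech"
)
print(full_stack)
```

In an application you would pass the live result of `AIFactory.get_available_providers()` instead of the copied dictionary.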

Esperanto provides a convenient way to discover available models from providers without creating instances:

from esperanto.factory import AIFactory

# Discover available models from OpenAI

models = AIFactory.get_provider_models("openai", api_key="your-api-key")

for model in models:
    print(f"{model.id} - owned by {model.owned_by}")

# Filter by model type (for providers like OpenAI that support multiple types)

language_models = AIFactory.get_provider_models(

"openai",

api_key="your-api-key",

model_type="language" # Options: 'language', 'embedding', 'speech_to_text', 'text_to_speech'

)

# Some providers return hardcoded lists (e.g., Anthropic)

claude_models = AIFactory.get_provider_models("anthropic")

for model in claude_models:
    print(f"{model.id} - Context: {model.context_window} tokens")

# Example output:

# claude-3-5-sonnet-20241022 - Context: 200000 tokens

# claude-3-5-haiku-20241022 - Context: 200000 tokens

# claude-3-opus-20240229 - Context: 200000 tokens

# OpenAI-compatible endpoints (requires base_url)

local_models = AIFactory.get_provider_models(

"openai-compatible",

base_url="http://localhost:1234/v1" # LM Studio, vLLM, etc.

)

for model in local_models:
    print(f"{model.id} - {model.owned_by}")

Benefits of Static Discovery:

Supported Providers:

Note: This is the recommended way to discover models. The .models property on provider instances is deprecated and will be removed in version 3.0.

Here's a simple example to get you started:

from esperanto.providers.llm.openai import OpenAILanguageModel

from esperanto.providers.llm.anthropic import AnthropicLanguageModel

# Initialize a provider with structured output

model = OpenAILanguageModel(

api_key="your-api-key",

model_name="gpt-4", # Optional, defaults to gpt-4

structured={"type": "json"} # Optional, for JSON output

)

# Simple chat completion

messages = [

{"role": "system", "content": "You are a helpful assistant."},

{"role": "user", "content": "List three colors in JSON format"}

]

# Synchronous call

response = model.chat_complete(messages)

print(response.choices[0].message.content) # Will be in JSON format

# Async call

async def get_response():
    response = await model.achat_complete(messages)
    print(response.choices[0].message.content) # Will be in JSON format

All providers in Esperanto return standardized response objects, making it easy to work with different models without changing your code.

from esperanto.factory import AIFactory

model = AIFactory.create_language(

"openai",

"gpt-3.5-turbo",

config={"structured": {"type": "json"}}

)

messages = [{"role": "user", "content": "Hello!"}]

# All LLM responses follow this structure

response = model.chat_complete(messages)

print(response.choices[0].message.content) # The actual response text

print(response.choices[0].message.role) # 'assistant'

print(response.model) # The model used

print(response.usage.total_tokens) # Token usage information

print(response.content) # Shortcut for response.choices[0].message.content

# For streaming responses (pass stream=True, or create the model with streaming=True)
for chunk in model.chat_complete(messages, stream=True):
    print(chunk.choices[0].delta.content, end="", flush=True)

# Async streaming (run this inside an async function)
async for chunk in model.achat_complete(messages, stream=True):
    print(chunk.choices[0].delta.content, end="", flush=True)
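When the full text is needed after streaming, the chunks can be collected instead of printed. The following is a minimal sketch that uses stand-in objects shaped like the `chunk.choices[0].delta.content` structure shown above; `make_chunk` and `collect_stream` are hypothetical names, and the `None` delta is an assumption about how a final chunk may look, as is common with OpenAI-style streams.

```python
from types import SimpleNamespace


def make_chunk(text):
    """Stand-in for a streamed chunk exposing chunk.choices[0].delta.content."""
    delta = SimpleNamespace(content=text)
    return SimpleNamespace(choices=[SimpleNamespace(delta=delta)])


def collect_stream(chunks):
    """Join the text pieces of a stream, skipping empty or None deltas."""
    parts = []
    for chunk in chunks:
        piece = chunk.choices[0].delta.content
        if piece:
            parts.append(piece)
    return "".join(parts)


# Fake stream; a real one would come from model.chat_complete(messages, stream=True)
fake_stream = [make_chunk("Par"), make_chunk("is"), make_chunk(None)]
full_text = collect_stream(fake_stream)
print(full_text)
```

Guarding against an empty delta also keeps `print` from emitting the literal text `None` when a chunk carries no content.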

Some models (like Qwen3, DeepSeek R1) include chain-of-thought reasoning in <think> tags. The Message class provides convenient properties to handle this:

response = model.chat_complete(messages)

msg = response.choices[0].message

# Full response including reasoning

msg.content # "<think>Let me analyze...</think>\n\n{\"answer\": 42}"

# Just the reasoning (returns None if no <think> tags)

msg.thinking # "Let me analyze..."

# Just the actual response (with <think> tags removed)

msg.cleaned_content # "{\"answer\": 42}"
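To make the relationship between the three properties concrete, here is a small standalone sketch of how text containing `<think>` tags can be split with a regular expression. It is an illustration only, not Esperanto's implementation; `split_thinking` is a hypothetical helper, and joining several `<think>` blocks with a blank line is an assumption.

```python
import re

# Non-greedy match so multiple <think> blocks are captured separately.
THINK_RE = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_thinking(content):
    """Return (thinking, cleaned_content) for text that may contain <think> tags."""
    blocks = [block.strip() for block in THINK_RE.findall(content)]
    thinking = "\n\n".join(blocks) if blocks else None
    cleaned = THINK_RE.sub("", content).strip()
    return thinking, cleaned


raw = '<think>Let me analyze...</think>\n\n{"answer": 42}'
thinking, cleaned = split_thinking(raw)
print(thinking)
print(cleaned)
```

With the library itself, `msg.thinking` and `msg.cleaned_content` give you these values directly, so a helper like this is only needed when post-processing raw text from elsewhere.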

from esperanto.factory import AIFactory

model = AIFactory.create_embedding("openai", "text-embedding-3-small")

texts = ["Hello, world!", "Another text"]

# All embedding responses follow this structure

response = model.embed(texts)

print(response.data[0].embedding) # Vector for first text

print(response.data[0].index) # Index of the text (0)

print(response.model) # The model used

print(response.usage.total_tokens) # Token usage information
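A common next step is comparing two vectors taken from `response.data[i].embedding`. The sketch below computes cosine similarity in plain Python; the short vectors are made-up stand-ins for real embeddings, and `cosine_similarity` is a hypothetical helper rather than a library function.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Stand-ins for response.data[0].embedding, response.data[1].embedding, ...
vec_a = [1.0, 0.0, 1.0]
vec_b = [1.0, 0.0, 1.0]
vec_c = [0.0, 1.0, 0.0]

same = cosine_similarity(vec_a, vec_b)
orthogonal = cosine_similarity(vec_a, vec_c)
print(same, orthogonal)
```

For large batches a vectorized library such as numpy would be the more practical choice; the pure-Python version is kept here so the example has no dependencies.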

from esperanto.factory import AIFactory

reranker = AIFactory.create_reranker("transformers", "BAAI/bge-reranker-base")

query = "What is machine learning?"

documents = [

"Machine learning is a subset of artificial intelligence.",

"The weather is nice today.",

"Python is a programming language used in ML."

]

# All reranking responses follow this structure

response = reranker.rerank(query, documents, top_k=2)

print(response.results[0].document) # Highest ranked document

print(response.results[0].relevance_score) # Normalized 0-1 relevance score

print(response.results[0].index) # Original document index

print(response.model) # The model used

Esperanto supports advanced task-aware embeddings that optimize vector representations for specific use cases. This works across all embedding providers through a universal interface:

from esperanto.factory import AIFactory

from esperanto.common_types.task_type import EmbeddingTaskType

# Task-optimized embeddings work with ANY provider

model = AIFactory.create_embedding(

provider="jina", # Also works with: "openai", "google", "transformers", etc.

model_name="jina-embeddings-v3",

config={

"task_type": EmbeddingTaskType.RETRIEVAL_QUERY, # Optimize for search queries

"late_chunking": True, # Better long-context handling

"output_dimensions": 512 # Control vector size

}

)

# Generate optimized embeddings

query = "What is machine learning?"

embeddings = model.embed([query])

Universal Task Types:

RETRIEVAL_QUERY - Optimize for search queries
RETRIEVAL_DOCUMENT - Optimize for document storage
SIMILARITY - General text similarity
CLASSIFICATION - Text classification tasks
CLUSTERING - Document clustering
CODE_RETRIEVAL - Code search optimization
QUESTION_ANSWERING - Optimize for Q&A tasks
FACT_VERIFICATION - Optimize for fact checking

Provider Support:

The standardized response objects ensure consistency across different providers, making it easy to:

from esperanto.providers.llm.openai import OpenAILanguageModel

model = OpenAILanguageModel(

api_key="your-api-key", # Or set OPENAI_API_KEY env var

model_name="gpt-4", # Optional

temperature=0.7, # Optional

max_tokens=850, # Optional

streaming=False, # Optional

top_p=0.9, # Optional

structured={"type": "json"}, # Optional, for JSON output

base_url=None, # Optional, for custom endpoint

organization=None # Optional, for org-specific API

)

Use any OpenAI-compatible endpoint (LM Studio, Ollama, vLLM, custom deployments) with the same interface:

from esperanto.factory import AIFactory

# Using factory config

model = AIFactory.create_language(

"openai-compatible",

"your-model-name", # Use any model name supported by your endpoint

config={

"base_url": "http://localhost:1234/v1", # Your endpoint URL (required)

"api_key": "your-api-key" # Your API key (optional)

}

)

# Or set environment variables

# Generic (works for all provider types):

# OPENAI_COMPATIBLE_BASE_URL=http://localhost:1234/v1

# OPENAI_COMPATIBLE_API_KEY=your-api-key # Optional for endpoints that don't require auth

# Provider-specific (takes precedence over generic):

# OPENAI_COMPATIBLE_BASE_URL_LLM=http://localhost:1234/v1

# OPENAI_COMPATIBLE_API_KEY_LLM=your-api-key

model = AIFactory.create_language("openai-compatible", "your-model-name")

# Works with any OpenAI-compatible endpoint

messages = [{"role": "user", "content": "Hello!"}]

response = model.chat_complete(messages)

print(response.content)

# Streaming support

for chunk in model.chat_complete(messages, stream=True):
    print(chunk.choices[0].delta.content, end="", flush=True)

Common Use Cases:

ollama serve with OpenAI compatibility

Features:

Environment Variable Configuration:

OpenAI-compatible providers support both generic and provider-specific environment variables:

Generic variables (work for all provider types):

OPENAI_COMPATIBLE_BASE_URL - Base URL for the endpoint
OPENAI_COMPATIBLE_API_KEY - API key (if required)

Provider-specific variables (take precedence over generic):

OPENAI_COMPATIBLE_BASE_URL_LLM, OPENAI_COMPATIBLE_API_KEY_LLM
OPENAI_COMPATIBLE_BASE_URL_EMBEDDING, OPENAI_COMPATIBLE_API_KEY_EMBEDDING
OPENAI_COMPATIBLE_BASE_URL_STT, OPENAI_COMPATIBLE_API_KEY_STT
OPENAI_COMPATIBLE_BASE_URL_TTS, OPENAI_COMPATIBLE_API_KEY_TTS

Configuration Precedence (highest to lowest):

Direct parameters (base_url=, api_key=)
Config dictionary (config={"base_url": ...})

This allows you to use different OpenAI-compatible endpoints for different AI capabilities without code changes.
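The lookup order described above can be sketched as a small resolver. This is an illustration under stated assumptions, not the library's code: `resolve_setting` is a hypothetical function, and placing both kinds of environment variable below the direct parameters and the config dictionary is an assumption (the text above only states that provider-specific variables beat generic ones).

```python
import os


def resolve_setting(name, kind, direct=None, config=None, env=None):
    """Resolve 'BASE_URL' or 'API_KEY' for a provider kind such as 'LLM' or 'TTS'.

    Assumed order: direct argument, config dict, provider-specific
    environment variable, then generic environment variable.
    """
    env = os.environ if env is None else env
    config = config or {}
    if direct is not None:
        return direct
    value = config.get(name.lower())
    if value is not None:
        return value
    specific = env.get(f"OPENAI_COMPATIBLE_{name}_{kind}")
    if specific:
        return specific
    return env.get(f"OPENAI_COMPATIBLE_{name}")


# A fake environment so the example does not depend on the real one
fake_env = {
    "OPENAI_COMPATIBLE_BASE_URL": "http://localhost:1234/v1",
    "OPENAI_COMPATIBLE_BASE_URL_EMBEDDING": "http://localhost:8080/v1",
}

llm_url = resolve_setting("BASE_URL", "LLM", env=fake_env)
embedding_url = resolve_setting("BASE_URL", "EMBEDDING", env=fake_env)
print(llm_url, embedding_url)
```

Here the LLM falls back to the generic URL while the embedder picks up its own endpoint, which is the "different endpoints without code changes" behavior the section describes.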

Perplexity uses an OpenAI-compatible API but includes additional parameters for controlling search behavior.

from esperanto.providers.llm.perplexity import PerplexityLanguageModel

model = PerplexityLanguageModel(

api_key="your-api-key", # Or set PERPLEXITY_API_KEY env var

model_name="llama-3-sonar-large-32k-online", # Recommended default

temperature=0.7, # Optional

max_tokens=850, # Optional

streaming=False, # Optional

top_p=0.9, # Optional

structured={"type": "json"}, # Optional, for JSON output

# Perplexity-specific parameters

search_domain_filter=["example.com", "-excluded.com"], # Optional, limit search domains

return_images=False, # Optional, include images in search results

return_related_questions=True, # Optional, return related questions

search_recency_filter="week", # Optional, filter search by time ('day', 'week', 'month', 'year')

web_search_options={"search_context_size": "high"} # Optional, control search context ('low', 'medium', 'high')

)

Esperanto provides flexible timeout configuration across all provider types with intelligent defaults and multiple configuration methods.

Different provider types have optimized default timeouts based on typical operation duration:

Configure timeouts using three methods with clear priority hierarchy:

from esperanto.factory import AIFactory

# LLM with custom timeout

model = AIFactory.create_language(

"openai",

"gpt-4",

config={"timeout": 120.0} # 2 minutes

)

# Embedding with custom timeout

embedder = AIFactory.create_embedding(

"openai",

"text-embedding-3-small",

config={"timeout": 90.0} # 1.5 minutes

)

# Speech-to-Text with longer timeout for large files

transcriber = AIFactory.create_speech_to_text(

"openai",

config={"timeout": 600.0} # 10 minutes

)

# Text-to-Speech with direct timeout parameter

speaker = AIFactory.create_text_to_speech(

"elevenlabs",

timeout=180.0 # 3 minutes

)

# Speech-to-Text with direct timeout parameter

transcriber = AIFactory.create_speech_to_text(

"openai",

timeout=450.0 # 7.5 minutes

)

Set global defaults for all instances of a provider type:

# Set environment variables

export ESPERANTO_LLM_TIMEOUT=90 # 90 seconds for all LLM providers

export ESPERANTO_EMBEDDING_TIMEOUT=120 # 2 minutes for all embedding providers

export ESPERANTO_RERANKER_TIMEOUT=75 # 75 seconds for all reranker providers

export ESPERANTO_STT_TIMEOUT=600 # 10 minutes for all STT providers

export ESPERANTO_TTS_TIMEOUT=400 # 6 minutes 40 seconds for all TTS providers

# These will use environment variable defaults

model = AIFactory.create_language("openai", "gpt-4") # Uses ESPERANTO_LLM_TIMEOUT

embedder = AIFactory.create_embedding("voyage", "voyage-2") # Uses ESPERANTO_EMBEDDING_TIMEOUT

Configuration resolves in this priority order:

# Example: Final timeout will be 150 seconds (config overrides env var)

# Even if ESPERANTO_LLM_TIMEOUT=90 is set

model = AIFactory.create_language(

"openai",

"gpt-4",

config={"timeout": 150.0} # This takes precedence

)
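The priority rule in this example (an explicit value wins over the environment variable, which wins over the built-in default) can be sketched as follows. `resolve_timeout` is a hypothetical helper, and the 60-second fallback is a placeholder chosen for the illustration, not a documented default.

```python
import os


def resolve_timeout(explicit=None, env=None,
                    env_var="ESPERANTO_LLM_TIMEOUT", default=60.0):
    """Pick a timeout: explicit value, then environment variable, then default.

    The default of 60.0 is a placeholder for this sketch only.
    """
    env = os.environ if env is None else env
    if explicit is not None:
        return float(explicit)
    raw = env.get(env_var)
    if raw:
        return float(raw)
    return default


# Fake environment mirroring `export ESPERANTO_LLM_TIMEOUT=90`
fake_env = {"ESPERANTO_LLM_TIMEOUT": "90"}

from_config = resolve_timeout(explicit=150.0, env=fake_env)  # config wins
from_env = resolve_timeout(env=fake_env)                     # env var used
from_default = resolve_timeout(env={})                       # nothing set
print(from_config, from_env, from_default)
```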

All timeout values are validated with clear error messages:

# These will raise ValueError with descriptive messages

AIFactory.create_language("openai", "gpt-4", config={"timeout": "invalid"}) # Wrong type (not a number)

AIFactory.create_language("openai", "gpt-4", config={"timeout": -1}) # Out of range

AIFactory.create_language("openai", "gpt-4", config={"timeout": 4000}) # Too large
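As a rough picture of what such validation involves, the sketch below rejects the same three inputs. It is not the library's validator: `validate_timeout` is a hypothetical function, and the 1 to 3600 second bounds are assumptions chosen to be consistent with the examples above (-1 rejected as out of range, 4000 rejected as too large).

```python
def validate_timeout(value, minimum=1.0, maximum=3600.0):
    """Return the timeout as a float, or raise ValueError with a clear message.

    The bounds are assumed for this sketch, not taken from the library.
    """
    # bool is a subclass of int, so reject it explicitly
    if isinstance(value, bool) or not isinstance(value, (int, float)):
        raise ValueError(
            f"timeout must be a number, got {type(value).__name__}"
        )
    if not minimum <= value <= maximum:
        raise ValueError(
            f"timeout must be between {minimum} and {maximum} seconds, got {value}"
        )
    return float(value)


checked = validate_timeout(120)
print(checked)

for bad in ("invalid", -1, 4000):
    try:
        validate_timeout(bad)
    except ValueError as exc:
        print(f"rejected {bad!r}: {exc}")
```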

Batch Processing

# Long timeout for batch embedding operations

embedder = AIFactory.create_embedding(

"openai",

"text-embedding-3-large",

config={"timeout": 300.0} # 5 minutes for large batches

)

Real-time Applications

# Shorter timeout for real-time chat

model = AIFactory.create_language(

"openai",

"gpt-3.5-turbo",

config={"timeout": 30.0} # 30 seconds for quick responses

)

Audio Processing

# Extended timeout for long audio files

transcriber = AIFactory.create_speech_to_text(

"openai",

config={"timeout": 900.0} # 15 minutes for hour-long audio files

)

Enable streaming to receive responses token by token:

# Enable streaming

model = OpenAILanguageModel(api_key="your-api-key", streaming=True)

# Synchronous streaming

for chunk in model.chat_complete(messages):
    print(chunk.choices[0].delta.content, end="", flush=True)

# Async streaming (run this inside an async function)
async for chunk in model.achat_complete(messages):
    print(chunk.choices[0].delta.content, end="", flush=True)

Request JSON-formatted responses (supported by OpenAI, some OpenRouter models, and the other providers marked in the JSON Mode column of the provider table):

model = OpenAILanguageModel(

api_key="your-api-key", # or use ENV

structured={"type": "json"}

)

messages = [

{"role": "user", "content": "List three European capitals as JSON"}

]

response = model.chat_complete(messages)

# Response will be in JSON format
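Because the JSON arrives as a string, it usually needs parsing before use. The sketch below shows one defensive way to do that with the standard library; `parse_json_content` is a hypothetical helper, and `sample_content` is a made-up stand-in for `response.content`.

```python
import json


def parse_json_content(content):
    """Parse a JSON string, returning None when the text is missing or invalid."""
    try:
        return json.loads(content)
    except (json.JSONDecodeError, TypeError):
        return None


# Stand-in for response.content from a model configured with structured={"type": "json"}
sample_content = '{"capitals": ["Paris", "Berlin", "Madrid"]}'

data = parse_json_content(sample_content)
if data is not None:
    print(data["capitals"])
```

Returning `None` on failure keeps the calling code simple; raising instead may be preferable when malformed output should stop the pipeline.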

Convert any provider to a LangChain chat model:

model = OpenAILanguageModel(api_key="your-api-key")

langchain_model = model.to_langchain()

# Use with LangChain

from langchain.chains import ConversationChain

chain = ConversationChain(llm=langchain_model)

Complete documentation is available in the docs directory:

We welcome contributions! Please see our Contributing Guidelines for details on how to get started.

This project is licensed under the MIT License - see the LICENSE file for details.

git clone https://github.com/lfnovo/esperanto.git

cd esperanto

pip install -r requirements.txt

pytest

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Research

A brand-squatted TanStack npm package used postinstall scripts to steal .env files and exfiltrate developer secrets to an attacker-controlled endpoint.

Research

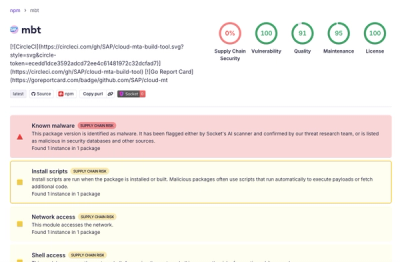

Compromised SAP CAP npm packages download and execute unverified binaries, creating urgent supply chain risk for affected developers and CI/CD environments.

Company News

Socket has acquired Secure Annex to expand extension security across browsers, IDEs, and AI tools.