inference-cli

With no prior knowledge of machine learning or device-specific deployment, you can deploy a computer vision model to a range of devices and environments using Roboflow Inference CLI.

Roboflow Inference CLI offers a lightweight interface for running the Roboflow inference server locally or for calling the Roboflow Hosted API.

To create custom inference server Docker images, go to the parent package, Roboflow Inference.

Roboflow has everything you need to deploy a computer vision model to a range of devices and environments. Inference supports object detection, classification, and instance segmentation models, as well as foundation models (CLIP and SAM).

Starts a local inference server. It optionally takes a port number (default: 9001) and starts the Docker container only if no container is already running on that port.
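If you want to check from your own script whether something is already listening on the port before you start the server, a plain TCP probe is one rough option. This is a minimal sketch using only the Python standard library. It is not how the CLI itself decides whether a container is running, and the `port_in_use` helper is a hypothetical name used for illustration.

```python
import socket

DEFAULT_PORT = 9001  # default port mentioned in the text above


def port_in_use(port: int = DEFAULT_PORT, host: str = "127.0.0.1",
                timeout: float = 0.5) -> bool:
    """Return True if something accepts TCP connections on host:port.

    Hypothetical helper: it only probes the port and knows nothing
    about Docker or about which process owns the port.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        sock.settimeout(timeout)
        # connect_ex returns 0 on success instead of raising an exception
        return sock.connect_ex((host, port)) == 0


if __name__ == "__main__":
    if port_in_use():
        print(f"Port {DEFAULT_PORT} is in use; a server may already be running.")
    else:
        print(f"Port {DEFAULT_PORT} looks free.")
```

A successful probe only tells you that some process is listening on the port, so treat the result as a hint rather than proof that an inference server is up.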

Before you begin, ensure that you have Docker installed on your machine. Docker provides a containerized environment, allowing the Roboflow Inference Server to run in a consistent and isolated manner, regardless of the host system. If you haven't installed Docker yet, you can get it from Docker's official website.

The CLI will automatically detect the device you are running on and pull the appropriate Docker image.

inference server start --port 9001 [-e {optional_path_to_file_with_env_variables}]

The --env-file (or -e) parameter is an optional path to a .env file that is loaded into the inference server, for cases where the values of internal parameters need to be adjusted. Any value passed explicitly as a command parameter takes precedence and shadows the value defined in the .env file under the same target variable name.
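The precedence rule described above (explicit command parameters shadow .env values) can be pictured with a small sketch. This is an illustration of the rule only, not the CLI's actual implementation. The function names and the `EXAMPLE_PORT` / `EXAMPLE_SETTING` variable names are hypothetical.

```python
from typing import Dict, Optional


def parse_env_text(text: str) -> Dict[str, str]:
    """Parse simple KEY=VALUE lines, skipping blank lines and # comments."""
    values: Dict[str, str] = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Drop surrounding whitespace and simple surrounding quotes
        values[key.strip()] = value.strip().strip("\"'")
    return values


def resolve_settings(
    env_file_values: Dict[str, str],
    explicit_values: Dict[str, Optional[str]],
) -> Dict[str, str]:
    """Merge settings so explicitly passed values shadow .env values."""
    merged = dict(env_file_values)
    for name, value in explicit_values.items():
        if value is not None:  # None means "not passed on the command line"
            merged[name] = value
    return merged


if __name__ == "__main__":
    # Hypothetical variable names, used only for illustration.
    env_text = "EXAMPLE_PORT=9001\nEXAMPLE_SETTING=from-env-file\n"
    explicit = {"EXAMPLE_PORT": "9002", "EXAMPLE_SETTING": None}
    print(resolve_settings(parse_env_text(env_text), explicit))
```

In this sketch the explicitly passed `EXAMPLE_PORT` wins over the file value, while `EXAMPLE_SETTING` falls back to the .env file because nothing was passed for it.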

Checks the status of the local inference server.

inference server status

Stops the inference server.

inference server stop

Runs inference on a single image. It takes a path to an image, a Roboflow project name, model version, and API key, and will return a JSON object with the model's predictions. You can also specify a host to run inference on our hosted inference server.

inference infer ./image.jpg --project-id my-project --model-version 1 --api-key my-api-key

inference infer https://[YOUR_HOSTED_IMAGE_URL] --project-id my-project --model-version 1 --api-key my-api-key

inference infer ./image.jpg --project-id my-project --model-version 1 --api-key my-api-key --host https://detect.roboflow.com
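If you would rather drive these commands from a script, one option is to assemble the argument list in Python and hand it to `subprocess`. The sketch below uses only the flags shown in the commands above. The helper names `build_infer_command` and `run_infer` are hypothetical, and the example assumes the `inference` executable is on your PATH. It returns the raw output as text and makes no assumption about the exact shape of the JSON.

```python
import shlex
import subprocess
from typing import List, Optional


def build_infer_command(
    image: str,
    project_id: str,
    model_version: int,
    api_key: str,
    host: Optional[str] = None,
) -> List[str]:
    """Build the argument list for `inference infer` (hypothetical helper)."""
    cmd = [
        "inference", "infer", image,
        "--project-id", project_id,
        "--model-version", str(model_version),
        "--api-key", api_key,
    ]
    if host is not None:
        # Optional: target a specific host, e.g. the hosted API
        cmd += ["--host", host]
    return cmd


def run_infer(cmd: List[str]) -> str:
    """Run the command and return its standard output as text.

    Raises subprocess.CalledProcessError on a non-zero exit code and
    FileNotFoundError if the `inference` executable is not installed.
    """
    result = subprocess.run(cmd, capture_output=True, text=True, check=True)
    return result.stdout


if __name__ == "__main__":
    command = build_infer_command(
        "./image.jpg", "my-project", 1, "my-api-key",
        host="https://detect.roboflow.com",
    )
    # Print the command instead of running it, so the sketch has no side effects
    print(shlex.join(command))
```

Passing the arguments as a list avoids shell quoting problems with image paths or URLs that contain spaces or special characters.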

Roboflow Inference CLI currently supports the following device targets:

For Jetson specific inference server images, check out the Roboflow Inference package, or pull the images directly following instructions in the official Roboflow Inference documentation.

The Roboflow Inference code is distributed under an Apache 2.0 license. The models supported by Roboflow Inference have their own licenses. View the licenses for supported models below.

| model | license |
|---|---|
| inference/models/clip | MIT |
| inference/models/gaze | MIT, Apache 2.0 |
| inference/models/sam | Apache 2.0 |
| inference/models/vit | Apache 2.0 |
| inference/models/yolact | MIT |
| inference/models/yolov5 | AGPL-3.0 |
| inference/models/yolov7 | GPL-3.0 |
| inference/models/yolov8 | AGPL-3.0 |

With a Roboflow Inference Enterprise License, you can access additional Inference features, including:

To learn more, contact the Roboflow team.

Visit our documentation for usage examples and reference for Roboflow Inference.

| Project | Description |
|---|---|
| supervision | General-purpose utilities for use in computer vision projects, from predictions filtering and display to object tracking to model evaluation. |
| Autodistill | Automatically label images for use in training computer vision models. |
| Inference (this project) | An easy-to-use, production-ready inference server for computer vision supporting deployment of many popular model architectures and fine-tuned models. |
| Notebooks | Tutorials for computer vision tasks, from training state-of-the-art models to tracking objects to counting objects in a zone. |
| Collect | Automated, intelligent data collection powered by CLIP. |

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Research

/Security News

Compromised intercom-client@7.0.4 npm package is tied to the ongoing Mini Shai-Hulud worm attack targeting developer and CI/CD secrets.

Research

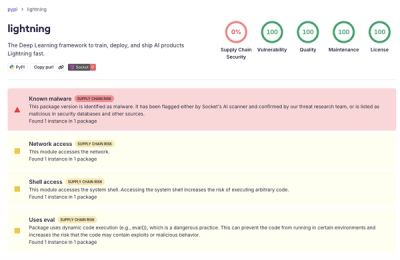

Socket detected a malicious supply chain attack on PyPI package lightning versions 2.6.2 and 2.6.3, which execute credential-stealing malware on import.

Research

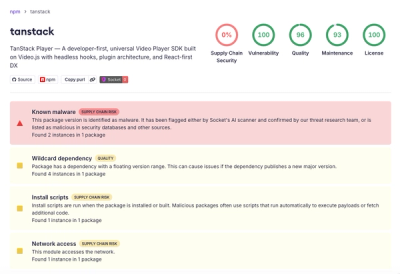

A brand-squatted TanStack npm package used postinstall scripts to steal .env files and exfiltrate developer secrets to an attacker-controlled endpoint.