Security News

/Research

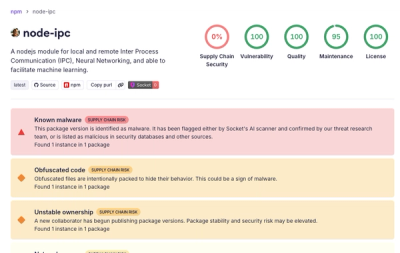

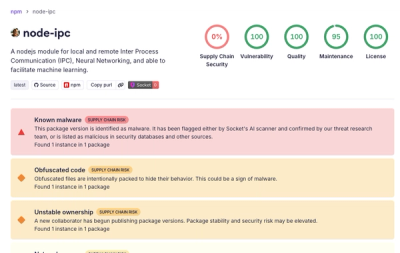

llmify-cli

LLM-ify turns any website documentation into local, readable docs in seconds. It also generates llms.txt and llms-full.txt plus full markdown/text captures for single pages or entire sites, so your LLM has clean, structured context.

pip install llmify-cli

llmify

Run Crawl4AI setup (after install):

llmify setup

pip install -U llmify-cli

llms.txt is a standardized format for making website content more accessible to Large Language Models (LLMs). It provides:

- llms.txt: A concise index of all pages with titles and descriptions

- llms-full.txt: Complete content of all pages for comprehensive access

… llms.txt) + full corpus (llms-full.txt)

… llmify setup after install)

git clone https://github.com/Chillbruhhh/LLM-ify.git

cd LLM-ify

python -m venv venv

venv\Scripts\activate # Windows

# or: source venv/bin/activate (macOS/Linux)

pip install -r requirements.txt

crawl4ai-setup

python main.py

Build packages (wheel + sdist):

python -m build

Set up your OpenAI API key:

Option A: Using .env file (recommended)

cp .env.example .env

# Edit .env and configure:

# - Add OPENAI_API_KEY (required)

Option B: Using environment variables

export OPENAI_API_KEY="your-openai-api-key"

Option C: Using command line arguments (See the TUI for input fields)
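The lookup behind Options A and B can be sketched roughly as follows. This is an illustration, not LLM-ify's actual loader: the helper names `read_dotenv` and `find_api_key` are hypothetical, and the precedence (environment variable first, then `.env`) is an assumption.

```python
import os
from pathlib import Path


def read_dotenv(path):
    """Parse simple KEY=VALUE lines, skipping blanks and # comments."""
    values = {}
    p = Path(path)
    if not p.is_file():
        return values
    for raw in p.read_text(encoding="utf-8").splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Drop surrounding quotes such as OPENAI_API_KEY="..."
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def find_api_key(name="OPENAI_API_KEY", dotenv_path=".env", environ=None):
    """Return the key from the environment, else from .env, else None.

    Assumption: the environment variable wins over the .env file.
    """
    environ = os.environ if environ is None else environ
    return environ.get(name) or read_dotenv(dotenv_path).get(name)
```

Calling `find_api_key()` with no arguments checks the real process environment and a `.env` file in the current directory.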

LLM-ify can also use OpenRouter. Set the key and choose the provider in the TUI settings:

OPENROUTER_API_KEY="your-openrouter-api-key"

LLM-ify can use a local Ollama server. Select ollama in the TUI and set the model name (for example llama3.1:8b). Ollama runs at http://localhost:11434/v1 by default.
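As a rough sketch of how the three providers differ, the table below maps each one to an OpenAI-compatible base URL and the environment variable that holds its key. The `PROVIDERS` table and `resolve_provider` are hypothetical names for illustration; the OpenAI and OpenRouter URLs are those services' public endpoints, not values taken from LLM-ify's source.

```python
# Hypothetical provider table; LLM-ify's internal settings may differ.
PROVIDERS = {
    "openai": {
        "base_url": "https://api.openai.com/v1",
        "key_env": "OPENAI_API_KEY",
    },
    "openrouter": {
        "base_url": "https://openrouter.ai/api/v1",
        "key_env": "OPENROUTER_API_KEY",
    },
    # A local Ollama server needs no real key.
    "ollama": {
        "base_url": "http://localhost:11434/v1",
        "key_env": None,
    },
}


def resolve_provider(name, environ):
    """Return {'base_url', 'api_key'} for a provider name."""
    try:
        spec = PROVIDERS[name]
    except KeyError:
        raise ValueError(f"unknown provider: {name!r}") from None
    if spec["key_env"] is None:
        # OpenAI-style clients expect a non-empty key; Ollama ignores it.
        api_key = "ollama"
    else:
        api_key = environ.get(spec["key_env"])
        if not api_key:
            raise RuntimeError(f"{spec['key_env']} is not set")
    return {"base_url": spec["base_url"], "api_key": api_key}
```

Passing `os.environ` as `environ` resolves keys from the real environment; a plain dict works for testing.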

Launch the terminal UI:

python main.py

Enter a URL, choose a mode (full website or single page), and run. Settings are saved in config.json automatically.

Choose the provider in Settings, then set the model name for that provider:

- OpenAI: gpt-4.1-nano

- OpenRouter: openai/gpt-4.1-nano

- Ollama: a local model name (for example llama3.1:8b)

# https://example.com llms.txt

- [Page Title](https://example.com/page1): Brief description of the page content here

- [Another Page](https://example.com/page2): Another concise description of page content
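The index entries above follow a regular `- [title](url): description` shape, so they are easy to read back programmatically. A minimal sketch, assuming every entry sits on one line in exactly that shape (`parse_llms_index` is a hypothetical helper, not part of LLM-ify):

```python
import re

# One index entry: "- [Title](https://...): description"
ENTRY = re.compile(
    r"^- \[(?P<title>.+?)\]\((?P<url>\S+?)\): (?P<description>.*)$"
)


def parse_llms_index(text):
    """Return a list of {'title', 'url', 'description'} dicts."""
    entries = []
    for line in text.splitlines():
        match = ENTRY.match(line.strip())
        if match:
            entries.append(match.groupdict())
    return entries
```

Lines that do not match, such as the `# https://example.com llms.txt` header or blank lines, are skipped.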

# https://example.com llms-full.txt

<|llm-ify-page-1-lllmstxt|>

## Page Title

Full markdown content of the page...

<|llm-ify-page-2-lllmstxt|>

## Another Page

Full markdown content of another page...
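Because each page in the full corpus is introduced by a `<|llm-ify-page-N-lllmstxt|>` marker, the file can be split back into pages with one regular expression. A minimal sketch, assuming the marker format shown in the example above (`split_full_corpus` is a hypothetical helper):

```python
import re

# Page delimiter as it appears in the example output above.
PAGE_MARKER = re.compile(r"<\|llm-ify-page-(\d+)-lllmstxt\|>")


def split_full_corpus(text):
    """Map page number -> page markdown, ignoring the file header."""
    # With one capture group, split() yields:
    # [header, "1", body1, "2", body2, ...]
    parts = PAGE_MARKER.split(text)
    pages = {}
    for i in range(1, len(parts) - 1, 2):
        pages[int(parts[i])] = parts[i + 1].strip()
    return pages
```

This keeps each page's markdown intact, so a single page can be handed to an LLM without loading the whole corpus.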

Output files are written under collected-texts/llmify-<domain>/ by default. Example:

collected-texts/llmify-docs.example.com/GLOSSARY.md

collected-texts/llmify-docs.example.com/docs/<page-title>.md

collected-texts/llmify-docs.example.com/llms-files/llms.md

collected-texts/llmify-docs.example.com/llms-files/llms-full.md

collected-texts/llmify-docs.example.com/seeds.json
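To locate these files from a script, the layout above can be derived from the crawled URL. This is a sketch under assumptions: `<domain>` is taken to be the URL's hostname, and `output_root` and `expected_files` are hypothetical helpers. How LLM-ify handles ports, `www.` prefixes, or page-title file names is not covered here.

```python
from pathlib import PurePosixPath
from urllib.parse import urlparse


def output_root(url, base="collected-texts"):
    """Return the default output directory for a crawled URL.

    Assumption: <domain> is the URL's hostname.
    """
    domain = urlparse(url).hostname
    if not domain:
        raise ValueError(f"URL has no hostname: {url!r}")
    return PurePosixPath(base) / f"llmify-{domain}"


def expected_files(url):
    """List the fixed-name files from the example layout above."""
    root = output_root(url)
    return [
        root / "GLOSSARY.md",
        root / "llms-files" / "llms.md",
        root / "llms-files" / "llms-full.md",
        root / "seeds.json",
    ]
```

Per-page files under `docs/` are omitted because their names depend on page titles.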

See INSTRUCTIONS.md for guidance on how LLM agents should navigate the generated documentation and glossary.

See CONTRIBUTING.md for setup, workflow, and PR guidelines.

See CHANGELOG.md for release notes.

PolyForm Noncommercial - see LICENSE for details.

FAQs

LLM-ify: create LLM-ready markdown and text from websites.

We found that llmify-cli demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 1 open source maintainer collaborating on the project.
