Multi-Agent Orchestrator

Flexible and powerful framework for managing multiple AI agents and handling complex conversations.

🔖 Features

- 🧠 Intelligent intent classification — Dynamically route queries to the most suitable agent based on context and content.

- 🌊 Flexible agent responses — Support for both streaming and non-streaming responses from different agents.

- 📚 Context management — Maintain and utilize conversation context across multiple agents for coherent interactions.

- 🔧 Extensible architecture — Easily integrate new agents or customize existing ones to fit your specific needs.

- 🌐 Universal deployment — Run anywhere - from AWS Lambda to your local environment or any cloud platform.

- 📦 Pre-built agents and classifiers — A variety of ready-to-use agents and multiple classifier implementations available.

- 🔤 TypeScript support — Native TypeScript implementation available.

What's the Multi-Agent Orchestrator ❓

The Multi-Agent Orchestrator is a flexible framework for managing multiple AI agents and handling complex conversations. It intelligently routes queries and maintains context across interactions.

The system offers pre-built components for quick deployment, while also allowing easy integration of custom agents and conversation message storage solutions.

This adaptability makes it suitable for a wide range of applications, from simple chatbots to sophisticated AI systems, accommodating diverse requirements and scaling efficiently.
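To make the idea of a pluggable conversation store more concrete, here is a minimal in-memory sketch. The class and method names (`InMemoryStore`, `save`, `fetch`) are hypothetical and are not the framework's storage interface; the sketch only illustrates keeping history per user, session, and agent, with a bounded number of messages.

```python
from collections import defaultdict


class InMemoryStore:
    """Hypothetical conversation store keyed by (user, session, agent)."""

    def __init__(self, max_messages: int = 10) -> None:
        self.max_messages = max_messages
        self._chats: dict[tuple[str, str, str], list[dict[str, str]]] = defaultdict(list)

    def save(self, user_id: str, session_id: str, agent_id: str,
             role: str, text: str) -> None:
        chat = self._chats[(user_id, session_id, agent_id)]
        chat.append({"role": role, "text": text})
        # Drop the oldest messages so stored context stays bounded.
        if len(chat) > self.max_messages:
            del chat[:len(chat) - self.max_messages]

    def fetch(self, user_id: str, session_id: str, agent_id: str) -> list[dict[str, str]]:
        # Return a copy so callers cannot mutate the stored history.
        return list(self._chats.get((user_id, session_id, agent_id), []))


store = InMemoryStore(max_messages=2)
store.save("user123", "session456", "tech", "user", "What is AWS Lambda?")
store.save("user123", "session456", "tech", "assistant", "A serverless compute service.")
store.save("user123", "session456", "tech", "user", "How is it billed?")
print(len(store.fetch("user123", "session456", "tech")))  # 2
```

A persistent store would presumably expose the same two operations against a database instead of a dictionary.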

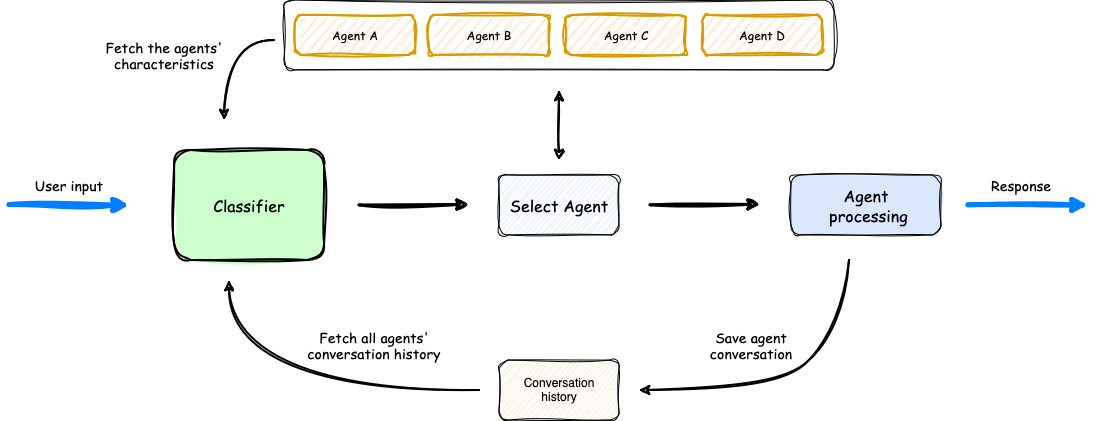

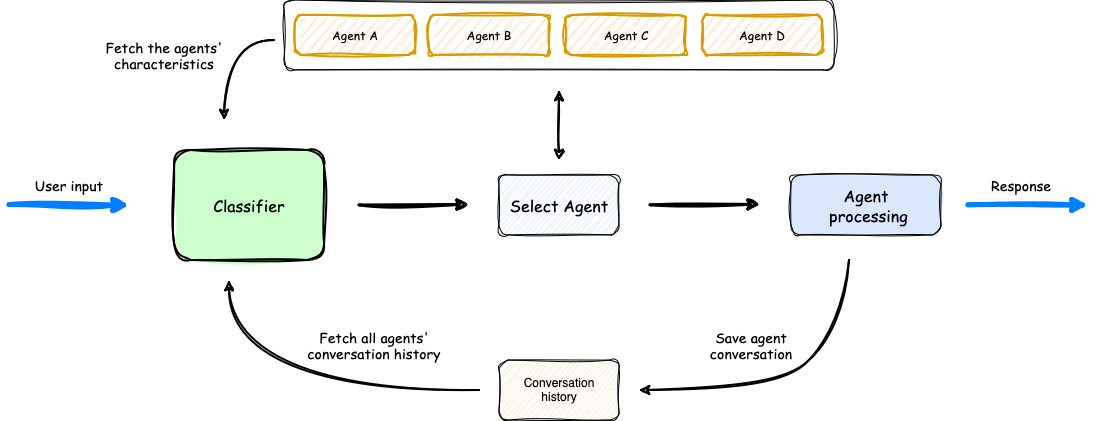

🏗️ High-level architecture flow diagram

- The process begins with user input, which is analyzed by a Classifier.

- The Classifier leverages both Agents' Characteristics and Agents' Conversation history to select the most appropriate agent for the task.

- Once an agent is selected, it processes the user input.

- The orchestrator then saves the conversation, updating the Agents' Conversation history, before delivering the response back to the user.
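The four steps above can be sketched in plain Python. This is a toy illustration, not the library's implementation: `ToyAgent`, `ToyOrchestrator`, `classify`, and `route` are hypothetical names, and the keyword-overlap rule merely stands in for whatever classifier the orchestrator is configured with.

```python
from dataclasses import dataclass, field


@dataclass
class ToyAgent:
    """Hypothetical stand-in for an agent: a name plus descriptive keywords."""
    name: str
    keywords: set[str]

    def process(self, user_input: str) -> str:
        return f"[{self.name}] handling: {user_input}"


@dataclass
class ToyOrchestrator:
    agents: list[ToyAgent]
    # Conversation history as (role, agent name, text) tuples.
    history: list[tuple[str, str, str]] = field(default_factory=list)

    def classify(self, user_input: str) -> ToyAgent:
        """Pick the agent whose keywords overlap the input the most.

        With no overlap, fall back to the agent that answered last
        (taken from the history), or to the first agent if there is none.
        """
        words = set(user_input.lower().replace("?", "").split())
        best = max(self.agents, key=lambda agent: len(agent.keywords & words))
        if best.keywords & words:
            return best
        if self.history:
            last_name = self.history[-1][1]
            return next(a for a in self.agents if a.name == last_name)
        return self.agents[0]

    def route(self, user_input: str) -> str:
        agent = self.classify(user_input)        # classifier selects an agent
        answer = agent.process(user_input)       # selected agent processes the input
        # Save both sides of the exchange before returning the response.
        self.history.append(("user", agent.name, user_input))
        self.history.append(("assistant", agent.name, answer))
        return answer


orchestrator = ToyOrchestrator([
    ToyAgent("Tech", {"aws", "lambda", "software"}),
    ToyAgent("Health", {"sleep", "diet", "health"}),
])
print(orchestrator.route("What is AWS Lambda?"))  # [Tech] handling: What is AWS Lambda?
print(orchestrator.route("Tell me more"))         # [Tech] handling: Tell me more
```

The second call contains no keyword at all, so the history decides; this mirrors how brief follow-up inputs can still reach the right agent.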

💬 Demo App

To quickly get a feel for the Multi-Agent Orchestrator, we've provided a Demo App with a few basic agents. This interactive demo showcases the orchestrator's capabilities in a user-friendly interface. To learn more about setting up and running the demo app, please refer to our Demo App section.

In the screen recording below, we demonstrate an extended version of the demo app that uses 6 specialized agents:

- Travel Agent: Powered by an Amazon Lex Bot

- Weather Agent: Utilizes a Bedrock LLM Agent with a tool to query the open-meteo API

- Restaurant Agent: Implemented as an Amazon Bedrock Agent

- Math Agent: Utilizes a Bedrock LLM Agent with two tools for executing mathematical operations

- Tech Agent: A Bedrock LLM Agent designed to answer questions on technical topics

- Health Agent: A Bedrock LLM Agent focused on addressing health-related queries

Watch as the system seamlessly switches context between diverse topics, from booking flights to checking weather, solving math problems, and providing health information.

Notice how the appropriate agent is selected for each query, maintaining coherence even with brief follow-up inputs.

The demo highlights the system's ability to handle complex, multi-turn conversations while preserving context and leveraging specialized agents across various domains.

Click on the image below to see a screen recording of the demo app on the GitHub repository of the project:

🚀 Getting Started

Check out our documentation for comprehensive guides on setting up and using the Multi-Agent Orchestrator!

Core Installation

python -m venv venv

source venv/bin/activate

pip install "multi-agent-orchestrator[aws]"

Default Usage

Here's a Python example demonstrating the use of the Multi-Agent Orchestrator with two Bedrock LLM Agents, a Tech Agent and a Health Agent:

import sys
import asyncio

from multi_agent_orchestrator.orchestrator import MultiAgentOrchestrator
from multi_agent_orchestrator.agents import (
    BedrockLLMAgent,
    LexBotAgent,
    BedrockLLMAgentOptions,
    LexBotAgentOptions,
    AgentStreamResponse,
)

orchestrator = MultiAgentOrchestrator()

# Tech Agent: streams its answers and pins a specific Bedrock model.
tech_agent = BedrockLLMAgent(BedrockLLMAgentOptions(
    name="Tech Agent",
    streaming=True,
    description=(
        "Specializes in technology areas including software development, hardware, AI, "
        "cybersecurity, blockchain, cloud computing, emerging tech innovations, and pricing/costs "
        "related to technology products and services."
    ),
    model_id="anthropic.claude-3-sonnet-20240229-v1:0",
))
orchestrator.add_agent(tech_agent)

# Health Agent: streams its answers; no model_id is passed here.
health_agent = BedrockLLMAgent(BedrockLLMAgentOptions(
    name="Health Agent",
    streaming=True,
    description="Specializes in health and well being",
))
orchestrator.add_agent(health_agent)


async def main():
    # Arguments: user input, user ID, session ID, additional parameters, True.
    response = await orchestrator.route_request(
        "What is AWS Lambda?",
        'user123',
        'session456',
        {},
        True
    )

    if response.streaming:
        print("\n** RESPONSE STREAMING ** \n")
        # Print the metadata first, then the streamed content.
        print(f"> Agent ID: {response.metadata.agent_id}")
        print(f"> Agent Name: {response.metadata.agent_name}")
        print(f"> User Input: {response.metadata.user_input}")
        print(f"> User ID: {response.metadata.user_id}")
        print(f"> Session ID: {response.metadata.session_id}")
        print(f"> Additional Parameters: {response.metadata.additional_params}")
        print("\n> Response: ")

        # Iterate over the stream once, printing each chunk as it arrives.
        async for chunk in response.output:
            if isinstance(chunk, AgentStreamResponse):
                print(chunk.text, end='', flush=True)
            else:
                print(f"Received unexpected chunk type: {type(chunk)}", file=sys.stderr)
    else:
        print("\n** RESPONSE ** \n")
        print(f"> Agent ID: {response.metadata.agent_id}")
        print(f"> Agent Name: {response.metadata.agent_name}")
        print(f"> User Input: {response.metadata.user_input}")
        print(f"> User ID: {response.metadata.user_id}")
        print(f"> Session ID: {response.metadata.session_id}")
        print(f"> Additional Parameters: {response.metadata.additional_params}")
        print(f"\n> Response: {response.output.content}")


if __name__ == "__main__":
    asyncio.run(main())

The example above demonstrates how to use the Multi-Agent Orchestrator with two Bedrock LLM Agents with Converse API support. A Lex Bot Agent can be registered alongside them, which shows the flexibility of the system in integrating various AI services.

This example showcases:

- The use of Bedrock LLM Agents with Converse API support, allowing for multi-turn conversations.

- Integration of a Lex Bot Agent for specialized tasks (for example, travel-related queries) follows the same add_agent pattern, although the code above does not configure one.

- The orchestrator's ability to route requests to the most appropriate agent based on the input.

- Handling of both streaming and non-streaming responses from different types of agents.
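The streaming and non-streaming branches can be exercised without AWS credentials by substituting stand-in objects. In the sketch below, `FakeResponse`, `fake_stream`, and `collect_text` are hypothetical helpers that only mimic the `streaming` and `output` attributes used in the example above; they are not part of the library, and the chunks here are plain strings rather than `AgentStreamResponse` objects.

```python
import asyncio
from dataclasses import dataclass
from typing import Any, AsyncIterator


@dataclass
class FakeResponse:
    """Hypothetical stand-in exposing only `streaming` and `output`."""
    streaming: bool
    output: Any


async def fake_stream(parts: list[str]) -> AsyncIterator[str]:
    # Async generator that yields text chunks, like a streamed reply.
    for part in parts:
        await asyncio.sleep(0)  # hand control back to the event loop
        yield part


async def collect_text(response: FakeResponse) -> str:
    """Return the full text whether or not the response is streamed."""
    if response.streaming:
        chunks = [chunk async for chunk in response.output]
        return "".join(chunks)
    return str(response.output)


async def demo() -> tuple[str, str]:
    streamed = FakeResponse(True, fake_stream(["AWS ", "Lambda ", "runs code."]))
    plain = FakeResponse(False, "AWS Lambda runs code.")
    return await collect_text(streamed), await collect_text(plain)


if __name__ == "__main__":
    print(asyncio.run(demo()))
```

Funnelling both cases through one helper keeps the calling code identical regardless of which agent answered.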

Working with Anthropic or OpenAI

If you want to use Anthropic or OpenAI for classifier and/or agents, make sure to install the multi-agent-orchestrator with the relevant extra feature.

pip install "multi-agent-orchestrator[anthropic]"

pip install "multi-agent-orchestrator[openai]"
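Before wiring up an Anthropic or OpenAI classifier or agent, it can help to confirm that the optional dependency is importable. The sketch below uses only the standard library and assumes the extras pull in the vendors' SDKs under the import names `anthropic` and `openai`; `missing_extras` is a hypothetical helper, not part of the orchestrator.

```python
from importlib.util import find_spec


def missing_extras(modules: tuple[str, ...] = ("anthropic", "openai")) -> list[str]:
    """Return the names of optional top-level modules that cannot be imported."""
    return [name for name in modules if find_spec(name) is None]


if __name__ == "__main__":
    for name in missing_extras():
        print(f'{name} not found; try: pip install "multi-agent-orchestrator[{name}]"')
```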

Full package installation

For a complete installation (including Anthropic and OpenAI):

pip install "multi-agent-orchestrator[all]"

Building Locally

This guide explains how to build and install the multi-agent-orchestrator package from source code.

Prerequisites

- Python 3.11

- pip package manager

- Git (to clone the repository)

Building the Package

- Navigate to the Python package directory:

cd python

- Install the build dependencies:

python -m pip install build

- Build the package:

python -m build

This process will create distribution files in the python/dist directory, including a wheel (.whl) file.

Installation

- Locate the current version number in setup.cfg.

- Install the built package using pip, replacing <VERSION> with the version number from setup.cfg:

pip install ./dist/multi_agent_orchestrator-<VERSION>-py3-none-any.whl

Example

If the version in setup.cfg is 1.2.3, the installation command would be:

pip install ./dist/multi_agent_orchestrator-1.2.3-py3-none-any.whl
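The version lookup and wheel path can also be derived programmatically. This sketch assumes setup.cfg keeps a literal version under a `[metadata]` section (it would not resolve an `attr:` or `file:` directive); `wheel_path` is a hypothetical helper name, and the demo writes a throwaway setup.cfg so it runs anywhere.

```python
import configparser
import tempfile
from pathlib import Path


def wheel_path(setup_cfg: Path, dist_dir: Path) -> Path:
    """Build the expected wheel path from the version in setup.cfg."""
    parser = configparser.ConfigParser()
    with setup_cfg.open(encoding="utf-8") as handle:
        parser.read_file(handle)
    # Assumes a literal value such as "version = 1.2.3" under [metadata].
    version = parser["metadata"]["version"].strip()
    return dist_dir / f"multi_agent_orchestrator-{version}-py3-none-any.whl"


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        cfg = Path(tmp) / "setup.cfg"
        cfg.write_text("[metadata]\nversion = 1.2.3\n", encoding="utf-8")
        print(wheel_path(cfg, Path("dist")).name)
```

In a real checkout, the call would be `wheel_path(Path("setup.cfg"), Path("dist"))`, run from the python directory after the build step.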

Troubleshooting

- If you encounter permission errors during installation, you may need to use sudo or activate a virtual environment.

- Make sure you're in the correct directory when running the build and install commands.

- Clean the dist directory before rebuilding if you encounter issues: rm -rf python/dist/*

🤝 Contributing

We welcome contributions! Please see our Contributing Guide for more details.

📄 LICENSE

This project is licensed under the Apache 2.0 license; see the LICENSE file for details.

📄 Font License

This project uses the JetBrainsMono NF font, licensed under the SIL Open Font License 1.1.

For full license details, see FONT-LICENSE.md.