Research

/Security News

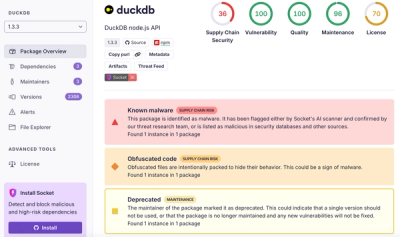

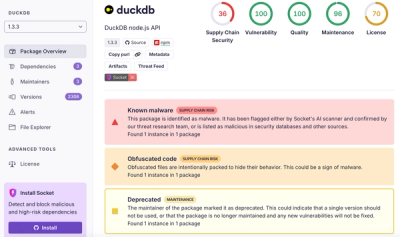

DuckDB npm Account Compromised in Continuing Supply Chain Attack

Ongoing npm supply chain attack spreads to DuckDB: multiple packages compromised with the same wallet-drainer malware.

multilabel-eval-metrics

This toolkit provides a range of evaluation metrics for measuring the performance of a multi-label classifier.

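Evaluation metrics for multi-label classification fall broadly into two categories: example-based metrics, which score each sample's predicted label set and then average over samples, and label-based metrics, which score each label separately and then average over labels.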
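To illustrate the distinction, here is a minimal sketch that assumes the two categories are the usual example-based and label-based families. The function names `example_based_jaccard` and `label_based_macro_f1` and the toy arrays are hypothetical and are not part of the toolkit's API; they only show which axis each family aggregates over.

```python
import numpy as np


def example_based_jaccard(y_true, y_pred):
    """Example-based: score each sample (row), then average over samples."""
    inter = np.logical_and(y_true, y_pred).sum(axis=1)
    union = np.logical_or(y_true, y_pred).sum(axis=1)
    # Assumed convention: a sample with no true and no predicted labels scores 1.
    scores = np.where(union == 0, 1.0, inter / np.maximum(union, 1))
    return float(scores.mean())


def label_based_macro_f1(y_true, y_pred):
    """Label-based: score each label (column), then average over labels."""
    tp = np.logical_and(y_true == 1, y_pred == 1).sum(axis=0)
    fp = np.logical_and(y_true == 0, y_pred == 1).sum(axis=0)
    fn = np.logical_and(y_true == 1, y_pred == 0).sum(axis=0)
    denom = 2 * tp + fp + fn
    # Assumed convention: a label with no true and no predicted positives scores 0.
    f1 = np.where(denom == 0, 0.0, 2 * tp / np.maximum(denom, 1))
    return float(f1.mean())


# Toy indicator matrices: rows are samples, columns are labels.
y_true = np.array([[1, 0, 1], [0, 1, 0], [1, 1, 0]])
y_pred = np.array([[1, 0, 0], [0, 1, 0], [1, 0, 1]])

print(f"example-based Jaccard: {example_based_jaccard(y_true, y_pred):.3f}")  # 0.611
print(f"label-based macro-F1:  {label_based_macro_f1(y_true, y_pred):.3f}")   # 0.556
```

The two numbers differ because the first averages per-sample overlaps, while the second averages per-label F1 scores, so a label that is never predicted correctly pulls the macro average down sharply.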

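Example usage:

    import numpy as np

    from multilabel_eval_metrics import MultiLabelMetrics

    if __name__ == "__main__":
        # Binary indicator matrices: rows are samples, columns are labels.
        y_true = np.array([[0, 1], [1, 1], [1, 1], [0, 1], [1, 0]])
        y_pred = np.array([[1, 1], [1, 0], [1, 1], [0, 1], [1, 0]])

        print(y_true)
        print(y_pred)

        # Compute the metric summary for these predictions.
        result = MultiLabelMetrics(y_true, y_pred).get_metric_summary(show=True)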
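As a cross-check for the example arrays above, the following sketch computes three widely used metrics by hand with NumPy only. It uses the textbook definitions of subset accuracy, Hamming loss, and micro-averaged F1; whether the toolkit's summary reports these exact metrics, or uses the same names, is an assumption.

```python
import numpy as np

# Same arrays as in the usage example.
y_true = np.array([[0, 1], [1, 1], [1, 1], [0, 1], [1, 0]])
y_pred = np.array([[1, 1], [1, 0], [1, 1], [0, 1], [1, 0]])

# Subset accuracy: fraction of samples whose full label set is predicted exactly.
subset_accuracy = float(np.all(y_true == y_pred, axis=1).mean())

# Hamming loss: fraction of individual (sample, label) entries that are wrong.
hamming_loss = float((y_true != y_pred).mean())

# Micro-averaged F1: pool true/false positives and false negatives over all labels.
tp = int(np.sum((y_true == 1) & (y_pred == 1)))
fp = int(np.sum((y_true == 0) & (y_pred == 1)))
fn = int(np.sum((y_true == 1) & (y_pred == 0)))
micro_f1 = 2 * tp / (2 * tp + fp + fn)

print(f"subset accuracy: {subset_accuracy:.3f}")  # 0.600
print(f"Hamming loss:    {hamming_loss:.3f}")     # 0.200
print(f"micro-F1:        {micro_f1:.3f}")         # 0.857
```

Three of the five samples match exactly, and two of the ten label entries are wrong, which is where the 0.6 and 0.2 come from.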

The multilabel-eval-metrics toolkit is developed by Donghua Chen and released under the MIT License.

FAQs

Quickly evaluate multi-label classifiers using a variety of metrics.

We found that multilabel-eval-metrics demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 1 open source maintainer collaborating on the project.

Did you know?

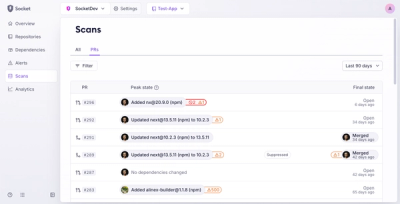

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Research

/Security News

Ongoing npm supply chain attack spreads to DuckDB: multiple packages compromised with the same wallet-drainer malware.

Security News

The MCP Steering Committee has launched the official MCP Registry in preview, a central hub for discovering and publishing MCP servers.

Product

Socket’s new Pull Request Stories give security teams clear visibility into dependency risks and outcomes across scanned pull requests.