
pipelinewise-target-s3-csv


Singer.io target for writing CSV files and upload to S3 - PipelineWise compatible

Singer target that uploads data to S3 in CSV format, following the Singer spec.

This is a PipelineWise compatible target connector.

The recommended way to run this target is from PipelineWise. When running it from PipelineWise you don't need to configure this target with JSON files, and most things are automated. Please check the related documentation at Target S3 CSV.

If you want to run this Singer Target independently please read further.

First, make sure Python >=3.7 is installed on your system or follow these installation instructions for Mac or Ubuntu.

It's recommended to use a virtualenv:

python3 -m venv venv

. venv/bin/activate

pip install pipelinewise-target-s3-csv

or

make venv

Run it like any other target that follows the Singer specification:

some-singer-tap | target-s3-csv --config [config.json]

It reads incoming messages from STDIN and uses the properties in config.json to upload data to S3.
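To make the STDIN contract concrete, here is a minimal sketch of a stand-in tap that prints the three Singer message types (SCHEMA, RECORD, STATE) as JSON lines. The stream name `users`, its fields, and the state value are hypothetical and only serve as illustration; a real tap would read them from a source system.

```python
import json
import sys


def emit(message, out=sys.stdout):
    """Write one Singer message as a single JSON line."""
    out.write(json.dumps(message) + "\n")


def main(out=sys.stdout):
    # SCHEMA describes a stream before any of its records are sent.
    emit({
        "type": "SCHEMA",
        "stream": "users",  # hypothetical stream name
        "schema": {
            "type": "object",
            "properties": {
                "id": {"type": "integer"},
                "name": {"type": "string"},
            },
        },
        "key_properties": ["id"],
    }, out)

    # RECORD messages carry the rows that the target writes out.
    for row in [{"id": 1, "name": "Ada"}, {"id": 2, "name": "Grace"}]:
        emit({"type": "RECORD", "stream": "users", "record": row}, out)

    # STATE is a bookmark the tap can use to resume later.
    emit({"type": "STATE", "value": {"users": 2}}, out)


if __name__ == "__main__":
    main()
```

Saved under a hypothetical name such as fake_tap.py, it could be piped into the target with `python fake_tap.py | target-s3-csv --config config.json`.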

Note: To avoid version conflicts, run taps and targets in separate virtual environments.

Running the target connector requires a config.json file. An example with the minimal settings:

{

"s3_bucket": "my_bucket"

}
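If the config file is generated by a script rather than written by hand, a small helper along these lines may be convenient. This is only a sketch: `write_config` is a hypothetical helper, not part of the package, and the only rule it enforces is the one stated in this document, that `s3_bucket` is required.

```python
import json


def write_config(path, s3_bucket, **optional):
    """Write a config.json for the target; s3_bucket is the only required key."""
    if not s3_bucket:
        raise ValueError("s3_bucket is required")
    config = {"s3_bucket": s3_bucket}
    # Leave out unset options so the target falls back to its defaults.
    config.update({k: v for k, v in optional.items() if v is not None})
    with open(path, "w", encoding="utf-8") as fh:
        json.dump(config, fh, indent=2)
    return config


if __name__ == "__main__":
    write_config("config.json", "my_bucket", compression="gzip", delimiter=",")
```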

Profile-based authentication is used by default, with the default profile. To use another profile, set the aws_profile parameter in config.json or set the AWS_PROFILE environment variable.

For non-profile-based authentication, set the aws_access_key_id, aws_secret_access_key and, optionally, aws_session_token parameters in config.json. Alternatively, you can define them outside config.json by setting the AWS_ACCESS_KEY_ID, AWS_SECRET_ACCESS_KEY and AWS_SESSION_TOKEN environment variables.
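The fallback order described above (config.json first, then the matching environment variable) can be sketched as follows. `resolve_aws_settings` is a hypothetical function written for illustration; it mirrors the documented behaviour and is not the target's actual implementation.

```python
import os

# Config key -> environment variable used when the key is not set.
ENV_FALLBACKS = {
    "aws_access_key_id": "AWS_ACCESS_KEY_ID",
    "aws_secret_access_key": "AWS_SECRET_ACCESS_KEY",
    "aws_session_token": "AWS_SESSION_TOKEN",
    "aws_profile": "AWS_PROFILE",
}


def resolve_aws_settings(config, env=None):
    """Return each AWS setting from config, else from the environment, else None."""
    env = os.environ if env is None else env
    return {
        key: config.get(key) or env.get(var)
        for key, var in ENV_FALLBACKS.items()
    }


if __name__ == "__main__":
    print(resolve_aws_settings({"aws_profile": "dev"}))
```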

Full list of options in config.json:

| Property | Type | Required? | Description |
|---|---|---|---|
| aws_access_key_id | String | No | S3 Access Key Id. If not provided, the AWS_ACCESS_KEY_ID environment variable will be used. |
| aws_secret_access_key | String | No | S3 Secret Access Key. If not provided, the AWS_SECRET_ACCESS_KEY environment variable will be used. |
| aws_session_token | String | No | AWS Session token. If not provided, the AWS_SESSION_TOKEN environment variable will be used. |
| aws_endpoint_url | String | No | AWS endpoint URL. |
| aws_profile | String | No | AWS profile name for profile-based authentication. If not provided, the AWS_PROFILE environment variable will be used. |
| s3_bucket | String | Yes | S3 Bucket name |
| s3_key_prefix | String | No | (Default: None) A static prefix before the generated S3 key names. Using prefixes you can |
| delimiter | String | No | (Default: ',') A one-character string used to separate fields. |
| quotechar | String | No | (Default: '"') A one-character string used to quote fields containing special characters, such as the delimiter or quotechar, or which contain new-line characters. |
| add_metadata_columns | Boolean | No | (Default: False) Metadata columns add extra row-level information about data ingestion (e.g. when the row was read from the source, when it was inserted or deleted in the target). Metadata columns are created automatically by adding extra columns with the prefix _SDC_. The column names follow the Stitch naming conventions documented at https://www.stitchdata.com/docs/data-structure/integration-schemas#sdc-columns. Enabling metadata columns flags deleted rows by setting the _SDC_DELETED_AT metadata column. Without the add_metadata_columns option, rows deleted in Singer taps will not be recognisable in the target. |
| encryption_type | String | No | (Default: 'none') The type of encryption to use. Currently supported options are 'none' and 'KMS'. |
| encryption_key | String | No | A reference to the encryption key to use for data encryption. For KMS encryption, this should be the KMS encryption key ID (e.g. '1234abcd-1234-1234-1234-1234abcd1234'). This field is ignored if 'encryption_type' is none or blank. |
| compression | String | No | The type of compression to apply before uploading. Supported options are none (default) and gzip. For gzipped files, the file extension is automatically changed to .csv.gz for all files. |
| naming_convention | String | No | (Default: None) Custom naming convention of the S3 key. Replaces the tokens date, stream, and timestamp with the appropriate values. Supports "folders" in S3 keys, e.g. folder/folder2/{stream}/export_date={date}/{timestamp}.csv. Honors the s3_key_prefix, if set, by prepending it to the "filename". E.g. naming_convention = folder1/my_file.csv and s3_key_prefix = prefix_ results in folder1/prefix_my_file.csv |
| temp_dir | String | No | (Default: platform-dependent) Directory of temporary CSV files with RECORD messages. |
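The way naming_convention and s3_key_prefix combine can be sketched as below. This is an illustration under stated assumptions, not the target's code: `build_s3_key` is a hypothetical function, and the date and timestamp formats (`%Y%m%d` and `%Y%m%dT%H%M%S`) are assumed, since the table does not specify them.

```python
import posixpath
from datetime import datetime


def build_s3_key(naming_convention, stream, s3_key_prefix="", now=None):
    """Expand {stream}, {date} and {timestamp}, then prefix the filename part."""
    now = now or datetime.now()
    key = (
        naming_convention
        .replace("{stream}", stream)
        .replace("{date}", now.strftime("%Y%m%d"))  # assumed format
        .replace("{timestamp}", now.strftime("%Y%m%dT%H%M%S"))  # assumed format
    )
    # The prefix goes in front of the filename, not the whole key,
    # so any "folders" in the naming convention are preserved.
    folder, filename = posixpath.split(key)
    return posixpath.join(folder, s3_key_prefix + filename)


if __name__ == "__main__":
    print(build_s3_key("folder1/my_file.csv", "users", "prefix_"))
```

With the values from the table, `build_s3_key("folder1/my_file.csv", "users", "prefix_")` returns `folder1/prefix_my_file.csv`.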

To run the tests, first define the environment variables they require:

export TARGET_S3_CSV_ACCESS_KEY_ID=<s3-access-key-id>

export TARGET_S3_CSV_SECRET_ACCESS_KEY=<s3-secret-access-key>

export TARGET_S3_CSV_BUCKET=<s3-bucket>

export TARGET_S3_CSV_KEY_PREFIX=<s3-key-prefix>

make venv

make unit_test

make integration_test

To run pylint:

make venv pylint

Apache License Version 2.0

See LICENSE for the full text.

FAQs

Singer.io target for writing CSV files and upload to S3 - PipelineWise compatible

We found that pipelinewise-target-s3-csv demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 4 open source maintainers collaborating on the project.

Did you know?

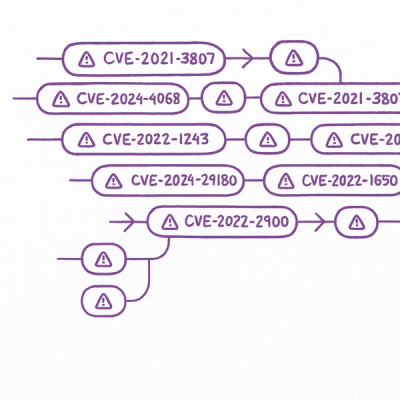

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Security News

The Latio podcast explores how static and runtime reachability help teams prioritize exploitable vulnerabilities and streamline AppSec workflows.

Security News

The latest Opengrep releases add Apex scanning, precision rule tuning, and performance gains for open source static code analysis.

Security News

npm now supports Trusted Publishing with OIDC, enabling secure package publishing directly from CI/CD workflows without relying on long-lived tokens.