
pytest-argus-reporter


A plugin to send pytest test results to Argus, with extra context data

branch or last commit and more

You can install "pytest-argus-reporter" via pip from PyPI:

pip install pytest-argus-reporter

pytest --argus-post-reports --argus-base-url http://argus-server.io:8080 --argus-api-key 1234 --extra-headers '{"X-My-Header": "my-value"}'

from pytest_argus_reporter import ArgusReporter

def pytest_plugin_registered(plugin, manager):
    if isinstance(plugin, ArgusReporter):
        # TODO: get credentials in more secure fashion programmatically, maybe AWS secrets or the likes
        # or put them in plain-text in the code... what can ever go wrong...
        plugin.base_url = "https://argus.scylladb.com"
        plugin.api_key = "123456"  # get it from your user page on Argus
        plugin.test_type = "dtest"
        plugin.extra_headers = {}

See the pytest docs for more about how to configure pytest using .ini files.
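The TODO in the plugin-registration hook above asks for a safer way to supply credentials. One possible approach, sketched here under assumptions, is to read them from environment variables. The variable names `ARGUS_BASE_URL` and `ARGUS_API_KEY` and the helper `load_argus_settings` are hypothetical and not part of the plugin; only the `base_url` and `api_key` attributes come from the example above.

```python
import os


def load_argus_settings(environ=os.environ):
    """Collect Argus connection settings from environment variables.

    ARGUS_BASE_URL and ARGUS_API_KEY are hypothetical names chosen for
    this sketch; the plugin itself does not define them.
    """
    api_key = environ.get("ARGUS_API_KEY")
    if not api_key:
        # Fail loudly instead of silently posting with an empty key.
        raise RuntimeError("ARGUS_API_KEY is not set")
    return {
        "base_url": environ.get("ARGUS_BASE_URL", "https://argus.scylladb.com"),
        "api_key": api_key,
    }


def pytest_plugin_registered(plugin, manager):
    # Imported lazily so this module still imports when the plugin is absent.
    try:
        from pytest_argus_reporter import ArgusReporter
    except ImportError:
        return
    if isinstance(plugin, ArgusReporter):
        settings = load_argus_settings()
        plugin.base_url = settings["base_url"]
        plugin.api_key = settings["api_key"]
```

This keeps the key out of the repository; a CI system can inject the variables from its own secret store.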

In this example, I'll be able to build a dashboard for each version:

import pytest

@pytest.fixture(scope="session", autouse=True)
def report_formal_version_to_argus(request):
    """
    Append my own session-specific data, for example which version of the code under test is used
    """
    # TODO: programmatically set to the version of the code under test...
    my_data = {"formal_version": "1.0.0-rc2"}
    argus = request.config.pluginmanager.get_plugin("argus-reporter-runtime")
    argus.session_data.update(**my_data)
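The TODO in the fixture above leaves the version hard-coded. If the code under test is an installed Python distribution, a minimal sketch for looking the version up at runtime could use the standard library's `importlib.metadata`. The helper name `detect_formal_version` and the distribution name `my-service` are assumptions made for illustration.

```python
from importlib.metadata import PackageNotFoundError, version


def detect_formal_version(distribution, default="unknown"):
    """Return the installed version of `distribution`, or `default` if absent."""
    try:
        return version(distribution)
    except PackageNotFoundError:
        # The distribution is not installed in this environment.
        return default


# "my-service" is a placeholder for the distribution under test.
my_data = {"formal_version": detect_formal_version("my-service")}
```

The resulting `my_data` dictionary can then be passed to `session_data.update(**my_data)` exactly as in the fixture.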

import requests

def test_my_service_and_collect_timings(request, argus_reporter):
    response = requests.get("http://my-server.io/api/do_something")
    assert response.status_code == 200
    argus_reporter.append_test_data(request, {"do_something_response_time": response.elapsed.total_seconds()})
    # now, a dashboard showing response time by version should be quite easy
    # and yeah, it's not exactly a real usable metric, but it's just one example...

Or via the record_property built-in fixture (that is normally used to collect data into junit.xml reports):

import requests

def test_my_service_and_collect_timings(record_property):
    response = requests.get("http://my-server.io/api/do_something")
    assert response.status_code == 200
    record_property("do_something_response_time", response.elapsed.total_seconds())

One cool thing you can do once you have a history of the tests is to split them based on their actual runtime when passing. For long-running integration tests, this is priceless.

In this example, we split the run into slices of at most 4 minutes each. Any test without history information is assumed to take 60 seconds.

# pytest --collect-only --argus-splice --argus-max-splice-time=4 --argus-default-test-time=60
...
0: 0:04:00 - 3 - ['test_history_slices.py::test_should_pass_1', 'test_history_slices.py::test_should_pass_2', 'test_history_slices.py::test_should_pass_3']
1: 0:04:00 - 2 - ['test_history_slices.py::test_with_history_data', 'test_history_slices.py::test_that_failed']
...

# cat include000.txt
test_history_slices.py::test_should_pass_1
test_history_slices.py::test_should_pass_2
test_history_slices.py::test_should_pass_3

# cat include001.txt
test_history_slices.py::test_with_history_data
test_history_slices.py::test_that_failed

### now we can run each slice on its own machine
### on machine1
# pytest $(cat include000.txt)

### on machine2
# pytest $(cat include001.txt)
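To illustrate the idea behind splitting by runtime, here is a minimal sketch of one way to pack tests into time-bounded slices. It uses a greedy "longest first, first fit" heuristic, which is an assumption made for illustration and not necessarily the algorithm the plugin uses. The function name `split_into_slices` and its parameters are hypothetical, and all times are in seconds.

```python
def split_into_slices(durations, max_slice_seconds, default_seconds=60.0):
    """Greedily pack test ids into slices of at most max_slice_seconds.

    `durations` maps a test id to its historical runtime in seconds, or to
    None when no history exists; such tests are assumed to take
    `default_seconds`. A single test longer than the limit gets a slice of
    its own.
    """
    timed = [
        (seconds if seconds is not None else default_seconds, test_id)
        for test_id, seconds in durations.items()
    ]
    slices = []  # each entry is [total_seconds, [test ids]]
    # Place the longest tests first, into the first slice with enough room.
    for seconds, test_id in sorted(timed, key=lambda item: (-item[0], item[1])):
        for entry in slices:
            if entry[0] + seconds <= max_slice_seconds:
                entry[0] += seconds
                entry[1].append(test_id)
                break
        else:
            slices.append([seconds, [test_id]])
    return [(total, ids) for total, ids in slices]
```

Each returned slice could then be written to its own include file and run on a separate machine, as in the shell session above.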

Contributions are very welcome. Tests can be run with nox. Please ensure the coverage at least stays the same before you submit a pull request.

Distributed under the terms of the Apache license, "pytest-argus-reporter" is free and open source software.

If you encounter any problems, please file an issue along with a detailed description.

This pytest plugin was generated with Cookiecutter along with @hackebrot's cookiecutter-pytest-plugin template.

FAQs

A simple plugin to report results of test into argus

We found that pytest-argus-reporter demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 3 open source maintainers collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Security News

/Research

Malicious npm package impersonates Nodemailer and drains wallets by hijacking crypto transactions across multiple blockchains.

Security News

This episode explores the hard problem of reachability analysis, from static analysis limits to handling dynamic languages and massive dependency trees.

Security News

/Research

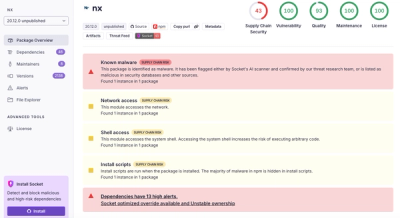

Malicious Nx npm versions stole secrets and wallet info using AI CLI tools; Socket’s AI scanner detected the supply chain attack and flagged the malware.