Product

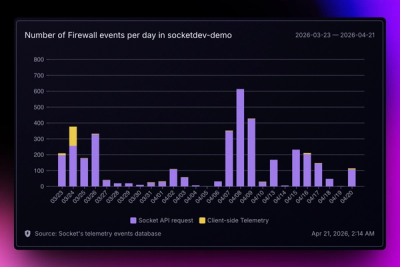

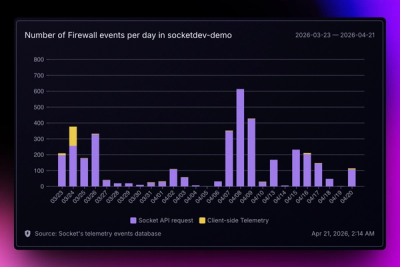

Introducing Reports: An Extensible Reporting Framework for Socket Data

Explore exportable charts for vulnerabilities, dependencies, and usage with Reports, Socket’s new extensible reporting framework.

rtx50-compat

Enable NVIDIA RTX 50-series GPU support (sm_120) in PyTorch and the entire Python AI ecosystem with a single import.

The RTX 5090 features the new sm_120 compute capability, which isn't recognized by current PyTorch/CUDA libraries. This package provides a runtime patch that makes your RTX 5090 work seamlessly with existing AI frameworks.
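
To make the `sm_120` naming concrete, here is a minimal sketch of how a `(major, minor)` compute capability maps to an architecture name, and how one might check it against the list of architectures a PyTorch build was compiled for. The helper names and the example list are hypothetical; on a real install, `torch.cuda.get_arch_list()` returns the actual list.

```python
def arch_name(capability):
    """Format a (major, minor) compute capability as an 'sm_XY' name."""
    major, minor = capability
    return f"sm_{major}{minor}"


def is_listed(capability, arch_list):
    """Return True if the capability's architecture appears in arch_list."""
    return arch_name(capability) in arch_list


# Hypothetical architecture list for a build without RTX 50-series kernels.
# On a real install you could pass torch.cuda.get_arch_list() instead.
example_arch_list = ["sm_80", "sm_86", "sm_90"]

print(arch_name((12, 0)))                     # sm_120
print(is_listed((12, 0), example_arch_list))  # False
print(is_listed((9, 0), example_arch_list))   # True
```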

    # Recommended: use uv
    uv pip install rtx50-compat

    # Or with pip
    pip install rtx50-compat

    import rtx50_compat  # Must be imported before PyTorch!
    import torch

    # Verify GPU is recognized
    print(torch.cuda.get_device_name(0))  # NVIDIA GeForce RTX 5090
    print(torch.cuda.is_available())      # True

    # Now use PyTorch normally
    model = torch.nn.Linear(1024, 1024).cuda()

Expected performance, based on the RTX 5090's 32 GB of GDDR7 VRAM and 70 TFLOPS of compute:

| Model | RTX 5090 | i9-14900K | Speedup |
|---|---|---|---|
| Llama 3-8B | 180-250 tokens/s | 8-12 tokens/s | ~20x |
| Llama 3-13B | 120-180 tokens/s | 4-6 tokens/s | ~30x |
| Stable Diffusion XL | 40-60 img/min | 0.5 img/min | ~100x |
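
The speedup column can be reproduced from the ranges in the table. The sketch below assumes the speedup is the ratio of the range midpoints, which matches the rounded figures shown; the helper names are illustrative only.

```python
def midpoint(low, high):
    """Midpoint of a throughput range."""
    return (low + high) / 2


def speedup(gpu_range, cpu_range):
    """Ratio of GPU to CPU throughput, using range midpoints."""
    return midpoint(*gpu_range) / midpoint(*cpu_range)


# (GPU range, CPU range) copied from the table above.
rows = {
    "Llama 3-8B": ((180, 250), (8, 12)),
    "Llama 3-13B": ((120, 180), (4, 6)),
    "Stable Diffusion XL": ((40, 60), (0.5, 0.5)),
}

for name, (gpu, cpu) in rows.items():
    print(f"{name}: ~{speedup(gpu, cpu):.1f}x")
```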

| Model | RTX 5090 (with offload) | i9-14900K | Speedup |
|---|---|---|---|
| Llama 3-70B Q4 | 25-35 tokens/s | 1-3 tokens/s | ~15x |
| Mixtral 8x7B | 40-60 tokens/s | 2-4 tokens/s | ~20x |

Note: 70B models require ~35GB for Q4 quantization, exceeding the RTX 5090's 32GB VRAM. Performance depends on offloading efficiency.
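
The ~35GB figure follows from simple arithmetic: parameters times bits per weight, divided by 8 bits per byte. The sketch below counts weights only and ignores the KV cache, activations, and quantization overhead, so real usage will be somewhat higher; the function name is illustrative.

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


VRAM_GB = 32  # RTX 5090

q4_70b = weight_memory_gb(70e9, 4)    # 70B parameters at 4 bits each
fp16_8b = weight_memory_gb(8e9, 16)   # 8B parameters at 16 bits each

print(f"70B Q4:   {q4_70b:.1f} GB, fits in VRAM: {q4_70b <= VRAM_GB}")
print(f"8B FP16:  {fp16_8b:.1f} GB, fits in VRAM: {fp16_8b <= VRAM_GB}")
```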

    import rtx50_compat
    import torch

    # Check if patch was applied
    if torch.cuda.is_available():
        print(f"✅ GPU: {torch.cuda.get_device_name(0)}")
        print(f"✅ VRAM: {torch.cuda.get_device_properties(0).total_memory / 1024**3:.1f} GB")

        # Quick smoke test: run a large matrix multiplication on the GPU
        x = torch.randn(10000, 10000, device='cuda')
        y = torch.matmul(x, x)
        torch.cuda.synchronize()  # Wait for the kernel to finish so errors surface here
        print("✅ CUDA operations working!")
    else:
        print("❌ CUDA not available")

    import rtx50_compat
    import torch  # Needed for torch.float16 below
    from transformers import AutoModelForCausalLM, AutoTokenizer

    # Load model - fits entirely in 32GB VRAM
    model_id = "meta-llama/Meta-Llama-3-8B"
    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(
        model_id,
        torch_dtype=torch.float16,
        device_map="cuda"
    )

    # Generate at 180-250 tokens/s!
    inputs = tokenizer("The future of AI is", return_tensors="pt").to("cuda")
    # do_sample=True is required for temperature to take effect
    outputs = model.generate(**inputs, max_length=100, do_sample=True, temperature=0.8)
    print(tokenizer.decode(outputs[0]))
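
Throughput varies with prompt length, sampling settings, and hardware, so it is worth measuring rather than assuming. The following is a minimal timing sketch; `tokens_per_second` and `fake_generate` are hypothetical helpers, with the fake function standing in for a real model call so the example runs without a GPU.

```python
import time


def tokens_per_second(generate, num_tokens):
    """Time one call to generate(num_tokens) and return tokens per second.

    `generate` must return the number of tokens it actually produced.
    """
    start = time.perf_counter()
    produced = generate(num_tokens)
    elapsed = time.perf_counter() - start
    return produced / elapsed


def fake_generate(num_tokens):
    """Stand-in for a real model call: pretends each token takes 1 ms."""
    time.sleep(num_tokens * 0.001)
    return num_tokens


rate = tokens_per_second(fake_generate, 50)
print(f"{rate:.0f} tokens/s")
```

With a real model, `generate` would wrap `model.generate(...)` and return the count of newly generated tokens; since CUDA work is asynchronous, calling `torch.cuda.synchronize()` before reading the clock gives a more reliable figure.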

    # First convert to GGUF format for efficient memory usage
    # pip install llama-cpp-python
    import rtx50_compat
    from llama_cpp import Llama

    # Load the 70B model, splitting layers between GPU and CPU
    llm = Llama(
        model_path="llama-3-70b-q4_k_m.gguf",
        # Assumed starting point: lower this if you run out of VRAM.
        # -1 would try to put every layer on the GPU, which a ~35GB model
        # cannot do on a 32GB card.
        n_gpu_layers=60,
        n_ctx=4096,
        verbose=False
    )

    # Generate at 25-35 tokens/s with partial offloading
    response = llm("The meaning of life is", max_tokens=100)
    print(response['choices'][0]['text'])
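
A rough way to pick an `n_gpu_layers` value is to divide the usable VRAM by the average size of one layer. The sketch below is a back-of-the-envelope estimate under stated assumptions: layers are treated as equal in size, the 80-layer count is that of Llama 3-70B, and the 4 GB reserve for the KV cache and runtime buffers is a hypothetical figure to tune. `estimate_gpu_layers` is an illustrative helper, not part of llama-cpp-python.

```python
import math


def estimate_gpu_layers(model_gb, n_layers, vram_gb, reserve_gb):
    """Estimate how many equally sized layers fit in VRAM after a reserve."""
    per_layer_gb = model_gb / n_layers
    usable_gb = max(vram_gb - reserve_gb, 0)
    return min(n_layers, math.floor(usable_gb / per_layer_gb))


# ~35 GB of Q4 weights, 80 layers, 32 GB card, 4 GB held back (assumption).
layers = estimate_gpu_layers(model_gb=35, n_layers=80, vram_gb=32, reserve_gb=4)
print(layers)  # 64
```

Treat the result as a starting point and lower it if loading fails with an out-of-memory error.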

    import rtx50_compat
    from diffusers import DiffusionPipeline
    import torch

    pipe = DiffusionPipeline.from_pretrained(
        "stabilityai/stable-diffusion-xl-base-1.0",
        torch_dtype=torch.float16,
        use_safetensors=True,
        variant="fp16"
    ).to("cuda")

    # Generate at 40-60 images per minute!
    images = pipe(
        "A majestic mountain landscape at sunset, highly detailed, 8k",
        num_images_per_prompt=4,
        guidance_scale=7.5
    ).images

    import torch

    # The patch intercepts CUDA capability queries
    original_get_device_capability = torch.cuda.get_device_capability

    def patched_get_device_capability(device=None):
        major, minor = original_get_device_capability(device)
        if major == 12 and minor == 0:  # sm_120 (RTX 50-series)
            return (9, 0)  # Masquerade as sm_90 (H100)
        return (major, minor)
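
The wrapping pattern above can be tried without a GPU. The sketch below applies the same idea to a stand-in object instead of `torch.cuda`; `fake_cuda` and `install_patch` are hypothetical names used only for illustration and are not the package's actual internals.

```python
from types import SimpleNamespace

# Stand-in for torch.cuda that always reports sm_120 (hypothetical).
fake_cuda = SimpleNamespace(get_device_capability=lambda device=None: (12, 0))


def install_patch(cuda_module):
    """Replace get_device_capability with a wrapper; return the original."""
    original = cuda_module.get_device_capability

    def patched(device=None):
        major, minor = original(device)
        if (major, minor) == (12, 0):  # sm_120
            return (9, 0)              # report sm_90 instead
        return (major, minor)          # everything else passes through

    cuda_module.get_device_capability = patched
    return original


original = install_patch(fake_cuda)
print(original())                         # (12, 0)
print(fake_cuda.get_device_capability())  # (9, 0)
```

Keeping a reference to the original function makes it possible to undo the patch by assigning it back.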

For subtle operations:

    export RTX50_BATMAN_MODE=1
    python your_script.py

Output:

    🦇 I am Batman - at your local jujitsu establishment
    RTX 5090 successfully disguised as H100
    You didn't see anything... 🌙

    rtx50-compat/
    ├── rtx50_compat.py              # Main compatibility layer
    ├── patches/                     # PyTorch & vLLM patches (for reference)
    │   ├── pytorch_rtx5090.patch
    │   ├── vllm_rtx5090.patch
    │   └── README.md                # Patch application guide
    ├── benchmarks/                  # Benchmark scripts
    │   ├── benchmark_8b.py          # Llama 3-8B benchmark
    │   ├── benchmark_70b.py         # Llama 3-70B with offloading
    │   └── benchmark_sd.py          # Stable Diffusion benchmark
    ├── examples/                    # Usage examples
    │   ├── hello_world.py
    │   ├── comfyui_integration.py
    │   └── llama_cpp_example.py
    ├── tests/                       # Unit tests
    │   └── test_compatibility.py
    ├── LICENSE
    ├── README.md
    └── setup.py

Ensure rtx50_compat is imported before any other CUDA/PyTorch imports:

    import rtx50_compat  # MUST be first
    import torch         # Now this works
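
One way to catch an import-order mistake early is to check whether `torch` is already loaded before importing the compatibility layer. This is a hypothetical guard, not something the package is known to provide; it relies only on the standard `sys.modules` registry.

```python
import sys


def torch_already_imported(modules=None):
    """Return True if 'torch' is already present in the module registry."""
    modules = sys.modules if modules is None else modules
    return "torch" in modules


# Demonstration with stand-in registries instead of the real sys.modules.
print(torch_already_imported({"os": object(), "torch": object()}))  # True
print(torch_already_imported({"os": object()}))                     # False
```

Calling `torch_already_imported()` with no argument at the top of a script, before `import rtx50_compat`, would flag the case where another module pulled in PyTorch first.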

For models exceeding 32GB VRAM, use quantization and offloading:

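    from transformers import AutoModelForCausalLM

    # Use 4-bit quantization (requires the bitsandbytes package)
    model = AutoModelForCausalLM.from_pretrained(
        model_id,  # Set this to the model you want to load
        load_in_4bit=True,
        device_map="auto"  # Automatic CPU/GPU splitting
    )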

    nvidia-smi

    # Install the package and verify it works
    claude "I have an RTX 5090. Help me set up rtx50-compat and run a Llama 3-8B model for maximum performance"

    # Optimize for 70B models with offloading
    claude "Show me how to run Llama 3-70B on my RTX 5090 using llama.cpp with optimal settings"

    # Debug performance issues
    claude "My RTX 5090 is only getting 10 tokens/s on Llama 3-13B. Help me diagnose and fix this"

    # Integration with existing projects
    claude "Add rtx50-compat support to my ComfyUI installation at ~/ComfyUI"

    # Setup and verification
    gemini -p "I have an RTX 5090 with 32GB VRAM. Guide me through installing rtx50-compat and running a benchmark"

    # Model recommendations
    gemini -p "What's the largest LLM I can run entirely in VRAM on my RTX 5090? Include quantization options"

    # Performance optimization
    gemini -p "Analyze my RTX 5090 setup and suggest optimizations for running Mixtral 8x7B at maximum speed"

    # Troubleshooting
    gemini -p "Getting 'no kernel image' error with RTX 5090 in PyTorch. Show me how to fix with rtx50-compat"

Help me set up a complete local AI workstation with RTX 5090:

1. Install rtx50-compat

2. Configure vLLM for serving

3. Set up Stable Diffusion XL

4. Create benchmarks for both text and image generation

I need to deploy a Llama 3-70B model on RTX 5090 for production use:

- Optimize for throughput (multiple users)

- Set up proper memory management

- Configure monitoring and logging

- Handle model switching between 8B/13B/70B based on load

PRs welcome! Areas needing help:

We're working on getting these patches merged upstream:

MIT License - see LICENSE

Note: This is a community compatibility layer. Once PyTorch officially supports sm_120, this package will become obsolete. Until then, enjoy running large models locally at impressive speeds! 🚀

FAQs

RTX 50-series GPU compatibility layer for PyTorch and CUDA - enables sm_120 support

We found that rtx50-compat demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 1 open source maintainer collaborating on the project.
