Research

/Security News

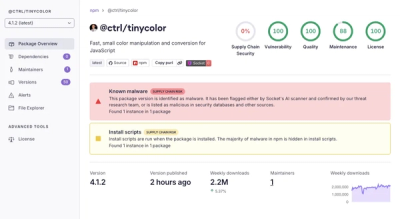

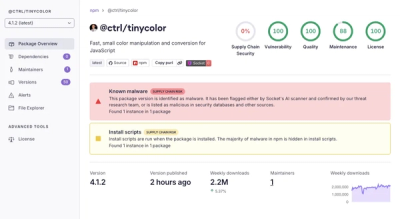

Popular Tinycolor npm Package Compromised in Supply Chain Attack Affecting 40+ Packages

Malicious update to @ctrl/tinycolor on npm is part of a supply-chain attack hitting 40+ packages across maintainers

github.com/JohnSnowLabs/spark-nlp

Spark NLP is a state-of-the-art Natural Language Processing library built on top of Apache Spark. It provides simple, performant & accurate NLP annotations for machine learning pipelines that scale easily in a distributed environment. Spark NLP comes with 21000+ pretrained pipelines and models in more than 200 languages. It also offers tasks such as Tokenization, Word Segmentation, Part-of-Speech Tagging, Word and Sentence Embeddings, Named Entity Recognition, Dependency Parsing, Spell Checking, Text Classification, Sentiment Analysis, Token Classification, Machine Translation (+180 languages), Summarization, Question Answering, Table Question Answering, Text Generation, Image Classification, Image to Text (captioning), Automatic Speech Recognition, Zero-Shot Learning, and many more NLP tasks.

Spark NLP is the only open-source NLP library in production that offers state-of-the-art transformers such as BERT, CamemBERT, ALBERT, ELECTRA, XLNet, DistilBERT, RoBERTa, DeBERTa, XLM-RoBERTa, Longformer, ELMO, Universal Sentence Encoder, Facebook BART, Instructor, E5, Google T5, MarianMT, OpenAI GPT2, and Vision Transformers (ViT) not only to Python and R, but also to the JVM ecosystem (Java, Scala, and Kotlin) at scale by extending Apache Spark natively.

Take a look at our official Spark NLP page, https://sparknlp.org/, for user documentation and examples.

To use Spark NLP you need the following requirements:

GPU (optional):

Spark NLP 5.1.3 is built with the ONNX 1.15.1 and TensorFlow 2.7.1 deep learning engines. The following minimum NVIDIA® software versions are required only for GPU support:

This is a quick example of how to use a Spark NLP pre-trained pipeline in Python and PySpark:

```sh
$ java -version
# should be Java 8 or 11 (Oracle or OpenJDK)
$ conda create -n sparknlp python=3.7 -y
$ conda activate sparknlp
# spark-nlp by default is based on pyspark 3.x
$ pip install spark-nlp==5.1.3 pyspark==3.3.1
```

In a Python console or a Jupyter Python 3 kernel:

```python
# Import Spark NLP
from sparknlp.base import *
from sparknlp.annotator import *
from sparknlp.pretrained import PretrainedPipeline
import sparknlp

# Start SparkSession with Spark NLP
# start() accepts optional parameters such as gpu, apple_silicon, aarch64, and memory
# sparknlp.start(gpu=True) will start the session with GPU support
# sparknlp.start(apple_silicon=True) will start the session with macOS M1 & M2 support
# sparknlp.start(memory="16G") to change the default driver memory in SparkSession
spark = sparknlp.start()

# Download a pre-trained pipeline
pipeline = PretrainedPipeline('explain_document_dl', lang='en')

# Your testing dataset
text = """
The Mona Lisa is a 16th century oil painting created by Leonardo.
It's held at the Louvre in Paris.
"""

# Annotate your testing dataset
result = pipeline.annotate(text)

# What's in the pipeline
list(result.keys())
# Output: ['entities', 'stem', 'checked', 'lemma', 'document',
#          'pos', 'token', 'ner', 'embeddings', 'sentence']

# Check the results
result['entities']
# Output: ['Mona Lisa', 'Leonardo', 'Louvre', 'Paris']
```

For more examples, visit our dedicated examples repository, which showcases all Spark NLP use cases!

Spark NLP 5.1.3 is built on top of Apache Spark 3.4 and fully supports Apache Spark 3.0.x, 3.1.x, 3.2.x, 3.3.x, and 3.4.x.

| Spark NLP | Apache Spark 2.3.x | Apache Spark 2.4.x | Apache Spark 3.0.x | Apache Spark 3.1.x | Apache Spark 3.2.x | Apache Spark 3.3.x | Apache Spark 3.4.x |
|---|---|---|---|---|---|---|---|
| 5.0.x | NO | NO | YES | YES | YES | YES | YES |
| 4.4.x | NO | NO | YES | YES | YES | YES | YES |
| 4.3.x | NO | NO | YES | YES | YES | YES | NO |
| 4.2.x | NO | NO | YES | YES | YES | YES | NO |
| 4.1.x | NO | NO | YES | YES | YES | YES | NO |
| 4.0.x | NO | NO | YES | YES | YES | YES | NO |
| 3.4.x | YES | YES | YES | YES | Partially | N/A | NO |
| 3.3.x | YES | YES | YES | YES | NO | NO | NO |
| 3.2.x | YES | YES | YES | YES | NO | NO | NO |
| 3.1.x | YES | YES | YES | YES | NO | NO | NO |
| 3.0.x | YES | YES | YES | YES | NO | NO | NO |
| 2.7.x | YES | YES | NO | NO | NO | NO | NO |
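If you want a setup script to fail fast on an unsupported combination, the matrix above can be transcribed into a small lookup. This is a minimal sketch: `SPARK_COMPAT`, `spark_support`, and `minor_line` are hypothetical helpers (not part of the spark-nlp package), and the values are copied by hand from the table.

```python
# Hypothetical lookup transcribed by hand from the Spark NLP / Apache Spark table above.
SPARK_COLUMNS = ["2.3.x", "2.4.x", "3.0.x", "3.1.x", "3.2.x", "3.3.x", "3.4.x"]

_ALL_3X = ["NO", "NO", "YES", "YES", "YES", "YES", "YES"]  # rows 5.0.x and 4.4.x
_NO_34 = ["NO", "NO", "YES", "YES", "YES", "YES", "NO"]    # rows 4.0.x to 4.3.x
_LEGACY = ["YES", "YES", "YES", "YES", "NO", "NO", "NO"]   # rows 3.0.x to 3.3.x

SPARK_COMPAT = {
    "5.0.x": _ALL_3X,
    "4.4.x": _ALL_3X,
    "4.3.x": _NO_34,
    "4.2.x": _NO_34,
    "4.1.x": _NO_34,
    "4.0.x": _NO_34,
    "3.4.x": ["YES", "YES", "YES", "YES", "Partially", "N/A", "NO"],
    "3.3.x": _LEGACY,
    "3.2.x": _LEGACY,
    "3.1.x": _LEGACY,
    "3.0.x": _LEGACY,
    "2.7.x": ["YES", "YES", "NO", "NO", "NO", "NO", "NO"],
}


def minor_line(version: str) -> str:
    """Map a full version such as "3.3.1" (or a line such as "3.3.x") to "3.3.x"."""
    major, minor = version.split(".")[:2]
    return f"{major}.{minor}.x"


def spark_support(spark_nlp: str, spark: str) -> str:
    """Return the table cell: "YES", "NO", "Partially", or "N/A".

    Raises KeyError / ValueError for versions the table does not list.
    """
    row = SPARK_COMPAT[minor_line(spark_nlp)]
    return row[SPARK_COLUMNS.index(minor_line(spark))]


print(spark_support("4.4.2", "3.3.1"))  # YES
```

Note that the table, and therefore this sketch, stops at the 5.0.x line; Spark NLP 5.1.3 is covered by the sentence above the table rather than by a row.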

Find out more about Spark NLP versions from our release notes.

| Spark NLP | Python 3.6 | Python 3.7 | Python 3.8 | Python 3.9 | Python 3.10 | Scala 2.11 | Scala 2.12 |
|---|---|---|---|---|---|---|---|
| 5.0.x | NO | YES | YES | YES | YES | NO | YES |
| 4.4.x | NO | YES | YES | YES | YES | NO | YES |
| 4.3.x | YES | YES | YES | YES | YES | NO | YES |
| 4.2.x | YES | YES | YES | YES | YES | NO | YES |
| 4.1.x | YES | YES | YES | YES | NO | NO | YES |
| 4.0.x | YES | YES | YES | YES | NO | NO | YES |
| 3.4.x | YES | YES | YES | YES | NO | YES | YES |
| 3.3.x | YES | YES | YES | NO | NO | YES | YES |
| 3.2.x | YES | YES | YES | NO | NO | YES | YES |
| 3.1.x | YES | YES | YES | NO | NO | YES | YES |
| 3.0.x | YES | YES | YES | NO | NO | YES | YES |
| 2.7.x | YES | YES | NO | NO | NO | YES | NO |

Spark NLP 5.1.3 has been tested and is compatible with the following runtimes:

CPU:

GPU:

Spark NLP 5.1.3 has been tested and is compatible with the following EMR releases:

Full list of Amazon EMR 6.x releases

NOTE: EMR 6.1.0 and 6.1.1 are not supported.

This cheatsheet maps each Apache Spark / PySpark major version to the corresponding Spark NLP Maven package:

| Apache Spark | Spark NLP on CPU | Spark NLP on GPU | Spark NLP on AArch64 (linux) | Spark NLP on Apple Silicon |
|---|---|---|---|---|
| 3.0/3.1/3.2/3.3/3.4 | spark-nlp | spark-nlp-gpu | spark-nlp-aarch64 | spark-nlp-silicon |
| Start Function | sparknlp.start() | sparknlp.start(gpu=True) | sparknlp.start(aarch64=True) | sparknlp.start(apple_silicon=True) |
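All four packages follow the same coordinate pattern, with only the artifact name changing. The sketch below, assuming the naming shown in the cheatsheet holds, derives the Maven coordinate and the matching start() call for each platform; `PLATFORMS`, `maven_coordinate`, and `start_call` are hypothetical names, not part of spark-nlp.

```python
# Hypothetical helper (not part of spark-nlp) that assembles the Maven
# coordinates from the cheatsheet: groupId:artifactId_<scala>:<version>.
GROUP_ID = "com.johnsnowlabs.nlp"

# Platform -> (artifact name, keyword argument for sparknlp.start()), per the cheatsheet.
PLATFORMS = {
    "cpu": ("spark-nlp", None),
    "gpu": ("spark-nlp-gpu", "gpu"),
    "aarch64": ("spark-nlp-aarch64", "aarch64"),
    "silicon": ("spark-nlp-silicon", "apple_silicon"),
}


def maven_coordinate(platform: str, version: str = "5.1.3", scala: str = "2.12") -> str:
    """Return the coordinate to pass to --packages or spark.jars.packages."""
    artifact, _ = PLATFORMS[platform]
    return f"{GROUP_ID}:{artifact}_{scala}:{version}"


def start_call(platform: str) -> str:
    """Return the matching sparknlp.start(...) call as a string, for display only."""
    _, flag = PLATFORMS[platform]
    return "sparknlp.start()" if flag is None else f"sparknlp.start({flag}=True)"


for name in PLATFORMS:
    print(f"{name:8} {maven_coordinate(name):50} {start_call(name)}")
```

The `_2.12` suffix is the Scala binary version, which is why the same suffix appears in every coordinate on this page.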

NOTE: M1/M2 and AArch64 support is experimental. Access to and support for these architectures is limited by the community, and we had to build most of the dependencies ourselves to make them compatible. We support these two architectures; however, they may not work in some environments.

Spark NLP supports all major releases of Apache Spark 3.0.x, Apache Spark 3.1.x, Apache Spark 3.2.x, Apache Spark 3.3.x, and Apache Spark 3.4.x

```sh
# CPU
spark-shell --packages com.johnsnowlabs.nlp:spark-nlp_2.12:5.1.3
pyspark --packages com.johnsnowlabs.nlp:spark-nlp_2.12:5.1.3
spark-submit --packages com.johnsnowlabs.nlp:spark-nlp_2.12:5.1.3
```

The spark-nlp package has been published to the Maven Repository.

```sh
# GPU
spark-shell --packages com.johnsnowlabs.nlp:spark-nlp-gpu_2.12:5.1.3
pyspark --packages com.johnsnowlabs.nlp:spark-nlp-gpu_2.12:5.1.3
spark-submit --packages com.johnsnowlabs.nlp:spark-nlp-gpu_2.12:5.1.3
```

The spark-nlp-gpu package has been published to the Maven Repository.

```sh
# AArch64
spark-shell --packages com.johnsnowlabs.nlp:spark-nlp-aarch64_2.12:5.1.3
pyspark --packages com.johnsnowlabs.nlp:spark-nlp-aarch64_2.12:5.1.3
spark-submit --packages com.johnsnowlabs.nlp:spark-nlp-aarch64_2.12:5.1.3
```

The spark-nlp-aarch64 package has been published to the Maven Repository.

```sh
# M1/M2 (Apple Silicon)
spark-shell --packages com.johnsnowlabs.nlp:spark-nlp-silicon_2.12:5.1.3
pyspark --packages com.johnsnowlabs.nlp:spark-nlp-silicon_2.12:5.1.3
spark-submit --packages com.johnsnowlabs.nlp:spark-nlp-silicon_2.12:5.1.3
```

The spark-nlp-silicon package has been published to the Maven Repository.

NOTE: If you are using large pretrained models like UniversalSentenceEncoder, you need to have the following set in your SparkSession:

```sh
spark-shell \
  --driver-memory 16g \
  --conf spark.kryoserializer.buffer.max=2000M \
  --packages com.johnsnowlabs.nlp:spark-nlp_2.12:5.1.3
```

Spark NLP supports Scala 2.12.15 if you are using Apache Spark 3.0.x, 3.1.x, 3.2.x, 3.3.x, and 3.4.x versions. Our packages are deployed to Maven Central. To add any of our packages as a dependency in your application, you can use these coordinates:

spark-nlp on Apache Spark 3.0.x, 3.1.x, 3.2.x, 3.3.x, and 3.4.x:

```xml
<!-- https://mvnrepository.com/artifact/com.johnsnowlabs.nlp/spark-nlp -->
<dependency>
    <groupId>com.johnsnowlabs.nlp</groupId>
    <artifactId>spark-nlp_2.12</artifactId>
    <version>5.1.3</version>
</dependency>
```

spark-nlp-gpu:

```xml
<!-- https://mvnrepository.com/artifact/com.johnsnowlabs.nlp/spark-nlp-gpu -->
<dependency>
    <groupId>com.johnsnowlabs.nlp</groupId>
    <artifactId>spark-nlp-gpu_2.12</artifactId>
    <version>5.1.3</version>
</dependency>
```

spark-nlp-aarch64:

```xml
<!-- https://mvnrepository.com/artifact/com.johnsnowlabs.nlp/spark-nlp-aarch64 -->
<dependency>
    <groupId>com.johnsnowlabs.nlp</groupId>
    <artifactId>spark-nlp-aarch64_2.12</artifactId>
    <version>5.1.3</version>
</dependency>
```

spark-nlp-silicon:

```xml
<!-- https://mvnrepository.com/artifact/com.johnsnowlabs.nlp/spark-nlp-silicon -->
<dependency>
    <groupId>com.johnsnowlabs.nlp</groupId>
    <artifactId>spark-nlp-silicon_2.12</artifactId>
    <version>5.1.3</version>
</dependency>
```

spark-nlp on Apache Spark 3.0.x, 3.1.x, 3.2.x, 3.3.x, and 3.4.x:

```scala
// https://mvnrepository.com/artifact/com.johnsnowlabs.nlp/spark-nlp
libraryDependencies += "com.johnsnowlabs.nlp" %% "spark-nlp" % "5.1.3"
```

spark-nlp-gpu:

```scala
// https://mvnrepository.com/artifact/com.johnsnowlabs.nlp/spark-nlp-gpu
libraryDependencies += "com.johnsnowlabs.nlp" %% "spark-nlp-gpu" % "5.1.3"
```

spark-nlp-aarch64:

```scala
// https://mvnrepository.com/artifact/com.johnsnowlabs.nlp/spark-nlp-aarch64
libraryDependencies += "com.johnsnowlabs.nlp" %% "spark-nlp-aarch64" % "5.1.3"
```

spark-nlp-silicon:

```scala
// https://mvnrepository.com/artifact/com.johnsnowlabs.nlp/spark-nlp-silicon
libraryDependencies += "com.johnsnowlabs.nlp" %% "spark-nlp-silicon" % "5.1.3"
```

Maven Central: https://mvnrepository.com/artifact/com.johnsnowlabs.nlp

If you are interested, there is a simple SBT project, Spark NLP SBT Starter, that shows how to use Spark NLP in your projects.

Spark NLP supports Python 3.6.x and above depending on your major PySpark version.

If you installed pyspark through pip/conda, you can install spark-nlp through the same channel.

Pip:

```sh
pip install spark-nlp==5.1.3
```

Conda:

```sh
conda install -c johnsnowlabs spark-nlp
```

PyPI spark-nlp package / Anaconda spark-nlp package

Then you'll have to create a SparkSession either from Spark NLP:

```python
import sparknlp

spark = sparknlp.start()
```

or manually:

```python
from pyspark.sql import SparkSession

spark = (
    SparkSession.builder
    .appName("Spark NLP")
    .master("local[*]")
    .config("spark.driver.memory", "16G")
    .config("spark.driver.maxResultSize", "0")
    .config("spark.kryoserializer.buffer.max", "2000M")
    .config("spark.jars.packages", "com.johnsnowlabs.nlp:spark-nlp_2.12:5.1.3")
    .getOrCreate()
)
```

If you are using local JARs, you can use spark.jars instead, with a comma-delimited list of JAR files. For cluster setups, you'll have to put the JARs in a location reachable by all driver and executor nodes.

Quick example:

```python
import sparknlp
from sparknlp.pretrained import PretrainedPipeline

# create or get Spark Session
spark = sparknlp.start()

sparknlp.version()
spark.version

# download, load and annotate a text by pre-trained pipeline
pipeline = PretrainedPipeline('recognize_entities_dl', 'en')
result = pipeline.annotate('The Mona Lisa is a 16th century oil painting created by Leonardo')
```

```sh
# spark-nlp
sbt assembly
# spark-nlp-gpu
sbt -Dis_gpu=true assembly
# spark-nlp-silicon
sbt -Dis_silicon=true assembly
```

If for some reason you need to use the JAR, you can either download the Fat JARs provided here or download them from Maven Central.

To add JARs to Spark programs, use the --jars option:

```sh
spark-shell --jars spark-nlp.jar
```

The preferred way to use the library when running Spark programs is the --packages option, as specified in the spark-packages section.

Use either one of the following options:

```
com.johnsnowlabs.nlp:spark-nlp_2.12:5.1.3
```

In addition to the previous step, install the Python module through pip:

```sh
pip install spark-nlp==5.1.3
```

Or you can install spark-nlp from inside Zeppelin by using Conda:

```
python.conda install -c johnsnowlabs spark-nlp
```

Configure Zeppelin properly and use cells with %spark.pyspark or whichever interpreter name you chose.

Finally, in the Zeppelin interpreter settings, make sure zeppelin.python is set to the Python interpreter you want to use, the same one you installed the pip library with (e.g. python3).

An alternative option would be to set SPARK_SUBMIT_OPTIONS (zeppelin-env.sh) and make sure --packages is there, as shown earlier, since it includes both the Scala and Python side installation.

Recommended:

The easiest way to get this done on Linux and macOS is to simply install the spark-nlp and pyspark PyPI packages and launch Jupyter from the same Python environment:

```sh
$ conda create -n sparknlp python=3.8 -y
$ conda activate sparknlp
# spark-nlp by default is based on pyspark 3.x
$ pip install spark-nlp==5.1.3 pyspark==3.3.1 jupyter
$ jupyter notebook
```

Then you can use the python3 kernel to run your code, creating a SparkSession via spark = sparknlp.start().

Optional:

If you are on a different operating system and need Jupyter Notebook to run through pyspark, you can follow these steps:

```sh
export SPARK_HOME=/path/to/your/spark/folder
export PYSPARK_PYTHON=python3
export PYSPARK_DRIVER_PYTHON=jupyter
export PYSPARK_DRIVER_PYTHON_OPTS=notebook

pyspark --packages com.johnsnowlabs.nlp:spark-nlp_2.12:5.1.3
```

Alternatively, you can combine the --jars option for pyspark with pip install spark-nlp.

If you are not using pyspark at all, you'll have to follow the instructions pointed to here.

Google Colab is perhaps the easiest way to get started with spark-nlp. It requires no installation or setup other than having a Google account.

Run the following code in a Google Colab notebook and start using spark-nlp right away.

```sh
# This is only to setup PySpark and Spark NLP on Colab
!wget https://setup.johnsnowlabs.com/colab.sh -O - | bash
```

This script accepts options to define the pyspark and spark-nlp versions:

```sh
# -p is for pyspark
# -s is for spark-nlp
# -g will enable upgrading libcudnn8 to 8.1.0 on Google Colab for GPU usage
# by default they are set to the latest
!wget https://setup.johnsnowlabs.com/colab.sh -O - | bash /dev/stdin -p 3.2.3 -s 5.1.3
```

Spark NLP quick start on Google Colab is a live demo on Google Colab that performs named entity recognition and sentiment analysis by using Spark NLP pretrained pipelines.

Run the following code in a Kaggle Kernel and start using spark-nlp right away.

```sh
# Let's setup Kaggle for Spark NLP and PySpark
!wget https://setup.johnsnowlabs.com/kaggle.sh -O - | bash
```

This script accepts options to define the pyspark and spark-nlp versions:

```sh
# -p is for pyspark
# -s is for spark-nlp
# -g will enable upgrading libcudnn8 to 8.1.0 on Kaggle for GPU usage
# by default they are set to the latest
!wget https://setup.johnsnowlabs.com/kaggle.sh -O - | bash /dev/stdin -p 3.2.3 -s 5.1.3
```

Spark NLP quick start on Kaggle Kernel is a live demo on Kaggle Kernel that performs named entity recognition by using a Spark NLP pretrained pipeline.

1. Create a cluster if you don't have one already.

2. On a new or existing cluster, add the following to the Advanced Options -> Spark tab:

```
spark.kryoserializer.buffer.max 2000M
spark.serializer org.apache.spark.serializer.KryoSerializer
```

3. In the Libraries tab of your cluster, follow these steps:

3.1. Install New -> PyPI -> spark-nlp==5.1.3 -> Install

3.2. Install New -> Maven -> Coordinates -> com.johnsnowlabs.nlp:spark-nlp_2.12:5.1.3 -> Install

4. Now you can attach your notebook to the cluster and use Spark NLP!

NOTE: Databricks' runtimes support different Apache Spark major releases. Please make sure you choose the correct Spark NLP Maven package name (Maven Coordinate) for your runtime from our Packages Cheatsheet

To launch EMR clusters with Apache Spark/PySpark and Spark NLP correctly, you need a bootstrap script and a software configuration.

A sample of your bootstrap script:

```bash
#!/bin/bash
set -x -e

echo -e 'export PYSPARK_PYTHON=/usr/bin/python3
export HADOOP_CONF_DIR=/etc/hadoop/conf
export SPARK_JARS_DIR=/usr/lib/spark/jars
export SPARK_HOME=/usr/lib/spark' >> $HOME/.bashrc && source $HOME/.bashrc

sudo python3 -m pip install awscli boto spark-nlp

set +x
exit 0
```

A sample of your software configuration in JSON on S3 (it must be publicly accessible):

```json
[{
  "Classification": "spark-env",
  "Configurations": [{
    "Classification": "export",
    "Properties": {
      "PYSPARK_PYTHON": "/usr/bin/python3"
    }
  }]
},
{
  "Classification": "spark-defaults",
  "Properties": {
    "spark.yarn.stagingDir": "hdfs:///tmp",
    "spark.yarn.preserve.staging.files": "true",
    "spark.kryoserializer.buffer.max": "2000M",
    "spark.serializer": "org.apache.spark.serializer.KryoSerializer",
    "spark.driver.maxResultSize": "0",
    "spark.jars.packages": "com.johnsnowlabs.nlp:spark-nlp_2.12:5.1.3"
  }
}]
```
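Hand-editing JSON like this makes it easy to drop a comma or a bracket. A minimal sketch, assuming you generate the file locally before uploading it: the same configuration expressed as Python data and serialized with the standard json module. The variable names are illustrative, and the upload to S3 is left to whatever tool you already use.

```python
import json

# The EMR software configuration above, written as Python data so that
# json.dumps always produces syntactically valid JSON.
SPARK_NLP_PACKAGE = "com.johnsnowlabs.nlp:spark-nlp_2.12:5.1.3"

emr_configurations = [
    {
        "Classification": "spark-env",
        "Configurations": [
            {
                "Classification": "export",
                "Properties": {"PYSPARK_PYTHON": "/usr/bin/python3"},
            }
        ],
    },
    {
        "Classification": "spark-defaults",
        "Properties": {
            "spark.yarn.stagingDir": "hdfs:///tmp",
            "spark.yarn.preserve.staging.files": "true",
            "spark.kryoserializer.buffer.max": "2000M",
            "spark.serializer": "org.apache.spark.serializer.KryoSerializer",
            "spark.driver.maxResultSize": "0",
            "spark.jars.packages": SPARK_NLP_PACKAGE,
        },
    },
]

config_json = json.dumps(emr_configurations, indent=2)
# Save this text as sparknlp-config.json and upload it to a publicly readable location.
print(config_json)
```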

A sample AWS CLI command to launch an EMR cluster:

```sh
aws emr create-cluster \
--name "Spark NLP 5.1.3" \
--release-label emr-6.2.0 \
--applications Name=Hadoop Name=Spark Name=Hive \
--instance-type m4.4xlarge \
--instance-count 3 \
--use-default-roles \
--log-uri "s3://<S3_BUCKET>/" \
--bootstrap-actions Path=s3://<S3_BUCKET>/emr-bootstrap.sh,Name=custom \
--configurations "https://<public_access>/sparknlp-config.json" \
--ec2-attributes KeyName=<your_ssh_key>,EmrManagedMasterSecurityGroup=<security_group_with_ssh>,EmrManagedSlaveSecurityGroup=<security_group_with_ssh> \
--profile <aws_profile_credentials>
```

In the gcloud shell:

```sh
gcloud services enable dataproc.googleapis.com \
  compute.googleapis.com \
  storage-component.googleapis.com \
  bigquery.googleapis.com \
  bigquerystorage.googleapis.com

REGION=<region>
BUCKET_NAME=<bucket_name>
gsutil mb -c standard -l ${REGION} gs://${BUCKET_NAME}

REGION=<region>
ZONE=<zone>
CLUSTER_NAME=<cluster_name>
BUCKET_NAME=<bucket_name>
```

You can set image-version, master-machine-type, worker-machine-type, master-boot-disk-size, worker-boot-disk-size, and num-workers according to your needs. If you use an image-version earlier than 2.0, you should also add ANACONDA to optional-components. You should also enable the component gateway. Don't forget to set the Maven coordinates for the JAR in properties.

```sh
gcloud dataproc clusters create ${CLUSTER_NAME} \
  --region=${REGION} \
  --zone=${ZONE} \
  --image-version=2.0 \
  --master-machine-type=n1-standard-4 \
  --worker-machine-type=n1-standard-2 \
  --master-boot-disk-size=128GB \
  --worker-boot-disk-size=128GB \
  --num-workers=2 \
  --bucket=${BUCKET_NAME} \
  --optional-components=JUPYTER \
  --enable-component-gateway \
  --metadata 'PIP_PACKAGES=spark-nlp spark-nlp-display google-cloud-bigquery google-cloud-storage' \
  --initialization-actions gs://goog-dataproc-initialization-actions-${REGION}/python/pip-install.sh \
  --properties spark:spark.serializer=org.apache.spark.serializer.KryoSerializer,spark:spark.driver.maxResultSize=0,spark:spark.kryoserializer.buffer.max=2000M,spark:spark.jars.packages=com.johnsnowlabs.nlp:spark-nlp_2.12:5.1.3
```
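The --properties value above is a comma-separated list of entries, each prefixed with `spark:` so that Dataproc writes it to the Spark defaults. A minimal sketch for generating that string from a dict, assuming none of the values contain commas; `dataproc_properties` is a hypothetical helper.

```python
# Hypothetical helper: builds the value of gcloud's --properties flag from
# plain Spark settings, as "<prefix>:<key>=<value>" entries joined by commas.
def dataproc_properties(spark_conf: dict, prefix: str = "spark") -> str:
    entries = []
    for key, value in spark_conf.items():
        if "," in str(value):
            # Commas separate entries, so such values need gcloud's
            # alternate-delimiter syntax (see `gcloud topic escaping`).
            raise ValueError(f"value for {key} contains a comma")
        entries.append(f"{prefix}:{key}={value}")
    return ",".join(entries)


props = dataproc_properties({
    "spark.serializer": "org.apache.spark.serializer.KryoSerializer",
    "spark.driver.maxResultSize": "0",
    "spark.kryoserializer.buffer.max": "2000M",
    "spark.jars.packages": "com.johnsnowlabs.nlp:spark-nlp_2.12:5.1.3",
})
print(f"--properties {props}")
```

Raising on commas is a deliberate choice: silently splitting a value such as a multi-package spark.jars.packages would produce a broken cluster configuration.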

On an existing cluster, you need to install the spark-nlp and spark-nlp-display packages from PyPI.

Now you can attach your notebook to the cluster and use Spark NLP!

You can change the following Spark NLP configurations via Spark Configuration:

| Property Name | Default | Meaning |
|---|---|---|
| spark.jsl.settings.pretrained.cache_folder | ~/cache_pretrained | The location to download and extract pretrained Models and Pipelines. By default, it will be in the User's Home directory under the cache_pretrained directory |
| spark.jsl.settings.storage.cluster_tmp_dir | hadoop.tmp.dir | The location to use on a cluster for temporary files such as unpacking indexes for WordEmbeddings. By default, this location is the location of hadoop.tmp.dir set via Hadoop configuration for Apache Spark. NOTE: S3 is not supported; it must be local, HDFS, or DBFS |
| spark.jsl.settings.annotator.log_folder | ~/annotator_logs | The location to save logs from annotators during training, such as NerDLApproach, ClassifierDLApproach, SentimentDLApproach, MultiClassifierDLApproach, etc. By default, it will be in the User's Home directory under the annotator_logs directory |
| spark.jsl.settings.aws.credentials.access_key_id | None | Your AWS access key to use your S3 bucket to store log files of training models or access TensorFlow graphs used in NerDLApproach |
| spark.jsl.settings.aws.credentials.secret_access_key | None | Your AWS secret access key to use your S3 bucket to store log files of training models or access TensorFlow graphs used in NerDLApproach |
| spark.jsl.settings.aws.credentials.session_token | None | Your AWS MFA session token to use your S3 bucket to store log files of training models or access TensorFlow graphs used in NerDLApproach |
| spark.jsl.settings.aws.s3_bucket | None | Your AWS S3 bucket to store log files of training models or access TensorFlow graphs used in NerDLApproach |
| spark.jsl.settings.aws.region | None | Your AWS region to use your S3 bucket to store log files of training models or access TensorFlow graphs used in NerDLApproach |
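The NOTE on cluster_tmp_dir can be checked before the session starts. A minimal sketch, assuming the rule is exactly as the table states (local, HDFS, or DBFS, but not S3); `check_cluster_tmp_dir` is a hypothetical helper and is not exposed by Spark NLP itself.

```python
from urllib.parse import urlparse

# Schemes the table accepts for spark.jsl.settings.storage.cluster_tmp_dir.
# An empty scheme means a plain local path such as "sample_data/storage".
ALLOWED_TMP_SCHEMES = {"", "file", "hdfs", "dbfs"}


def check_cluster_tmp_dir(path: str) -> str:
    """Return path unchanged, or raise ValueError for other schemes (s3, s3a, gs, ...).

    Windows drive letters such as "C:/tmp" would be misread as a scheme;
    this sketch does not handle them.
    """
    scheme = urlparse(path).scheme.lower()
    if scheme not in ALLOWED_TMP_SCHEMES:
        raise ValueError(
            f"cluster_tmp_dir must be local, HDFS, or DBFS, not {scheme!r}: {path}"
        )
    return path
```

For example, `check_cluster_tmp_dir("dbfs:/PATH_TO_STORAGE")` returns the path unchanged, while an `s3://` path raises before any Spark configuration is applied.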

SparkSession:

You can use .config() during SparkSession creation to set Spark NLP configurations.

```python
from pyspark.sql import SparkSession

spark = (
    SparkSession.builder
    .master("local[*]")
    .config("spark.driver.memory", "16G")
    .config("spark.driver.maxResultSize", "0")
    .config("spark.serializer", "org.apache.spark.serializer.KryoSerializer")
    .config("spark.kryoserializer.buffer.max", "2000m")
    .config("spark.jsl.settings.pretrained.cache_folder", "sample_data/pretrained")
    .config("spark.jsl.settings.storage.cluster_tmp_dir", "sample_data/storage")
    .config("spark.jars.packages", "com.johnsnowlabs.nlp:spark-nlp_2.12:5.1.3")
    .getOrCreate()
)
```

spark-shell:

```sh
spark-shell \
  --driver-memory 16g \
  --conf spark.driver.maxResultSize=0 \
  --conf spark.serializer=org.apache.spark.serializer.KryoSerializer \
  --conf spark.kryoserializer.buffer.max=2000M \
  --conf spark.jsl.settings.pretrained.cache_folder="sample_data/pretrained" \
  --conf spark.jsl.settings.storage.cluster_tmp_dir="sample_data/storage" \
  --packages com.johnsnowlabs.nlp:spark-nlp_2.12:5.1.3
```

pyspark:

```sh
pyspark \
  --driver-memory 16g \
  --conf spark.driver.maxResultSize=0 \
  --conf spark.serializer=org.apache.spark.serializer.KryoSerializer \
  --conf spark.kryoserializer.buffer.max=2000M \
  --conf spark.jsl.settings.pretrained.cache_folder="sample_data/pretrained" \
  --conf spark.jsl.settings.storage.cluster_tmp_dir="sample_data/storage" \
  --packages com.johnsnowlabs.nlp:spark-nlp_2.12:5.1.3
```
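Every continued line in multi-line shell commands like these must end with a backslash; a missing one ends the command early, and the following lines then run as separate, failing commands. One way to avoid that, sketched here under the assumption that you launch Spark from a Python script, is to build the argument list as data. `spark_cli_args` is a hypothetical helper, and `shlex.join` requires Python 3.8 or later.

```python
import shlex


def spark_cli_args(driver_memory: str, confs: dict, packages: list) -> list:
    """Build the flags shared by spark-shell, pyspark, and spark-submit."""
    args = ["--driver-memory", driver_memory]
    for key, value in confs.items():
        args += ["--conf", f"{key}={value}"]
    args += ["--packages", ",".join(packages)]
    return args


confs = {
    "spark.driver.maxResultSize": "0",
    "spark.serializer": "org.apache.spark.serializer.KryoSerializer",
    "spark.kryoserializer.buffer.max": "2000M",
    "spark.jsl.settings.pretrained.cache_folder": "sample_data/pretrained",
    "spark.jsl.settings.storage.cluster_tmp_dir": "sample_data/storage",
}
cmd = ["pyspark"] + spark_cli_args(
    "16g", confs, ["com.johnsnowlabs.nlp:spark-nlp_2.12:5.1.3"]
)

# shlex.join quotes any argument that needs it, producing one shell-safe line.
print(shlex.join(cmd))
# To launch it directly instead: subprocess.run(cmd, check=True)
```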

Databricks:

On a new cluster or existing one you need to add the following to the Advanced Options -> Spark tab:

```
spark.kryoserializer.buffer.max 2000M
spark.serializer org.apache.spark.serializer.KryoSerializer
spark.jsl.settings.pretrained.cache_folder dbfs:/PATH_TO_CACHE
spark.jsl.settings.storage.cluster_tmp_dir dbfs:/PATH_TO_STORAGE
spark.jsl.settings.annotator.log_folder dbfs:/PATH_TO_LOGS
```

NOTE: If this is an existing cluster, after adding new configs or changing existing properties you need to restart it.

In Spark NLP we can define S3 locations to store log files of training models and to access TensorFlow graphs used in NerDLApproach.

Logging:

To configure an S3 path for logging while training models, set up the AWS credentials as well as an S3 path:

```python
spark.conf.set("spark.jsl.settings.annotator.log_folder", "s3://my/s3/path/logs")
spark.conf.set("spark.jsl.settings.aws.credentials.access_key_id", "MY_KEY_ID")
spark.conf.set("spark.jsl.settings.aws.credentials.secret_access_key", "MY_SECRET_ACCESS_KEY")
spark.conf.set("spark.jsl.settings.aws.s3_bucket", "my.bucket")
spark.conf.set("spark.jsl.settings.aws.region", "my-region")
```

Now you can check the log on your S3 path defined in the spark.jsl.settings.annotator.log_folder property. Make sure to use the s3:// prefix; otherwise, the default configuration will be used.
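Because a path without the s3:// prefix falls back to the default location without an error, it can help to assemble these settings in one place and fail early. A minimal sketch under that preference: `s3_logging_conf` is a hypothetical helper that only builds the key/value pairs from the properties listed above; applying them still goes through spark.conf.set.

```python
def s3_logging_conf(log_folder, access_key_id, secret_access_key,
                    bucket, region, session_token=None):
    """Return the Spark NLP S3 logging settings as a dict of property -> value."""
    if not log_folder.startswith("s3://"):
        # Spark NLP would fall back to the default location; fail loudly instead.
        raise ValueError("log_folder must start with s3://")
    conf = {
        "spark.jsl.settings.annotator.log_folder": log_folder,
        "spark.jsl.settings.aws.credentials.access_key_id": access_key_id,
        "spark.jsl.settings.aws.credentials.secret_access_key": secret_access_key,
        "spark.jsl.settings.aws.s3_bucket": bucket,
        "spark.jsl.settings.aws.region": region,
    }
    if session_token is not None:  # only needed for MFA-enabled accounts
        conf["spark.jsl.settings.aws.credentials.session_token"] = session_token
    return conf


# With a live SparkSession named `spark`, apply the settings with:
# for key, value in s3_logging_conf(...).items():
#     spark.conf.set(key, value)
```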

TensorFlow Graphs:

To reference an S3 location for downloading graphs, set up the AWS credentials:

```python
spark.conf.set("spark.jsl.settings.aws.credentials.access_key_id", "MY_KEY_ID")
spark.conf.set("spark.jsl.settings.aws.credentials.secret_access_key", "MY_SECRET_ACCESS_KEY")
spark.conf.set("spark.jsl.settings.aws.region", "my-region")
```

MFA Configuration:

If your AWS account is configured with MFA, you first need to get temporary credentials and add the session token to the configuration, as shown in the example below for logging:

```python
spark.conf.set("spark.jsl.settings.aws.credentials.session_token", "MY_TOKEN")
```

An example of a bash script that gets temporary AWS credentials can be found here. This script requires three arguments:

```sh
./aws_tmp_credentials.sh iam_user duration serial_number
```

Quick example:

```scala
import com.johnsnowlabs.nlp.pretrained.PretrainedPipeline
import com.johnsnowlabs.nlp.SparkNLP

SparkNLP.version()

val testData = spark.createDataFrame(Seq(
  (1, "Google has announced the release of a beta version of the popular TensorFlow machine learning library"),
  (2, "Donald John Trump (born June 14, 1946) is the 45th and current president of the United States")
)).toDF("id", "text")

val pipeline = PretrainedPipeline("explain_document_dl", lang = "en")

val annotation = pipeline.transform(testData)

annotation.show()
/*
import com.johnsnowlabs.nlp.pretrained.PretrainedPipeline
import com.johnsnowlabs.nlp.SparkNLP
2.5.0
testData: org.apache.spark.sql.DataFrame = [id: int, text: string]
pipeline: com.johnsnowlabs.nlp.pretrained.PretrainedPipeline = PretrainedPipeline(explain_document_dl,en,public/models)
annotation: org.apache.spark.sql.DataFrame = [id: int, text: string ... 10 more fields]
+---+--------------------+--------------------+--------------------+--------------------+--------------------+--------------------+--------------------+--------------------+--------------------+--------------------+--------------------+
| id| text| document| token| sentence| checked| lemma| stem| pos| embeddings| ner| entities|
+---+--------------------+--------------------+--------------------+--------------------+--------------------+--------------------+--------------------+--------------------+--------------------+--------------------+--------------------+
| 1|Google has announ...|[[document, 0, 10...|[[token, 0, 5, Go...|[[document, 0, 10...|[[token, 0, 5, Go...|[[token, 0, 5, Go...|[[token, 0, 5, go...|[[pos, 0, 5, NNP,...|[[word_embeddings...|[[named_entity, 0...|[[chunk, 0, 5, Go...|
| 2|The Paris metro w...|[[document, 0, 11...|[[token, 0, 2, Th...|[[document, 0, 11...|[[token, 0, 2, Th...|[[token, 0, 2, Th...|[[token, 0, 2, th...|[[pos, 0, 2, DT, ...|[[word_embeddings...|[[named_entity, 0...|[[chunk, 4, 8, Pa...|
+---+--------------------+--------------------+--------------------+--------------------+--------------------+--------------------+--------------------+--------------------+--------------------+--------------------+--------------------+
*/

annotation.select("entities.result").show(false)
/*
+----------------------------------+
|result                            |
+----------------------------------+
|[Google, TensorFlow]              |
|[Donald John Trump, United States]|
+----------------------------------+
*/
```

There are functions in Spark NLP that will list all the available Pipelines of a particular language for you:

```scala
import com.johnsnowlabs.nlp.pretrained.ResourceDownloader
ResourceDownloader.showPublicPipelines(lang = "en")
/*
+--------------------------------------------+------+---------+
| Pipeline                                   | lang | version |
+--------------------------------------------+------+---------+
| dependency_parse                           |  en  | 2.0.2   |
| analyze_sentiment_ml                       |  en  | 2.0.2   |
| check_spelling                             |  en  | 2.1.0   |
| match_datetime                             |  en  | 2.1.0   |
...
| explain_document_ml                        |  en  | 3.1.3   |
+--------------------------------------------+------+---------+
*/
```

Or if we want to check for a particular version:

```scala
import com.johnsnowlabs.nlp.pretrained.ResourceDownloader
ResourceDownloader.showPublicPipelines(lang = "en", version = "3.1.0")
/*
+---------------------------------------+------+---------+
| Pipeline                              | lang | version |
+---------------------------------------+------+---------+
| dependency_parse                      |  en  | 2.0.2   |
...
| clean_slang                           |  en  | 3.0.0   |
| clean_pattern                         |  en  | 3.0.0   |
| check_spelling                        |  en  | 3.0.0   |
| dependency_parse                      |  en  | 3.0.0   |
+---------------------------------------+------+---------+
*/
```

**Some selected languages:** Afrikaans, Arabic, Armenian, Basque, Bengali, Breton, Bulgarian, Catalan, Czech, Dutch, English, Esperanto, Finnish, French, Galician, German, Greek, Hausa, Hebrew, Hindi, Hungarian, Indonesian, Irish, Italian, Japanese, Latin, Latvian, Marathi, Norwegian, Persian, Polish, Portuguese, Romanian, Russian, Slovak, Slovenian, Somali, Southern Sotho, Spanish, Swahili, Swedish, Tswana, Turkish, Ukrainian, Zulu

Quick online example:

```python
from sparknlp.annotator import NerDLModel

# load NER model trained by deep learning approach and GloVe word embeddings
ner_dl = NerDLModel.pretrained('ner_dl')
# load NER model trained by deep learning approach and BERT word embeddings
ner_bert = NerDLModel.pretrained('ner_dl_bert')
```

```scala
import com.johnsnowlabs.nlp.annotator._

// load French POS tagger model trained by Universal Dependencies
val french_pos = PerceptronModel.pretrained("pos_ud_gsd", lang = "fr")
// load Italian LemmatizerModel
val italian_lemma = LemmatizerModel.pretrained("lemma_dxc", lang = "it")
```

Quick offline example:

Loading a PerceptronModel annotator model inside a Spark NLP Pipeline:

```scala
val french_pos = PerceptronModel.load("/tmp/pos_ud_gsd_fr_2.0.2_2.4_1556531457346/")
  .setInputCols("document", "token")
  .setOutputCol("pos")
```

There are functions in Spark NLP that will list all the available Models of a particular Annotator and language for you:

```scala
import com.johnsnowlabs.nlp.pretrained.ResourceDownloader
ResourceDownloader.showPublicModels(annotator = "NerDLModel", lang = "en")
/*
+---------------------------------------------+------+---------+
| Model                                       | lang | version |
+---------------------------------------------+------+---------+
| onto_100                                    |  en  | 2.1.0   |
| onto_300                                    |  en  | 2.1.0   |
| ner_dl_bert                                 |  en  | 2.2.0   |
| onto_100                                    |  en  | 2.4.0   |
| ner_conll_elmo                              |  en  | 3.2.2   |
+---------------------------------------------+------+---------+
*/
```

Or if we want to check for a particular version:

```scala
import com.johnsnowlabs.nlp.pretrained.ResourceDownloader
ResourceDownloader.showPublicModels(annotator = "NerDLModel", lang = "en", version = "3.1.0")
/*
+----------------------------+------+---------+
| Model                      | lang | version |
+----------------------------+------+---------+
| onto_100                   |  en  | 2.1.0   |
| ner_aspect_based_sentiment |  en  | 2.6.2   |
| ner_weibo_glove_840B_300d  |  en  | 2.6.2   |
| nerdl_atis_840b_300d       |  en  | 2.7.1   |
| nerdl_snips_100d           |  en  | 2.7.3   |
+----------------------------+------+---------+
*/
```

And to see a list of available annotators, you can use:

```scala
import com.johnsnowlabs.nlp.pretrained.ResourceDownloader
ResourceDownloader.showAvailableAnnotators()
/*
AlbertEmbeddings
AlbertForTokenClassification
AssertionDLModel
...
XlmRoBertaSentenceEmbeddings
XlnetEmbeddings
*/
```

Spark NLP library and all the pre-trained models/pipelines can be used entirely offline with no access to the Internet. If you are behind a proxy or a firewall with no access to the Maven repository (to download packages) and/or no access to S3 (to automatically download models and pipelines), you can simply follow these instructions to use Spark NLP offline without any limitations:

Instead of using the .pretrained() function to download pretrained models (or PretrainedPipeline for pipelines), you will need to manually download your pipeline/model from Models Hub, extract it, and load it.

Example of a SparkSession with the Fat JAR to have Spark NLP offline:

```python
from pyspark.sql import SparkSession

spark = (
    SparkSession.builder
    .appName("Spark NLP")
    .master("local[*]")
    .config("spark.driver.memory", "16G")
    .config("spark.driver.maxResultSize", "0")
    .config("spark.kryoserializer.buffer.max", "2000M")
    .config("spark.jars", "/tmp/spark-nlp-assembly-5.1.3.jar")
    .getOrCreate()
)
```

On a cluster, the Fat JAR must be on a distributed file system reachable by all nodes (e.g., hdfs:///tmp/spark-nlp-assembly-5.1.3.jar).

Example of using pretrained Models and Pipelines offline:

```python
from pyspark.ml import PipelineModel
from sparknlp.annotator import PerceptronModel

# instead of using pretrained() for online:
# french_pos = PerceptronModel.pretrained("pos_ud_gsd", lang="fr")
# you download this model, extract it, and use .load
french_pos = (
    PerceptronModel.load("/tmp/pos_ud_gsd_fr_2.0.2_2.4_1556531457346/")
    .setInputCols("document", "token")
    .setOutputCol("pos")
)

# example for pipelines
# instead of using PretrainedPipeline
# pipeline = PretrainedPipeline('explain_document_dl', lang='en')
# you download this pipeline, extract it, and use PipelineModel
pipeline = PipelineModel.load("/tmp/explain_document_dl_en_2.0.2_2.4_1556530585689/")
```
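The two extracted folder names used in the offline example appear to share one layout: resource name, language, version, a Spark line, and a timestamp, joined by underscores. That layout is inferred from these two examples only, not from a documented specification. Under that assumption, the sketch below splits a folder name from the right, since the resource name itself may contain underscores; `parse_resource_folder` and `ResourceName` are hypothetical.

```python
from typing import NamedTuple


class ResourceName(NamedTuple):
    name: str
    lang: str
    version: str
    spark_line: str  # assumed: the Apache Spark line the resource was built for
    timestamp: str   # assumed: a build timestamp


def parse_resource_folder(path: str) -> ResourceName:
    """Split an extracted Models Hub folder name into its (assumed) parts."""
    folder = path.rstrip("/").rsplit("/", 1)[-1]
    parts = folder.rsplit("_", 4)
    if len(parts) != 5:
        raise ValueError(f"unexpected folder name: {folder}")
    return ResourceName(*parts)


print(parse_resource_folder("/tmp/pos_ud_gsd_fr_2.0.2_2.4_1556531457346/"))
```

For the POS model, the parsed name and language (`pos_ud_gsd`, `fr`) match the arguments of the commented-out online `pretrained()` call, which is a handy sanity check when switching between online and offline setups.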

On a cluster, the extracted folder must likewise be on a distributed file system (e.g., hdfs:///tmp/explain_document_dl_en_2.0.2_2.4_1556530585689/).

Need more examples? Check out our dedicated Spark NLP Examples repository, which showcases all Spark NLP use cases!

Also, don't forget to check Spark NLP in Action built by Streamlit.

Check our Articles and Videos page here

We have published a paper that you can cite for the Spark NLP library:

```bibtex
@article{KOCAMAN2021100058,
  title = {Spark NLP: Natural language understanding at scale},
  journal = {Software Impacts},
  pages = {100058},
  year = {2021},
  issn = {2665-9638},
  doi = {https://doi.org/10.1016/j.simpa.2021.100058},
  url = {https://www.sciencedirect.com/science/article/pii/S2665963.2.300063},
  author = {Veysel Kocaman and David Talby},
  keywords = {Spark, Natural language processing, Deep learning, Tensorflow, Cluster},
  abstract = {Spark NLP is a Natural Language Processing (NLP) library built on top of Apache Spark ML. It provides simple, performant & accurate NLP annotations for machine learning pipelines that can scale easily in a distributed environment. Spark NLP comes with 1100+ pretrained pipelines and models in more than 192+ languages. It supports nearly all the NLP tasks and modules that can be used seamlessly in a cluster. Downloaded more than 2.7 million times and experiencing 9x growth since January 2020, Spark NLP is used by 54% of healthcare organizations as the world’s most widely used NLP library in the enterprise.}
}
```

We appreciate any sort of contributions:

Clone the repo and submit your pull requests, or create issues directly in this repo.
