@ai-sdk/openai

The OpenAI provider contains language model support for the OpenAI chat and completion APIs. It creates language model objects that can be used with the `generateText`, `streamText`, `generateObject`, and `streamObject` AI functions.

The OpenAI provider is available in the @ai-sdk/openai module. You can install it with:

npm i @ai-sdk/openai

You can import OpenAI from @ai-sdk/openai and initialize a provider instance with various settings:

import { OpenAI } from '@ai-sdk/openai';

const openai = new OpenAI({
  baseUrl: '', // optional base URL for proxies etc.
  apiKey: '', // optional API key, defaults to env property OPENAI_API_KEY
  organization: '', // optional organization
});
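
The comment on apiKey says the key falls back to the OPENAI_API_KEY environment property. That fallback order can be sketched roughly as follows. Note that resolveApiKey and the Env type are hypothetical names written for illustration and are not part of @ai-sdk/openai; the environment is passed in explicitly (for example process.env in Node.js) so the snippet has no dependencies.

```typescript
// Hypothetical helper, not part of @ai-sdk/openai: illustrates the fallback
// described in the settings comment (explicit apiKey first, then OPENAI_API_KEY).
type Env = Record<string, string | undefined>;

function resolveApiKey(explicit: string | undefined, env: Env): string {
  // Assumption: an empty string is treated the same as "not provided".
  if (explicit !== undefined && explicit !== '') {
    return explicit;
  }
  const fromEnv = env['OPENAI_API_KEY'];
  if (fromEnv !== undefined && fromEnv !== '') {
    return fromEnv;
  }
  throw new Error('No API key: pass apiKey or set OPENAI_API_KEY');
}
```

Whether the real provider treats an empty string as unset is an assumption here; the sketch only shows the order of precedence.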

The AI SDK also provides a shorthand openai import with an OpenAI provider instance that uses defaults:

import { openai } from '@ai-sdk/openai';

You can create models that call the OpenAI chat API using the .chat() factory method.

The first argument is the model id, e.g. gpt-4.

The OpenAI chat models support tool calls and some have multi-modal capabilities.

const model = openai.chat('gpt-3.5-turbo');

OpenAI chat models also support some model-specific settings that are not part of the standard call settings. You can pass them as an options argument:

const model = openai.chat('gpt-3.5-turbo', {
  logitBias: {
    // optional likelihood for specific tokens
    '50256': -100,
  },
  user: 'test-user', // optional unique user identifier
});
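
The logitBias setting maps token ids to a bias value. In the OpenAI API the documented range for logit_bias values is -100 to 100, where -100 effectively bans a token. A minimal sketch of building such a map is shown here; buildLogitBias is a hypothetical helper for illustration and is not part of the SDK.

```typescript
// Hypothetical helper, not part of @ai-sdk/openai.
// Builds a logitBias map from [tokenId, bias] pairs, clamping each bias
// to the -100..100 range that the OpenAI API documents for logit_bias.
function buildLogitBias(pairs: Array<[number, number]>): Record<string, number> {
  const bias: Record<string, number> = {};
  for (const [tokenId, value] of pairs) {
    bias[String(tokenId)] = Math.max(-100, Math.min(100, value));
  }
  return bias;
}

// Produces the same shape as the logitBias object in the example above.
const logitBias = buildLogitBias([[50256, -100]]);
```

Clamping on the client side is a design choice of this sketch: it keeps an out-of-range value from reaching the API, at the cost of silently adjusting the caller's input.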

You can create models that call the OpenAI completions API using the .completion() factory method.

The first argument is the model id.

Currently only gpt-3.5-turbo-instruct is supported.

const model = openai.completion('gpt-3.5-turbo-instruct');
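
Because .completion() currently supports only gpt-3.5-turbo-instruct, code that accepts arbitrary model ids may need to choose between the two factory methods. The sketch below assumes a provider object that exposes .chat() and .completion() as described above. ModelFactory, COMPLETION_MODEL_IDS, and createModel are hypothetical names, and a stub stands in for the real provider so the snippet runs without network access.

```typescript
// Structural stand-in for the provider described above (hypothetical type).
interface ModelFactory<M> {
  chat(modelId: string): M;
  completion(modelId: string): M;
}

// Model ids served through the completions API, per the text above.
const COMPLETION_MODEL_IDS = new Set(['gpt-3.5-turbo-instruct']);

// Hypothetical helper: pick the factory method that matches the model id.
function createModel<M>(provider: ModelFactory<M>, modelId: string): M {
  return COMPLETION_MODEL_IDS.has(modelId)
    ? provider.completion(modelId)
    : provider.chat(modelId);
}

// Stub provider that only records which factory method was used.
const stubProvider: ModelFactory<string> = {
  chat: (id) => `chat:${id}`,
  completion: (id) => `completion:${id}`,
};

const chatModel = createModel(stubProvider, 'gpt-3.5-turbo');
const completionModel = createModel(stubProvider, 'gpt-3.5-turbo-instruct');
```

Keeping the supported ids in one set means the routing needs a single change if more completion models become available.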

OpenAI completion models also support some model-specific settings that are not part of the standard call settings. You can pass them as an options argument:

const model = openai.completion('gpt-3.5-turbo-instruct', {
  echo: true, // optional, echo the prompt in addition to the completion
  logitBias: {
    // optional likelihood for specific tokens
    '50256': -100,
  },
  suffix: 'some text', // optional suffix that comes after a completion of inserted text
  user: 'test-user', // optional unique user identifier
});

FAQs

The **[OpenAI provider](https://sdk.vercel.ai/providers/ai-sdk-providers/openai)** for the [AI SDK](https://sdk.vercel.ai/docs) contains language model support for the OpenAI chat and completion APIs and embedding model support for the OpenAI embeddings API.

The npm package @ai-sdk/openai receives 582,646 weekly downloads, so its popularity is classified as popular.

We found that @ai-sdk/openai demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 2 open source maintainers collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Research

Security News

The Socket Research Team has discovered six new malicious npm packages linked to North Korea’s Lazarus Group, designed to steal credentials and deploy backdoors.

Security News

Socket CEO Feross Aboukhadijeh discusses the open web, open source security, and how Socket tackles software supply chain attacks on The Pair Program podcast.

Security News

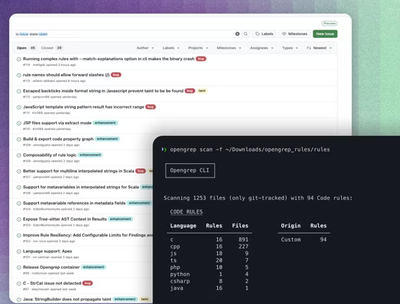

Opengrep continues building momentum with the alpha release of its Playground tool, demonstrating the project's rapid evolution just two months after its initial launch.