Company News

Socket Named to Rising in Cyber 2026 List of Top Cybersecurity Startups

Socket was named to the Rising in Cyber 2026 list, recognizing 30 private cybersecurity startups selected by CISOs and security executives.

@github/copilot-sdk


TypeScript SDK for programmatic control of GitHub Copilot CLI via JSON-RPC.

Note: This SDK is in public preview and may change in breaking ways.

npm install @github/copilot-sdk

Try the interactive chat sample (from the repo root):

cd nodejs

npm ci

npm run build

cd samples

npm install

npm start

import { CopilotClient, approveAll } from "@github/copilot-sdk";

// Create and start client

const client = new CopilotClient();

await client.start();

// Create a session (onPermissionRequest is required)

const session = await client.createSession({

model: "gpt-5",

onPermissionRequest: approveAll,

});

// Wait for response using typed event handlers

const done = new Promise<void>((resolve) => {

session.on("assistant.message", (event) => {

console.log(event.data.content);

});

session.on("session.idle", () => {

resolve();

});

});

// Send a message and wait for completion

await session.send({ prompt: "What is 2+2?" });

await done;

// Clean up

await session.disconnect();

await client.stop();

Sessions also support Symbol.asyncDispose for use with await using (TypeScript 5.2+/Node.js 18.0+):

await using session = await client.createSession({

model: "gpt-5",

onPermissionRequest: approveAll,

});

// session is automatically disconnected when leaving scope
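Some runtimes or TypeScript targets do not support `await using`. On those, you can approximate the same cleanup guarantee with try/finally. The following is a minimal sketch, not part of the SDK. `withSession` and the `Disconnectable` interface are hypothetical names. The only assumption about the session is that it exposes the `disconnect(): Promise<void>` method documented in the session API.

```typescript
// Hypothetical structural type: anything with the documented disconnect() method.
interface Disconnectable {
  disconnect(): Promise<void>;
}

// Hypothetical helper: create a session, run `body`, and always disconnect,
// even if `body` throws. This mirrors what `await using` does at scope exit.
async function withSession<S extends Disconnectable, T>(
  create: () => Promise<S>,
  body: (session: S) => Promise<T>,
): Promise<T> {
  const session = await create();
  try {
    return await body(session);
  } finally {
    await session.disconnect();
  }
}
```

With the real client, `create` would be something like `() => client.createSession({ model: "gpt-5", onPermissionRequest: approveAll })`.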

new CopilotClient(options?: CopilotClientOptions)

Options:

- cliPath?: string - Path to CLI executable (default: uses COPILOT_CLI_PATH env var or bundled instance)
- cliArgs?: string[] - Extra arguments prepended before SDK-managed flags (e.g. ["./dist-cli/index.js"] when using node)
- cliUrl?: string - URL of existing CLI server to connect to (e.g., "localhost:8080", "http://127.0.0.1:9000", or just "8080"). When provided, the client will not spawn a CLI process.
- port?: number - Server port (default: 0 for random)
- useStdio?: boolean - Use stdio transport instead of TCP (default: true)
- logLevel?: string - Log level (default: "info")
- autoStart?: boolean - Auto-start server (default: true)
- githubToken?: string - GitHub token for authentication. When provided, takes priority over other auth methods.
- useLoggedInUser?: boolean - Whether to use logged-in user for authentication (default: true, but false when githubToken is provided). Cannot be used with cliUrl.
- telemetry?: TelemetryConfig - OpenTelemetry configuration for the CLI process. Providing this object enables telemetry; no separate flag is needed. See Telemetry below.
- onGetTraceContext?: TraceContextProvider - Advanced: callback for linking your application's own OpenTelemetry spans into the same distributed trace as the CLI's spans. Not needed for normal telemetry collection. See Telemetry below.

start(): Promise<void>

Start the CLI server and establish connection.

stop(): Promise<Error[]>

Stop the server and close all sessions. Returns a list of any errors encountered during cleanup.

forceStop(): Promise<void>

Force-stop the CLI server without graceful cleanup. Use this when stop() takes too long.

createSession(config?: SessionConfig): Promise<CopilotSession>

Create a new conversation session.

Config:

- sessionId?: string - Custom session ID.
- model?: string - Model to use ("gpt-5", "claude-sonnet-4.5", etc.). Required when using custom provider.
- reasoningEffort?: "low" | "medium" | "high" | "xhigh" - Reasoning effort level for models that support it. Use listModels() to check which models support this option.
- tools?: Tool[] - Custom tools exposed to the CLI
- systemMessage?: SystemMessageConfig - System message customization (see below)
- infiniteSessions?: InfiniteSessionConfig - Configure automatic context compaction (see below)
- provider?: ProviderConfig - Custom API provider configuration (BYOK - Bring Your Own Key). See Custom Providers section.
- onPermissionRequest: PermissionHandler - Required. Handler called before each tool execution to approve or deny it. Use approveAll to allow everything, or provide a custom function for fine-grained control. See Permission Handling section.
- onUserInputRequest?: UserInputHandler - Handler for user input requests from the agent. Enables the ask_user tool. See User Input Requests section.
- onElicitationRequest?: ElicitationHandler - Handler for elicitation requests dispatched by the server. Enables this client to present form-based UI dialogs on behalf of the agent or other session participants. See Elicitation Requests section.
- hooks?: SessionHooks - Hook handlers for session lifecycle events. See Session Hooks section.

resumeSession(sessionId: string, config?: ResumeSessionConfig): Promise<CopilotSession>

Resume an existing session. Returns the session with workspacePath populated if infinite sessions were enabled.

ping(message?: string): Promise<{ message: string; timestamp: number }>

Ping the server to check connectivity.

getState(): ConnectionState

Get the current connection state.

listSessions(filter?: SessionListFilter): Promise<SessionMetadata[]>

List all available sessions. Optionally filter by working directory context.

SessionMetadata:

- sessionId: string - Unique session identifier
- startTime: Date - When the session was created
- modifiedTime: Date - When the session was last modified
- summary?: string - Optional session summary
- isRemote: boolean - Whether the session is remote
- context?: SessionContext - Working directory context from session creation

SessionContext:

- cwd: string - Working directory where the session was created
- gitRoot?: string - Git repository root (if in a git repo)
- repository?: string - GitHub repository in "owner/repo" format
- branch?: string - Current git branch

deleteSession(sessionId: string): Promise<void>

Delete a session and its data from disk.

getForegroundSessionId(): Promise<string | undefined>

Get the ID of the session currently displayed in the TUI. Only available when connecting to a server running in TUI+server mode (--ui-server).

setForegroundSessionId(sessionId: string): Promise<void>

Request the TUI to switch to displaying the specified session. Only available in TUI+server mode.

on(eventType: SessionLifecycleEventType, handler): () => void

Subscribe to a specific session lifecycle event type. Returns an unsubscribe function.

const unsubscribe = client.on("session.foreground", (event) => {

console.log(`Session ${event.sessionId} is now in foreground`);

});

on(handler: SessionLifecycleHandler): () => void

Subscribe to all session lifecycle events. Returns an unsubscribe function.

const unsubscribe = client.on((event) => {

console.log(`${event.type}: ${event.sessionId}`);

});

Lifecycle Event Types:

- session.created - A new session was created
- session.deleted - A session was deleted
- session.updated - A session was updated (e.g., new messages)
- session.foreground - A session became the foreground session in the TUI
- session.background - A session is no longer the foreground session

CopilotSession

Represents a single conversation session.

sessionId: string

The unique identifier for this session.

workspacePath?: string

Path to the session workspace directory when infinite sessions are enabled. Contains the checkpoints/ and files/ subdirectories and a plan.md file. Undefined if infinite sessions are disabled.

send(options: MessageOptions): Promise<string>

Send a message to the session. Returns immediately after the message is queued; use event handlers or sendAndWait() to wait for completion.

Options:

- prompt: string - The message/prompt to send
- attachments?: Array<{type, path, displayName}> - File attachments
- mode?: "enqueue" | "immediate" - Delivery mode

Returns the message ID.

sendAndWait(options: MessageOptions, timeout?: number): Promise<AssistantMessageEvent | undefined>

Send a message and wait until the session becomes idle.

Options:

- prompt: string - The message/prompt to send
- attachments?: Array<{type, path, displayName}> - File attachments
- mode?: "enqueue" | "immediate" - Delivery mode

The optional second argument, timeout, is a timeout in milliseconds. Returns the final assistant message event, or undefined if none was received.

on(eventType: string, handler: TypedSessionEventHandler): () => void

Subscribe to a specific event type. The handler receives properly typed events. Returns an unsubscribe function.

// Listen for specific event types with full type inference

session.on("assistant.message", (event) => {

console.log(event.data.content); // TypeScript knows about event.data.content

});

session.on("session.idle", () => {

console.log("Session is idle");

});

// Listen to streaming events

session.on("assistant.message_delta", (event) => {

process.stdout.write(event.data.deltaContent);

});

on(handler: SessionEventHandler): () => void

Subscribe to all session events. Returns an unsubscribe function.

const unsubscribe = session.on((event) => {

// Handle any event type

console.log(event.type, event);

});

// Later...

unsubscribe();
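The pattern behind sendAndWait() can be sketched with the event API. Keep the latest `assistant.message`, resolve on `session.idle`, and fail after a timeout. This is an illustration, not the SDK's implementation. `SessionLike` is a hypothetical, simplified stand-in for CopilotSession that covers only `on` and `send`, and `sendAndCollect` is a hypothetical name. In real code, sendAndWait() is the supported way to do this.

```typescript
// Hypothetical, simplified stand-in for the parts of CopilotSession used here.
interface SessionLike {
  on(eventType: string, handler: (event: { data?: any }) => void): () => void;
  send(options: { prompt: string }): Promise<string>;
}

// Hypothetical helper: send a prompt, keep the last assistant.message content,
// and resolve once session.idle fires (or reject after timeoutMs).
async function sendAndCollect(
  session: SessionLike,
  prompt: string,
  timeoutMs = 60_000,
): Promise<string | undefined> {
  let last: string | undefined;
  let timer: ReturnType<typeof setTimeout> | undefined;
  const unsubscribers: Array<() => void> = [];

  // Subscribe before sending so that no events are missed.
  const idle = new Promise<void>((resolve, reject) => {
    unsubscribers.push(
      session.on("assistant.message", (event) => {
        last = event.data?.content; // keep only the most recent final message
      }),
      session.on("session.idle", () => resolve()),
    );
    timer = setTimeout(() => reject(new Error(`Timed out after ${timeoutMs} ms`)), timeoutMs);
  });

  try {
    // Promise.all attaches handlers to both promises immediately, so a timeout
    // or a failed send() never becomes an unhandled rejection.
    await Promise.all([session.send({ prompt }), idle]);
    return last;
  } finally {
    clearTimeout(timer);
    unsubscribers.forEach((unsubscribe) => unsubscribe());
  }
}
```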

abort(): Promise<void>

Abort the currently processing message in this session.

getMessages(): Promise<SessionEvent[]>

Get all events/messages from this session.

disconnect(): Promise<void>

Disconnect the session and free resources. Session data on disk is preserved for later resumption.

capabilities: SessionCapabilities

Host capabilities reported when the session was created or resumed. Use this to check feature support before calling capability-gated APIs.

if (session.capabilities.ui?.elicitation) {

const ok = await session.ui.confirm("Deploy?");

}

Capabilities may change during the session, for example when another client with an elicitation handler joins or disconnects. The SDK automatically applies capabilities.changed events, so this property always reflects the current state.

ui: SessionUiApi

Interactive UI methods for showing dialogs to the user. Only available when the CLI host supports elicitation (session.capabilities.ui?.elicitation === true). See UI Elicitation for full details.

destroy(): Promise<void> (deprecated)

Deprecated; use disconnect() instead.

Sessions emit various events during processing:

- user.message - User message added
- assistant.message - Assistant response
- assistant.message_delta - Streaming response chunk
- tool.execution_start - Tool execution started
- tool.execution_complete - Tool execution completed
- command.execute - Command dispatch request (handled internally by the SDK)
- commands.changed - Command registration changed

See the SessionEvent type in the source for full details.

The SDK supports image attachments via the attachments parameter. You can attach images by providing their file path, or by passing base64-encoded data directly using a blob attachment:

// File attachment — runtime reads from disk

await session.send({

prompt: "What's in this image?",

attachments: [

{

type: "file",

path: "/path/to/image.jpg",

},

],

});

// Blob attachment — provide base64 data directly

await session.send({

prompt: "What's in this image?",

attachments: [

{

type: "blob",

data: base64ImageData,

mimeType: "image/png",

},

],

});
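If the image is already in memory (for example, downloaded rather than read from disk), a blob attachment needs base64-encoded bytes and a MIME type. The following is a minimal sketch using Node's `Buffer`. `toBlobAttachment` and the extension-to-MIME table are illustrative assumptions. The returned object only mirrors the `type`/`data`/`mimeType` fields shown above.

```typescript
import { Buffer } from "node:buffer";

// Illustrative extension-to-MIME mapping; extend as needed.
const MIME_BY_EXTENSION: Record<string, string> = {
  jpg: "image/jpeg",
  jpeg: "image/jpeg",
  png: "image/png",
  gif: "image/gif",
  webp: "image/webp",
};

// Shape mirrors the blob attachment fields from the example above.
interface BlobAttachment {
  type: "blob";
  data: string; // base64-encoded bytes
  mimeType: string;
}

// Hypothetical helper: base64-encode raw bytes and infer the MIME type from a file name.
function toBlobAttachment(bytes: Uint8Array, fileName: string): BlobAttachment {
  const ext = fileName.split(".").pop()?.toLowerCase() ?? "";
  const mimeType = MIME_BY_EXTENSION[ext];
  if (!mimeType) {
    throw new Error(`Unsupported image extension: "${ext}"`);
  }
  return { type: "blob", data: Buffer.from(bytes).toString("base64"), mimeType };
}
```

For example, `attachments: [toBlobAttachment(await readFile("chart.png"), "chart.png")]` with `readFile` from `node:fs/promises`, since a Node `Buffer` is a `Uint8Array`.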

Supported image formats include JPG, PNG, GIF, and other common image types. The agent's view tool can also read images directly from the filesystem, so you can ask questions like:

await session.send({ prompt: "What does the most recent jpg in this directory portray?" });

Enable streaming to receive assistant response chunks as they're generated:

const session = await client.createSession({

model: "gpt-5",

onPermissionRequest: approveAll,

streaming: true,

});

// Wait for completion using typed event handlers

const done = new Promise<void>((resolve) => {

session.on("assistant.message_delta", (event) => {

// Streaming message chunk - print incrementally

process.stdout.write(event.data.deltaContent);

});

session.on("assistant.reasoning_delta", (event) => {

// Streaming reasoning chunk (if model supports reasoning)

process.stdout.write(event.data.deltaContent);

});

session.on("assistant.message", (event) => {

// Final message - complete content

console.log("\n--- Final message ---");

console.log(event.data.content);

});

session.on("assistant.reasoning", (event) => {

// Final reasoning content (if model supports reasoning)

console.log("--- Reasoning ---");

console.log(event.data.content);

});

session.on("session.idle", () => {

// Session finished processing

resolve();

});

});

await session.send({ prompt: "Tell me a short story" });

await done; // Wait for streaming to complete

When streaming: true:

- assistant.message_delta events are sent with deltaContent containing incremental text
- assistant.reasoning_delta events are sent with deltaContent for reasoning/chain-of-thought (model-dependent)
- Accumulate deltaContent values to build the full response progressively
- assistant.message and assistant.reasoning events contain the complete content

Note: assistant.message and assistant.reasoning (final events) are always sent regardless of the streaming setting.
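The following is a minimal sketch of accumulating deltaContent chunks instead of printing them. It assumes only the event names and the `data.deltaContent` field shown in the streaming example. `StreamAccumulator` is a hypothetical helper. Keeping reasoning separate from the answer text is a design choice, not an SDK requirement.

```typescript
// Hypothetical helper that rebuilds streamed text from delta events.
class StreamAccumulator {
  private message = "";
  private reasoning = "";

  // Feed every event you receive; unrelated event types are ignored.
  handle(event: { type: string; data?: { deltaContent?: string } }): void {
    const chunk = event.data?.deltaContent ?? "";
    if (event.type === "assistant.message_delta") this.message += chunk;
    else if (event.type === "assistant.reasoning_delta") this.reasoning += chunk;
  }

  get text(): string {
    return this.message;
  }

  get reasoningText(): string {
    return this.reasoning;
  }
}
```

You could wire it up as `session.on((event) => acc.handle(event))`, possibly with a cast depending on the SDK's event types. Once assistant.message arrives, `acc.text` should match its `data.content`.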

const client = new CopilotClient({ autoStart: false });

// Start manually

await client.start();

// Use client...

// Stop manually

await client.stop();

You can let the CLI call back into your process when the model needs capabilities you own. Use defineTool with Zod schemas for type-safe tool definitions:

import { z } from "zod";

import { CopilotClient, approveAll, defineTool } from "@github/copilot-sdk";

const session = await client.createSession({

model: "gpt-5",

onPermissionRequest: approveAll,

tools: [

defineTool("lookup_issue", {

description: "Fetch issue details from our tracker",

parameters: z.object({

id: z.string().describe("Issue identifier"),

}),

handler: async ({ id }) => {

const issue = await fetchIssue(id);

return issue;

},

}),

],

});

When Copilot invokes lookup_issue, the client automatically runs your handler and responds to the CLI. Handlers can return any JSON-serializable value (automatically wrapped), a simple string, or a ToolResultObject for full control over result metadata. Raw JSON schemas are also supported if Zod isn't desired.

If you register a tool with the same name as a built-in CLI tool (e.g. edit_file, read_file), the SDK will throw an error unless you explicitly opt in by setting overridesBuiltInTool: true. This flag signals that you intend to replace the built-in tool with your custom implementation.

defineTool("edit_file", {

description: "Custom file editor with project-specific validation",

parameters: z.object({ path: z.string(), content: z.string() }),

overridesBuiltInTool: true,

handler: async ({ path, content }) => {

/* your logic */

},

});

Set skipPermission: true on a tool definition to allow it to execute without triggering a permission prompt:

defineTool("safe_lookup", {

description: "A read-only lookup that needs no confirmation",

parameters: z.object({ id: z.string() }),

skipPermission: true,

handler: async ({ id }) => {

/* your logic */

},

});

Register slash commands so that users of the CLI's TUI can invoke custom actions via /commandName. Each command has a name, optional description, and a handler called when the user executes it.

const session = await client.createSession({

onPermissionRequest: approveAll,

commands: [

{

name: "deploy",

description: "Deploy the app to production",

handler: async ({ commandName, args }) => {

console.log(`Deploying with args: ${args}`);

// Do work here — any thrown error is reported back to the CLI

},

},

],

});

When the user types /deploy staging in the CLI, the SDK receives a command.execute event, routes it to your handler, and automatically responds to the CLI. If the handler throws, the error message is forwarded.

Commands are sent to the CLI on both createSession and resumeSession, so you can update the command set when resuming.
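For commands that take arguments, such as `/deploy staging`, the handler usually needs to split and validate `args`. The following sketch assumes `args` is the raw text after the command name, which is inferred from the `/deploy staging` example; check the SDK's types. `splitCommandArgs`, `parseDeployArgs`, and the environment list are hypothetical.

```typescript
// Splits raw command arguments on whitespace, keeping "double" or 'single' quoted spans together.
function splitCommandArgs(args: string): string[] {
  const tokens: string[] = [];
  for (const match of Array.from(args.matchAll(/"([^"]*)"|'([^']*)'|(\S+)/g))) {
    // Exactly one capture group participates in each match.
    tokens.push(match[1] ?? match[2] ?? match[3]);
  }
  return tokens;
}

// Hypothetical set of valid targets for the /deploy example.
const DEPLOY_TARGETS = new Set(["production", "staging", "dev"]);

// Hypothetical validation: the first token must be a known environment; the rest are flags.
function parseDeployArgs(args: string): { env: string; flags: string[] } {
  const [env, ...flags] = splitCommandArgs(args);
  if (!env || !DEPLOY_TARGETS.has(env)) {
    throw new Error(`Usage: /deploy <${Array.from(DEPLOY_TARGETS).join("|")}> [flags]`);
  }
  return { env, flags };
}
```

Because errors thrown by a command handler are forwarded to the CLI, calling `parseDeployArgs(args)` inside the handler shows the usage message to the user on bad input.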

When the session has elicitation support — either from the CLI's TUI or from another client that registered an onElicitationRequest handler (see Elicitation Requests) — the SDK can request interactive form dialogs from the user. The session.ui object provides convenience methods built on a single generic elicitation RPC.

Capability check: Elicitation is only available when at least one connected participant advertises support. Always check session.capabilities.ui?.elicitation before calling UI methods; this property updates automatically as participants join and leave.

const session = await client.createSession({ onPermissionRequest: approveAll });

if (session.capabilities.ui?.elicitation) {

// Confirm dialog — returns boolean

const ok = await session.ui.confirm("Deploy to production?");

// Selection dialog — returns selected value or null

const env = await session.ui.select("Pick environment", ["production", "staging", "dev"]);

// Text input — returns string or null

const name = await session.ui.input("Project name:", {

title: "Name",

minLength: 1,

maxLength: 50,

});

// Generic elicitation with full schema control

const result = await session.ui.elicitation({

message: "Configure deployment",

requestedSchema: {

type: "object",

properties: {

region: { type: "string", enum: ["us-east", "eu-west"] },

dryRun: { type: "boolean", default: true },

},

required: ["region"],

},

});

// result.action: "accept" | "decline" | "cancel"

// result.content: { region: "us-east", dryRun: true } (when accepted)

}

All UI methods throw if elicitation is not supported by the host.
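An elicitation can come back declined or cancelled, so callers typically branch on `result.action` before reading `result.content`. The following is a minimal sketch with locally declared types that mirror the fields described in the comments above. `ElicitationResultLike` and `readDeployChoice` are hypothetical names, not SDK exports.

```typescript
// Local mirror of the documented result shape (an assumption, not the SDK type).
type ElicitationResultLike = {
  action: "accept" | "decline" | "cancel";
  content?: Record<string, unknown>;
};

// Hypothetical: extract the deployment choices, or null if the user backed out.
function readDeployChoice(
  result: ElicitationResultLike,
): { region: string; dryRun: boolean } | null {
  if (result.action !== "accept" || !result.content) return null;
  const { region, dryRun } = result.content;
  if (typeof region !== "string") {
    throw new Error("Accepted elicitation is missing the required 'region' field");
  }
  // dryRun has a schema default of true; fall back to it if the field is absent.
  return { region, dryRun: typeof dryRun === "boolean" ? dryRun : true };
}
```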

Control the system prompt using systemMessage in session config:

const session = await client.createSession({

model: "gpt-5",

onPermissionRequest: approveAll,

systemMessage: {

content: `

<workflow_rules>

- Always check for security vulnerabilities

- Suggest performance improvements when applicable

</workflow_rules>

`,

},

});

The SDK auto-injects environment context, tool instructions, and security guardrails. The default CLI persona is preserved, and your content is appended after SDK-managed sections. To change the persona or fully redefine the prompt, use mode: "replace" or mode: "customize".

Use mode: "customize" to selectively override individual sections of the prompt while preserving the rest:

import { SYSTEM_PROMPT_SECTIONS } from "@github/copilot-sdk";

import type { SectionOverride, SystemPromptSection } from "@github/copilot-sdk";

const session = await client.createSession({

model: "gpt-5",

onPermissionRequest: approveAll,

systemMessage: {

mode: "customize",

sections: {

// Replace the tone/style section

tone: {

action: "replace",

content: "Respond in a warm, professional tone. Be thorough in explanations.",

},

// Remove coding-specific rules

code_change_rules: { action: "remove" },

// Append to existing guidelines

guidelines: { action: "append", content: "\n* Always cite data sources" },

},

// Additional instructions appended after all sections

content: "Focus on financial analysis and reporting.",

},

});

Available section IDs: identity, tone, tool_efficiency, environment_context, code_change_rules, guidelines, safety, tool_instructions, custom_instructions, last_instructions. Use the SYSTEM_PROMPT_SECTIONS constant for descriptions of each section.

Each section override supports four actions:

- replace - Replace the section content entirely
- remove - Remove the section from the prompt
- append - Add content after the existing section
- prepend - Add content before the existing section

Unknown section IDs are handled gracefully: content from replace/append/prepend overrides is appended to additional instructions, and remove overrides are silently ignored.
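To make the override semantics concrete, here is a small model of how the four actions and the unknown-ID fallback could combine. It illustrates the documented behavior only and is not the SDK's prompt builder. The section texts, the newline join, `OverrideSketch`, and `applySectionOverrides` are all assumptions.

```typescript
// Local sketch type (deliberately not named SectionOverride to avoid confusion with the SDK export).
type OverrideSketch =
  | { action: "replace" | "append" | "prepend"; content: string }
  | { action: "remove" };

// Hypothetical model: apply overrides to ordered default sections, then route
// content from unknown IDs into the additional instructions.
function applySectionOverrides(
  sections: Array<[id: string, content: string]>,
  overrides: Record<string, OverrideSketch>,
  additional = "",
): string {
  const known = new Set(sections.map(([id]) => id));
  const parts: string[] = [];
  for (const [id, content] of sections) {
    const o = overrides[id];
    if (!o) parts.push(content);
    else if (o.action === "replace") parts.push(o.content);
    else if (o.action === "append") parts.push(content + o.content);
    else if (o.action === "prepend") parts.push(o.content + content);
    // "remove": the section is dropped.
  }
  // Unknown IDs: replace/append/prepend content joins the additional instructions; remove is ignored.
  let extra = additional;
  for (const [id, o] of Object.entries(overrides)) {
    if (!known.has(id) && o.action !== "remove") extra += (extra ? "\n" : "") + o.content;
  }
  if (extra) parts.push(extra);
  return parts.join("\n");
}
```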

For full control (removes all guardrails), use mode: "replace":

const session = await client.createSession({

model: "gpt-5",

onPermissionRequest: approveAll,

systemMessage: {

mode: "replace",

content: "You are a helpful assistant.",

},

});

By default, sessions are infinite sessions, which automatically manage context window limits through background compaction and persist state to a workspace directory.

// Default: infinite sessions enabled with default thresholds

const session = await client.createSession({ model: "gpt-5", onPermissionRequest: approveAll });

// Access the workspace path for checkpoints and files

console.log(session.workspacePath);

// => ~/.copilot/session-state/{sessionId}/

// Custom thresholds

const customSession = await client.createSession({

model: "gpt-5",

onPermissionRequest: approveAll,

infiniteSessions: {

enabled: true,

backgroundCompactionThreshold: 0.8, // Start compacting at 80% context usage

bufferExhaustionThreshold: 0.95, // Block at 95% until compaction completes

},

});

// Disable infinite sessions

const plainSession = await client.createSession({

model: "gpt-5",

onPermissionRequest: approveAll,

infiniteSessions: { enabled: false },

});
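The two thresholds split context usage into three regimes. The following is a minimal sketch of that decision. It assumes usage is a fraction of the context window, as the 0.8 and 0.95 comments suggest, and that both thresholds are inclusive. `compactionState` is a hypothetical name, and the CLI's actual logic may differ. For example, with a hypothetical 128,000-token window and the thresholds above, background compaction starts at 128,000 × 0.8 = 102,400 tokens and blocking at 128,000 × 0.95 = 121,600 tokens.

```typescript
type CompactionState = "normal" | "background-compaction" | "blocked-until-compacted";

// Hypothetical model of the two thresholds; both are fractions of the context window.
function compactionState(
  usedTokens: number,
  contextWindowTokens: number,
  backgroundCompactionThreshold: number,
  bufferExhaustionThreshold: number,
): CompactionState {
  const usage = usedTokens / contextWindowTokens;
  if (usage >= bufferExhaustionThreshold) return "blocked-until-compacted";
  if (usage >= backgroundCompactionThreshold) return "background-compaction";
  return "normal";
}
```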

When enabled, sessions emit compaction events:

- session.compaction_start - Background compaction started
- session.compaction_complete - Compaction finished (includes token counts)

const session1 = await client.createSession({ model: "gpt-5", onPermissionRequest: approveAll });

const session2 = await client.createSession({ model: "claude-sonnet-4.5", onPermissionRequest: approveAll });

// Both sessions are independent

await session1.sendAndWait({ prompt: "Hello from session 1" });

await session2.sendAndWait({ prompt: "Hello from session 2" });

const session = await client.createSession({

sessionId: "my-custom-session-id",

model: "gpt-5",

onPermissionRequest: approveAll,

});

await session.send({

prompt: "Analyze this file",

attachments: [

{

type: "file",

path: "/path/to/file.js",

displayName: "My File",

},

],

});

The SDK supports custom OpenAI-compatible API providers (BYOK - Bring Your Own Key), including local providers like Ollama. When using a custom provider, you must specify the model explicitly.

ProviderConfig:

- type?: "openai" | "azure" | "anthropic" - Provider type (default: "openai")
- baseUrl: string - API endpoint URL (required)
- apiKey?: string - API key (optional for local providers like Ollama)
- bearerToken?: string - Bearer token for authentication (takes precedence over apiKey)
- wireApi?: "completions" | "responses" - API format for OpenAI/Azure (default: "completions")
- azure?.apiVersion?: string - Azure API version (default: "2024-10-21")

Example with Ollama:

const session = await client.createSession({

model: "deepseek-coder-v2:16b", // Required when using custom provider

onPermissionRequest: approveAll,

provider: {

type: "openai",

baseUrl: "http://localhost:11434/v1", // Ollama endpoint

// apiKey not required for Ollama

},

});

await session.sendAndWait({ prompt: "Hello!" });

Example with custom OpenAI-compatible API:

const session = await client.createSession({

model: "gpt-4",

onPermissionRequest: approveAll,

provider: {

type: "openai",

baseUrl: "https://my-api.example.com/v1",

apiKey: process.env.MY_API_KEY,

},

});

Example with Azure OpenAI:

const session = await client.createSession({

model: "gpt-4",

onPermissionRequest: approveAll,

provider: {

type: "azure", // Must be "azure" for Azure endpoints, NOT "openai"

baseUrl: "https://my-resource.openai.azure.com", // Just the host, no path

apiKey: process.env.AZURE_OPENAI_KEY,

azure: {

apiVersion: "2024-10-21",

},

},

});

Important notes:

- When using a custom provider, the model parameter is required. The SDK will throw an error if no model is specified.
- For Azure OpenAI endpoints (*.openai.azure.com), you must use type: "azure", not type: "openai".
- The baseUrl should be just the host (e.g., https://my-resource.openai.azure.com). Do not include /openai/v1 in the URL; the SDK handles path construction automatically.
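The three rules above are easy to check before creating a session. The following is a minimal sketch with a local `ProviderConfigLike` type that mirrors the documented fields. The function name, the hostname suffix test for Azure, and returning a list of problems rather than throwing are illustrative choices, not SDK behavior.

```typescript
// Local mirror of the documented ProviderConfig fields used here (an assumption).
interface ProviderConfigLike {
  type?: "openai" | "azure" | "anthropic";
  baseUrl: string;
  apiKey?: string;
  bearerToken?: string;
}

// Hypothetical pre-flight check; new URL() throws on a malformed baseUrl.
function checkProviderConfig(model: string | undefined, provider: ProviderConfigLike): string[] {
  const problems: string[] = [];
  if (!model) problems.push("model is required when using a custom provider");
  const url = new URL(provider.baseUrl);
  const isAzureHost = url.hostname.endsWith(".openai.azure.com");
  if (isAzureHost && provider.type !== "azure") {
    problems.push('Azure OpenAI endpoints need type: "azure"');
  }
  if (isAzureHost && url.pathname.replace(/\/+$/, "") !== "") {
    problems.push("Azure baseUrl should be just the host, without a path such as /openai/v1");
  }
  return problems;
}
```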

The SDK supports OpenTelemetry for distributed tracing. Provide a telemetry config to enable trace export from the CLI process — this is all most users need:

const client = new CopilotClient({

telemetry: {

otlpEndpoint: "http://localhost:4318",

},

});

With just this configuration, the CLI emits spans for every session, message, and tool call to your collector; no additional dependencies or setup are required.

TelemetryConfig options:

- otlpEndpoint?: string - OTLP HTTP endpoint URL
- filePath?: string - File path for JSON-lines trace output
- exporterType?: string - "otlp-http" or "file"
- sourceName?: string - Instrumentation scope name
- captureContent?: boolean - Whether to capture message content

You don't need onGetTraceContext for normal telemetry collection. The telemetry config above is sufficient to get full traces from the CLI.

onGetTraceContext is only needed if your application creates its own OpenTelemetry spans and you want them to appear in the same distributed trace as the CLI's spans — for example, to nest a "handle tool call" span inside the CLI's "execute tool" span, or to show the SDK call as a child of your application's request-handling span.

If you're already using @opentelemetry/api in your app and want this linkage, provide a callback:

import { propagation, context } from "@opentelemetry/api";

const client = new CopilotClient({

telemetry: { otlpEndpoint: "http://localhost:4318" },

onGetTraceContext: () => {

const carrier: Record<string, string> = {};

propagation.inject(context.active(), carrier);

return carrier;

},

});

Inbound trace context from the CLI is available on the ToolInvocation object passed to tool handlers as traceparent and tracestate fields. See the OpenTelemetry guide for a full wire-up example.
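The traceparent value follows the W3C Trace Context format (`version-traceid-parentid-flags`). You may only need to log or correlate IDs inside a tool handler, without pulling in `@opentelemetry/api`. For that case, here is a minimal parser sketch. `parseTraceparent` is a hypothetical helper, and it only accepts version `00` as defined in the W3C spec.

```typescript
interface TraceParent {
  traceId: string; // 32 lowercase hex chars
  parentId: string; // 16 lowercase hex chars (span ID of the caller)
  sampled: boolean; // bit 0 of trace-flags
}

const TRACEPARENT_V00 = /^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$/;

// Hypothetical helper: returns undefined for missing or malformed values.
function parseTraceparent(header: string | undefined): TraceParent | undefined {
  const match = header?.trim().match(TRACEPARENT_V00);
  if (!match) return undefined;
  const [, traceId, parentId, flags] = match;
  // All-zero trace or parent IDs are invalid per the spec.
  if (/^0+$/.test(traceId) || /^0+$/.test(parentId)) return undefined;
  return { traceId, parentId, sampled: (parseInt(flags, 16) & 0x01) === 1 };
}
```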

An onPermissionRequest handler is required whenever you create or resume a session. The handler is called before the agent executes each tool (file writes, shell commands, custom tools, etc.) and must return a decision.

Use the built-in approveAll helper to allow every tool call without any checks:

import { CopilotClient, approveAll } from "@github/copilot-sdk";

const session = await client.createSession({

model: "gpt-5",

onPermissionRequest: approveAll,

});

Provide your own function to inspect each request and apply custom logic:

import type { PermissionRequest, PermissionRequestResult } from "@github/copilot-sdk";

const session = await client.createSession({

model: "gpt-5",

onPermissionRequest: (request: PermissionRequest, invocation): PermissionRequestResult => {

// request.kind — what type of operation is being requested:

// "shell" — executing a shell command

// "write" — writing or editing a file

// "read" — reading a file

// "mcp" — calling an MCP tool

// "custom-tool" — calling one of your registered tools

// "url" — fetching a URL

// "memory" — storing or retrieving persistent session memory

// "hook" — invoking a server-side hook or integration

// (additional kinds may be added; include a default case in handlers)

// request.toolCallId — the tool call that triggered this request

// request.toolName — name of the tool (for custom-tool / mcp)

// request.fileName — file being written (for write)

// request.fullCommandText — full shell command (for shell)

if (request.kind === "shell") {

// Deny shell commands

return { kind: "denied-interactively-by-user" };

}

return { kind: "approved" };

},

});

| Kind | Meaning |
|---|---|
| "approved" | Allow the tool to run |
| "denied-interactively-by-user" | User explicitly denied the request |
| "denied-no-approval-rule-and-could-not-request-from-user" | No approval rule matched and the user could not be asked |
| "denied-by-rules" | Denied by a policy rule |
| "denied-by-content-exclusion-policy" | Denied due to a content exclusion policy |
| "no-result" | Leave the request unanswered (only valid with protocol v1; rejected by protocol v2 servers) |
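A handler can go beyond a single switch on request.kind and implement a small policy. The following is a minimal sketch with locally declared types that mirror the request fields and result kinds listed above. `PermissionRequestLike` and `makePolicy` are hypothetical names. The policy approves reads, allows a few shell commands by prefix, and confines writes to one directory. It denies everything else by default, which also covers request kinds added later and includes your own custom tools unless you add a case for them. The metacharacter check is deliberately conservative and is not a full shell parser.

```typescript
import * as path from "node:path";

// Local mirror of the documented request fields (not the SDK's PermissionRequest type).
interface PermissionRequestLike {
  kind: string;
  fullCommandText?: string;
  fileName?: string;
}

type PolicyResult = { kind: "approved" } | { kind: "denied-by-rules" };

// Shell control characters that could chain or substitute extra commands.
const SHELL_METACHARACTERS = /[;&|`$<>\n]/;

// Hypothetical policy factory: approve reads, allowlisted shell commands, and
// writes under `writableRoot`; deny everything else (including unknown kinds).
function makePolicy(opts: { allowedCommandPrefixes: string[]; writableRoot: string }) {
  const root = path.resolve(opts.writableRoot);
  return (request: PermissionRequestLike): PolicyResult => {
    switch (request.kind) {
      case "read":
        return { kind: "approved" };
      case "shell": {
        const cmd = (request.fullCommandText ?? "").trim();
        const allowed =
          !SHELL_METACHARACTERS.test(cmd) &&
          opts.allowedCommandPrefixes.some((p) => cmd === p || cmd.startsWith(p + " "));
        return allowed ? { kind: "approved" } : { kind: "denied-by-rules" };
      }
      case "write": {
        if (!request.fileName) return { kind: "denied-by-rules" };
        const target = path.resolve(root, request.fileName);
        const inside = target === root || target.startsWith(root + path.sep);
        return inside ? { kind: "approved" } : { kind: "denied-by-rules" };
      }
      default:
        return { kind: "denied-by-rules" };
    }
  };
}
```

To use it, you would pass something like `onPermissionRequest: (request) => policy(request)`. Whether that type-checks directly against PermissionRequestResult depends on the SDK's exact types.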

Pass onPermissionRequest when resuming a session too — it is required:

const session = await client.resumeSession("session-id", {

onPermissionRequest: approveAll,

});

To let a specific custom tool bypass the permission prompt entirely, set skipPermission: true on the tool definition. See Skipping Permission Prompts under Tools.

Enable the agent to ask the user questions through the ask_user tool by providing an onUserInputRequest handler:

const session = await client.createSession({

model: "gpt-5",

onPermissionRequest: approveAll,

onUserInputRequest: async (request, invocation) => {

// request.question - The question to ask

// request.choices - Optional array of choices for multiple choice

// request.allowFreeform - Whether freeform input is allowed (default: true)

console.log(`Agent asks: ${request.question}`);

if (request.choices) {

console.log(`Choices: ${request.choices.join(", ")}`);

}

// Return the user's response

return {

answer: "User's answer here",

wasFreeform: true, // Whether the answer was freeform (not from choices)

};

},

});
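In a terminal app, one way to answer an ask_user request is to let the user type either a choice number or free text. The following is a minimal sketch of that mapping. It assumes only the `choices`/`allowFreeform` request fields and the `answer`/`wasFreeform` response fields shown above. `resolveUserReply` is a hypothetical helper, and reading the line from stdin is left out.

```typescript
// Local mirror of the documented request fields (an assumption, not the SDK type).
interface UserInputRequestLike {
  question: string;
  choices?: string[];
  allowFreeform?: boolean; // documented default: true
}

// Hypothetical helper: map a typed reply to a choice, free text, or an error to re-prompt with.
function resolveUserReply(
  request: UserInputRequestLike,
  reply: string,
): { answer: string; wasFreeform: boolean } | { error: string } {
  const text = reply.trim();
  const choices = request.choices ?? [];
  // A bare number selects the matching 1-based choice.
  if (/^\d+$/.test(text)) {
    const index = Number(text) - 1;
    if (index >= 0 && index < choices.length) return { answer: choices[index], wasFreeform: false };
  }
  // A case-insensitive exact match of a choice's text also counts as picking it.
  const exact = choices.find((c) => c.toLowerCase() === text.toLowerCase());
  if (exact !== undefined) return { answer: exact, wasFreeform: false };
  if (request.allowFreeform ?? true) return { answer: text, wasFreeform: true };
  return { error: `Please pick one of: ${choices.join(", ")}` };
}
```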

Register an onElicitationRequest handler to let your client act as an elicitation provider — presenting form-based UI dialogs on behalf of the agent. When provided, the server notifies your client whenever a tool or MCP server needs structured user input.

const session = await client.createSession({

model: "gpt-5",

onPermissionRequest: approveAll,

onElicitationRequest: async (context) => {

// context.sessionId - Session that triggered the request

// context.message - Description of what information is needed

// context.requestedSchema - JSON Schema describing the form fields

// context.mode - "form" (structured input) or "url" (browser redirect)

// context.elicitationSource - Origin of the request (e.g. MCP server name)

console.log(`Elicitation from ${context.elicitationSource}: ${context.message}`);

// Present UI to the user and collect their response...

return {

action: "accept", // "accept", "decline", or "cancel"

content: { region: "us-east", dryRun: true },

};

},

});

// The session now reports elicitation capability

console.log(session.capabilities.ui?.elicitation); // true

When onElicitationRequest is provided, the SDK sends requestElicitation: true during session create/resume, which enables session.capabilities.ui.elicitation on the session.

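In multi-client scenarios:

- When a client that provides an elicitation handler joins, the other connected clients receive a capabilities.changed event to notify them that elicitation is now possible. The SDK automatically updates session.capabilities when these events arrive.
- When the last client with an elicitation handler disconnects, the remaining clients receive a capabilities.changed event indicating elicitation is no longer available.

Hook into session lifecycle events by providing handlers in the hooks configuration: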

const session = await client.createSession({

model: "gpt-5",

onPermissionRequest: approveAll,

hooks: {

// Called before each tool execution

onPreToolUse: async (input, invocation) => {

console.log(`About to run tool: ${input.toolName}`);

// Return permission decision and optionally modify args

return {

permissionDecision: "allow", // "allow", "deny", or "ask"

modifiedArgs: input.toolArgs, // Optionally modify tool arguments

additionalContext: "Extra context for the model",

};

},

// Called after each tool execution

onPostToolUse: async (input, invocation) => {

console.log(`Tool ${input.toolName} completed`);

// Optionally modify the result or add context

return {

additionalContext: "Post-execution notes",

};

},

// Called when user submits a prompt

onUserPromptSubmitted: async (input, invocation) => {

console.log(`User prompt: ${input.prompt}`);

return {

modifiedPrompt: input.prompt, // Optionally modify the prompt

};

},

// Called when session starts

onSessionStart: async (input, invocation) => {

console.log(`Session started from: ${input.source}`); // "startup", "resume", "new"

return {

additionalContext: "Session initialization context",

};

},

// Called when session ends

onSessionEnd: async (input, invocation) => {

console.log(`Session ended: ${input.reason}`);

},

// Called when an error occurs

onErrorOccurred: async (input, invocation) => {

console.error(`Error in ${input.errorContext}: ${input.error}`);

return {

errorHandling: "retry", // "retry", "skip", or "abort"

};

},

},

});
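The permissionDecision returned from onPreToolUse works well with a simple name-based rule table. The following is a minimal sketch that assumes only the `toolName` input field and the `"allow" | "deny" | "ask"` values shown above. `decideToolUse`, `matchesRule`, and the trailing-`*` prefix convention are hypothetical. Tools not in either list fall through to `"ask"` as a cautious default.

```typescript
type PreToolDecision = "allow" | "deny" | "ask";

interface ToolRules {
  allow: string[]; // exact tool names, or prefixes ending in "*"
  deny: string[];
}

function matchesRule(rule: string, toolName: string): boolean {
  return rule.endsWith("*") ? toolName.startsWith(rule.slice(0, -1)) : rule === toolName;
}

// Hypothetical rule table: deny wins over allow; anything unlisted falls back to "ask".
function decideToolUse(toolName: string, rules: ToolRules): PreToolDecision {
  if (rules.deny.some((r) => matchesRule(r, toolName))) return "deny";
  if (rules.allow.some((r) => matchesRule(r, toolName))) return "allow";
  return "ask";
}
```

Inside the hooks config, this could be used as `onPreToolUse: async (input) => ({ permissionDecision: decideToolUse(input.toolName, rules) })`.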

Available hooks:

- onPreToolUse - Intercept tool calls before execution. Can allow/deny or modify arguments.
- onPostToolUse - Process tool results after execution. Can modify results or add context.
- onUserPromptSubmitted - Intercept user prompts. Can modify the prompt before processing.
- onSessionStart - Run logic when a session starts or resumes.
- onSessionEnd - Cleanup or logging when a session ends.
- onErrorOccurred - Handle errors with retry/skip/abort strategies.

try {

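const session = await client.createSession({ onPermissionRequest: approveAll });

await session.send({ prompt: "Hello" });

} catch (error) {

// catch variables are typed unknown under strict TypeScript, so narrow before reading .message
console.error("Error:", error instanceof Error ? error.message : error);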

}

License: MIT

FAQs


The npm package @github/copilot-sdk receives a total of 134,899 weekly downloads. As such, its popularity is classified as popular.

We found that @github/copilot-sdk demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 22 open source maintainers collaborating on the project.


Company News

Socket was named to the Rising in Cyber 2026 list, recognizing 30 private cybersecurity startups selected by CISOs and security executives.

Research

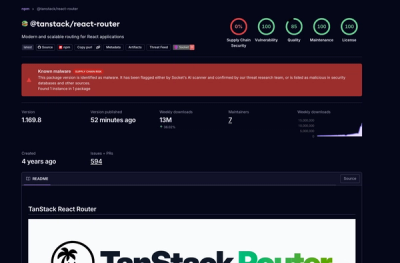

Socket detected 84 compromised TanStack npm package artifacts modified with suspected CI credential-stealing malware.

Security News

A dispute over fsnotify maintainer access set off supply chain alarms around one of Go’s most widely used filesystem libraries.