Security News

/Research

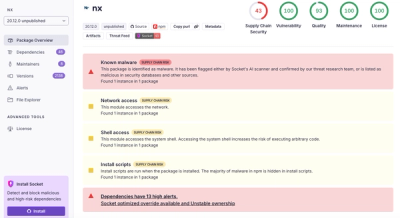

Wallet-Draining npm Package Impersonates Nodemailer to Hijack Crypto Transactions

Malicious npm package impersonates Nodemailer and drains wallets by hijacking crypto transactions across multiple blockchains.

@google-cloud/dataproc

Google Cloud Dataproc API client for Node.js

A comprehensive list of changes in each version may be found in the CHANGELOG.

Read more about the client libraries for Cloud APIs, including the older Google APIs Client Libraries, in Client Libraries Explained.

npm install @google-cloud/dataproc

    /**
     * TODO(developer): Uncomment these variables before running the sample.
     */
    /**
     *  Required. The ID of the Google Cloud Platform project that the cluster
     *  belongs to.
     */
    // const projectId = 'abc123'
    /**
     *  Required. The Dataproc region in which to handle the request.
     */
    // const region = 'us-central1'
    /**
     *  Optional. A filter constraining the clusters to list. Filters are
     *  case-sensitive and have the following syntax:
     *  field = value AND field = value ...
     *  where **field** is one of `status.state`, `clusterName`, or `labels.[KEY]`,
     *  and `[KEY]` is a label key. **value** can be `*` to match all values.
     *  `status.state` can be one of the following: `ACTIVE`, `INACTIVE`,
     *  `CREATING`, `RUNNING`, `ERROR`, `DELETING`, or `UPDATING`. `ACTIVE`
     *  contains the `CREATING`, `UPDATING`, and `RUNNING` states. `INACTIVE`
     *  contains the `DELETING` and `ERROR` states.
     *  `clusterName` is the name of the cluster provided at creation time.
     *  Only the logical `AND` operator is supported; space-separated items are
     *  treated as having an implicit `AND` operator.
     *  Example filter:
     *  status.state = ACTIVE AND clusterName = mycluster
     *  AND labels.env = staging AND labels.starred = *
     */
    // const filter = 'abc123'
    /**
     *  Optional. The standard List page size.
     */
    // const pageSize = 1234
    /**
     *  Optional. The standard List page token.
     */
    // const pageToken = 'abc123'

    // Imports the Dataproc library
    const {ClusterControllerClient} = require('@google-cloud/dataproc').v1;

    // Instantiates a client
    const dataprocClient = new ClusterControllerClient();

    async function callListClusters() {
      // Construct request
      const request = {
        projectId,
        region,
      };

      // Run request
      const iterable = dataprocClient.listClustersAsync(request);
      for await (const response of iterable) {
        console.log(response);
      }
    }

    callListClusters();
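The filter syntax described in the sample comments can be assembled programmatically. The following is a minimal sketch, not part of the library: `buildClusterFilter` and `collectClusterNames` are hypothetical helper names introduced here for illustration. The first joins clauses with `AND` as the comment describes; the second drains the async iterable returned by `listClustersAsync` and assumes each yielded cluster exposes a `clusterName` property.

```javascript
// Hypothetical helper (not part of @google-cloud/dataproc): builds a filter
// string in the documented "field = value AND field = value ..." syntax.
function buildClusterFilter({state, clusterName, labels = {}} = {}) {
  const clauses = [];
  if (state) clauses.push(`status.state = ${state}`);
  if (clusterName) clauses.push(`clusterName = ${clusterName}`);
  for (const [key, value] of Object.entries(labels)) {
    clauses.push(`labels.${key} = ${value}`);
  }
  // Only the logical AND operator is supported by the filter syntax.
  return clauses.join(' AND ');
}

// Hypothetical helper: iterates over every cluster yielded by
// listClustersAsync and returns the cluster names as an array.
async function collectClusterNames(client, request) {
  const names = [];
  for await (const cluster of client.listClustersAsync(request)) {
    names.push(cluster.clusterName);
  }
  return names;
}

const exampleFilter = buildClusterFilter({
  state: 'ACTIVE',
  labels: {env: 'staging', starred: '*'},
});
console.log(exampleFilter);
// status.state = ACTIVE AND labels.env = staging AND labels.starred = *
```

With a real client, the result could be passed along as `collectClusterNames(dataprocClient, {projectId, region, filter: exampleFilter})`. Taking the client as a parameter keeps the helper easy to exercise with a stub in tests.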

Samples are in the samples/ directory. Each sample's README.md has instructions for running its sample.

| Sample | Source Code |
|---|---|
| Autoscaling_policy_service.create_autoscaling_policy | source code |
| Autoscaling_policy_service.delete_autoscaling_policy | source code |
| Autoscaling_policy_service.get_autoscaling_policy | source code |
| Autoscaling_policy_service.list_autoscaling_policies | source code |
| Autoscaling_policy_service.update_autoscaling_policy | source code |
| Batch_controller.create_batch | source code |
| Batch_controller.delete_batch | source code |
| Batch_controller.get_batch | source code |
| Batch_controller.list_batches | source code |
| Cluster_controller.create_cluster | source code |
| Cluster_controller.delete_cluster | source code |
| Cluster_controller.diagnose_cluster | source code |
| Cluster_controller.get_cluster | source code |
| Cluster_controller.list_clusters | source code |
| Cluster_controller.start_cluster | source code |
| Cluster_controller.stop_cluster | source code |
| Cluster_controller.update_cluster | source code |
| Job_controller.cancel_job | source code |
| Job_controller.delete_job | source code |
| Job_controller.get_job | source code |
| Job_controller.list_jobs | source code |
| Job_controller.submit_job | source code |
| Job_controller.submit_job_as_operation | source code |
| Job_controller.update_job | source code |
| Node_group_controller.create_node_group | source code |
| Node_group_controller.get_node_group | source code |
| Node_group_controller.resize_node_group | source code |
| Session_controller.create_session | source code |
| Session_controller.delete_session | source code |
| Session_controller.get_session | source code |
| Session_controller.list_sessions | source code |
| Session_controller.terminate_session | source code |
| Session_template_controller.create_session_template | source code |
| Session_template_controller.delete_session_template | source code |
| Session_template_controller.get_session_template | source code |
| Session_template_controller.list_session_templates | source code |
| Session_template_controller.update_session_template | source code |
| Workflow_template_service.create_workflow_template | source code |
| Workflow_template_service.delete_workflow_template | source code |
| Workflow_template_service.get_workflow_template | source code |
| Workflow_template_service.instantiate_inline_workflow_template | source code |
| Workflow_template_service.instantiate_workflow_template | source code |
| Workflow_template_service.list_workflow_templates | source code |
| Workflow_template_service.update_workflow_template | source code |
| Quickstart | source code |

The Google Cloud Dataproc Node.js Client API Reference documentation also contains samples.

Our client libraries follow the Node.js release schedule. Libraries are compatible with all current active and maintenance versions of Node.js. If you are using an end-of-life version of Node.js, we recommend that you update as soon as possible to an actively supported LTS version.

Google's client libraries support legacy versions of Node.js runtimes on a best-efforts basis with the following warnings:

Client libraries targeting some end-of-life versions of Node.js are available, and can be installed through npm dist-tags. The dist-tags follow the naming convention `legacy-(version)`. For example, `npm install @google-cloud/dataproc@legacy-8` installs client libraries for versions compatible with Node.js 8.
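The dist-tag naming convention can be expressed as a small helper. This is only an illustrative sketch: `legacyDistTag` and `legacyInstallCommand` are hypothetical names, and the code assumes nothing beyond the `legacy-(version)` convention stated above. It does not check that a given tag has actually been published; running `npm view @google-cloud/dataproc dist-tags` lists the tags that exist.

```javascript
// Hypothetical helper: maps a Node.js major version to the dist-tag name
// described by the "legacy-(version)" convention.
function legacyDistTag(nodeMajor) {
  if (!Number.isInteger(nodeMajor) || nodeMajor <= 0) {
    throw new TypeError('nodeMajor must be a positive integer');
  }
  return `legacy-${nodeMajor}`;
}

// Hypothetical helper: builds the npm install command for a legacy dist-tag.
function legacyInstallCommand(packageName, nodeMajor) {
  return `npm install ${packageName}@${legacyDistTag(nodeMajor)}`;
}

console.log(legacyInstallCommand('@google-cloud/dataproc', 8));
// npm install @google-cloud/dataproc@legacy-8

// The major version of the running interpreter can be read from
// process.versions.node, for example "18.19.0" yields 18.
const runningMajor = Number(process.versions.node.split('.')[0]);
console.log(`Running on Node.js ${runningMajor}`);
```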

This library follows Semantic Versioning.

This library is considered to be stable. The code surface will not change in backwards-incompatible ways unless absolutely necessary (e.g. because of critical security issues) or with an extensive deprecation period. Issues and requests against stable libraries are addressed with the highest priority.

More Information: Google Cloud Platform Launch Stages

Contributions welcome! See the Contributing Guide.

Please note that this README.md, the samples/README.md, and a variety of configuration files in this repository (including .nycrc and tsconfig.json) are generated from a central template. To edit one of these files, make an edit to its template in the templates directory.

Apache Version 2.0

See LICENSE

FAQs

The npm package @google-cloud/dataproc receives a total of 6,868 weekly downloads. On that basis, its popularity is classified as popular.

We found that @google-cloud/dataproc demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 1 open source maintainer collaborating on the project.
