@promptbook/azure-openai


Promptbook

Build responsible, controlled and transparent applications on top of LLM models!

⚠ Warning: This is a pre-release version of the library. It is not yet ready for production use. Please look at the latest stable release.

@promptbook/azure-openai is one part of the promptbook ecosystem. To install this package, run:

# Install entire promptbook ecosystem
npm i ptbk

# Install just this package to save space
npm install @promptbook/azure-openai

@promptbook/azure-openai integrates the Azure OpenAI API with Promptbook. It lets you execute Promptbooks with Azure OpenAI GPT models.

Note: This is similar to @promptbook/openai but more useful for Enterprise customers who use Azure OpenAI to ensure strict data privacy and compliance.

import { createPipelineExecutor, assertsExecutionSuccessful } from '@promptbook/core';
import {
    createCollectionFromDirectory,
    // Note: the `$provide…ForNode` helpers used below are assumed to be exported from `@promptbook/node`
    $provideExecutablesForNode,
    $provideFilesystemForNode,
    $provideScrapersForNode,
} from '@promptbook/node';
import { JavascriptExecutionTools } from '@promptbook/execute-javascript';
import { AzureOpenAiExecutionTools } from '@promptbook/azure-openai';

// ▶ Prepare tools
const fs = $provideFilesystemForNode();
const llm = new AzureOpenAiExecutionTools(
    // <- TODO: [🧱] Implement in a functional (not new Class) way
    {
        isVerbose: true,
        resourceName: process.env.AZUREOPENAI_RESOURCE_NAME,
        deploymentName: process.env.AZUREOPENAI_DEPLOYMENT_NAME,
        apiKey: process.env.AZUREOPENAI_API_KEY,
    },
);
const executables = await $provideExecutablesForNode();
const tools = {
    llm,
    fs,
    scrapers: await $provideScrapersForNode({ fs, llm, executables }),
    script: [new JavascriptExecutionTools()],
};

// ▶ Create whole pipeline collection
const collection = await createCollectionFromDirectory('./promptbook-collection', tools);

// ▶ Get single Pipeline
const pipeline = await collection.getPipelineByUrl(`https://promptbook.studio/my-collection/write-article.ptbk.md`);

// ▶ Create executor - the function that will execute the Pipeline
const pipelineExecutor = createPipelineExecutor({ pipeline, tools });

// ▶ Prepare input parameters
const inputParameters = { word: 'crocodile' };

// 🚀▶ Execute the Pipeline
const result = await pipelineExecutor(inputParameters);

// ▶ Fail if the execution was not successful
assertsExecutionSuccessful(result);

// ▶ Handle the result
const { isSuccessful, errors, outputParameters, executionReport } = result;
console.info(outputParameters);
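The example above reads three environment variables and passes them straight into the constructor, so a missing variable only surfaces later as `undefined`. A minimal sketch of failing fast instead is shown below. The helpers `requireEnv` and `azureOptionsFromEnv` are hypothetical and not part of Promptbook; only the variable names and option names come from the example.

```typescript
// Hypothetical helpers (not part of Promptbook): validate required settings
// in an environment-like object and fail fast when any of them are missing.
type Env = Record<string, string | undefined>;

function requireEnv<K extends string>(env: Env, names: readonly K[]): Record<K, string> {
    // Treat both undefined and empty strings as missing
    const missing = names.filter((name) => !env[name]);
    if (missing.length > 0) {
        throw new Error(`Missing environment variables: ${missing.join(', ')}`);
    }
    const values = {} as Record<K, string>;
    for (const name of names) {
        values[name] = env[name] as string;
    }
    return values;
}

// Variable names taken from the usage example above
const AZURE_ENV_NAMES = [
    'AZUREOPENAI_RESOURCE_NAME',
    'AZUREOPENAI_DEPLOYMENT_NAME',
    'AZUREOPENAI_API_KEY',
] as const;

// Maps the validated variables onto the option names used in the example above
function azureOptionsFromEnv(env: Env) {
    const values = requireEnv(env, AZURE_ENV_NAMES);
    return {
        resourceName: values.AZUREOPENAI_RESOURCE_NAME,
        deploymentName: values.AZUREOPENAI_DEPLOYMENT_NAME,
        apiKey: values.AZUREOPENAI_API_KEY,
    };
}
```

Under these assumptions, the constructor call could become `new AzureOpenAiExecutionTools(azureOptionsFromEnv(process.env))`, and a misconfigured deployment would be reported by name before any request is made.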

You can use multiple LLM providers in one Promptbook execution. The best model will be chosen automatically according to the prompt and the model's capabilities.

import { createPipelineExecutor, assertsExecutionSuccessful } from '@promptbook/core';
import {
    createCollectionFromDirectory,
    // Note: the `$provide…ForNode` helpers used below are assumed to be exported from `@promptbook/node`
    $provideExecutablesForNode,
    $provideFilesystemForNode,
    $provideScrapersForNode,
} from '@promptbook/node';
import { JavascriptExecutionTools } from '@promptbook/execute-javascript';
import { AzureOpenAiExecutionTools } from '@promptbook/azure-openai';
import { OpenAiExecutionTools } from '@promptbook/openai';
import { AnthropicClaudeExecutionTools } from '@promptbook/anthropic-claude';

// ▶ Prepare multiple tools
const fs = $provideFilesystemForNode();
const llm = [
    // Note: You can use multiple LLM providers in one Promptbook execution.
    //       The best model will be chosen automatically according to the prompt and the model's capabilities.
    new AzureOpenAiExecutionTools(
        // <- TODO: [🧱] Implement in a functional (not new Class) way
        {
            resourceName: process.env.AZUREOPENAI_RESOURCE_NAME,
            deploymentName: process.env.AZUREOPENAI_DEPLOYMENT_NAME,
            apiKey: process.env.AZUREOPENAI_API_KEY,
        },
    ),
    new OpenAiExecutionTools(
        // <- TODO: [🧱] Implement in a functional (not new Class) way
        {
            apiKey: process.env.OPENAI_API_KEY,
        },
    ),
    new AnthropicClaudeExecutionTools(
        // <- TODO: [🧱] Implement in a functional (not new Class) way
        {
            apiKey: process.env.ANTHROPIC_CLAUDE_API_KEY,
        },
    ),
];
const executables = await $provideExecutablesForNode();
const tools = {
    llm,
    fs,
    scrapers: await $provideScrapersForNode({ fs, llm, executables }),
    script: [new JavascriptExecutionTools()],
};

// ▶ Create whole pipeline collection
const collection = await createCollectionFromDirectory('./promptbook-collection', tools);

// ▶ Get single Pipeline
const pipeline = await collection.getPipelineByUrl(`https://promptbook.studio/my-collection/write-article.ptbk.md`);

// ▶ Create executor - the function that will execute the Pipeline
const pipelineExecutor = createPipelineExecutor({ pipeline, tools });

// ▶ Prepare input parameters
const inputParameters = { word: 'snake' };

// 🚀▶ Execute the Pipeline
const result = await pipelineExecutor(inputParameters);

// ▶ Fail if the execution was not successful
assertsExecutionSuccessful(result);

// ▶ Handle the result
const { isSuccessful, errors, outputParameters, executionReport } = result;
console.info(outputParameters);
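To make the idea of choosing a provider by capability more concrete, here is a small illustrative sketch. It is not Promptbook's selection logic: the `ProviderSketch` type, the `selectProvider` function and the capability lists are all hypothetical placeholders, and the rule shown (first provider in array order that supports the requested model variant) is only one simple way such a choice could be made.

```typescript
// Illustrative only: these types, values and this selection rule are assumptions,
// not Promptbook's actual implementation.
type ModelVariant = 'CHAT' | 'COMPLETION' | 'EMBEDDING';

interface ProviderSketch {
    title: string;
    supports: readonly ModelVariant[];
}

// Returns the first provider (in array order) that supports the requested variant
function selectProvider(providers: readonly ProviderSketch[], variant: ModelVariant): ProviderSketch {
    const match = providers.find((provider) => provider.supports.includes(variant));
    if (match === undefined) {
        throw new Error(`No configured provider supports ${variant}`);
    }
    return match;
}

// Placeholder capability lists, made up for the sake of the example
const providers: ProviderSketch[] = [
    { title: 'Azure OpenAI', supports: ['CHAT', 'COMPLETION'] },
    { title: 'OpenAI', supports: ['CHAT', 'COMPLETION', 'EMBEDDING'] },
    { title: 'Anthropic Claude', supports: ['CHAT'] },
];
```

With these placeholder values, a chat prompt would go to the first entry, while an embedding request would skip it and fall through to the second. Array order therefore acts as a priority list in this sketch.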

See the other models available in the Promptbook package:

The rest of the documentation is common to the entire promptbook ecosystem:

If you have a simple, single prompt for ChatGPT, GPT-4, Anthropic Claude, Google Gemini, Llama 3, or whatever, it doesn't matter how you integrate it. Whether it's calling a REST API directly, using the SDK, hardcoding the prompt into the source code, or importing a text file, the process remains the same.

But often you will struggle with the limitations of LLMs, such as hallucinations, off-topic responses, poor quality output, language and prompt drift, word repetition repetition repetition repetition or misuse, lack of context, or just plain w𝒆𝐢rd resp0nses. When this happens, you generally have three options:

In all of these situations, but especially in 3., the ✨ Promptbook can make your life waaaaaaaaaay easier.

… temperature, top-k, top-p, or kernel sampling. Just write your intent and persona who should be responsible for the task and let the library do the rest.

… :) can't avoid the problems. In this case, the library has built-in anomaly detection and logging to help you find and fix the problems.

| Project | Description | Link |
| --- | --- | --- |
| Promptbook whitepaper | Basic motivations and problems which we are trying to solve | https://github.com/webgptorg/book |
| Promptbook (system) | Promptbook ... | |
| Book language | Book is a markdown-like language to define projects, pipelines, knowledge,... in the Promptbook system. It is designed to be understandable by non-programmers and non-technical people | |
| Promptbook typescript project | Implementation of Promptbook in TypeScript published into multiple packages to NPM | https://github.com/webgptorg/promptbook |
| Promptbook studio | Promptbook studio | https://github.com/hejny/promptbook-studio |
| Promptbook miniapps | Promptbook miniapps | |

Promptbook pipelines are written in a markdown-like language called Book. It is designed to be understandable by non-programmers and non-technical people.

# 🌟 My first Book

- INPUT PARAMETER {subject}
- OUTPUT PARAMETER {article}

## Sample subject

> Promptbook

-> {subject}

## Write an article

- PERSONA Jane, marketing specialist with prior experience in writing articles about technology and artificial intelligence
- KNOWLEDGE https://ptbk.io
- KNOWLEDGE ./promptbook.pdf
- EXPECT MIN 1 Sentence
- EXPECT MAX 1 Paragraph

> Write an article about the future of artificial intelligence in the next 10 years and how metalanguages will change the way AI is used in the world.
> Look specifically at the impact of {subject} on the AI industry.

-> {article}
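Two mechanics in the sample Book can be illustrated with a short sketch: filling a `{subject}` placeholder in the prompt, and checking a result against `EXPECT MIN 1 Sentence` and `EXPECT MAX 1 Paragraph`. The helpers below are hypothetical and deliberately naive; they are not how Promptbook implements either feature, and the sentence counter in particular would miscount text containing URLs or abbreviations.

```typescript
// Hypothetical helpers, not Promptbook's implementation.

// Replaces every {name} placeholder with the matching parameter value
function fillTemplate(template: string, parameters: Record<string, string>): string {
    return template.replace(/\{(\w+)\}/g, (placeholder: string, name: string) => {
        const value = parameters[name];
        if (value === undefined) {
            throw new Error(`Parameter ${placeholder} is not defined`);
        }
        return value;
    });
}

// Naive counter: a sentence is any non-empty run of text ended by ., ! or ?
function countSentences(text: string): number {
    return text.split(/[.!?]+/).filter((part) => part.trim() !== '').length;
}

// Naive counter: paragraphs are separated by at least one blank line
function countParagraphs(text: string): number {
    return text.split(/\n\s*\n/).filter((part) => part.trim() !== '').length;
}

// Mirrors `EXPECT MIN 1 Sentence` and `EXPECT MAX 1 Paragraph` from the sample
function meetsExpectations(text: string): boolean {
    return countSentences(text) >= 1 && countParagraphs(text) <= 1;
}
```

For instance, `fillTemplate('Look specifically at the impact of {subject} on the AI industry.', { subject: 'Promptbook' })` would produce the second line of the prompt with the parameter filled in, and `meetsExpectations` would reject both an empty answer and an answer spanning two paragraphs.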

This library is divided into several packages, all published from a single monorepo. You can install all of them at once:

npm i ptbk

Or you can install them separately:

⭐ Marked packages are worth trying first

The following glossary is used to clarify certain concepts:

If you have a question, start a discussion, open an issue, or write me an email.

See CHANGELOG.md

Promptbook by Pavol Hejný is licensed under CC BY 4.0

See TODO.md

I am open to pull requests, feedback, and suggestions. Or if you like this utility, you can ☕ buy me a coffee or donate via cryptocurrencies.

You can also ⭐ star the promptbook package, follow me on GitHub or various other social networks.

FAQs

It's time for a paradigm shift. The future of software in plain English, French or Latin

The npm package @promptbook/azure-openai receives a total of 283 weekly downloads. As such, @promptbook/azure-openai popularity was classified as not popular.

We found that @promptbook/azure-openai demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 0 open source maintainers collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Research

Security News

The Socket Research Team has discovered six new malicious npm packages linked to North Korea’s Lazarus Group, designed to steal credentials and deploy backdoors.

Security News

Socket CEO Feross Aboukhadijeh discusses the open web, open source security, and how Socket tackles software supply chain attacks on The Pair Program podcast.

Security News

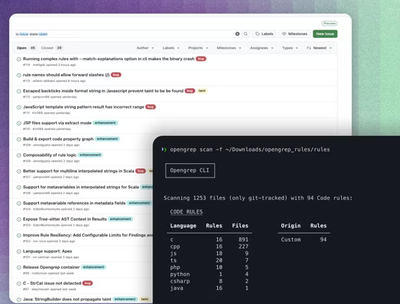

Opengrep continues building momentum with the alpha release of its Playground tool, demonstrating the project's rapid evolution just two months after its initial launch.