An interactive CLI tool leveraging multiple AI models for quick handling of simple requests

aipick is an interactive CLI tool leveraging multiple AI models for quick and efficient handling of simple requests such as variable name recommendations.

The minimum supported version of Node.js is v18. Check your Node.js version with node --version.

npm install -g aipick

aipick config set OPENAI.key=<your key>

aipick config set ANTHROPIC.key=<your key>

# ... (similar commands for other providers)

aipick -m "Why is the sky blue?"

👉 Tip: Use the aip alias if aipick is too long for you.

You can also run your own model for free with Ollama, and you can use Ollama and remote providers at the same time.

Install Ollama from https://ollama.com

Start it with your model

ollama run llama3.1 # the model you want to use, e.g. codestral, gemma2

aipick config set OLLAMA.model=<your model>

If you want to use Ollama, you must set OLLAMA.model.

aipick -m "Why is the sky blue?"

👉 Tip: Ollama can run LLMs in parallel from v0.1.33. Please see this section.

- --message or -m: Message to ask AI (required)
- --systemPrompt or -s: System prompt for fine-tuning

Example:

aipick --message "Explain quantum computing" --systemPrompt "You are a physics expert"

- aipick config get <key>
- aipick config set <key>=<value>

Example:

aipick config get OPENAI.key

aipick config set OPENAI.generate=3 GEMINI.temperature=0.5

Command-line arguments use the format --[ModelName].[SettingKey]=value:

aipick -m "Why is the sky blue?" --OPENAI.generate=3

For persistent settings, edit the ~/.aipick file or use the set command.

Example ~/.aipick:

# General Settings

logging=true

temperature=1.0

[OPENAI]

# Model-Specific Settings

key="<your-api-key>"

temperature=0.8

generate=2

[OLLAMA]

temperature=0.7

model[]=llama3.1

model[]=codestral
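To make the layout of the example file concrete, here is a minimal Python sketch of how such a file could be read. It is not aipick's actual parser: the function name `parse_aipick_config` is hypothetical, values are kept as plain strings, and treating repeated `model[]=` lines as a list is an assumption based on the example above.

```python
from typing import Any, Dict


def parse_aipick_config(text: str) -> Dict[str, Any]:
    """Parse INI-style text; top-level keys are general, [SECTION] keys are per model."""
    config: Dict[str, Any] = {}
    section = config  # keys before the first [SECTION] land at the top level
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        if line.startswith("[") and line.endswith("]"):
            section = config.setdefault(line[1:-1], {})
            continue
        key, _, value = line.partition("=")
        key, value = key.strip(), value.strip().strip('"')
        if key.endswith("[]"):
            # Assumption: repeated key[] lines build up a list of values.
            section.setdefault(key[:-2], []).append(value)
        else:
            section[key] = value
    return config


sample = """
# General Settings
logging=true
temperature=1.0
[OPENAI]
key="<your-api-key>"
temperature=0.8
generate=2
[OLLAMA]
temperature=0.7
model[]=llama3.1
model[]=codestral
"""
parsed = parse_aipick_config(sample)
print(parsed["OLLAMA"]["model"])  # ['llama3.1', 'codestral']
```

Keys that appear before the first section header end up at the top level of the result, which matches the General Settings part of the file.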

The priority of settings is: Command-line Arguments > Model-Specific Settings > General Settings > Default Values.
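The priority order can be illustrated with a small lookup that checks each source in turn. This is only a sketch in Python under stated assumptions: `resolve_setting` and the dictionaries passed to it are hypothetical names, and the default values are copied from the General Settings table in this README.

```python
from typing import Any, Mapping, Optional

# Defaults copied from the General Settings table in this README.
DEFAULTS = {"timeout": 10000, "temperature": 0.7, "maxTokens": 1024, "logging": True}


def resolve_setting(
    key: str,
    model: str,
    cli_args: Mapping[str, Any],
    model_settings: Mapping[str, Mapping[str, Any]],
    general_settings: Mapping[str, Any],
) -> Optional[Any]:
    """Return the first value found, checking the highest-priority source first."""
    cli_key = f"{model}.{key}"  # mirrors the --OPENAI.generate=3 argument style
    if cli_key in cli_args:
        return cli_args[cli_key]
    if key in model_settings.get(model, {}):
        return model_settings[model][key]
    if key in general_settings:
        return general_settings[key]
    return DEFAULTS.get(key)


# Values mirroring the example ~/.aipick file.
general = {"logging": True, "temperature": 1.0}
per_model = {"OPENAI": {"temperature": 0.8, "generate": 2}, "OLLAMA": {"temperature": 0.7}}

print(resolve_setting("temperature", "OPENAI", {}, per_model, general))  # 0.8
print(resolve_setting("temperature", "GEMINI", {}, per_model, general))  # 1.0
print(resolve_setting("maxTokens", "OPENAI", {}, per_model, general))  # 1024
print(resolve_setting("temperature", "OPENAI", {"OPENAI.temperature": 0.2}, per_model, general))  # 0.2
```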

The following settings can be applied to most models, but support may vary. Please check the documentation for each specific model to confirm which settings are supported.

| Setting | Description | Default |
|---|---|---|
| systemPrompt | System Prompt text | - |
| systemPromptPath | Path to system prompt file | - |
| timeout | Request timeout (milliseconds) | 10000 |
| temperature | Model's creativity (0.0 - 2.0) | 0.7 |
| maxTokens | Maximum number of tokens to generate | 1024 |
| logging | Enable logging | true |

👉 Tip: To set the General Settings for each model, use the following command.

aipick config set OPENAI.maxTokens="2048"

aipick config set ANTHROPIC.logging=false

aipick config set systemPrompt="Your communication style is friendly, engaging, and informative."

systemPrompt takes precedence over systemPromptPath; the two are never applied at the same time.

aipick config set systemPromptPath="/path/to/user/prompt.txt"
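The precedence between the two prompt options can be sketched as follows. This is an illustration in Python, not aipick's code: `resolve_system_prompt` is a hypothetical helper, and treating an empty systemPrompt as "not set" is an assumption.

```python
from pathlib import Path
from typing import Optional


def resolve_system_prompt(
    system_prompt: Optional[str], system_prompt_path: Optional[str]
) -> Optional[str]:
    """Pick the prompt text; inline text wins and the file is then ignored."""
    if system_prompt:
        return system_prompt
    if system_prompt_path:
        # Only read the file when no inline prompt was given.
        return Path(system_prompt_path).expanduser().read_text(encoding="utf-8").strip()
    return None
```

With both options set, the file at systemPromptPath is never opened, so a missing file only matters when systemPrompt is empty.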

The timeout for network requests in milliseconds.

Default: 10_000 (10 seconds)

aipick config set timeout=20000 # 20s

The temperature (0.0-2.0) controls the randomness of the output.

Default: 0.7

aipick config set temperature=0

The maximum number of tokens that the AI models can generate.

Default: 1024

aipick config set maxTokens=3000

Default: true

Controls whether a log file capturing the responses is generated.

The log files are stored in the ~/.aipick_log directory (in the user's home directory).

aipick log removeAll

Some models mentioned below are subject to change.

| Setting | Description | Default |
|---|---|---|
| key | API key | - |
| model | Model to use | gpt-3.5-turbo |
| url | API endpoint URL | https://api.openai.com |
| path | API path | /v1/chat/completions |
| proxy | Proxy settings | - |
| generate | Number of responses to generate (1-5) | 1 |

The OpenAI API key. You can retrieve it from the OpenAI API Keys page.

aipick config set OPENAI.key="your api key"

Default: gpt-3.5-turbo

The Chat Completions (/v1/chat/completions) model to use. Consult the list of models available in the OpenAI Documentation.

Tip: If you have access, try upgrading to gpt-4 for next-level code analysis. It can handle double the input size, but comes at a higher cost. Check out OpenAI's website to learn more.

aipick config set OPENAI.model=gpt-4

Default: https://api.openai.com

The OpenAI URL. Both https and http protocols are supported, which allows you to run against a local OpenAI-compatible server.

Default: /v1/chat/completions

The OpenAI Path.

Default: 1

The number of responses to generate to pick from (1-5).

Note, this will use more tokens as it generates more results.

aipick config set OPENAI.generate=2

| Setting | Description | Default |
|---|---|---|
| model | Model(s) to use (comma-separated list) | - |
| host | Ollama host URL | http://localhost:11434 |
| timeout | Request timeout (milliseconds) | 100_000 |

The Ollama model. Please see the list of available models.

aipick config set OLLAMA.model="llama3"

aipick config set OLLAMA.model="llama3,codellama" # for multiple models

aipick config add OLLAMA.model="gemma2" # Only Ollama.model can be added.

OLLAMA.model is a string array so that multiple Ollama models can be used. Please see this section.
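The difference between set (replace the list) and add (append to it) can be sketched like this. The Python below is illustrative only: `parse_models` and `add_model` are hypothetical names, and skipping duplicates on add is an assumption rather than documented aipick behavior.

```python
from typing import List


def parse_models(value: str) -> List[str]:
    """Split a comma-separated value such as "llama3,codellama" into a list."""
    return [name.strip() for name in value.split(",") if name.strip()]


def add_model(current: List[str], value: str) -> List[str]:
    """Return a new list with the given models appended, skipping duplicates."""
    merged = list(current)
    for name in parse_models(value):
        if name not in merged:  # assumption: adding an existing model is a no-op
            merged.append(name)
    return merged


models = parse_models("llama3,codellama")  # like: config set OLLAMA.model="llama3,codellama"
models = add_model(models, "gemma2")  # like: config add OLLAMA.model="gemma2"
print(models)  # ['llama3', 'codellama', 'gemma2']
```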

Default: http://localhost:11434

The Ollama host

aipick config set OLLAMA.host=<host>

Default: 10_000 (10 seconds)

Request timeout for Ollama.

aipick config set OLLAMA.timeout=<timeout>

Ollama does not support the following options in General Settings.

| Setting | Description | Default |

|---|---|---|

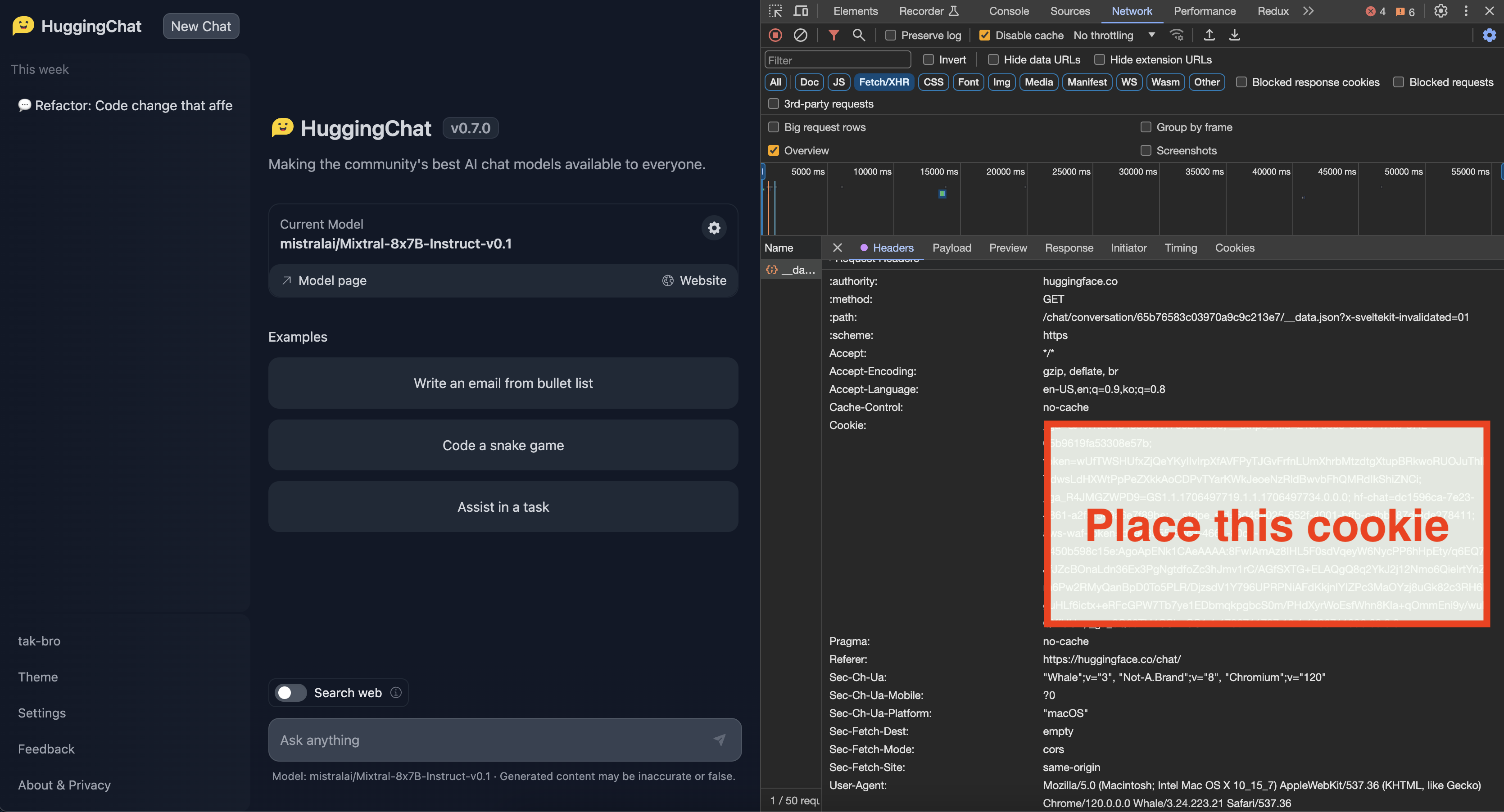

cookie | Authentication cookie | - |

model | Model to use | CohereForAI/c4ai-command-r-plus |

The Huggingface Chat cookie. Please check how to get the cookie.

# Please be cautious of escape characters (\", \') in the browser cookie string

aipick config set HUGGINGFACE.cookie="your-cookie"

Default: CohereForAI/c4ai-command-r-plus

Supported:

- CohereForAI/c4ai-command-r-plus
- meta-llama/Meta-Llama-3-70B-Instruct
- HuggingFaceH4/zephyr-orpo-141b-A35b-v0.1
- mistralai/Mixtral-8x7B-Instruct-v0.1
- NousResearch/Nous-Hermes-2-Mixtral-8x7B-DPO
- 01-ai/Yi-1.5-34B-Chat
- mistralai/Mistral-7B-Instruct-v0.2
- microsoft/Phi-3-mini-4k-instruct

aipick config set HUGGINGFACE.model="mistralai/Mistral-7B-Instruct-v0.2"

Huggingface does not support the following options in General Settings.

| Setting | Description | Default |
|---|---|---|
| key | API key | - |
| model | Model to use | gemini-1.5-pro-latest |

The Gemini API key. If you don't have one, create a key in Google AI Studio.

aipick config set GEMINI.key="your api key"

Default: gemini-1.5-pro-latest

Supported:

- gemini-1.5-pro-latest
- gemini-1.5-flash-latest

aipick config set GEMINI.model="gemini-1.5-flash-latest"

Gemini does not support the following options in General Settings.

| Setting | Description | Default |
|---|---|---|
| key | API key | - |
| model | Model to use | claude-3-haiku-20240307 |

The Anthropic API key. To get started with Anthropic Claude, request access to their API at anthropic.com/earlyaccess.

Default: claude-3-haiku-20240307

Supported:

- claude-3-haiku-20240307
- claude-3-sonnet-20240229
- claude-3-opus-20240229
- claude-3-5-sonnet-20240620

aipick config set ANTHROPIC.model="claude-3-5-sonnet-20240620"

Anthropic does not support the following options in General Settings.

| Setting | Description | Default |
|---|---|---|
| key | API key | - |
| model | Model to use | mistral-tiny |

The Mistral API key. If you don't have one, please sign up and subscribe in Mistral Console.

Default: mistral-tiny

Supported:

- open-mistral-7b
- mistral-tiny-2312
- mistral-tiny
- open-mixtral-8x7b
- mistral-small-2312
- mistral-small
- mistral-small-2402
- mistral-small-latest
- mistral-medium-latest
- mistral-medium-2312
- mistral-medium
- mistral-large-latest
- mistral-large-2402
- mistral-embed

| Setting | Description | Default |
|---|---|---|
| key | API key | - |
| model | Model to use | codestral-latest |

The Codestral API key. If you don't have one, please sign up and subscribe in Mistral Console.

Default: codestral-latest

Supported:

- codestral-latest
- codestral-2405

aipick config set CODESTRAL.model="codestral-2405"

| Setting | Description | Default |
|---|---|---|
| key | API key | - |
| model | Model to use | command |

The Cohere API key. If you don't have one, please sign up and get the API key in Cohere Dashboard.

Default: command

Supported models:

- command
- command-nightly
- command-light
- command-light-nightly

aipick config set COHERE.model="command-r"

Cohere does not support the following options in General Settings.

| Setting | Description | Default |
|---|---|---|
| key | API key | - |
| model | Model to use | gemma-7b-it |

The Groq API key. If you don't have one, please sign up and get the API key in Groq Console.

Default: gemma2-9b-it

Supported:

- gemma2-9b-it
- gemma-7b-it
- llama-3.1-70b-versatile
- llama-3.1-8b-instant
- llama3-70b-8192
- llama3-8b-8192
- llama3-groq-70b-8192-tool-use-preview
- llama3-groq-8b-8192-tool-use-preview

aipick config set GROQ.model="llama3-8b-8192"

| Setting | Description | Default |
|---|---|---|
| key | API key | - |
| model | Model to use | llama-3.1-sonar-small-128k-chat |

The Perplexity API key. If you don't have one, please sign up and get the API key in Perplexity.

Default: llama-3.1-sonar-small-128k-chat

Supported:

- llama-3.1-sonar-small-128k-chat
- llama-3.1-sonar-large-128k-chat
- llama-3.1-sonar-large-128k-online
- llama-3.1-sonar-small-128k-online
- llama-3.1-8b-instruct
- llama-3.1-70b-instruct
- llama-3.1-8b
- llama-3.1-70b

The models mentioned above are subject to change.

aipick config set PERPLEXITY.model="llama-3.1-70b"

Check the installed version with:

aipick --version

If it's not the latest version, run:

npm update -g aipick

aipick supports custom prompt templates through the systemPromptPath option. This feature allows you to define your own system prompt structure, giving you more control over the AI response generation process.

To use a custom prompt template, specify the path to your template file when running the tool:

aipick config set systemPromptPath="/path/to/user/prompt.txt"

Here's an example of how your custom system template might look:

You are a Software Development Tutor.

Your mission is to guide users from zero knowledge to understanding the fundamentals of software.

Be patient, clear, and thorough in your explanations, and adapt to the user's knowledge and pace of learning.

NOTE

- For the systemPromptPath option, set the template path, not the template content.
- If you want to set the template content, use the systemPrompt option.

You can load and make simultaneous requests to multiple models using Ollama's experimental feature, the OLLAMA_MAX_LOADED_MODELS option.

- OLLAMA_MAX_LOADED_MODELS: Load multiple models simultaneously

Follow these steps to set up and utilize multiple models simultaneously:

First, launch the Ollama server with the OLLAMA_MAX_LOADED_MODELS environment variable set. This variable specifies the maximum number of models to be loaded simultaneously.

For example, to load up to 3 models, use the following command:

OLLAMA_MAX_LOADED_MODELS=3 ollama serve

Refer to configuration for detailed instructions.

Next, set up aipick to specify multiple models. You can assign a list of models, separated by commas (,), to the OLLAMA.model setting. Here's how you do it:

aipick config set OLLAMA.model="mistral,llama3.1"

# or

aipick config add OLLAMA.model="mistral"

aipick config add OLLAMA.model="llama3.1"

With this command, aipick is instructed to utilize both the "mistral" and "llama3.1" models when making requests to the Ollama server.

aipick

Note that this feature is available starting from Ollama version 0.1.33.
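To show what "simultaneous requests to multiple models" can look like from the client side, here is a rough Python sketch. It is not how aipick is implemented: `ask_ollama` and `ask_all` are hypothetical helpers, the 10-second timeout is a placeholder, and the code assumes an Ollama server on the default host with its /api/generate endpoint returning the answer in the `response` field.

```python
import json
import urllib.request
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

OLLAMA_HOST = "http://localhost:11434"  # default host from the Ollama settings table


def ask_ollama(model: str, prompt: str, timeout: float = 10.0) -> str:
    """Send one non-streaming request to Ollama's /api/generate endpoint."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    request = urllib.request.Request(
        f"{OLLAMA_HOST}/api/generate",
        data=body,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))["response"]


def ask_all(
    models: List[str], prompt: str, ask: Callable[[str, str], str] = ask_ollama
) -> Dict[str, str]:
    """Query every model in parallel and return the answers keyed by model name."""
    with ThreadPoolExecutor(max_workers=max(1, len(models))) as pool:
        # Submit all requests first so they run concurrently, then collect results.
        futures = {model: pool.submit(ask, model, prompt) for model in models}
        return {model: future.result() for model, future in futures.items()}
```

With the server started as shown earlier, a call such as `ask_all(["mistral", "llama3.1"], "Why is the sky blue?")` would send both requests at once. If OLLAMA_MAX_LOADED_MODELS is lower than the number of models, the requests should still complete, but the server may have to swap models in and out of memory.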

When setting cookies with long string values, make sure to properly escape characters such as " and '.

- For double quotes ("), use \"

- For single quotes ('), use \'
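The two escaping rules can be applied programmatically before pasting a cookie into the set command. The Python helper below is a hypothetical convenience, not part of aipick, and it handles only the two characters listed above.

```python
def escape_cookie(raw: str) -> str:
    """Backslash-escape double and single quotes in a cookie string."""
    return raw.replace('"', '\\"').replace("'", "\\'")


# Example cookie text containing both kinds of quotes.
print(escape_cookie("token=\"abc\"; name='demo'"))
```

Other characters that are special to your shell are not covered by this sketch and may still need attention.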

This project uses functionalities from external APIs but is not officially affiliated with or endorsed by their providers. Users are responsible for complying with API terms, rate limits, and policies.

For bug fixes or feature implementations, please check the Contribution Guide.

If this project has been helpful, please consider giving it a Star ⭐️!

Maintainer: @tak-bro
