Security News

/Research

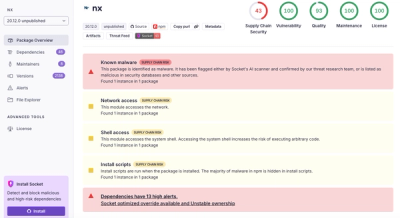

Wallet-Draining npm Package Impersonates Nodemailer to Hijack Crypto Transactions

Malicious npm package impersonates Nodemailer and drains wallets by hijacking crypto transactions across multiple blockchains.

amplify-category-data-importer

Advanced tools

The easiest way to import CSV files into DynamoDB.

View Demo · Report Bug · Request Feature

WARNING: This plugin is in alpha and may undergo backwards-incompatible changes.

Amplify is great at replicating environments, but a database without data is a lonely place.

This project aims to automate the process of seeding/importing for Amplify projects.

Check out Installation to set up an S3 bucket that streams data to your DynamoDB table.

To add this plugin to your Amplify project, follow these simple steps.

npm install -g amplify-category-data-importer

amplify plugin add amplify-category-data-importer

Add the data import resources to your amplify backend directory with:

amplify data-importer add

amplify push

A common use case is to export data from DynamoDB using the AWS Console, make some edits, and re-import it.

Change the name of the CSV file so it looks something like this:

Users-gkcm6todfzh5tlpgntm3lyrrgu-dev.csv

It must match the DynamoDB table you're targeting for upload.
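The naming rule above implies that the import Lambda derives the target table from the name of the uploaded file. Here is a minimal sketch of that mapping, assuming the function is triggered by a standard S3 event notification and that the table name is the file name without its `.csv` extension. `table_name_from_key` and `tables_from_event` are hypothetical helper names, not part of the plugin.

```python
import os
from urllib.parse import unquote_plus


def table_name_from_key(key):
    """Return the DynamoDB table name implied by a (decoded) S3 object key.

    Assumption: the table name is the file name without its .csv extension.
    """
    filename = os.path.basename(key)
    name, ext = os.path.splitext(filename)
    if ext.lower() != ".csv":
        raise ValueError(f"Expected a .csv file, got: {filename}")
    return name


def tables_from_event(event):
    """Collect (bucket, key, table name) for each record in an S3 event."""
    targets = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Object keys arrive URL-encoded in S3 notifications, so decode first.
        key = unquote_plus(record["s3"]["object"]["key"])
        targets.append((bucket, key, table_name_from_key(key)))
    return targets
```

With the file name shown above, `table_name_from_key("Users-gkcm6todfzh5tlpgntm3lyrrgu-dev.csv")` yields `Users-gkcm6todfzh5tlpgntm3lyrrgu-dev`, which is the table the rows would be written to.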

Done! 🎉 Your DynamoDB table is now seeded with data.

By default, every value is uploaded as a string.

If you have other types, edit the Lambda in the AWS Console.

Here's an example function to upload data based on type.

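    import boto3

    # Assumes the Lambda's execution role can write to the target table.
    dynamodb = boto3.resource('dynamodb')


    def write_row_to_dynamo(tableName, row):
        # Look up the table named after the uploaded CSV file.
        try:
            table = dynamodb.Table(tableName)
        except Exception:
            print("Couldn't find DynamoDB table. Make sure the uploaded file name matches the table name.")
            return  # Without a table there is nothing to write to.

        try:
            with table.batch_writer() as batch:
                print(row['id'])
                batch.put_item(Item={
                    'id': row['id'],
                    '__typename': row['__typename'],
                    'updatedAt': row['updatedAt'],
                    'createdAt': row['createdAt'],
                    # CSV values arrive as strings; cast numeric columns explicitly.
                    'count': int(row['count']),
                    'total': int(row['total']),
                })
        except Exception as e:
            print(e)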
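The example above hard-codes which columns are integers. A more general approach might be a per-column cast map. The sketch below assumes the CSV is parsed with Python's `csv.DictReader`, which yields every value as a string, and uses `Decimal` for non-integer numbers because boto3's DynamoDB resource rejects Python `float` values. `cast_row`, `COLUMN_CASTS`, and the `price` column are hypothetical names chosen for illustration.

```python
import csv
import io
from decimal import Decimal

# Hypothetical cast map: column name -> callable that converts the CSV string.
COLUMN_CASTS = {
    "count": int,
    "total": int,
    "price": Decimal,  # use Decimal, not float, for DynamoDB numbers
}


def cast_row(row, casts=COLUMN_CASTS):
    """Return a copy of row with listed columns converted; others stay strings."""
    item = {}
    for column, value in row.items():
        convert = casts.get(column)
        # Leave empty cells untouched rather than failing on int("").
        item[column] = convert(value) if convert and value != "" else value
    return item


# In-memory stand-in for an uploaded CSV file.
sample = io.StringIO("id,count,total,price\nabc,3,10,4.50\n")
rows = [cast_row(r) for r in csv.DictReader(sample)]
```

Each resulting dict could then be passed as `Item` to `batch.put_item` in place of the hand-written mapping.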

The short-term goal is to reduce the number of manual steps required for a CSV import workflow.

See the GitHub Project Roadmap for a list of proposed improvements.

Contributions are what make the open source community such an amazing place to learn, inspire, and create. Any contributions you make are greatly appreciated.

git checkout -b feature/AmazingFeature

git commit -m 'Add some AmazingFeature'

git push origin feature/AmazingFeature

Distributed under the ISC License. See LICENSE for more information.

Twitter - @lordrozar

FAQs


The npm package amplify-category-data-importer receives a total of 11 weekly downloads, so its popularity is classified as not popular.

Its version release cadence and project activity were rated as not healthy because the last version was released a year ago. The project has 1 open source maintainer.
