Product

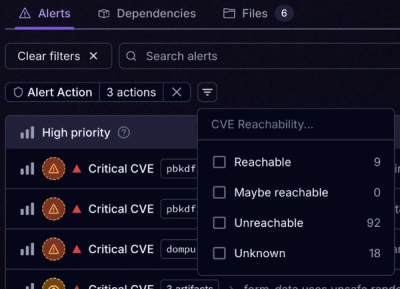

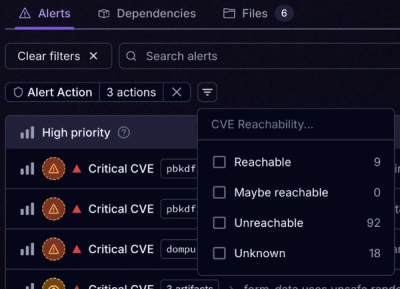

Splits a CSV read stream into multiple write streams or strings.

This library was forked from csv-split-stream. An extra method was added, and the existing code was updated to support async functions within the callbacks. The original library hasn't been updated since 2017, so I decided to revive it. Feel free to submit a PR or open an issue with bug fixes or feature requests.

    npm install chunk-csv

    const fs = require('fs');
    const chunkCsv = require('chunk-csv');

    // Split input.csv into chunks of 10,000 lines, one output file per chunk.
    chunkCsv.splitStream(
      fs.createReadStream('input.csv'),
      {
        lineLimit: 10000
      },
      // Called once per chunk; returns the write stream for that chunk.
      (index) => fs.createWriteStream(`output-${index}.csv`)
    )
    .then(csvSplitResponse => {
      console.log('csv split succeeded!', csvSplitResponse);
      // outputs: {
      //   "totalChunks": 350,
      //   "options": {
      //     "delimiter": "\n",
      //     "lineLimit": "10000"
      //   }
      // }
    }).catch(csvSplitError => {
      console.log('csv split failed!', csvSplitError);
    });

    const http = require('http');
    const chunkCsv = require('chunk-csv');
    const AWS = require('aws-sdk');
    const s3Stream = require('s3-upload-stream')(new AWS.S3());

    // Download a CSV over HTTP and upload each chunk to S3 as its own object.
    function downloadAndSplit(callback) {
      http.get({...}, downloadStream => {
        chunkCsv.splitStream(
          downloadStream,
          {
            lineLimit: 10000
          },
          // Called once per chunk; returns the S3 upload stream for that chunk.
          (index) => s3Stream.upload({
            Bucket: 'testBucket',
            Key: `output-${index}.csv`
          })
        )
        .then(csvSplitResponse => {
          console.log('csv split succeeded!', csvSplitResponse);
          callback(...);
        }).catch(csvSplitError => {
          console.log('csv split failed!', csvSplitError);
          callback(...);
        });
      });
    }

    const fs = require('fs');
    // Assumption: neatCsv comes from the neat-csv package, in a release
    // that can still be loaded with require().
    const neatCsv = require('neat-csv');
    const chunkCsv = require('chunk-csv');

    chunkCsv.split(
      fs.createReadStream('input.csv'),
      {
        lineLimit: 10000
      },
      // Async callback: receives each chunk as a raw CSV string.
      async (chunk, index) => {
        const data = await neatCsv(chunk);
        console.log("Processed Chunk", index);
        console.log(data);
      }
    )
    .then(csvSplitResponse => {
      console.log('csv split succeeded!', csvSplitResponse);
      // outputs: {
      //   "totalChunks": 350,
      //   "options": {
      //     "delimiter": "\n",
      //     "lineLimit": "10000"
      //   }
      // }
    }).catch(csvSplitError => {
      console.log('csv split failed!', csvSplitError);
    });

splitStream(readable, options, callback(index))

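The splitStream method splits a CSV readable stream into multiple write streams. It takes three arguments:

- readable: the CSV readable stream to split.
- options: delimiter (defaults to "\n") and lineLimit, the number of lines per chunk.
- callback: receives an index argument, which specifies the chunk number being processed, and returns the write stream for that chunk.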
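The callback only has to return a writable stream for each index, so it can target memory as easily as a file. The following is a minimal sketch of such a factory using Node's built-in `stream.Writable`. `makeMemorySink` is a hypothetical helper, not part of chunk-csv, and the indices below are chosen by hand for illustration.

```javascript
const { Writable } = require('stream');

// Hypothetical helper (not part of chunk-csv): builds a callback(index)
// factory whose write streams collect each chunk in memory.
function makeMemorySink() {
  const outputs = [];
  const factory = (index) => {
    outputs[index] = '';
    return new Writable({
      write(data, _encoding, done) {
        // Append whatever is written to the string for this chunk.
        outputs[index] += data.toString();
        done();
      }
    });
  };
  return { factory, outputs };
}

// Exercise the factory by hand, the way a splitter might call it.
const sink = makeMemorySink();
sink.factory(0).end('id,name\n1,Ada\n');
sink.factory(1).end('id,name\n2,Grace\n');
console.log(sink.outputs);
```

Passing `sink.factory` as the third argument to `splitStream` should leave one string per chunk in `sink.outputs` once the returned promise resolves, assuming the library only writes to and ends the streams it is given.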

split(readable, options, callback(chunk, index))

The split method splits a CSV readable stream into multiple, smaller strings. It takes three arguments:

- readable: the CSV readable stream to split.
- options: delimiter (defaults to "\n") and lineLimit, the number of lines per chunk.
- callback: receives a chunk argument, the raw CSV data with the specified number of lines, and an index argument, which specifies the chunk number being processed. The callback may be an async function.

This module uses the first row as a header, so make sure your CSV has a header row. A "no headers" option is not supported yet; a solution is in the works.
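To make the header handling concrete, here is a small synchronous stand-in written against plain strings. It is an illustrative sketch, not chunk-csv's implementation: `chunkCsvText` is a hypothetical function, and it assumes the header row is repeated at the top of every chunk and is not counted toward `lineLimit`.

```javascript
// Illustrative stand-in, not chunk-csv's implementation.
// Assumptions: the first row is a header, it is repeated in every chunk,
// and it does not count toward lineLimit.
function chunkCsvText(csvText, { lineLimit, delimiter = '\n' }) {
  // Drop empty lines, such as the one produced by a trailing delimiter.
  const lines = csvText.split(delimiter).filter((line) => line.length > 0);
  const [header, ...rows] = lines;
  const chunks = [];
  for (let start = 0; start < rows.length; start += lineLimit) {
    const body = rows.slice(start, start + lineLimit);
    chunks.push([header, ...body].join(delimiter) + delimiter);
  }
  return chunks;
}

const sample = 'id,name\n1,Ada\n2,Grace\n3,Linus\n';
const chunks = chunkCsvText(sample, { lineLimit: 2 });
console.log(chunks.length); // 2
console.log(chunks);
```

Because each chunk in this sketch carries its own header, every chunk can be handed to a CSV parser independently, which is what the async callback in the `split` example relies on.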

Product

Socket’s new Tier 1 Reachability filters out up to 80% of irrelevant CVEs, so security teams can focus on the vulnerabilities that matter.

Research

/Security News

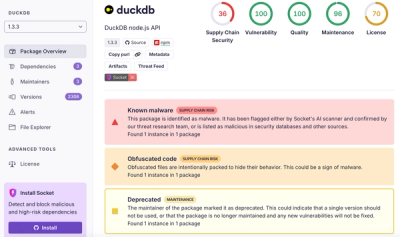

Ongoing npm supply chain attack spreads to DuckDB: multiple packages compromised with the same wallet-drainer malware.

Security News

The MCP Steering Committee has launched the official MCP Registry in preview, a central hub for discovering and publishing MCP servers.