Node CSV (New BSD License)

This project provides CSV parsing and has been tested and used on a large source file (over 2 GB).

Using the library is a four-step process: create a source, create a destination, transform the data, and listen to events.

Here is an example:

    // node samples/sample.js
    var csv = require('csv');

    csv()
    .fromPath(__dirname+'/sample.in')
    .toPath(__dirname+'/sample.out')
    .transform(function(data){
        data.unshift(data.pop());
        return data;
    })
    .on('data',function(data,index){
        console.log('#'+index+' '+JSON.stringify(data));
    })
    .on('end',function(count){
        console.log('Number of lines: '+count);
    })
    .on('error',function(error){
        console.log(error.message);
    });

    // Prints something like:
    // #0 ["2000-01-01","20322051544","1979.0","8.8017226E7","ABC","45"]
    // #1 ["2050-11-27","28392898392","1974.0","8.8392926E7","DEF","23"]
    // Number of lines: 2

Via git (or downloaded tarball):

git clone http://github.com/wdavidw/node-csv-parser.git

Then, simply copy or link the ./lib/csv.js file into your $HOME/.node_libraries folder or inside a declared path folder.

Via npm:

npm install csv

The following methods are available for reading from a source:

fromPath(data, options)

Take a file path as first argument and optionally an object of options as a second argument.

fromStream(readStream, options)

Take a readable stream as first argument and optionally an object of options as a second argument.

from(data, options)

Take a string, a buffer, an array or an object as first argument and optionally some options as a second argument.

Options are:

delimiter

Set the field delimiter, one character only, defaults to comma.

quote

Set the quote character, one character only, defaults to double quotes.

escape

Set the escape character, one character only, defaults to double quotes.

columns

List of fields, or true to autodiscover them from the first CSV line. This affects the transform argument and the data event, which receive an object instead of an array. Order matters; see the transform and the columns sections below.

encoding

Defaults to 'utf8', applied when a readable stream is created.

trim

If true, ignore whitespace immediately around the delimiter, defaults to false.

ltrim

If true, ignore whitespace immediately following the delimiter (i.e. left-trim all fields), defaults to false.

rtrim

If true, ignore whitespace immediately preceding the delimiter (i.e. right-trim all fields), defaults to false.
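
To make the interplay of the delimiter, quote, escape and trim options more concrete, here is a minimal sketch of a single-line parser. It is not the library's implementation: parseLine is a hypothetical helper written for illustration, it handles one line at a time, and it assumes that trimming applies only to unquoted fields.

```javascript
// Illustrative sketch only: parseLine is a hypothetical helper,
// not part of the csv library.
function parseLine(line, options = {}) {
  const delimiter = options.delimiter || ',';
  const quote = options.quote || '"';
  const escape = options.escape || '"';
  const ltrim = Boolean(options.trim || options.ltrim);
  const rtrim = Boolean(options.trim || options.rtrim);
  const isSpace = (c) => c === ' ' || c === '\t';

  const fields = [];
  let field = '';
  let inQuotes = false;
  let wasQuoted = false;

  const pushField = () => {
    // Assumption: only unquoted fields are right-trimmed.
    fields.push(rtrim && !wasQuoted ? field.trimEnd() : field);
    field = '';
    wasQuoted = false;
  };

  for (let i = 0; i < line.length; i += 1) {
    const c = line[i];
    if (inQuotes) {
      if (c === escape && line[i + 1] === quote) {
        field += quote; // escaped quote inside a quoted field
        i += 1;
      } else if (c === quote) {
        inQuotes = false; // closing quote
      } else {
        field += c;
      }
    } else if (c === quote && field === '' && !wasQuoted) {
      inQuotes = true; // opening quote at the start of a field
      wasQuoted = true;
    } else if (c === delimiter) {
      pushField();
    } else if (ltrim && field === '' && !wasQuoted && isSpace(c)) {
      continue; // left-trim: skip whitespace right after the delimiter
    } else {
      field += c;
    }
  }
  pushField();
  return fields;
}

console.log(parseLine('a,"b,c","d ""e"""'));
console.log(parseLine(' a ; b ', { delimiter: ';', trim: true }));
```

With the defaults, the escape character equals the quote character, so a doubled quote inside a quoted field stands for one literal quote.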

The following methods are available for writing to a destination:

write(data, preserve)

Take a string, an array or an object. Implements the StreamWriter API.

end()

Terminate the stream. Implements the StreamWriter API.

toPath(path, options)

Take a file path as first argument and optionally an object of options as a second argument.

toStream(writeStream, options)

Take a writable stream as first argument and optionally an object of options as a second argument.

Options are:

delimiter

Defaults to the delimiter read option.

quote

Defaults to the quote read option.

quoted

Boolean, defaults to false, quote all the fields even if not required.

escape

Defaults to the escape read option.

columns

List of fields, applied when transform returns an object, order matters, see the transform and the columns sections below.

encoding

Defaults to 'utf8', applied when a writable stream is created.

header

Display the column names on the first line if the columns option is provided.

lineBreaks

String used to delimit record rows or a special value; special values are 'auto', 'unix', 'mac', 'windows', 'unicode'; defaults to 'auto' (discovered in source or 'unix' if no source is specified).

flags

Defaults to 'w'; use 'w' to create or overwrite a file, 'a' to append to a file. Applied when using the toPath method.

bufferSize

Internal buffer holding data before being flushed into a stream. Applied when destination is a stream.

end

When set to false, prevents calling end on the destination so that the destination remains writable, similar to passing the {end: false} option to stream.pipe().

newColumns

If the columns option is not specified (which means columns will be taken from the reader options), new columns will automatically be appended if they are added during transform().
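
As an illustration of how the delimiter, quote, escape and quoted write options could combine, the sketch below formats one record as a CSV line. The helper name formatRecord is hypothetical, and the rule used to decide when a field needs quoting (it contains the delimiter, the quote character or a line break) is an assumption for the example, not a description of the library's internals.

```javascript
// Illustrative sketch only: formatRecord is a hypothetical helper,
// not part of the csv library.
function formatRecord(fields, options = {}) {
  const delimiter = options.delimiter || ',';
  const quote = options.quote || '"';
  const escape = options.escape || '"';
  const quoted = Boolean(options.quoted);

  return fields.map((value) => {
    const text = value === null || value === undefined ? '' : String(value);
    // Assumed rule: quote when forced by `quoted` or when the field
    // contains the delimiter, the quote character or a line break.
    const needsQuotes = quoted ||
      text.includes(delimiter) ||
      text.includes(quote) ||
      text.includes('\n') ||
      text.includes('\r');
    if (!needsQuotes) {
      return text;
    }
    // Prefix every quote character inside the field with the escape character.
    // Note: a non-default escape character occurring in the field is not escaped here.
    return quote + text.split(quote).join(escape + quote) + quote;
  }).join(delimiter);
}

console.log(formatRecord(['a', 'b,c', 'say "hi"']));
console.log(formatRecord(['a', 1], { quoted: true, delimiter: ';' }));
```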

The contract of the transform callback is quite simple: you receive an array of fields for each record and return the transformed record. The return value may be an array, an associative array, a string or null. If null, the record is simply skipped.

Unless you specify the columns read option, data are provided as arrays; otherwise they are objects with keys matching the column names.

When the returned value is an array, the fields are merged in order. When the returned value is an object, the library searches for the columns property in the write options or in the read options and orders the values accordingly. If no columns option is found, it merges the values in their order of appearance. When the returned value is a string, it is sent directly to the destination and it is your responsibility to delimit, quote, escape or define line breaks.

Example of transform returning a string:

    // node samples/transform.js
    var csv = require('csv');

    csv()
    .fromPath(__dirname+'/transform.in')
    .toStream(process.stdout)
    .transform(function(data,index){
        return (index>0 ? ',' : '') + data[0] + ":" + data[2] + ' ' + data[1];
    });

    // Prints something like:
    // 82:Zbigniew Preisner,94:Serge Gainsbourg
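
The return-value rules for transform can be summarised in a few lines of code. The sketch below is a simplified model and not the library's code: serializeTransformResult is a hypothetical name, the delimiter is fixed to a comma, and quoting and line breaks are deliberately left out.

```javascript
// Illustrative sketch only: serializeTransformResult is a hypothetical helper
// modelling how each kind of transform return value could be handled.
function serializeTransformResult(result, columns) {
  if (result === null) {
    return null; // null: the record is skipped
  }
  if (typeof result === 'string') {
    return result; // string: sent as-is, formatting is the caller's job
  }
  if (Array.isArray(result)) {
    return result.join(','); // array: fields merged in order
  }
  // object: ordered by the columns option when present,
  // otherwise by order of appearance of the keys
  const keys = columns || Object.keys(result);
  return keys.map((key) => result[key]).join(',');
}

console.log(serializeTransformResult(['82', 'Zbigniew Preisner']));
console.log(serializeTransformResult({ name: 'Serge Gainsbourg', id: '94' }, ['id', 'name']));
console.log(serializeTransformResult(null));
```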

By extending the Node EventEmitter class, the library provides a few useful events:

data (function(data, index){})

Emitted when a new row is parsed, after the transform callback, with the data being the value returned by transform. Note however that the event won't be emitted if transform returns null, since the record is skipped.

The callback provides two arguments:

data is the CSV line being processed (by default as an array)

index is the index number of the line starting at zero

end

If you are redirecting the output to a file using the toPath method, the event is emitted once the writing process is complete and the file is closed.

error

Emitted whenever an error is captured.
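
The event flow described above can be modelled with Node's EventEmitter. This is a rough sketch under stated assumptions, not the library's code: emitRecords is a hypothetical helper, it runs synchronously over in-memory records, and it assumes that the count passed to end is the number of records actually emitted.

```javascript
const { EventEmitter } = require('events');

// Illustrative sketch only: emitRecords is a hypothetical helper that mimics
// the data / end / error events for an in-memory list of records.
function emitRecords(emitter, records, transform) {
  let count = 0;
  try {
    records.forEach((record, index) => {
      const result = transform(record, index);
      if (result === null) {
        return; // skipped record: no data event
      }
      emitter.emit('data', result, index);
      count += 1;
    });
  } catch (error) {
    emitter.emit('error', error);
    return;
  }
  emitter.emit('end', count); // assumption: count of emitted records
}

const emitter = new EventEmitter();
const seen = [];
emitter.on('data', (data, index) => seen.push('#' + index + ' ' + JSON.stringify(data)));
emitter.on('end', (count) => seen.push('Number of lines: ' + count));
emitter.on('error', (error) => seen.push('Error: ' + error.message));

emitRecords(
  emitter,
  [['a', '1'], ['skip', '2'], ['b', '3']],
  (record) => (record[0] === 'skip' ? null : record)
);
console.log(seen.join('\n'));
```

Listeners are attached before the records are processed, which mirrors the chained .on() calls in the first example.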

Column names may be provided, or discovered in the first line, with the columns read option. If defined as an array, the order must match that of the input source. If set to true, the fields are expected to be present in the first line of the input source.

You can define a different order and even different columns in the read options and in the write options. If columns is not defined in the write options, it defaults to the one present in the read options.

When working with columns, the transform method and the data event receive their data parameter as an object whose keys are the field names, instead of an array.

    // node samples/column.js
    var csv = require('csv');

    csv()
    .fromPath(__dirname+'/columns.in',{
        columns: true
    })
    .toStream(process.stdout,{
        columns: ['id', 'name']
    })
    .transform(function(data){
        data.name = data.firstname + ' ' + data.lastname;
        return data;
    });

    // Prints something like:
    // 82,Zbigniew Preisner
    // 94,Serge Gainsbourg
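
The mapping between rows and objects implied by the columns option can be sketched as follows. Both helpers are hypothetical names used for illustration and work on already-parsed rows; the input layout (id, lastname, firstname) is an assumption, since the content of columns.in is not shown here.

```javascript
// Illustrative sketch only: rowsToObjects and objectToRow are hypothetical
// helpers, not part of the csv library.

// Read side: `columns` is either true (names come from the first row)
// or an array of names matching the order of the input fields.
function rowsToObjects(rows, columns) {
  const names = columns === true ? rows[0] : columns;
  const dataRows = columns === true ? rows.slice(1) : rows;
  return dataRows.map((row) => {
    const record = {};
    names.forEach((name, i) => {
      record[name] = row[i];
    });
    return record;
  });
}

// Write side: pick and order the values according to the write columns.
function objectToRow(record, columns) {
  return columns.map((name) => record[name]);
}

// Assumed input layout, for illustration only.
const rows = [
  ['id', 'lastname', 'firstname'],
  ['82', 'Preisner', 'Zbigniew'],
  ['94', 'Gainsbourg', 'Serge'],
];

const output = rowsToObjects(rows, true).map((record) => {
  record.name = record.firstname + ' ' + record.lastname;
  return objectToRow(record, ['id', 'name']).join(',');
});
console.log(output.join('\n'));
```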

Tests are executed with expresso. To install it, simply run npm install -g expresso.

To run the tests:

expresso test

PapaParse is a robust and powerful CSV parser for JavaScript with a similar feature set to csv. It supports browser and server-side parsing, auto-detection of delimiters, and streaming large files. Compared to csv, PapaParse is known for its ease of use and strong browser-side capabilities.

fast-csv is another popular CSV parsing and formatting library for Node.js. It offers a simple API, flexible parsing options, and support for streams. While csv provides a comprehensive set of tools for various CSV operations, fast-csv focuses on performance and ease of use for common tasks.

Did you know?

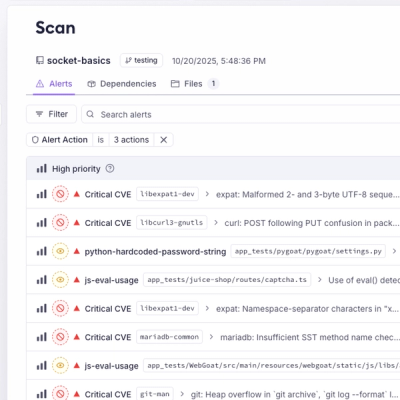

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Product

Add real-time Socket webhook events to your workflows to automatically receive pull request scan results and security alerts in real time.

Research

The Socket Threat Research Team uncovered malicious NuGet packages typosquatting the popular Nethereum project to steal wallet keys.

Product

A single platform for static analysis, secrets detection, container scanning, and CVE checks—built on trusted open source tools, ready to run out of the box.