d-ser-t-service

Dynamic Sentence Error Rate Testing: A Package for testing the CRIS speech-to-text model, quantifying the quality of the model with respect to its Word Error Rate

This project tests speech recognition. It takes sample audio files and their expected transcriptions, and checks in real time whether each audio file is transcribed correctly.
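For readers unfamiliar with the metric, Word Error Rate is commonly defined as the word-level edit distance between the expected and the actual transcription, divided by the number of words in the expected transcription. The sketch below illustrates that definition only; the function names `tokenize` and `wordErrorRate` are hypothetical and are not part of the d-ser-t-service API, and the package's own normalization rules may differ.

```typescript
// Illustrative only: a plain word-level Levenshtein WER, not the package's code.

// Lowercase and split on whitespace. Real normalization may be stricter.
function tokenize(text: string): string[] {
  return text.toLowerCase().trim().split(/\s+/).filter((w) => w.length > 0);
}

// WER = (substitutions + deletions + insertions) / number of reference words.
function wordErrorRate(expected: string, actual: string): number {
  const ref = tokenize(expected);
  const hyp = tokenize(actual);

  // Convention chosen for this sketch: an empty reference yields 0 when the
  // hypothesis is also empty, and 1 otherwise.
  if (ref.length === 0) {
    return hyp.length === 0 ? 0 : 1;
  }

  // prev[j] holds the edit distance between the reference words processed
  // so far and the first j hypothesis words.
  let prev: number[] = Array.from({ length: hyp.length + 1 }, (_, j) => j);
  for (let i = 1; i <= ref.length; i++) {
    const curr: number[] = [i];
    for (let j = 1; j <= hyp.length; j++) {
      const cost = ref[i - 1] === hyp[j - 1] ? 0 : 1;
      curr[j] = Math.min(
        prev[j] + 1, // deletion
        curr[j - 1] + 1, // insertion
        prev[j - 1] + cost // match or substitution
      );
    }
    prev = curr;
  }
  return prev[hyp.length] / ref.length;
}
```

Under this definition a single wrong word in a four-word phrase gives a WER of 0.25, and the value can exceed 1 when the actual transcription contains many extra words.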

This project requires the Microsoft Speech service, a set of audio files, and a corresponding transcriptions.txt file.

Install the project from npm

npm install d-ser-t-service

If you are writing TypeScript, load the harness with import. Otherwise, use require.

// with import

import { CustomSpeechTestHarness } from 'd-ser-t-service';

// with require

const CustomSpeechTestHarness = require('d-ser-t-service').CustomSpeechTestHarness;

Create an instance of the test harness and pass the appropriate values as noted above.

// Arguments are passed positionally, in the order listed here.
const testHarness = new CustomSpeechTestHarness(
    'path/to/audio/directory',                              // audioDirectory
    'number of concurrent calls, ≥ 1',                      // concurrency
    'Custom Speech Endpoint ID',                            // crisEndpointId
    'Speech service region, e.g. westus',                   // serviceRegion
    'path/to/existing/transcription/file',                  // transcriptionFile
    "optional; use if there's a single file to transcribe", // audioFile
    'optional; defaults to ./test_results.json'             // outFile
);

First, we must create the audio files that we wish to test, along with their expected transcriptions.

Audio must be provided as .wav files sampled at 16 kHz. My recommended approach for generating test audio is to record the .wav files with Audacity.

S on each individual label and rename, select right arrow on each label: >, drag right to mark end of phrase/labeling.

location/of/audiodata/folder

As you create your audio files, keep track of the expected transcriptions in a text file called transcriptions.txt. This file has the same structure as the one used for training a custom acoustic model: each line contains the name of an audio file, followed by a tab (\t), followed by the corresponding transcription.
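The tab-separated layout of transcriptions.txt described above can be read with a few lines of code. The following is a minimal sketch, assuming one entry per line and exactly the "file name, tab, transcription" structure; the `TranscriptionEntry` type and `parseTranscriptions` function are hypothetical names used for illustration and do not come from the package.

```typescript
// Illustrative only: parses the "fileName<TAB>transcription" layout.

interface TranscriptionEntry {
  fileName: string;
  transcription: string;
}

function parseTranscriptions(content: string): TranscriptionEntry[] {
  const entries: TranscriptionEntry[] = [];

  // Drop a leading byte order mark if the file was saved as UTF-8 with BOM.
  const text = content.replace(/^\uFEFF/, "");

  for (const rawLine of text.split(/\r?\n/)) {
    if (rawLine.trim() === "") {
      continue; // skip blank lines
    }
    // Split on the first tab only, so the transcription is kept whole.
    const tabIndex = rawLine.indexOf("\t");
    if (tabIndex === -1) {
      throw new Error(`Missing tab separator in line: ${rawLine}`);
    }
    entries.push({
      fileName: rawLine.slice(0, tabIndex).trim(),
      transcription: rawLine.slice(tabIndex + 1).trim(),
    });
  }
  return entries;
}
```

Failing loudly on a line without a tab is a deliberate choice in this sketch: a silently skipped entry would leave an audio file with no expected transcription to compare against.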

Important: TXT files should be encoded as UTF-8 BOM and should not contain any characters above U+00A1 in the Unicode character table. Typical offenders are –, ‘, ‚, “ and similar typographic punctuation. This harness tries to address the problem by cleaning your data.
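If you prefer to clean the transcriptions yourself before running the harness, one possible approach is sketched below. It is an assumption about what such cleaning could look like, not a description of what the harness actually does: the `cleanTranscription` function and the replacement table are hypothetical, and the choice to drop (rather than transliterate) any remaining character above U+00A1 is made only to keep the example short.

```typescript
// Illustrative only: not the harness's own cleaning logic.

// Map common typographic punctuation to plain ASCII equivalents.
const REPLACEMENTS: Array<[RegExp, string]> = [
  [/[\u2018\u2019\u201A]/g, "'"], // single curly quotes and low-9 quote
  [/[\u201C\u201D\u201E]/g, '"'], // double curly quotes and low-9 quote
  [/[\u2013\u2014]/g, "-"], // en dash and em dash
];

function cleanTranscription(text: string): string {
  let cleaned = text;
  for (const [pattern, replacement] of REPLACEMENTS) {
    cleaned = cleaned.replace(pattern, replacement);
  }
  // Remove anything still above U+00A1, the limit stated in the note above.
  return cleaned.replace(/[^\u0000-\u00A1]/g, "");
}
```

Note that dropping characters also removes accented letters such as é (U+00E9), so a real cleanup step may want a transliteration table instead.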

Test results are stored in JSON format, in ./test_results.json by default. The output path can be changed with the outFile option.



Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Research

Security News

The Socket Research Team has discovered six new malicious npm packages linked to North Korea’s Lazarus Group, designed to steal credentials and deploy backdoors.

Security News

Socket CEO Feross Aboukhadijeh discusses the open web, open source security, and how Socket tackles software supply chain attacks on The Pair Program podcast.

Security News

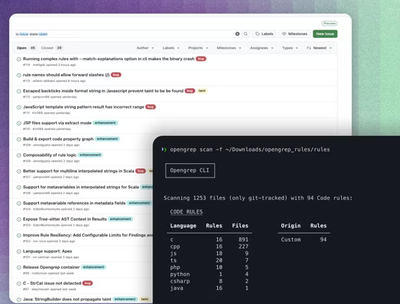

Opengrep continues building momentum with the alpha release of its Playground tool, demonstrating the project's rapid evolution just two months after its initial launch.