elasticsearch-exporter

A utility for exporting data from one Elasticsearch cluster to another

#Elasticsearch-Exporter

A small script to export data from one Elasticsearch cluster into another.

Features:

Many combinations of the options can be used; some are listed here to give you an idea of what can be done. The complete list of options is shown when running the exporter without any parameters. The script tries to guess all missing options, so that, for example, if you specify a target index but no target type, the type will be copied over without any changes. If you find a combination of options that doesn't make sense, please file a bug on GitHub.

// copy all indices from machine a to b

node exporter.js -a localhost -b foreignhost

// copy entire index1 to index2 on same machine

node exporter.js -i index1 -j index2

// copy type1 to type2 in same index

node exporter.js -i index -t type1 -u type2

// copy type1 from index1 to type2 in index2

node exporter.js -i index1 -t type1 -j index2 -u type2

// copy entire index1 from machine a to b

node exporter.js -a localhost -i index1 -b foreignhost

// copy index1 from machine1 to index2 on machine2

node exporter.js -a localhost -i index1 -b foreignhost -j index2

// only copy stuff from machine1 to machine2, that is in the query

node exporter.js -a localhost -b foreignhost -s '{"bool":{"must":{"term":{"field1":"value1"}}}}'

// do not execute any operation on machine2, just see the amount of data that would be queried

node exporter.js -a localhost -b foreignhost -r true

// use basic authentication for source and target connection

node exporter.js -a localhost -A myuser:mypass -b foreignhost -B myuser:mypass
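The query passed with -s in the examples above is a JSON string, which is easy to get wrong when quoting it by hand. A minimal sketch of building it programmatically follows; the helper name buildQueryArgs is hypothetical, and only the flags -a, -b and -s are taken from the examples.

```javascript
// Hypothetical helper: builds the argument list for a filtered export.
// Only the flags -a, -b and -s come from the usage examples above.
function buildQueryArgs(sourceHost, targetHost, field, value) {
  // Same query shape as in the example: a bool/must/term query on one field.
  const query = { bool: { must: { term: { [field]: value } } } };
  return ['exporter.js', '-a', sourceHost, '-b', targetHost, '-s', JSON.stringify(query)];
}

const args = buildQueryArgs('localhost', 'foreignhost', 'field1', 'value1');
// Printed without shell quoting; wrap the query in single quotes when pasting into a shell.
console.log(['node'].concat(args).join(' '));
```

Passing the array to child_process.spawn('node', args) instead of a shell avoids the quoting problem entirely.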

You can now read all configuration from a file. The properties of the JSON file match the extended option names shown in the help output one to one.

// use an options file for configuration

node exporter.js -o myconfig.json

//myconfig.json

{

"sourceHost" : "localhost",

"targetHost" : "foreignhost",

"sourceIndex" : "myindex"

}
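If the options file is generated by another script, it could be written like this. This is only a sketch: the helper names are hypothetical, the file is written to the system temp directory for illustration, and the three property names are the ones from the myconfig.json example above.

```javascript
const fs = require('fs');
const os = require('os');
const path = require('path');

// Property names are taken from the myconfig.json example above.
function buildOptions(sourceHost, targetHost, sourceIndex) {
  return { sourceHost, targetHost, sourceIndex };
}

// Hypothetical helper: serializes the options as JSON and returns the file path.
function writeOptionsFile(file, options) {
  fs.writeFileSync(file, JSON.stringify(options, null, 2) + '\n');
  return file;
}

const optionsFile = writeOptionsFile(
  path.join(os.tmpdir(), 'myconfig.json'),
  buildOptions('localhost', 'foreignhost', 'myindex')
);
// The resulting file can then be passed to the exporter with -o.
console.log('node exporter.js -o ' + optionsFile);
```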

To move data between a database and a file, use the following commands. Note that data files are now compressed by default. To disable compression, use the additional flags shown in the last two commands:

// Export to file from database

node exporter.js -a localhost -i index1 -t type1 -g filename

// Import from file to database

node exporter.js -f filename -b foreignhost -i index2 -t type2

// To override the compression for a given source file

node exporter.js -f filename -c false -b foreignhost -j index2 -u type2

// To override the compression for a target file

node exporter.js -a localhost -i index1 -t type1 -g filename -d false

The tool responds with a number of exit codes that might help determine what went wrong:

0 Operation successful / No documents found to export

1 invalid options

2 source or target database cluster health = red

99 Uncaught Exception

If memory is an issue, pass these parameters and the process will try to run garbage collection before reaching memory limitations:

node --nouse-idle-notification --expose-gc exporter.js ...

Or make use of the script in the tools folder:

tools/ex.sh ...
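When the exporter is started from another Node script, the exit codes listed above and the garbage collection flags can be handled in one place. The following is a rough sketch, not part of the tool: describeExitCode and withGcFlags are hypothetical names, and the flags are copied from the command above as-is.

```javascript
// Exit codes as listed in the text above.
const EXIT_CODES = {
  0: 'Operation successful / No documents found to export',
  1: 'invalid options',
  2: 'source or target database cluster health = red',
  99: 'Uncaught Exception'
};

// Hypothetical helper: maps an exit code to its documented meaning.
function describeExitCode(code) {
  return Object.prototype.hasOwnProperty.call(EXIT_CODES, code)
    ? EXIT_CODES[code]
    : 'unknown exit code ' + code;
}

// Hypothetical helper: prepends the garbage collection flags to the exporter arguments.
function withGcFlags(exporterArgs) {
  return ['--nouse-idle-notification', '--expose-gc', 'exporter.js'].concat(exporterArgs);
}

console.log(describeExitCode(2));
console.log('node ' + withGcFlags(['-a', 'localhost', '-b', 'foreignhost']).join(' '));
```

With child_process.spawnSync('node', withGcFlags(...)), the status property of the returned object holds the exit code that can be passed to describeExitCode.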

To run this script you will need at least node v0.10, as well as the nomnom, colors and through packages (which will be installed automatically via npm).

Run the following command in the directory where you want the tools installed:

npm install elasticsearch-exporter --production

The required packages will be installed automatically as dependencies; you won't have to do anything else to use the tool. If you install the package with the global flag (npm -g), a new executable called "eexport" will also be available on the system.

Note that if you use master instead of a released version from npm, the code is in active development and might not work. For help and questions you can always file an issue.

Exporting a large amount of data can take quite a while. Here are some tips that might help you speed up the process.

In most cases the limiting resource when running the exporter has not been CPU or memory, but network IO and response time from ElasticSearch. In some cases it is possible to speed up the process by reducing network hops. The closer you can get to either the source or target database, the better. Try running on one of the nodes to reduce latency. If you're running a larger cluster, try to run the script on the node that holds most of the shards of the data. This further prevents ElasticSearch from making internal hops.

In some cases requests queue up and fill up memory. When running with garbage collection enabled, the client will wait until memory has been freed if it fills up, but this might also cause the entire process to take longer to finish. Instead, you can try to increase the amount of memory that the node process has available. To do so, pass this option with your desired memory setting to the node executable: --max-old-space-size=600. Note that there is an upper limit to the amount of memory a node process can receive, so at some point it doesn't make much sense to increase it any further.
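To check whether a memory setting actually reached the process, the heap limit can be read back from V8. This is a small sketch that assumes a Node release newer than v0.10, since the built-in v8 module is not available there; heapLimitMb is a hypothetical helper name. The reported value covers the whole heap, so expect it to be somewhat larger than the old space size passed on the command line.

```javascript
const v8 = require('v8');

// Returns the V8 heap size limit of the current process in megabytes (rounded).
function heapLimitMb() {
  return Math.round(v8.getHeapStatistics().heap_size_limit / (1024 * 1024));
}

console.log('heap limit: ' + heapLimitMb() + ' MB');
```

Running it once with and once without --max-old-space-size shows whether the option had any effect.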

It might be the case that your network connection can handle a lot more than is typical and that the script is spending most of its time waiting for sockets to become free. To get around this you can increase the maximum number of sockets on the global http agent by using the option flag for it (--maxSockets). Increase this value to see if it improves anything.
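For reference, this is the setting on the global http agent that the text refers to. The sketch only shows the Node API involved; that the exporter's --maxSockets option sets it in exactly this way is an assumption, and setGlobalMaxSockets is a hypothetical name.

```javascript
const http = require('http');

// Assumption: the --maxSockets option ends up changing this property of the global agent.
// It caps the number of concurrent sockets per host for requests that use the global agent.
function setGlobalMaxSockets(n) {
  http.globalAgent.maxSockets = n;
  return http.globalAgent.maxSockets;
}

console.log('maxSockets is now ' + setGlobalMaxSockets(20));
```

On node v0.10 the default for this property is 5, which is why raising it can matter there; later Node releases default to no limit.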

It might be possible to run the script multiple times in parallel. Since the exporter is single threaded, it will only make use of one core, and performance can be gained by querying ElasticSearch multiple times in parallel. To do so, simply run the exporter tool against individual types or indices instead of the entire cluster. If the bulk of your data is contained in one type, make use of the query parameter to further partition the existing data. Since this requires an understanding of the structure of the existing data, there are no plans for the exporter to attempt any of this optimization automatically.
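One way to start several exporter processes side by side is sketched here, with one process per index. The helper names and the index names are hypothetical; the flags -a, -i and -b are the ones from the usage examples. runAll is defined but not called, since it needs exporter.js and reachable clusters.

```javascript
const { spawn } = require('child_process');

// One argument list per index; flags -a, -i and -b are taken from the usage examples.
function buildPerIndexArgs(sourceHost, targetHost, indices) {
  return indices.map((index) => ['exporter.js', '-a', sourceHost, '-i', index, '-b', targetHost]);
}

// Starts one exporter process per argument list and resolves with their exit codes.
function runAll(argLists) {
  return Promise.all(argLists.map((args) => new Promise((resolve, reject) => {
    const child = spawn('node', args, { stdio: 'inherit' });
    child.on('error', reject);
    child.on('close', (code) => resolve(code));
  })));
}

const jobs = buildPerIndexArgs('localhost', 'foreignhost', ['index1', 'index2']);
jobs.forEach((args) => console.log('node ' + args.join(' ')));
```

Calling runAll(jobs) would launch all processes at once, so the number of indices passed in also decides how much load lands on the cluster at the same time.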

Sometimes the whole pipe from source to target cluster is simply slow, unstable and annoying. In such a case try to export to a local file first. This way you have a complete backup with all the data and can transfer this to the target machine. While this might overall take more time, it might increase the speed of the individual steps.

It might help to change the size of each scan request that fetches data. The option --sourceSize currently defaults to 10. Increasing or decreasing this value might have a great impact on the performance of the actual export.

This tool will only run as fast as your cluster can keep up. If the nodes are under heavy load, errors can occur and the entire process will take longer. How to optimize your cluster is a whole other chapter and depends on the version of ElasticSearch that you're running. Try the official documentation, Google or the ElasticSearch mailing groups for help. The topic is too complex for us to cover here.

To run the tests you must install the development dependencies along with the production dependencies:

npm install elasticsearch-exporter

After that you can just run npm test to see an output of all existing tests.

I try to find all the bugs and have tests to cover all cases, but since I'm working on this project alone, it's easy to miss something. I'm also trying to think of new features to implement, but most of the time I add new features because someone asked me for them. So please report any bugs or feature requests to mallox@pyxzl.net or file an issue directly on GitHub. Thanks!


Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Product

Automatically fix and test dependency updates with socket fix—a new CLI tool that turns CVE alerts into safe, automated upgrades.

Security News

CISA denies CVE funding issues amid backlash over a new CVE foundation formed by board members, raising concerns about transparency and program governance.

Product

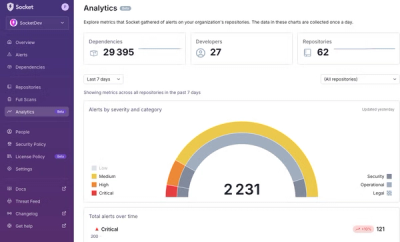

We’re excited to announce a powerful new capability in Socket: historical data and enhanced analytics.