Research

Security News

Lazarus Strikes npm Again with New Wave of Malicious Packages

The Socket Research Team has discovered six new malicious npm packages linked to North Korea’s Lazarus Group, designed to steal credentials and deploy backdoors.

google-cloud-bucket


Google Cloud Bucket is a Node.js package to add objects to a Google Cloud Bucket.

npm i google-cloud-bucket --save

Before using this package, you must first:

Have a Google Cloud Account.

Have a Service Account set up with the following roles:

- roles/storage.objectCreator
- roles/storage.objectAdmin (only if you want to update access to objects or create/delete buckets)
- roles/storage.admin (only if you want to update access to an entire bucket)

Get the JSON key file for that Service Account.

Save that JSON key into a service-account.json file. Make sure it is located under a path that is accessible to your app (the root folder usually).

const { join } = require('path')

const { client } = require('google-cloud-bucket')

const storage = client.new({

jsonKeyFile: join(__dirname, './service-account.json')

})

// LISTING ALL THE BUCKETS IN THAT PROJECT

storage.list().then(console.log)

const someObject = {

firstname: 'Nicolas',

lastname: 'Dao',

company: 'Neap Pty Ltd',

city: 'Sydney'

}

// CREATING A BUCKET (This method will fail if your bucket name is not globally unique. You also need the 'roles/storage.objectAdmin' role)

storage.bucket('your-globally-unique-bucket-name').create()

.then(data => console.log(data))

// CREATING A BUCKET IN SPECIFIC LOCATION (default is US. A detailed list of all the locations can be found in the Annexes of this document)

storage.bucket('your-globally-unique-bucket-name').create({ location: 'australia-southeast1' })

.then(data => console.log(data))

// DELETING A BUCKET

storage.bucket('your-globally-unique-bucket-name').delete({ force:true })

.then(data => console.log(data))

// GET A BUCKET'S SETUP DATA

storage.bucket('your-globally-unique-bucket-name').get()

.then(data => console.log(data))

// ADDING AN OBJECT

storage.insert(someObject, 'your-bucket/a-path/filename.json') // insert an object into a bucket (the path 'a-path/filename.json' does not need to exist yet)

.then(() => storage.get('your-bucket/a-path/filename.json')) // retrieve that new object

.then(res => console.log(JSON.stringify(res, null, ' ')))

// ADDING AN HTML PAGE WITH PUBLIC ACCESS (warning: Your service account must have the 'roles/storage.objectAdmin' role)

const html = `

<!doctype html>

<html>

<body>

<h1>Hello Giiiiirls</h1>

</body>

</html>`

storage.insert(html, 'your-bucket/a-path/index.html', { public: true })

// UPLOADING AN IMAGE (we assume we have access to an image as a buffer variable called 'imgBuffer')

storage.insert(imgBuffer, 'your-bucket/a-path/image.jpg')

// GETTING BACK THE OBJECT

storage.get('your-bucket/a-path/filename.json').then(obj => console.log(obj))

// GETTING THE HTML BACK

storage.get('your-bucket/a-path/index.html').then(htmlString => console.log(htmlString))

// GETTING BACK THE IMAGE

// USE CASE 1 - Loading the entire buffer in memory

storage.get('your-bucket/a-path/image.jpg').then(imgBuffer => console.log(imgBuffer))

// USE CASE 2 - Loading the image on your filesystem

storage.get('your-bucket/a-path/image.jpg', { dst: 'some-path/image.jpg' })

.then(() => console.log(`Image successfully downloaded.`))

// USE CASE 3 - Piping the image buffer into a custom stream reader

const { Writable } = require('stream')

const customReader = new Writable({

write(chunk, encoding, callback) {

console.log('Hello chunk of image')

callback()

}

})

storage.get('your-bucket/a-path/image.jpg', { streamReader: customReader })

.then(() => console.log(`Image successfully downloaded.`))

// TESTING IF A FILE OR A BUCKET EXISTS

storage.exists('your-bucket/a-path/image.jpg')

.then(fileExists => fileExists ? console.log('File exists.') : console.log('File does not exist.'))

// LISTING ALL THE FILES METADATA WITH A FILEPATH THAT STARTS WITH SPECIFIC NAME

storage.list('your-bucket/a-path/')

.then(files => console.log(files))
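Use case 3 above passes a custom Writable as streamReader. As a sketch of what such a reader might do beyond logging, the hypothetical createByteCounter helper below tallies the bytes it receives. It only relies on Node's built-in stream module; the helper name and its bytes() accessor are illustrative and are not part of google-cloud-bucket.

```javascript
const { Writable, Readable } = require('stream')

// Hypothetical helper (not part of google-cloud-bucket): a Writable that
// counts the bytes written to it instead of just logging each chunk.
function createByteCounter() {
  let total = 0
  const counter = new Writable({
    write(chunk, encoding, callback) {
      // With the default decodeStrings: true, chunk is a Buffer here.
      total += chunk.length
      callback()
    }
  })
  // Expose the running total through a small accessor.
  counter.bytes = () => total
  return counter
}

// Local usage without Google Cloud: stream two in-memory chunks through it.
const counter = createByteCounter()
counter.on('finish', () => console.log(`Received ${counter.bytes()} bytes`))
Readable.from([Buffer.from('abc'), Buffer.from('de')]).pipe(counter)
```

Assuming streamReader accepts any Writable (as use case 3 suggests), you could pass createByteCounter() in place of customReader and read bytes() once its 'finish' event fires.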

Bucket API

The examples above show how to insert and query objects using full storage paths. Each of those APIs also has a bucket-centric variant:

// THIS API:

storage.insert(someObject, 'your-bucket/a-path/filename.json')

// CAN BE REWRITTEN AS FOLLOWS:

storage.bucket('your-bucket').object('a-path/filename.json').insert(someObject)

// THIS API:

storage.get('your-bucket/a-path/filename.json').then(obj => console.log(obj))

// CAN BE REWRITTEN AS FOLLOWS:

storage.bucket('your-bucket').object('a-path/filename.json').get().then(obj => console.log(obj))

// THIS API:

storage.exists('your-bucket/a-path/image.jpg')

.then(fileExists => fileExists ? console.log('File exists.') : console.log('File does not exist.'))

// CAN BE REWRITTEN AS FOLLOWS:

storage.bucket('your-bucket').object('a-path/image.jpg').exists()

.then(fileExists => fileExists ? console.log('File exists.') : console.log('File does not exist.'))

// THIS API:

storage.list('your-bucket/a-path/')

.then(files => console.log(files))

// CAN BE REWRITTEN AS FOLLOWS:

storage.bucket('your-bucket').object('a-path/').list()

.then(files => console.log(files))
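Every full storage path used in the first group of examples starts with the bucket name, and the bucket-centric form simply splits it off. The helper below (splitStoragePath, a hypothetical name that is not part of the package) sketches that split, assuming the bucket name is everything before the first '/'.

```javascript
// Hypothetical helper: splits a full storage path such as
// 'your-bucket/a-path/filename.json' into the two arguments used by
// storage.bucket(bucket).object(objectPath).
function splitStoragePath(fullPath) {
  const trimmed = fullPath.replace(/^\/+/, '') // tolerate a leading slash
  const slash = trimmed.indexOf('/')
  if (slash === -1) throw new Error(`No object path in '${fullPath}'`)
  return { bucket: trimmed.slice(0, slash), objectPath: trimmed.slice(slash + 1) }
}

console.log(splitStoragePath('your-bucket/a-path/filename.json'))
```

With it, storage.get('your-bucket/a-path/filename.json') and storage.bucket(bucket).object(objectPath).get() address the same object.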

Publicly Readable Config

You can make any file publicly readable by anybody on the web. This is useful if you want to host a website or publish data (e.g., an RSS feed).

Once your bucket is publicly readable, anyone can access its files at URLs such as: https://storage.googleapis.com/your-bucket/some-path/index.html

WARNING: If that bucket hosts files that should be accessible cross-domain (e.g., an RSS feed), don't forget to also set up CORS (see the next section, Configuring CORS On a Bucket).

const bucket = storage.bucket('your-bucket')

// TEST WHETHER A BUCKET IS PUBLIC OR NOT

bucket.isPublic().then(isPublic => isPublic ? console.log(`Bucket '${bucket.name}' is public`) : console.log(`Bucket '${bucket.name}' is not public`))

// MAKING A BUCKET PUBLICLY READABLE (warning: Your service account must have the 'roles/storage.admin' role)

// Once a bucket is public, all content added to it (even when omitting the 'public' flag) is public

bucket.addPublicAccess()

.then(({ publicUri }) => console.log(`Your web page is publicly available at: ${publicUri}`))

// REMOVING THE PUBLICLY READABLE ACCESS FROM A BUCKET (warning: Your service account must have the 'roles/storage.admin' role)

bucket.removePublicAccess()

// MAKING AN EXISTING OBJECT PUBLICLY READABLE (warning: Your service account must have the 'roles/storage.objectAdmin' role)

bucket.object('a-path/private.html').addPublicAccess()

.then(({ publicUri }) => console.log(`Your web page is publicly available at: ${publicUri}`))

// REMOVING THE PUBLICLY READABLE ACCESS FROM A FILE (warning: Your service account must have the 'roles/storage.objectAdmin' role)

bucket.object('a-path/private.html').removePublicAccess()

It is also possible to make a single file publicly readable in a single command when the file is created:

storage.insert(html, 'your-bucket/a-path/index.html', { public: true })

.then(({ publicUri }) => console.log(`Your web page is publicly available at: ${publicUri}`))

Once your file is publicly readable, anyone can access it at this URL: https://storage.googleapis.com/your-bucket/a-path/index.html

WARNING: If that bucket hosts files that should be accessible cross-domain (e.g., an RSS feed), don't forget to also set up CORS (see the next section, Configuring CORS On a Bucket).

It is also possible to set a file's content encoding in a single command when the file is created:

storage.insert(html, 'your-bucket/a-path/index.html', { contentEncoding: 'gzip' })

.then(({ publicUri }) => console.log(`Your gzipped file is available at: ${publicUri}`))
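The public URLs above follow the pattern https://storage.googleapis.com/<bucket>/<object-path>. The hypothetical publicUri helper below (not part of the package, which already returns publicUri for you) shows how such a URL could be built locally, assuming each path segment should be percent-encoded so that spaces or '#' characters survive while the '/' separators are kept.

```javascript
// Hypothetical helper: builds the public URL of an object, following the
// https://storage.googleapis.com/<bucket>/<object-path> pattern.
function publicUri(bucket, objectPath) {
  // Encode each segment separately so '/' stays a path separator.
  const encoded = objectPath.split('/').map(encodeURIComponent).join('/')
  return `https://storage.googleapis.com/${bucket}/${encoded}`
}

console.log(publicUri('your-bucket', 'a-path/index.html'))
```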

Configuring CORS On a Bucket

Even when your files are publicly readable, browsers may block requests for them made from other websites. To enable other websites to access your files, you have to configure CORS on your bucket:

// CONFIGURE CORS ON A BUCKET (warning: Your service account must have the 'roles/storage.admin' role)

bucket.cors.setup({

origin: ['*'],

method: ['GET', 'OPTIONS', 'HEAD', 'POST'],

responseHeader: ['Authorization', 'Origin', 'X-Requested-With', 'Content-Type', 'Accept'],

maxAgeSeconds: 3600

})

.then(() => console.log(`CORS successfully set up on your bucket.`))

If you want to check if CORS has already been set up on a bucket:

bucket.cors.exists().then(yes => yes

? console.log(`CORS already set up on bucket '${bucket.name}'.`)

: console.log(`CORS not set up yet on bucket '${bucket.name}'.`))

You can also check if a specific CORS config exists:

bucket.cors.exists({

origin: ['*'],

method: ['GET', 'OPTIONS', 'HEAD', 'POST'],

responseHeader: ['Authorization', 'Origin', 'X-Requested-With', 'Content-Type', 'Accept'],

maxAgeSeconds: 3600

}).then(yes => yes

? console.log(`CORS already set up on bucket '${bucket.name}'.`)

: console.log(`CORS not set up yet on bucket '${bucket.name}'.`))
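bucket.cors.exists(config) answers whether a given CORS setup is already present. How the package compares configurations is not documented here, so the sketch below is only one plausible approach: the hypothetical corsConfigExists helper looks for an entry in a bucket's cors array (see GoogleBucketCORS) whose fields match, ignoring array order, comparing HTTP methods and header names case-insensitively, and comparing origins exactly.

```javascript
// Compares two arrays as sets after normalizing each value.
function sameSet(a = [], b = [], normalize = x => x) {
  const left = new Set(a.map(normalize))
  const right = new Set(b.map(normalize))
  return left.size === right.size && [...left].every(x => right.has(x))
}

// Hypothetical helper: true when `wanted` matches one entry of `existing`.
function corsConfigExists(existing = [], wanted) {
  return existing.some(entry =>
    sameSet(entry.origin, wanted.origin) &&
    sameSet(entry.method, wanted.method, m => m.toUpperCase()) &&
    sameSet(entry.responseHeader, wanted.responseHeader, h => h.toLowerCase()) &&
    entry.maxAgeSeconds === wanted.maxAgeSeconds)
}

const cors = [{ origin: ['*'], method: ['GET', 'OPTIONS', 'HEAD', 'POST'], responseHeader: ['Content-Type'], maxAgeSeconds: 3600 }]
console.log(corsConfigExists(cors, { origin: ['*'], method: ['post', 'GET', 'HEAD', 'OPTIONS'], responseHeader: ['content-type'], maxAgeSeconds: 3600 }))
```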

To remove CORS from a bucket:

bucket.cors.disable().then(() => console.log(`CORS successfully disabled on bucket '${bucket.name}'.`))

To host a static website on a bucket, you need to set up 5 things:

You need to set up the service account that you use to manage your bucket (defined in your service-account.json) as an owner of your domain. To do that, first verify your domain ownership using https://search.google.com/search-console/welcome. When that's done, open the settings and select Users and permissions. There, you can add a new owner by entering the email of your service account.

Create a bucket with a name matching your domain (e.g., www.your-domain-name.com)

Make that bucket public. Refer to section Publicly Readable Config above.

Add a new CNAME record in your DNS similar to this:

| Type | Name | Value |
|---|---|---|
| CNAME | www | c.storage.googleapis.com |

Configure the bucket so that index.html is served as the default page and 404.html as the not-found page (otherwise, visitors have to explicitly enter http://www.your-domain-name.com/index.html to reach your website instead of simply entering http://www.your-domain-name.com):

bucket.website.setup({

mainPageSuffix: 'index.html',

notFoundPage: '404.html'

}).then(console.log)
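To make the roles of mainPageSuffix and notFoundPage concrete, here is a deliberately simplified, hypothetical model of how a request path could be mapped to an object once the website setup above is in place. resolveWebsiteObject is an illustrative name; the real Cloud Storage website serving applies additional rules that this sketch ignores.

```javascript
// Simplified, hypothetical model of static-website routing.
// `objects` is a Set of the object names stored in the bucket.
function resolveWebsiteObject(requestPath, objects, { mainPageSuffix, notFoundPage }) {
  const path = requestPath.replace(/^\/+/, '')
  // Directory-style requests ('' or ending with '/') get mainPageSuffix appended.
  const candidate = path === '' || path.endsWith('/') ? path + mainPageSuffix : path
  if (objects.has(candidate)) return { object: candidate, status: 200 }
  // Anything else falls back to the not-found page, served with a 404 status.
  return { object: objects.has(notFoundPage) ? notFoundPage : null, status: 404 }
}

const objects = new Set(['index.html', '404.html', 'blog/index.html', 'style.css'])
const webConfig = { mainPageSuffix: 'index.html', notFoundPage: '404.html' }
console.log(resolveWebsiteObject('/', objects, webConfig))
```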

const bucket = storage.bucket('your-bucket-name')

bucket.object('some-folder-path').zip({

to: {

local: 'some-path-on-your-local-machine',

bucket: {

name: 'another-existing-bucket-name', // Optional (default: Source bucket. In our example, that source bucket is 'your-bucket-name')

path: 'some-folder-path.zip' // Optional (default: 'archive.zip'). If specified, must have the '.zip' extension.

}

},

ignore:[/\.png$/, /\.jpg$/, /\.html$/] // Optional. Array of strings or regex

})

.then(({ count, data }) => {

console.log(`${count} files have been zipped`)

if (data)

// 'data' is null if the 'options.to' is defined

console.log(`The zip file's size is: ${data.length/1024} KB`)

})
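The ignore option above accepts an array of strings or regular expressions. The sketch below shows one way such a filter could work before files are zipped. It is a hypothetical helper, and treating strings as plain substrings is an assumption: the package may interpret them differently (e.g., as globs).

```javascript
// Hypothetical filter: keeps only the file paths not matched by any ignore rule.
// RegExp rules are tested against the path (avoid the /g flag, which makes
// RegExp.prototype.test stateful); string rules are treated as substrings.
function applyIgnore(filePaths, ignore = []) {
  const matchers = ignore.map(rule =>
    rule instanceof RegExp ? p => rule.test(p) : p => p.includes(rule))
  return filePaths.filter(p => !matchers.some(match => match(p)))
}

const files = ['a/logo.png', 'a/photo.jpg', 'a/index.html', 'a/data.json']
console.log(applyIgnore(files, [/\.png$/, /\.jpg$/, /\.html$/]))
```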

Extra Options

You can also track the various steps of the zipping process with the optional 'on' object:

const bucket = storage.bucket('your-bucket-name')

bucket.object('some-folder-path').zip({

to: {

local: 'some-path-on-your-local-machine',

bucket: {

name: 'another-existing-bucket-name', // Optional (default: Source bucket. In our example, that source bucket is 'your-bucket-name')

path: 'some-folder-path.zip' // Optional (default: 'archive.zip'). If specified, must have the '.zip' extension.

}

},

on:{

'files-listed': (files) => {

console.log(`Total number of files to be zipped: ${files.count}`)

console.log(`Raw size: ${(files.size/1024/1024).toFixed(1)} MB`)

// 'files.data' is an array of all the files' details

},

'file-received': ({ file, size }) => {

console.log(`File ${file} (byte size: ${size}) is being zipped`)

},

'finished': ({ size }) => {

console.log(`Zip process completed. The zip file's size is ${size} bytes`)

},

'saved': () => {

console.log('The zipped file has been saved')

},

'error': err => {

console.log(`${err.message}\n${err.stack}`)

}

}

})

.then(({ count, data }) => {

console.log(`${count} files have been zipped`)

if (data)

// 'data' is null if the 'options.to' is defined

console.log(`The zip file's size is: ${data.length/1024} KB`)

})

1. Using a service-account.json

We assume that you have created a Service Account in your Google Cloud Account (using IAM) and that you've downloaded its service-account.json key file (the file name does not matter as long as it is a valid JSON file). The first way to create a client is to provide the path to that file, as shown in the following example:

const storage = client.new({

jsonKeyFile: join(__dirname, './service-account.json')

})

This method is similar to the previous one. You should have downloaded a service-account.json, but instead of providing its path, you provide some of its details explicitly:

const storage = client.new({

clientEmail: 'some-client-email',

privateKey: 'some-secret-private-key',

projectId: 'your-project-id'

})
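If you load the key file yourself (for example from a secret store), the three values above can be taken from it directly: Google service account key files store them under client_email, private_key and project_id. The hypothetical credentialsFromKeyFile helper below (not part of google-cloud-bucket) sketches that mapping and fails early when a field is missing.

```javascript
// Hypothetical helper: maps a parsed service account key file to the options
// accepted by client.new({ clientEmail, privateKey, projectId }).
function credentialsFromKeyFile(key) {
  const { client_email, private_key, project_id } = key || {}
  // Fail early if any required field is missing or empty.
  for (const [field, value] of Object.entries({ client_email, private_key, project_id })) {
    if (typeof value !== 'string' || value.length === 0)
      throw new Error(`Missing '${field}' in service account key`)
  }
  return { clientEmail: client_email, privateKey: private_key, projectId: project_id }
}

console.log(credentialsFromKeyFile({ client_email: 'bot@your-project-id.iam.gserviceaccount.com', private_key: 'some-secret-private-key', project_id: 'your-project-id' }))
```

For example: client.new(credentialsFromKeyFile(JSON.parse(fs.readFileSync('service-account.json', 'utf8')))).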

If you're managing a Google Cloud OAuth2 token yourself (most likely using the google-auto-auth library), you don't need to pass account details explicitly as in the previous two approaches. You can simply specify the projectId:

const storage = client.new({ projectId: 'your-project-id' })

See below for how to pass an OAuth2 token.

Network errors (e.g., socket hang up, connect ECONNREFUSED) are a fact of life. To deal with these non-deterministic errors, this library uses a simple exponential backoff retry strategy, which retries your read or write request for up to 10 seconds by default. You can increase that retry period as follows:

// Retry timeout for CHECKING FILE EXISTS

storage.exists('your-bucket/a-path/image.jpg', { timeout: 30000 }) // 30 seconds retry period timeout

// Retry timeout for INSERTS

storage.insert(someObject, 'your-bucket/a-path/filename.json', { timeout: 30000 }) // 30 seconds retry period timeout

// Retry timeout for QUERIES

storage.get('your-bucket/a-path/filename.json', { timeout: 30000 }) // 30 seconds retry period timeout
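The retry behaviour described above can be pictured with the following minimal sketch. It is not the package's code: retryWithBackoff, initialDelay and maxDelay are hypothetical names, and the sketch assumes a promise-returning operation that is retried on any rejection until timeout milliseconds have elapsed (10 seconds by default, mirroring the default stated above).

```javascript
// Hypothetical sketch of an exponential backoff retry loop (not the package's
// implementation). `operation` must return a promise; any rejection is retried
// until the retry period (`timeout`, in ms) would be exceeded.
function retryWithBackoff(operation, { timeout = 10000, initialDelay = 100, maxDelay = 5000 } = {}) {
  const deadline = Date.now() + timeout
  const attempt = delay =>
    operation().catch(err => {
      // Stop retrying if waiting again would overrun the retry period.
      if (Date.now() + delay > deadline) throw err
      // Wait, then retry with a doubled (but capped) delay.
      return new Promise(resolve => setTimeout(resolve, delay))
        .then(() => attempt(Math.min(delay * 2, maxDelay)))
    })
  return attempt(initialDelay)
}

// Example: an operation that fails twice with a network-style error, then succeeds.
let calls = 0
const flaky = () => ++calls < 3
  ? Promise.reject(new Error('connect ECONNREFUSED'))
  : Promise.resolve('done')

retryWithBackoff(flaky, { timeout: 30000 }).then(result => console.log(result, 'after', calls, 'attempts'))
```

Doubling the delay keeps the first retries quick for brief glitches while backing off during longer outages; the cap stops a single wait from consuming most of the retry period.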

If you've used the 3rd method to create a client (i.e., 3. Using a ProjectId), then all the methods you use require an explicit OAuth2 token:

storage.list({ token }).then(console.log)

All methods accept an optional options object as their last argument.

The Google Cloud Storage API supports partial responses. This lets you return only specific fields rather than all of them, which can improve performance if you're querying a lot of objects. The only method that currently supports partial responses is the list API.

storage.bucket('your-bucket').object('a-folder/').list({ fields:['name'] })

The above example only returns the name field. The full list of supported fields is detailed under bucketObject.list([options]) section.
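Partial responses are computed by the Google Cloud Storage API itself, so less data crosses the network. The sketch below only illustrates the resulting shape on the client side: the hypothetical pickFields helper keeps the whitelisted properties of each object metadata entry, the way list({ fields: ['name'] }) trims its results.

```javascript
// Hypothetical helper: keeps only the requested properties of each metadata
// object (properties absent from an object are simply omitted).
function pickFields(items, fields) {
  return items.map(item =>
    Object.fromEntries(fields.filter(f => f in item).map(f => [f, item[f]])))
}

const listed = [
  { kind: 'storage#object', name: 'a-folder/logo.png', size: '5862' },
  { kind: 'storage#object', name: 'a-folder/style.css', size: '120' }
]
console.log(pickFields(listed, ['name']))
```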

Gets an object located under the bucket's filePath path. Returns <Promise<GoogleBucketObject>>.

- filePath <String>
- options <Object>

Lists buckets for this project or objects under a specific filePath. Returns <Promise<Array<GoogleBucketBase|GoogleBucketObject>>>.

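- filePath <String>
- options <Object>

Returns an array of GoogleBucketBase if no filePath is passed, or an array of GoogleBucketObject if a filePath is passed.

Inserts a new object to filePath. Returns <Promise<GoogleBucketObjectPlus>>.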

- object <Object> Object you want to upload.
- filePath <String> Storage's pathname destination where that object is uploaded, e.g., your-bucket-id/media/css/style.css.
- options <Object>

Inserts a file located at localPath to filePath. Returns <Promise<GoogleBucketObjectPlus>>.

- localPath <String> Absolute path on your local machine of the file you want to upload.
- filePath <String> Storage's pathname destination where that object is uploaded, e.g., your-bucket-id/media/css/style.css.
- options <Object>

Checks whether an object located under the filePath path exists or not. Returns <Promise<Boolean>>.

- filePath <String>
- options <Object>

Grants public access to a file located under the filePath path. Returns <Promise<Object>>.

- filePath <String>
- options <Object>

Removes public access from a file located under the filePath path. Returns <Promise<Object>>.

- filePath <String>
- options <Object>

Gets a bucket object. Returns <Bucket>. This object exposes a series of APIs described under the Bucket API section below.

- bucketId <String>

The bucket object is created using a code snippet similar to the following:

const bucket = storage.bucket('your-bucket-id')

Gets the bucket's name. Returns <String>.

Gets a bucket's metadata object. Returns <Promise<Object>>.

- options <Object>

Checks if a bucket exists. Returns <Promise<Boolean>>.

- options <Object>

Creates a new bucket. Returns <Promise<Object>>.

- options <Object>
  - location <String> Default is US. The full list can be found in section List Of All Google Cloud Platform Locations.

WARNING: A bucket's name must follow clear guidelines (more details in the Annexes under the Bucket Name Restrictions section). To facilitate bucket name validation, a method called validate.bucketName is provided (see the examples in that section).

Deletes a bucket. Returns <Promise<Object>>.

- options <Object>
  - force <Boolean> Default is false. When false, deleting a non-empty bucket throws a 409 error. When set to true, even a non-empty bucket is deleted. Behind the scenes, the entire content is deleted first, which is why forcing a bucket deletion might take longer.

The resolved object contains:

- count <Number> The number of files deleted. This count does not include the bucket itself (e.g., 0 means that the bucket was empty and was deleted successfully).
- data <Object> Extra information about the deleted bucket.

Updates a bucket. Returns <Promise<Object>>.

- config <Object>
- options <Object>

Grants public access to a bucket as well as all its files. Returns <Promise<Object>>.

- options <Object>

Removes public access from a bucket as well as all its files. Returns <Promise<Object>>.

- options <Object>

Checks if a bucket is public or not. Returns <Promise<Boolean>>.

- options <Object>

Zips a bucket. Returns <Promise<Object>>.

- options <Object>

Checks if a bucket has been configured with a specific CORS setup. Returns <Promise<Boolean>>.

- corsConfig <Object>
- options <Object>

Configures a bucket with a specific CORS setup. Returns <Promise<Object>>.

- corsConfig <Object>
- options <Object>

Removes any CORS setup from a bucket. Returns <Promise<Object>>.

- options <Object>

Configures a bucket with a specific website setup. Returns <Promise<Object>>.

NOTE: This API does not change the bucket access state. You should make this bucket public first using the bucket.addPublicAccess API described above.

- webConfig <Object>
  - mainPageSuffix <String> This is the file served by default when the website's pathname does not specify an explicit file name (e.g., use index.html so that https://your-domain.com is equivalent to https://your-domain.com/index.html).
  - notFoundPage <String> This is the page served when a user enters a URL that does not match any file (e.g., 404.html).
- options <Object>

Gets a bucket's object reference. Returns <BucketObject>. This object exposes a series of APIs detailed in the next section, BucketObject API.

- filePath <String> This path represents a file or a folder.

The bucketObject object is created using a code snippet similar to the following:

const bucket = storage.bucket('your-bucket-id')

const bucketObject = bucket.object('folder1/folder2/index.html')

Gets the bucketObject's file name. Returns <String>.

Gets the bucket object. Returns <Promise<Object>>.

- options <Object>
  - headers <Object> The content type of each object request is automatically determined by the file's URL. However, there are scenarios where the extension is unknown or the content type must be overridden. In that case, this option can be used as follows: { headers: { 'Content-Type': 'application/json' } }.

Lists all the objects located under the bucket object (if that bucket object is a folder). Returns <Promise<Array<Object>>>.

- options <Object>
  - pattern <String|Array<String>> Filters results using a glob pattern or an array of globbing patterns (e.g., '**/*.png' to only get png images).
  - ignore <String|Array<String>> Filters results using a glob pattern or an array of globbing patterns to ignore some files or folders (e.g., '**/*.png' to return everything except png images).
  - fields <Array<String>> Whitelists the properties that are returned to improve network performance. The available fields are: 'kind', 'id', 'selfLink', 'name', 'bucket', 'generation', 'metageneration', 'contentType', 'timeCreated', 'updated', 'storageClass', 'timeStorageClassUpdated', 'size', 'md5Hash', 'mediaLink', 'crc32c', 'etag'.

Checks if a bucket object exists or not. Returns <Promise<Boolean>>.

- options <Object>

Inserts a new object to that bucket object. Returns <Promise<GoogleBucketObjectPlus>>.

- object <Object> Object you want to upload.
- options <Object>
  - headers <Object> The content type of each object request is automatically determined by the file's URL. However, there are scenarios where the extension is unknown or the content type must be overridden. In that case, this option can be used as follows: { headers: { 'Content-Type': 'application/json' } }.

Inserts a file located at localPath to that bucket object. Returns <Promise<GoogleBucketObjectPlus>>.

- localPath <String> Absolute path on your local machine of the file you want to upload.
- options <Object>

Deletes an object or an entire folder. Returns <Promise<Object>>.

- options <Object>
  - type <String> Valid values are 'file' or 'folder'. By default, the type is determined based on the filePath used when creating the bucketObject (const bucketObject = storage.bucket(bucketId).object(filePath)), but this can lead to errors. It is recommended to set this option explicitly.

The resolved object contains:

- count <Number> The number of files deleted.

NOTE: To delete a folder, the argument used to create the bucketObject must be a folder (e.g., storage.bucket('your-bucket-id').object('folderA/folderB').delete()).

Lists all the objects located under the bucket object (if that bucket object is a folder). Returns <Promise<Object>>.

- options <Object>

Grants public access to a bucket object. Returns <Promise<Object>>.

- options <Object>

Removes public access from a bucket object. Returns <Promise<Object>>.

- options <Object>

GoogleBucketBase:

- kind <String> Always set to 'storage#bucket'.
- id <String>
- selfLink <String>
- projectNumber <String>
- name <String>
- timeCreated <String> UTC date, e.g., '2019-01-18T05:57:24.213Z'.
- updated <String> UTC date, e.g., '2019-01-18T05:57:32.548Z'.
- metageneration <String>
- iamConfiguration <Object> E.g., { bucketPolicyOnly: { enabled: false } }.
- location <String> Valid values are described in section List Of All Google Cloud Platform Locations.
- website <GoogleBucketWebsite>
- cors <Array<GoogleBucketCORS>>
- storageClass <String>
- etag <String>

Same as GoogleBucketBase with an extra property:

- iam <GoogleBucketIAM>

GoogleBucketWebsite:

- mainPageSuffix <String> Defines the default file served when none is explicitly specified in a URI. For example, setting this property to 'index.html' means that this content can be reached using https://your-domain.com instead of https://your-domain.com/index.html.
- notFoundPage <String> E.g., '404.html'. This means that requests for any page that is not found are answered with this page.

GoogleBucketCORS:

- origin <Array<String>>
- method <Array<String>>
- responseHeader <Array<String>>
- maxAgeSeconds <Number>

GoogleBucketIAM:

- kind <String> Always set to 'storage#policy'.
- resourceId <String>
- bindings <Array<GoogleBucketBindings>>
- etag <String>

GoogleBucketBindings:

- role <String> E.g., 'roles/storage.objectViewer', ...
- members <Array<String>> E.g., 'allUsers', 'projectEditor:your-project-id', 'projectOwner:your-project-id', ...

GoogleBucketObject:

- kind <String> Always set to 'storage#object'.
- id <String>
- selfLink <String>
- name <String> Pathname to the object inside the bucket, e.g., 'new/css/line-icons.min.css'.
- bucket <String> Bucket's ID.
- generation <String> Date stamp in milliseconds from epoch.
- metageneration <String>
- contentType <String> E.g., 'text/css'.
- timeCreated <String> UTC date, e.g., '2019-04-02T22:33:18.362Z'.
- updated <String> UTC date, e.g., '2019-04-02T22:33:18.362Z'.
- storageClass <String> Valid values are: 'STANDARD', 'MULTI_REGIONAL', 'NEARLINE', 'COLDLINE'.
- timeStorageClassUpdated <String> UTC date, e.g., '2019-04-02T22:33:18.362Z'.
- size <String> Size in bytes, e.g., '5862'.
- md5Hash <String>
- mediaLink <String>
- crc32c <String>
- etag <String>

GoogleBucketObjectPlus: same as GoogleBucketObject, but with the following extra property:

- publicUri <String> If the object is publicly available, this URI indicates where it is located. This URI follows this structure: https://storage.googleapis.com/your-bucket-id/your-file-path.

Follow these rules to choose a bucket's name (these rules were extracted from the official Google Cloud Storage documentation):

To help validate if a name follows those rules, this package provides a utility method called validate.bucketName:

const { utils:{ validate } } = require('google-cloud-bucket')

validate.bucketName('hello') // => { valid:true, reason:null }

validate.bucketName('hello.com') // => { valid:true, reason:null }

validate.bucketName('he_llo-23') // => { valid:true, reason:null }

validate.bucketName('_hello') // => { valid:false, reason:'The bucket name must start and end with a number or letter.' }

validate.bucketName('hEllo') // => { valid:false, reason:'The bucket name must contain only lowercase letters, numbers, dashes (-), underscores (_), and dots (.).' }

validate.bucketName('192.168.5.4') // => { valid:false, reason:'The bucket name cannot be represented as an IP address in dotted-decimal notation (for example, 192.168.5.4).' }

validate.bucketName('googletest') // => { valid:false, reason:'The bucket name cannot begin with the "goog" prefix or contain close misspellings, such as "g00gle".' }
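validate.bucketName already performs these checks for you. Purely to make the rules concrete, here is a local approximation; checkBucketName is a hypothetical name, it is not the package's source, and it only covers the rules illustrated by the reasons above plus Google Cloud Storage's documented length limits (3 to 63 characters, or up to 222 for names containing dots, with each dot-separated component at most 63 characters). The misspelling check is a rough approximation.

```javascript
// Hypothetical approximation of the bucket-naming rules above.
function checkBucketName(name) {
  const fail = reason => ({ valid: false, reason })
  const maxLength = name.includes('.') ? 222 : 63
  if (name.length < 3 || name.length > maxLength)
    return fail(`The bucket name must contain 3 to ${maxLength} characters.`)
  if (!/^[a-z0-9._-]+$/.test(name))
    return fail('The bucket name must contain only lowercase letters, numbers, dashes (-), underscores (_), and dots (.).')
  if (!/^[a-z0-9].*[a-z0-9]$/.test(name))
    return fail('The bucket name must start and end with a number or letter.')
  if (name.split('.').some(part => part.length > 63))
    return fail('Each dot-separated component of the bucket name must contain at most 63 characters.')
  if (/^\d{1,3}(\.\d{1,3}){3}$/.test(name))
    return fail('The bucket name cannot be represented as an IP address in dotted-decimal notation (for example, 192.168.5.4).')
  // Rough check for the "goog" prefix and close misspellings of "google".
  if (name.startsWith('goog') || /g[o0][o0]g[l1]e/.test(name))
    return fail('The bucket name cannot begin with the "goog" prefix or contain close misspellings, such as "g00gle".')
  return { valid: true, reason: null }
}

console.log(checkBucketName('he_llo-23'), checkBucketName('_hello'))
```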

Single regions are bucket locations where your data is replicated across multiple servers within that single region. Though it is unlikely that you would lose your data because all servers fail, a network failure could make that region inaccessible. In that case, your data would not be lost, but it would be unavailable for the duration of the network outage. This type of storage is the cheapest.

Use this type of location if your data:

If these limits are too strict for your use case, you should probably use a multi-region location instead.

| Location | Description |
|---|---|
| northamerica-northeast1 | Canada - Montréal |
| us-central1 | US - Iowa |
| us-east1 | US - South Carolina |
| us-east4 | US - Northern Virginia |
| us-west1 | US - Oregon |
| us-west2 | US - Los Angeles |
| southamerica-east1 | South America - Brazil |
| europe-north1 | Europe - Finland |
| europe-west1 | Europe - Belgium |
| europe-west2 | Europe - England |
| europe-west3 | Europe - Germany |
| europe-west4 | Europe - Netherlands |
| asia-east1 | Asia - Taiwan |
| asia-east2 | Asia - Hong Kong |
| asia-northeast1 | Asia - Japan |
| asia-south1 | Asia - Mumbai |
| asia-southeast1 | Asia - Singapore |
| australia-southeast1 | Asia - Australia |
| asia | Asia |
| us | US |
| eu | Europe |

Multi-regions are bucket locations where your data is not only replicated across multiple servers in the same region, but also replicated across multiple locations (e.g., asia will replicate your data across Taiwan, Hong Kong, Japan, Mumbai, Singapore, Australia). That means that your data is:

| Location | Description |
|---|---|
| asia | Asia |
| us | US |
| eu | Europe |

We are Neap, an Australian Technology consultancy powering the startup ecosystem in Sydney. We simply love building Tech and also meeting new people, so don't hesitate to connect with us at https://neap.co.

Our other open-sourced projects:

Copyright (c) 2018, Neap Pty Ltd. All rights reserved.

Redistribution and use in source and binary forms, with or without modification, are permitted provided that the following conditions are met:

THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS "AS IS" AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE ARE DISCLAIMED. IN NO EVENT SHALL NEAP PTY LTD BE LIABLE FOR ANY DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES; LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY, OR TORT (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

FAQs

Nodejs package to manage Google Cloud Buckets and its objects.

The npm package google-cloud-bucket receives a total of 134 weekly downloads. As such, google-cloud-bucket popularity was classified as not popular.

We found that google-cloud-bucket demonstrated a not healthy version release cadence and project activity because the last version was released a year ago. It has 1 open source maintainer collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Research

Security News

The Socket Research Team has discovered six new malicious npm packages linked to North Korea’s Lazarus Group, designed to steal credentials and deploy backdoors.

Security News

Socket CEO Feross Aboukhadijeh discusses the open web, open source security, and how Socket tackles software supply chain attacks on The Pair Program podcast.

Security News

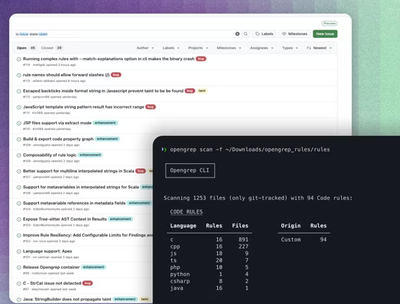

Opengrep continues building momentum with the alpha release of its Playground tool, demonstrating the project's rapid evolution just two months after its initial launch.