media-captions

Captions parsing and rendering library built for the modern web.

Features

fetch and ReadableStream APIs.

➕ Planning to also add TTML, CEA-608, and CEA-708 parsers that will map to VTT and render correctly. In addition, custom font loading and text codes support is planned for SSA/ASS captions. We don't have an exact date, but most likely after Vidstack Player 1.0. If urgent and you're willing to sponsor, feel free to email me at rahim.alwer@gmail.com.

🔗 Quicklinks

The StackBlitz link below showcases a simple example of how captions are fetched, parsed, and rendered over a native video element. We plan on adding more examples for additional captions formats and scenarios.

❓ Are native captions not good enough?

Simply put, no.

::cue selector is inconsistent across browsers and severely limited with respect to even basic movement and styles.

Did you know closed captions are governed by the Federal Communications Commission (FCC) under the Twenty-First Century Communications and Video Accessibility Act (CVAA)? Not providing captions and adequate customization options on the web for content that was shown on TV doesn't meet guidelines 😱 Filed lawsuits have dramatically increased in recent years! See the amazing Caption Me If You Can talk at Demuxed by Dan Sparacio to learn more.

❓ What about mozilla/vtt?

The library is old, outdated, and unmaintained.

First, install the NPM package:

npm i media-captions

Next, include styles if you plan on rendering captions using the CaptionsRenderer:

import 'media-captions/styles/captions.css';

// Optional - include if rendering VTT regions.

import 'media-captions/styles/regions.css';

Alternatively, you can load the styles directly from a CDN using jsDelivr like so:

<link rel="stylesheet" href="https://cdn.jsdelivr.net/npm/media-captions/styles/captions.min.css" />

<!-- Optional - include if rendering VTT regions. -->

<link rel="stylesheet" href="https://cdn.jsdelivr.net/npm/media-captions/styles/regions.min.css" />

All parsing functions exported from this package accept the following options:

strict: Whether strict mode is enabled. In strict mode, parsing errors will throw and cancel the parsing process.

errors: Whether errors should be collected and reported in the final parser result. By default, this value will be true in dev mode or if strict mode is true. If set to true and strict mode is false, the onError callback will be invoked. Do note, setting this to true will dynamically load error builders, which will slightly increase bundle size (~1kB).

type: The type of the captions file format so the correct parser is loaded. Options include vtt, srt, ssa, ass, or a custom CaptionsParser object.

onHeaderMetadata: Callback that is invoked when the metadata from the header block has been parsed.

onCue: Invoked when a VTT cue block has finished parsing and a VTTCue has been created. Do note, a VTTCue will be created regardless of which captions file format is provided.

onRegion: Invoked when a VTT region block has finished parsing and a VTTRegion has been created.

onError: Invoked when a loading or parser error is encountered. Do note, this is only invoked in development, if the strict parsing option is true, or if the errors parsing option is true.

Options can be provided to any parsing function like so:

import { parseText } from 'media-captions';

parseText('...', {

strict: false,

type: 'vtt',

onCue(cue) {

// ...

},

onError(error) {

// ...

},

});

All parsing functions exported from this package return a Promise which will resolve to a ParsedCaptionsResult object with the following properties:

metadata: An object containing all metadata that was parsed from the header block.

regions: An array containing VTTRegion objects that were parsed and created during the parsing process.

cues: An array containing VTTCue objects that were parsed and created during the parsing process.

errors: An array containing ParseError objects. Do note, errors will only be collected if in development mode, if the strict parsing option is set to true, or if the errors parsing option is set to true.

import { parseText } from 'media-captions';

// `ParsedCaptionsResult`

const { metadata, regions, cues, errors } = await parseText('...');

for (const cue of cues) {

// ...

}

By default, parsing is error tolerant and will always try to recover. You can set strict mode to ensure errors are not tolerated and are instead thrown. The text stream and parsing process will also be cancelled.

import { parseText, type ParseError } from 'media-captions';

try {

// Any error will now throw and cancel parsing.

await parseText('...', { strict: true });

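} catch (error) {

// TypeScript only allows `unknown` or `any` on a catch variable, so narrow it here.

const { code, message, line } = error as ParseError;

console.log(code, message, line);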

}

A more tolerant error collection option is to set the errors parsing option to true. This

will ensure the onError callback is invoked and also errors are reported in the final

result (this will add ~1kB to the bundle size):

import { parseText } from 'media-captions';

const { errors } = await parseText('...', {

errors: true, // Not required if you only want errors in dev mode.

onError(error) {

error; // `ParseError`

},

});

for (const error of errors) {

// ...

}

The ParseError contains a numeric error code that matches the following values:

const ParseErrorCode = {

LoadFail: 0,

BadSignature: 1,

BadTimestamp: 2,

BadSettingValue: 3,

BadFormat: 4,

UnknownSetting: 5,

};

The ParseErrorCode object can be imported from the package.
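When logging errors, it can help to turn the numeric code back into a readable name. The sketch below assumes the code values listed above and uses a local copy of the table so that it runs on its own (in an application you would import ParseErrorCode from the package instead). describeParseErrorCode is a hypothetical helper, not part of the library.

```typescript
// Local copy of the codes listed above, so the sketch is self-contained.
const ParseErrorCode = {
  LoadFail: 0,
  BadSignature: 1,
  BadTimestamp: 2,
  BadSettingValue: 3,
  BadFormat: 4,
  UnknownSetting: 5,
} as const;

type ParseErrorName = keyof typeof ParseErrorCode;

// Hypothetical helper: maps a numeric error code back to its name.
function describeParseErrorCode(code: number): ParseErrorName | 'Unknown' {
  for (const name of Object.keys(ParseErrorCode) as ParseErrorName[]) {
    if (ParseErrorCode[name] === code) return name;
  }
  return 'Unknown';
}

console.log(describeParseErrorCode(2)); // prints "BadTimestamp"
```

A helper like this could be called from an onError callback with error.code to produce friendlier log messages.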

parseText

This function accepts a text string as input to be parsed:

import { parseText } from 'media-captions';

const { cues } = await parseText('...');

parseTextStream

This function accepts a text stream (ReadableStream<string>) as input to be parsed:

import { parseTextStream } from 'media-captions';

const stream = new ReadableStream<string>({

start(controller) {

controller.enqueue('...');

controller.enqueue('...');

controller.enqueue('...');

// ...

controller.close();

},

});

// `ParsedCaptionsResult`

const result = await parseTextStream(stream, {

onCue(cue) {

// ...

},

});

parseResponse

The parseResponse function accepts a Response or Promise<Response> object. It can be seamlessly used with fetch to parse a response body stream like so:

import { ParseErrorCode, parseResponse } from 'media-captions';

// `ParsedCaptionsResult`

const result = await parseResponse(fetch('/media/subs/english.vtt'), {

onCue(cue) {

// ...

},

onError(error) {

if (error.code === ParseErrorCode.LoadFail) {

console.log(error.message);

}

},

});

The captions type will be inferred from the response header content-type field. You can specify the captions format explicitly like so:

parseResponse(..., { type: 'vtt' });
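If the server does not send a useful content-type header, one option is to derive the type from the file extension before calling parseResponse. This is a minimal sketch: guessCaptionsType is a hypothetical helper (not part of the library), and it only knows the four built-in type names listed in the options above.

```typescript
type KnownCaptionsType = 'vtt' | 'srt' | 'ssa' | 'ass';

const KNOWN_TYPES: readonly KnownCaptionsType[] = ['vtt', 'srt', 'ssa', 'ass'];

// Hypothetical helper: derives the `type` option from a URL's file extension.
function guessCaptionsType(url: string): KnownCaptionsType | undefined {
  // Drop any query string or hash before looking at the extension.
  const [path = ''] = url.split(/[?#]/);
  const dot = path.lastIndexOf('.');
  if (dot === -1) return undefined;
  const ext = path.slice(dot + 1).toLowerCase();
  return KNOWN_TYPES.find((type) => type === ext);
}

console.log(guessCaptionsType('/media/subs/english.vtt')); // prints "vtt"
```

When the helper returns a value, it can be passed as the type option; when it returns undefined, it is probably best to omit the option and rely on the inference described above.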

The text encoding will be inferred from the response header and forwarded to the underlying TextDecoder. You can specify a specific encoding like so:

parseResponse(..., { encoding: 'utf8' });

parseByteStream

This function is used to parse byte streams (ReadableStream<Uint8Array>). It's used by the parseResponse function to parse response body streams. It can be used like so:

import { parseByteStream } from 'media-captions';

const byteStream = new ReadableStream<Uint8Array>({

// ...

});

const result = await parseByteStream(byteStream, {

encoding: 'utf8',

onCue(cue) {

// ...

},

});

CaptionsParser

You can create a custom caption parser and provide it to the type option on any parse function. The parser can be created and provided like so:

import {

type CaptionsParser,

type CaptionsParserInit,

type ParsedCaptionsResult,

} from 'media-captions';

class CustomCaptionsParser implements CaptionsParser {

/**

* Called when initializing the parser before the

* parsing process begins.

*/

init(init: CaptionsParserInit): void | Promise<void> {

// ...

}

/**

* Called when a new line of text has been read and

* requires parsing. This includes empty lines which

* can be used to separate caption blocks.

*/

parse(line: string, lineCount: number): void {

// ...

}

/**

* Called when parsing has been cancelled, or has

* naturally ended as there are no more lines of

* text to be parsed.

*/

done(cancelled: boolean): ParsedCaptionsResult {

// ...

}

}

// Custom parser can be provided to any parse function.

parseText('...', {

type: () => new CustomCaptionsParser(),

});
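To make the init/parse/done lifecycle more concrete, here is a minimal, self-contained sketch of a line-based parser for a made-up `start|end|text` format. SimpleCue, SimpleResult, and PipeCaptionsParser are simplified local stand-ins invented for illustration; a real implementation would use the CaptionsParser, CaptionsParserInit, and ParsedCaptionsResult types from the package. The 1-based line count is also an assumption.

```typescript
// Simplified local stand-ins; the real types come from 'media-captions'.
interface SimpleCue {
  startTime: number;
  endTime: number;
  text: string;
}

interface SimpleResult {
  cues: SimpleCue[];
  skippedLines: number[];
}

// Parses a made-up format where each line is `start|end|text` (in seconds).
class PipeCaptionsParser {
  private cues: SimpleCue[] = [];
  private skippedLines: number[] = [];

  init(): void {
    this.cues = [];
    this.skippedLines = [];
  }

  parse(line: string, lineCount: number): void {
    if (line.trim() === '') return; // Empty lines only separate blocks.
    const [start, end, ...rest] = line.split('|');
    const startTime = Number(start);
    const endTime = Number(end);
    if (rest.length === 0 || Number.isNaN(startTime) || Number.isNaN(endTime)) {
      this.skippedLines.push(lineCount); // Error tolerant: record and move on.
      return;
    }
    this.cues.push({ startTime, endTime, text: rest.join('|') });
  }

  done(_cancelled: boolean): SimpleResult {
    return { cues: this.cues, skippedLines: this.skippedLines };
  }
}

// Drive the parser by hand, roughly the way a parse function would.
const parser = new PipeCaptionsParser();
parser.init();
'0|2|Hello, Joe!\n\nnot a cue\n2|4|Hello, Jane!'
  .split('\n')
  .forEach((line, index) => parser.parse(line, index + 1));
const result = parser.done(false);
console.log(result.cues.length, result.skippedLines);
```

Keeping all state on the instance and resetting it in init means one parser object can be reused safely, which is one reasonable design choice rather than a requirement stated by the library.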

createVTTCueTemplate

This function takes a VTTCue and renders the cue text string into an HTML template element and returns a VTTCueTemplate. The template can be used to efficiently store and clone the rendered cue HTML like so:

import { createVTTCueTemplate, VTTCue } from 'media-captions';

const cue = new VTTCue(0, 10, '<v Joe>Hello world!');

const template = createVTTCueTemplate(cue);

template.cue; // original `VTTCue`

template.content; // `DocumentFragment`

// <span title="Joe" part="voice">Hello world!</span>

const cueHTML = template.content.cloneNode(true);

renderVTTCueString

This function takes a VTTCue and renders the cue text string into an HTML string. This function can be used server-side to render cue content like so:

import { renderVTTCueString, VTTCue } from 'media-captions';

const cue = new VTTCue(0, 10, '<v Joe>Hello world!');

// Output: <span title="Joe" part="voice">Hello world!</span>

const content = renderVTTCueString(cue);

The second argument accepts the current playback time to add the correct data-past and

data-future attributes to timed text (i.e., karaoke-style captions):

const cue = new VTTCue(0, 320, 'Hello my name is <5:20>Joe!');

// Output: Hello my name is <span part="timed" data-time="320" data-future>Joe!</span>

renderVTTCueString(cue, 310);

// Output: Hello my name is <span part="timed" data-time="320" data-past>Joe!</span>

renderVTTCueString(cue, 321);

tokenizeVTTCue

This function takes a VTTCue and returns a collection of VTT tokens based on the cue text. Tokens represent the render nodes for a cue:

import { tokenizeVTTCue, VTTCue } from 'media-captions';

const cue = new VTTCue(0, 10, '<b.foo.bar><v Joe>Hello world!');

const tokens = tokenizeVTTCue(cue);

// `tokens` output:

[

{

tagName: 'b',

type: 'b',

class: 'foo bar',

children: [

{

tagName: 'span',

type: 'v',

voice: 'Joe',

children: [{ type: 'text', data: 'Hello world!' }],

},

],

},

];

Nodes can be a VTTBlockNode which can have children (i.e., class, italic, bold, underline,

ruby, ruby text, voice, lang, timestamp) or a VTTLeafNode (i.e., text nodes). The tokens

can be used for custom rendering like so:

function renderTokens(tokens: VTTNode[]) {

for (const token of tokens) {

if (token.type === 'text') {

// Process text nodes here...

token.data;

} else {

// Process block nodes here...

token.tagName;

token.class;

token.type === 'v' && token.voice;

token.type === 'lang' && token.lang;

token.type === 'timestamp' && token.time;

token.color;

token.bgColor;

// Recurse into the children of the current block node.

renderTokens(token.children);

}

}

}
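As a concrete instance of custom rendering, the sketch below flattens a token tree into plain text, which can be handy for search indexing or screen-reader labels. The TextToken and BlockToken interfaces are minimal local stand-ins modeled on the token output shown earlier, and tokensToPlainText is a hypothetical helper; in real code you would use the VTTNode types exported by the package.

```typescript
// Minimal local stand-ins for the token shapes shown above.
interface TextToken {
  type: 'text';
  data: string;
}

interface BlockToken {
  type: string; // e.g. 'b', 'v', 'lang'
  tagName: string;
  children: Token[];
}

type Token = TextToken | BlockToken;

function isTextToken(token: Token): token is TextToken {
  return token.type === 'text';
}

// Hypothetical helper: walks the tree depth-first and concatenates text nodes.
function tokensToPlainText(tokens: Token[]): string {
  let text = '';
  for (const token of tokens) {
    text += isTextToken(token) ? token.data : tokensToPlainText(token.children);
  }
  return text;
}

const sample: Token[] = [
  {
    tagName: 'b',
    type: 'b',
    children: [
      {
        tagName: 'span',
        type: 'v',
        children: [{ type: 'text', data: 'Hello world!' }],
      },
    ],
  },
];

console.log(tokensToPlainText(sample)); // prints "Hello world!"
```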

All token types are listed below for use in TypeScript:

import type {

VTTBlock,

VTTBlockNode,

VTTBlockType,

VTTBoldNode,

VTTClassNode,

VTTextNode,

VTTItalicNode,

VTTLangNode,

VTTLeafNode,

VTTNode,

VTTRubyNode,

VTTRubyTextNode,

VTTTimestampNode,

VTTUnderlineNode,

VTTVoiceNode,

} from 'media-captions';

renderVTTTokensString

This function takes an array of VTT tokens (VTTNode objects) and renders them into a string:

import { renderVTTTokensString, tokenizeVTTCue, VTTCue } from 'media-captions';

const cue = new VTTCue(0, 10, '<v Joe>Hello world!');

const tokens = tokenizeVTTCue(cue);

// Output: <span title="Joe" part="voice">Hello world!</span>

const result = renderVTTTokensString(tokens);

updateTimedVTTCueNodes

This function accepts a root DOM node to update all timed text nodes by setting the correct data-future and data-past attributes.

import { updateTimedVTTCueNodes } from 'media-captions';

const video = document.querySelector('video')!,

captions = document.querySelector('#captions')!;

video.addEventListener('timeupdate', () => {

updateTimedVTTCueNodes(captions, video.currentTime);

});

This can be used when working with karaoke-style captions:

const cue = new VTTCue(300, 308, '<05:00>Timed...<05:05>Text!');

// Timed text nodes that would be updated at 303 seconds:

// <span part="timed" data-time="300" data-past>Timed...</span>

// <span part="timed" data-time="305" data-future>Text!</span>
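The past/future decision in the comments above can be reproduced with a couple of small pure functions. This is only an illustrative sketch: timestampToSeconds and timedState are hypothetical helpers, and treating a node whose time equals the current time as "past" is an assumption, since the exact boundary behavior is not stated here.

```typescript
// Hypothetical helper: converts a timestamp tag body such as `05:05`
// or `01:05:05.250` into seconds.
function timestampToSeconds(timestamp: string): number {
  return timestamp
    .split(':')
    .reduce((total, part) => total * 60 + Number(part), 0);
}

// Assumption: a timed node counts as "past" once playback has reached its time.
function timedState(nodeTime: number, currentTime: number): 'past' | 'future' {
  return nodeTime <= currentTime ? 'past' : 'future';
}

const currentTime = 303;
console.log(timedState(timestampToSeconds('05:00'), currentTime)); // prints "past"
console.log(timedState(timestampToSeconds('05:05'), currentTime)); // prints "future"
```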

CaptionsRenderer

The captions overlay renderer is used to render captions over a video player. It follows the WebVTT rendering specification on how regions and cues should be visually rendered. It includes:

data-past and data-future attributes.

VTTCue objects.

Warning: The style files need to be included for the overlay renderer to work correctly!

<div>

<video src="..."></video>

<div id="captions"></div>

</div>

import 'media-captions/styles/captions.css';

import 'media-captions/styles/regions.css';

import { CaptionsRenderer, parseResponse } from 'media-captions';

const video = document.querySelector('video')!,

captions = document.querySelector('#captions')!,

renderer = new CaptionsRenderer(captions);

parseResponse(fetch('/media/subs/english.vtt')).then((result) => {

renderer.changeTrack(result);

});

video.addEventListener('timeupdate', () => {

renderer.currentTime = video.currentTime;

});

Props

dir: Sets the text direction (i.e., ltr or rtl).

currentTime: Updates the current playback time and schedules a re-render.

Methods

changeTrack(track: CaptionsRendererTrack): Resets the renderer and prepares new regions and cues.

addCue(cue: VTTCue): Adds a new cue to the renderer.

removeCue(cue: VTTCue): Removes a cue from the renderer.

update(forceUpdate: boolean): Schedules a re-render to happen on the next animation frame.

reset(): Resets the renderer and clears all internal state, including region and cue DOM nodes.

destroy(): Resets the renderer and destroys internal observers and event listeners.

Captions rendered with the CaptionsRenderer can be easily customized with CSS. Here are all the parts you can select and customize:

/* `#captions` assumes you set the id on the captions overlay element. */

#captions {

/* simple CSS vars customization (defaults below) */

--overlay-padding: 1%;

--cue-color: white;

--cue-bg-color: rgba(0, 0, 0, 0.8);

--cue-font-size: calc(var(--overlay-height) / 100 * 5);

--cue-line-height: calc(var(--cue-font-size) * 1.2);

--cue-padding-x: calc(var(--cue-font-size) * 0.6);

--cue-padding-y: calc(var(--cue-font-size) * 0.4);

}

#captions [part='region'] {

}

#captions [part='region'][data-active] {

}

#captions [part='region'][data-scroll='up'] {

}

#captions [part='cue-display'] {

}

#captions [part='cue'] {

}

#captions [part='cue'][data-id='...'] {

}

#captions [part='voice'] {

}

#captions [part='voice'][title='Joe'] {

}

#captions [part='timed'] {

}

#captions [part='timed'][data-past] {

}

#captions [part='timed'][data-future] {

}

Web Video Text Tracks (WebVTT) is the captions format natively supported by browsers. You can learn more about it on MDN or by reading the W3 specification.

WebVTT is a plain-text file that looks something like this:

WEBVTT

Kind: Language

Language: en-US

REGION id:foo width:100 lines:3 viewportanchor:0%,0% regionanchor:0%,0% scroll:up

1

00:00 --> 00:02 region:foo

Hello, Joe!

2

00:02 --> 00:04 region:foo

Hello, Jane!

parseResponse(fetch('/subs/english.vtt'), { type: 'vtt' });

Warning The parser will throw in strict parsing mode if the WEBVTT header line is not present.

WebVTT supports regions for bounding/positioning cues and implementing roll up captions

by setting scroll:up.

WebVTT cues are used for positioning and displaying text. They can snap to lines or be freely positioned as a percentage of the viewport.

const cue = new VTTCue(0, 10, '...');

// Position at line 5 in the video.

// Lines are calculated using cue line height.

cue.line = 5;

// 50% from the top and 10% from the left of the video.

cue.snapToLines = false;

cue.line = 50;

cue.position = 10;

// Align cue horizontally at end of line.

cue.align = 'end';

// Align top of the cue at the bottom of the line.

cue.lineAlign = 'end';

SubRip Subtitle (SRT) is a simple captions format that only contains cues. There are no regions or positioning settings as found in VTT.

SRT is a plain-text file that looks like this:

00:00 --> 00:02,200

Hello, Joe!

00:02,200 --> 00:04,400

Hello, Jane!

parseResponse(fetch('/subs/english.srt'), { type: 'srt' });

Note that SRT timestamps use a comma (,) to separate the milliseconds unit, unlike VTT, which uses a dot (.).
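The parser handles this difference for you when type is srt, so no conversion is needed to use the library. Purely to illustrate the separator difference, here is a small sketch that rewrites an SRT timing line in VTT form. srtToVttTimestamp and srtToVttTimingLine are hypothetical helpers, not part of the package.

```typescript
// Hypothetical helper: swaps the SRT millisecond comma for the VTT dot.
function srtToVttTimestamp(timestamp: string): string {
  return timestamp.replace(',', '.');
}

// Hypothetical helper: converts both timestamps on a timing line and
// normalizes the spacing around the `-->` separator.
function srtToVttTimingLine(line: string): string {
  return line
    .split('-->')
    .map((part) => srtToVttTimestamp(part.trim()))
    .join(' --> ');
}

console.log(srtToVttTimingLine('00:02,200 --> 00:04,400'));
// prints "00:02.200 --> 00:04.400"
```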

SubStation Alpha (SSA) and its successor Advanced SubStation Alpha (ASS) are subtitle formats commonly used for anime content. They allow for rich text formatting, including color, font size, bold, italic, and underline, as well as more advanced features like karaoke and typesetting.

SSA/ASS is a plain-text file that looks like this:

[Styles]

Format: Name, Fontname, Fontsize, PrimaryColour, SecondaryColour, OutlineColour, BackColour, Bold, Italic, Underline, StrikeOut, ScaleX, ScaleY, Spacing, Angle, BorderStyle, Outline, Shadow, Alignment, MarginL, MarginR, MarginV, Encoding

Style: Default,Arial,36,&H00FFFFFF,&H000000FF,&H00000000,&H00000000,0,0,0,0,100,100,0,0,1,2,2,2,10,10,10,1

[Events]

Format: Layer, Start, End, Style, Name, MarginL, MarginR, MarginV, Effect, Text

Dialogue: 0,0:00:05.10,0:00:07.20,Default,,0,0,0,,Hello, world!

[Other Events]

Format: Start, End, Text

Dialogue: 0:00:04,\t0:00:07.20, One!

Dialogue: 0:00:05,\t0:00:08.20, Two!

Dialogue: 0:00:06,\t0:00:09.20, Three!

Continue dialogue on a new line.

parseResponse(fetch('/subs/english.ssa'), { type: 'ssa' });

The following features are supported:

The following features are not supported yet:

It is very likely we will implement custom font loading, layers, and text codes in the near future. The rest is unlikely for now. You can always try and implement custom transitions or animations using CSS (see Styling).

We recommend using SubtitlesOctopus for SSA/ASS captions as it supports most features and is a performant WASM wrapper of libass. You'll need to fall back to this implementation on iOS Safari (iPhone) as custom captions are not supported there.

You can split large captions files into chunks and use the parseTextStream

or parseResponse functions to read and parse the stream. Files can be chunked

however you like and don't need to be aligned with line breaks.

Here's an example that chunks and streams a large VTT file on the server:

import fs from 'node:fs';

async function handle() {

const stream = new ReadableStream({

start(controller) {

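// No `encoding` is set, so the file stream emits raw bytes (Buffer, a

// Uint8Array subclass) that can be enqueued as-is without re-encoding.

const stream = fs.createReadStream('english.vtt');

stream.on('data', (chunk) => {

controller.enqueue(chunk);

});

stream.on('error', (error) => {

controller.error(error);

});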

stream.on('end', () => {

controller.close();

});

},

});

return new Response(stream, {

headers: {

'Content-Type': 'text/vtt; charset=utf-8',

},

});

}
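The claim that chunks don't need to line up with line breaks can be tried out without a server. The sketch below cuts a small VTT document into fixed-size pieces and wraps them in a ReadableStream<string>, the input type described for parseTextStream. splitIntoChunks and toTextStream are hypothetical helpers written for this illustration, and the chunk size of 10 is arbitrary.

```typescript
// Hypothetical helper: cuts text into fixed-size chunks, ignoring line breaks.
function splitIntoChunks(text: string, size: number): string[] {
  if (size <= 0) throw new RangeError('size must be positive');
  const chunks: string[] = [];
  for (let i = 0; i < text.length; i += size) {
    chunks.push(text.slice(i, i + size));
  }
  return chunks;
}

// Hypothetical helper: wraps the chunks in a text stream.
function toTextStream(chunks: string[]): ReadableStream<string> {
  return new ReadableStream<string>({
    start(controller) {
      for (const chunk of chunks) controller.enqueue(chunk);
      controller.close();
    },
  });
}

const vtt = 'WEBVTT\n\n00:00 --> 00:02\nHello, Joe!\n';
const chunks = splitIntoChunks(vtt, 10);
const textStream = toTextStream(chunks);

// The first chunk ends in the middle of a timestamp: "WEBVTT\n\n00".
console.log(JSON.stringify(chunks[0]));
```

The resulting textStream could then be handed to parseTextStream in place of the hand-built stream shown earlier.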

Here are the types that are available from this package for use in TypeScript:

import type {

CaptionsFileFormat,

CaptionsParser,

CaptionsParserInit,

CaptionsRenderer,

CaptionsRendererTrack,

ParseByteStreamOptions,

ParseCaptionsOptions,

ParsedCaptionsResult,

ParseError,

ParseErrorCode,

ParseErrorInit,

TextCue,

VTTCue,

VTTCueTemplate,

VTTHeaderMetadata,

VTTRegion,

} from 'media-captions';

Media Captions is MIT licensed.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

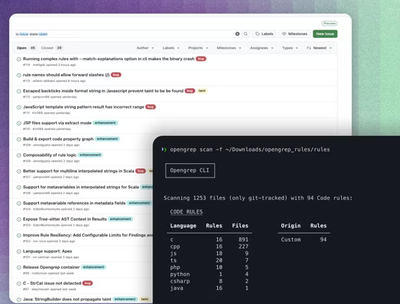

Research

Security News

The Socket Research Team has discovered six new malicious npm packages linked to North Korea’s Lazarus Group, designed to steal credentials and deploy backdoors.

Security News

Socket CEO Feross Aboukhadijeh discusses the open web, open source security, and how Socket tackles software supply chain attacks on The Pair Program podcast.

Security News

Opengrep continues building momentum with the alpha release of its Playground tool, demonstrating the project's rapid evolution just two months after its initial launch.