
my-codex-no-sandbox


OpenAI Codex CLI

Lightweight coding agent that runs in your terminal

npm i -g @openai/codex

Codex CLI is an experimental project under active development. It is not yet stable and may contain bugs or incomplete features, or undergo breaking changes. We're building it in the open with the community and welcome your contributions.

Help us improve by filing issues or submitting PRs (see the section below for how to contribute)!

Install globally:

npm install -g @openai/codex

Next, set your OpenAI API key as an environment variable:

export OPENAI_API_KEY="your-api-key-here"

Note: This command sets the key only for your current terminal session. You can add the export line to your shell's configuration file (e.g., ~/.zshrc), but we recommend setting it for the session only.

Tip: You can also place your API key into a .env file at the root of your project:

OPENAI_API_KEY=your-api-key-here

The CLI will automatically load variables from .env (via dotenv/config).

Using other models with --provider

Codex also allows you to use other providers that support the OpenAI Chat Completions API. You can set the provider in the config file or use the --provider flag. The possible options for --provider are:

- openai (default)

- openrouter

- azure

- gemini

- ollama

- mistral

- deepseek

- xai

- groq

- arceeai

- any other provider that is compatible with the OpenAI API

If you use a provider other than OpenAI, you will need to set the API key for the provider in the config file or in an environment variable:

export <provider>_API_KEY="your-api-key-here"

If you use a provider not listed above, you must also set the base URL for the provider:

export <provider>_BASE_URL="https://your-provider-api-base-url"
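As a rough sketch of the naming convention above, the helper below derives the `<provider>_API_KEY` variable name from a provider id and checks whether it is exported. The function names are hypothetical and not part of Codex, and the upper-casing rule is an assumption; a provider entry in the config file can point at a different variable through its envKey field (the azure example uses AZURE_OPENAI_API_KEY).

```shell
# Hypothetical helper, not part of Codex: map a provider id to the
# <provider>_API_KEY name described above (assumes plain upper-casing).
provider_key_name() {
  printf '%s_API_KEY\n' "$(printf '%s' "$1" | tr '[:lower:]' '[:upper:]')"
}

# Succeeds only if that variable is exported and non-empty.
provider_key_is_set() {
  [ -n "$(printenv "$(provider_key_name "$1")")" ]
}

# Example: warn before launching with a provider whose key is missing.
# provider_key_is_set mistral || echo "MISTRAL_API_KEY is not set" >&2
```

Checking the key up front gives a clearer failure than an authentication error returned by the provider mid-session.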

Run interactively:

codex

Or, run with a prompt as input (and optionally in Full Auto mode):

codex "explain this codebase to me"

codex --approval-mode full-auto "create the fanciest todo-list app"

That's it - Codex will scaffold a file, run it inside a sandbox, install any missing dependencies, and show you the live result. Approve the changes and they'll be committed to your working directory.

Codex CLI is built for developers who already live in the terminal and want ChatGPT-level reasoning plus the power to actually run code, manipulate files, and iterate - all under version control. In short, it's chat-driven development that understands and executes your repo.

And it's fully open-source so you can see and contribute to how it develops!

Codex lets you decide how much autonomy the agent receives, and which actions it may auto-approve, via the --approval-mode flag (or the interactive onboarding prompt):

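| Mode | What the agent may do without asking | Still requires approval |
|---|---|---|
| Suggest (default) | Read any file in the repo | All file writes/patches; any arbitrary shell commands (aside from reading files) |
| Auto Edit | Read files and apply-patch writes to files | All shell commands |
| Full Auto | Read/write files; execute shell commands (network disabled, writes limited to your workdir) | - |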

In Full Auto every command is run network-disabled and confined to the current working directory (plus temporary files) for defense-in-depth. Codex will also show a warning/confirmation if you start in auto-edit or full-auto while the directory is not tracked by Git, so you always have a safety net.

Coming soon: you'll be able to whitelist specific commands to auto-execute with the network enabled, once we're confident in additional safeguards.
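If you script Codex yourself, the Git safety net described above can be approximated in a wrapper. This is only a sketch: safe_approval_mode is a hypothetical helper, and falling back to suggest outside a Git work tree is an assumed policy, not built-in Codex behavior.

```shell
# Hypothetical helper: print the approval mode to use for a directory.
# Outside a Git work tree (or when git is unavailable) it falls back to "suggest".
safe_approval_mode() {
  requested=$1
  dir=${2:-.}
  if [ "$(git -C "$dir" rev-parse --is-inside-work-tree 2>/dev/null)" = "true" ]; then
    printf '%s\n' "$requested"
  else
    printf '%s\n' suggest
  fi
}

# Example (not run here):
# codex --approval-mode "$(safe_approval_mode full-auto)" "fix lint errors"
```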

The hardening mechanism Codex uses depends on your OS:

macOS 12+ - commands are wrapped with Apple Seatbelt (sandbox-exec). Everything runs in a read-only jail except for a small set of writable roots ($PWD, $TMPDIR, ~/.codex, etc.). Outbound network access is blocked by default: even if a child process tries to curl somewhere it will fail.

Linux - there is no sandboxing by default. We recommend using Docker for sandboxing, where Codex launches itself inside a minimal container image and mounts your repo read/write at the same path. A custom iptables/ipset firewall script denies all egress except the OpenAI API. This gives you deterministic, reproducible runs without needing root on the host. You can use the run_in_container.sh script to set up the sandbox.

| Requirement | Details |

|---|---|

| Operating systems | macOS 12+, Ubuntu 20.04+/Debian 10+, or Windows 11 via WSL2 |

| Node.js | 22 or newer (LTS recommended) |

| Git (optional, recommended) | 2.23+ for built-in PR helpers |

| RAM | 4-GB minimum (8-GB recommended) |

Never run sudo npm install -g; fix npm permissions instead.
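The Node.js requirement from the table above can be checked before installing. The following is a minimal sketch; node_major and node_version_ok are hypothetical helper names, and the check assumes the usual vMAJOR.MINOR.PATCH output of node --version.

```shell
# Extract the major version from a string such as "v22.3.0".
node_major() {
  v=${1#v}
  printf '%s\n' "${v%%.*}"
}

# Succeeds when the given version meets the Node.js 22+ requirement.
node_version_ok() {
  [ "$(node_major "$1")" -ge 22 ]
}

# Example (not run here):
# node_version_ok "$(node --version)" || echo "Node.js 22 or newer is required" >&2
```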

| Command | Purpose | Example |
|---|---|---|
| codex | Interactive REPL | codex |
| codex "..." | Initial prompt for interactive REPL | codex "fix lint errors" |
| codex -q "..." | Non-interactive "quiet mode" | codex -q --json "explain utils.ts" |
| codex completion <bash\|zsh\|fish> | Print shell completion script | codex completion bash |

Key flags: --model/-m, --approval-mode/-a, --quiet/-q, and --notify.

You can give Codex extra instructions and guidance using AGENTS.md files. Codex looks for AGENTS.md files in the following places, and merges them top-down:

- ~/.codex/AGENTS.md - personal global guidance
- AGENTS.md at repo root - shared project notes
- AGENTS.md in the current working directory - sub-folder/feature specifics

Disable loading of these files with --no-project-doc or the environment variable CODEX_DISABLE_PROJECT_DOC=1.

Run Codex headless in pipelines. Example GitHub Action step:

- name: Update changelog via Codex
  run: |
    npm install -g @openai/codex
    export OPENAI_API_KEY="${{ secrets.OPENAI_KEY }}"
    codex -a auto-edit --quiet "update CHANGELOG for next release"

Set CODEX_QUIET_MODE=1 to silence interactive UI noise.
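One way to apply this only in automation is to key it off the CI environment variable, which GitHub Actions and many other CI systems set. The sketch below uses a hypothetical helper name, enable_quiet_in_ci, and treating any non-empty CI value as "running in a pipeline" is an assumption.

```shell
# Export CODEX_QUIET_MODE=1 when the CI variable is set and non-empty.
enable_quiet_in_ci() {
  if [ -n "${CI:-}" ]; then
    export CODEX_QUIET_MODE=1
  fi
}

# Example: call this near the top of a pipeline script, before invoking codex.
# enable_quiet_in_ci
```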

Setting the environment variable DEBUG=true prints full API request and response details:

DEBUG=true codex

Below are a few bite-size examples you can copy-paste. Replace the text in quotes with your own task. See the prompting guide for more tips and usage patterns.

| ✨ | What you type | What happens |

|---|---|---|

| 1 | codex "Refactor the Dashboard component to React Hooks" | Codex rewrites the class component, runs npm test, and shows the diff. |

| 2 | codex "Generate SQL migrations for adding a users table" | Infers your ORM, creates migration files, and runs them in a sandboxed DB. |

| 3 | codex "Write unit tests for utils/date.ts" | Generates tests, executes them, and iterates until they pass. |

| 4 | codex "Bulk-rename *.jpeg -> *.jpg with git mv" | Safely renames files and updates imports/usages. |

| 5 | codex "Explain what this regex does: ^(?=.*[A-Z]).{8,}$" | Outputs a step-by-step human explanation. |

| 6 | codex "Carefully review this repo, and propose 3 high impact well-scoped PRs" | Suggests impactful PRs in the current codebase. |

| 7 | codex "Look for vulnerabilities and create a security review report" | Finds and explains security bugs. |

npm install -g @openai/codex

# or

yarn global add @openai/codex

# or

bun install -g @openai/codex

# or

pnpm add -g @openai/codex

# Clone the repository and navigate to the CLI package

git clone https://github.com/openai/codex.git

cd codex/codex-cli

# Enable corepack

corepack enable

# Install dependencies and build

pnpm install

pnpm build

# Linux-only: download prebuilt sandboxing binaries (requires gh and zstd).

./scripts/install_native_deps.sh

# Get the usage and the options

node ./dist/cli.js --help

# Run the locally-built CLI directly

node ./dist/cli.js

# Or link the command globally for convenience

pnpm link

Codex configuration files can be placed in the ~/.codex/ directory, supporting both YAML and JSON formats.

| Parameter | Type | Default | Description | Available Options |
|---|---|---|---|---|
| model | string | o4-mini | AI model to use | Any model name supporting OpenAI API |
| approvalMode | string | suggest | AI assistant's permission mode | suggest (suggestions only), auto-edit (automatic edits), full-auto (fully automatic) |
| fullAutoErrorMode | string | ask-user | Error handling in full-auto mode | ask-user (prompt for user input), ignore-and-continue (ignore and proceed) |
| notify | boolean | true | Enable desktop notifications | true/false |

In the providers object, you can configure multiple AI service providers. Each provider requires the following parameters:

| Parameter | Type | Description | Example |
|---|---|---|---|
| name | string | Display name of the provider | "OpenAI" |
| baseURL | string | API service URL | "https://api.openai.com/v1" |
| envKey | string | Environment variable name (for API key) | "OPENAI_API_KEY" |

In the history object, you can configure conversation history settings:

| Parameter | Type | Description | Example Value |
|---|---|---|---|
| maxSize | number | Maximum number of history entries to save | 1000 |
| saveHistory | boolean | Whether to save history | true |
| sensitivePatterns | array | Patterns of sensitive information to filter in history | [] |

YAML format (~/.codex/config.yaml):

model: o4-mini
approvalMode: suggest
fullAutoErrorMode: ask-user
notify: true

JSON format (~/.codex/config.json):

{
  "model": "o4-mini",
  "approvalMode": "suggest",
  "fullAutoErrorMode": "ask-user",
  "notify": true
}

Below is a comprehensive example of config.json with multiple custom providers:

{
  "model": "o4-mini",
  "provider": "openai",
  "providers": {
    "openai": {
      "name": "OpenAI",
      "baseURL": "https://api.openai.com/v1",
      "envKey": "OPENAI_API_KEY"
    },
    "azure": {
      "name": "AzureOpenAI",
      "baseURL": "https://YOUR_PROJECT_NAME.openai.azure.com/openai",
      "envKey": "AZURE_OPENAI_API_KEY"
    },
    "openrouter": {
      "name": "OpenRouter",
      "baseURL": "https://openrouter.ai/api/v1",
      "envKey": "OPENROUTER_API_KEY"
    },
    "gemini": {
      "name": "Gemini",
      "baseURL": "https://generativelanguage.googleapis.com/v1beta/openai",
      "envKey": "GEMINI_API_KEY"
    },
    "ollama": {
      "name": "Ollama",
      "baseURL": "http://localhost:11434/v1",
      "envKey": "OLLAMA_API_KEY"
    },
    "mistral": {
      "name": "Mistral",
      "baseURL": "https://api.mistral.ai/v1",
      "envKey": "MISTRAL_API_KEY"
    },
    "deepseek": {
      "name": "DeepSeek",
      "baseURL": "https://api.deepseek.com",
      "envKey": "DEEPSEEK_API_KEY"
    },
    "xai": {
      "name": "xAI",
      "baseURL": "https://api.x.ai/v1",
      "envKey": "XAI_API_KEY"
    },
    "groq": {
      "name": "Groq",
      "baseURL": "https://api.groq.com/openai/v1",
      "envKey": "GROQ_API_KEY"
    },
    "arceeai": {
      "name": "ArceeAI",
      "baseURL": "https://conductor.arcee.ai/v1",
      "envKey": "ARCEEAI_API_KEY"
    }
  },
  "history": {
    "maxSize": 1000,
    "saveHistory": true,
    "sensitivePatterns": []
  }
}

You can create a ~/.codex/AGENTS.md file to define custom guidance for the agent:

- Always respond with emojis

- Only use git commands when explicitly requested

For each AI provider, you need to set the corresponding API key in your environment variables. For example:

# OpenAI

export OPENAI_API_KEY="your-api-key-here"

# Azure OpenAI

export AZURE_OPENAI_API_KEY="your-azure-api-key-here"

export AZURE_OPENAI_API_VERSION="2025-03-01-preview"  # optional

# OpenRouter

export OPENROUTER_API_KEY="your-openrouter-key-here"

# Similarly for other providers

In 2021, OpenAI released Codex, an AI system designed to generate code from natural language prompts. That original Codex model was deprecated as of March 2023 and is separate from the CLI tool.

Any model available with the Responses API. The default is o4-mini, but you can pass --model gpt-4.1 or set model: gpt-4.1 in your config file to override it.
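The selection logic can be pictured as a simple fallback chain. The sketch below is illustrative only: resolve_model is a hypothetical function, and the precedence it encodes (command-line flag first, then the config file, then the o4-mini default) is an assumption about how the two overrides combine, not something stated above.

```shell
# Hypothetical helper: pick the model name to use.
# $1 = value passed to --model (may be empty)
# $2 = model from the config file (may be empty)
resolve_model() {
  flag_model=${1:-}
  config_model=${2:-}
  printf '%s\n' "${flag_model:-${config_model:-o4-mini}}"
}

# Example (not run here):
# codex --model "$(resolve_model "" gpt-4.1)" "explain this codebase to me"
```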

Why does o3 or o4-mini not work for me?

It's possible that your API account needs to be verified in order to start streaming responses and seeing chain of thought summaries from the API. If you're still running into issues, please let us know!

Codex runs model-generated commands in a sandbox. If a proposed command or file change doesn't look right, you can simply type n to deny the command or give the model feedback.

Not directly. It requires Windows Subsystem for Linux (WSL2) - Codex has been tested on macOS and Linux with Node 22.

Codex CLI does support OpenAI organizations with Zero Data Retention (ZDR) enabled. If your OpenAI organization has Zero Data Retention enabled and you still encounter errors such as:

OpenAI rejected the request. Error details: Status: 400, Code: unsupported_parameter, Type: invalid_request_error, Message: 400 Previous response cannot be used for this organization due to Zero Data Retention.

You may need to upgrade to a more recent version with: npm i -g @openai/codex@latest

We're excited to launch a $1 million initiative supporting open source projects that use Codex CLI and other OpenAI models.

Interested? Apply here.

This project is under active development and the code will likely change pretty significantly. We'll update this message once that's complete!

More broadly we welcome contributions - whether you are opening your very first pull request or you're a seasoned maintainer. At the same time we care about reliability and long-term maintainability, so the bar for merging code is intentionally high. The guidelines below spell out what "high-quality" means in practice and should make the whole process transparent and friendly.

- Create a topic branch from main - e.g. feat/interactive-prompt.
- Use pnpm test:watch during development for super-fast feedback.

This project uses Husky to enforce code quality checks:

These hooks help maintain code quality and prevent pushing code with failing tests. For more details, see HUSKY.md.

pnpm test && pnpm run lint && pnpm run typecheck

If you have not yet signed the Contributor License Agreement (CLA), add a PR comment containing the exact text

I have read the CLA Document and I hereby sign the CLA

The CLA-Assistant bot will turn the PR status green once all authors have signed.

# Watch mode (tests rerun on change)

pnpm test:watch

# Type-check without emitting files

pnpm typecheck

# Automatically fix lint + prettier issues

pnpm lint:fix

pnpm format:fix

To debug the CLI with a visual debugger, do the following in the codex-cli folder:

- Run pnpm run build to build the CLI, which will generate cli.js.map alongside cli.js in the dist folder.
- Run the CLI with node --inspect-brk ./dist/cli.js. The program then waits until a debugger is attached before proceeding. Options:
  - Attach your debugger to the Node process on debug port 9229 (likely the first option)

- If your change affects user-facing behavior, update the README, inline help (codex --help), or relevant example projects.
- Run all checks locally (npm test && npm run lint && npm run typecheck). CI failures that could have been caught locally slow down the process.
- Make sure your branch is up to date with main and that you have resolved merge conflicts.

If you run into problems setting up the project, would like feedback on an idea, or just want to say hi - please open a Discussion or jump into the relevant issue. We are happy to help.

Together we can make Codex CLI an incredible tool. Happy hacking! :rocket:

All contributors must accept the CLA. The process is lightweight:

Open your pull request.

Paste the following comment (or reply recheck if you've signed before):

I have read the CLA Document and I hereby sign the CLA

The CLA-Assistant bot records your signature in the repo and marks the status check as passed.

No special Git commands, email attachments, or commit footers required.

| Scenario | Command |

|---|---|

| Amend last commit | git commit --amend -s --no-edit && git push -f |

The DCO check blocks merges until every commit in the PR carries the footer (with squash this is just the one).

Releasing codex

To publish a new version of the CLI you first need to stage the npm package. A helper script in codex-cli/scripts/ does all the heavy lifting. Inside the codex-cli folder run:

# Classic, JS implementation that includes small, native binaries for Linux sandboxing.

pnpm stage-release

# Optionally specify the temp directory to reuse between runs.

RELEASE_DIR=$(mktemp -d)

pnpm stage-release --tmp "$RELEASE_DIR"

# "Fat" package that additionally bundles the native Rust CLI binaries for

# Linux. End-users can then opt-in at runtime by setting CODEX_RUST=1.

pnpm stage-release --native

Go to the folder where the release is staged and verify that it works as intended. If so, run the following from the temp folder:

cd "$RELEASE_DIR"

npm publish

Prerequisite: Nix >= 2.4 with flakes enabled (experimental-features = nix-command flakes in ~/.config/nix/nix.conf).

Enter a Nix development shell:

# Use either one of the commands according to which implementation you want to work with

nix develop .#codex-cli # For entering codex-cli specific shell

nix develop .#codex-rs # For entering codex-rs specific shell

This shell includes Node.js, installs dependencies, builds the CLI, and provides a codex command alias.

Build and run the CLI directly:

# Use either one of the commands according to which implementation you want to work with

nix build .#codex-cli # For building codex-cli

nix build .#codex-rs # For building codex-rs

./result/bin/codex --help

Run the CLI via the flake app:

# Use either one of the commands according to which implementation you want to work with

nix run .#codex-cli # For running codex-cli

nix run .#codex-rs # For running codex-rs

Use direnv with flakes

If you have direnv installed, you can use the following .envrc to automatically enter the Nix shell when you cd into the project directory:

# For the codex-rs implementation
cd codex-rs
echo "use flake ../flake.nix#codex-rs" >> .envrc && direnv allow

# For the codex-cli implementation
cd codex-cli
echo "use flake ../flake.nix#codex-cli" >> .envrc && direnv allow

Have you discovered a vulnerability or have concerns about model output? Please e-mail security@openai.com and we will respond promptly.

This repository is licensed under the Apache-2.0 License.

FAQs


The npm package my-codex-no-sandbox receives a total of 166 weekly downloads. As such, my-codex-no-sandbox popularity was classified as not popular.

We found that my-codex-no-sandbox demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 1 open source maintainer collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Security News

This episode explores the hard problem of reachability analysis, from static analysis limits to handling dynamic languages and massive dependency trees.

Security News

/Research

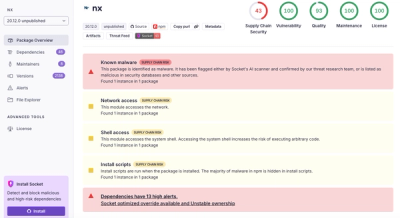

Malicious Nx npm versions stole secrets and wallet info using AI CLI tools; Socket’s AI scanner detected the supply chain attack and flagged the malware.

Security News

CISA’s 2025 draft SBOM guidance adds new fields like hashes, licenses, and tool metadata to make software inventories more actionable.