Product

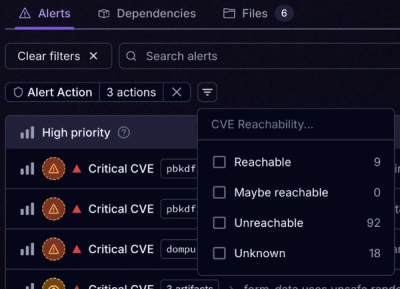

Introducing Tier 1 Reachability: Precision CVE Triage for Enterprise Teams

Socket’s new Tier 1 Reachability filters out up to 80% of irrelevant CVEs, so security teams can focus on the vulnerabilities that matter.

react-dictate-button


A button to start speech recognition using Web Speech API, with an easy to understand event lifecycle.

- Requires `react@>=16.8.0` and `core-js@3`
- `SpeechGrammarList` is only constructed when the `grammar` prop is present
- If the `speechRecognition` prop is not present, capability detection is now done through `window.navigator.mediaDevices.getUserMedia`

Try out this component at github.io/compulim/react-dictate-button.

Reasons why we need to build our own component, instead of using existing packages on NPM:

First, install the production version with `npm install react-dictate-button`, or the development version with `npm install react-dictate-button@master`.

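```jsx
import React from 'react';
import { DictateButton } from 'react-dictate-button';

// A function component has no `this`, so the handlers are plain functions.
// These only log the payloads; replace them with your own logic.
const handleDictate = ({ result }) => console.log('dictate', result);
const handleProgress = ({ results }) => console.log('progress', results);

export default () => (
  <DictateButton
    className="my-dictate-button"
    grammar="#JSGF V1.0; grammar districts; public <district> = Tuen Mun | Yuen Long;"
    lang="en-US"
    onDictate={handleDictate}
    onProgress={handleProgress}
  >
    Start/stop
  </DictateButton>
);
```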

| Name | Type | Default | Description |
|---|---|---|---|
| `className` | `string` | `undefined` | Class name to apply to the button |
| `continuous` | `boolean` | `false` | `true` to use the Web Speech API's continuous mode and keep recognizing until stopped, otherwise `false` |
| `disabled` | `boolean` | `false` | `true` to abort ongoing recognition and disable the button, otherwise `false` |
| `extra` | `{ [key: string]: any }` | `{}` | Additional properties to set on `SpeechRecognition` before start, useful when bringing your own `SpeechRecognition` |
| `grammar` | `string` | `undefined` | Grammar list in JSGF format |
| `lang` | `string` | `undefined` | Language to recognize, for example `'en-US'` or `navigator.language` |
| `speechGrammarList` | `any` | `window.SpeechGrammarList` (or vendor-prefixed) | Bring your own `SpeechGrammarList` |
| `speechRecognition` | `any` | `window.SpeechRecognition` (or vendor-prefixed) | Bring your own `SpeechRecognition` |

Note: changes to `extra`, `grammar`, `lang`, `speechGrammarList`, and `speechRecognition` will not take effect until the next speech recognition is started.
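The `grammar` prop takes a JSGF string such as the one in the example above. As a minimal sketch, a string of that shape could be assembled from a list of phrases. `buildJsgfGrammar` is a hypothetical helper, not part of react-dictate-button, and it only covers a single public rule made of plain alternatives.

```javascript
// Hypothetical helper (not part of react-dictate-button):
// builds a JSGF grammar string with one public rule.
function buildJsgfGrammar(grammarName, ruleName, alternatives) {
  if (alternatives.length === 0) {
    throw new Error('At least one alternative is required.');
  }

  // Alternatives within a JSGF rule are separated by "|".
  return `#JSGF V1.0; grammar ${grammarName}; public <${ruleName}> = ${alternatives.join(' | ')};`;
}

const grammar = buildJsgfGrammar('districts', 'district', ['Tuen Mun', 'Yuen Long']);
```

The resulting `grammar` value can be passed to the `grammar` prop. Per the note above, a changed value only applies to the next recognition.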

| Name | Signature | Description |
|---|---|---|
| `onClick` | `(event: MouseEvent) => void` | Emitted when the user clicks the button; calling `preventDefault` stops recognition from starting |
| `onDictate` | `({ result: { confidence: number, transcript: string }, type: 'dictate' }) => void` | Emitted when recognition is completed |
| `onError` | `(event: SpeechRecognitionErrorEvent) => void` | Emitted when an error has occurred or recognition is interrupted, see below |
| `onProgress` | `({ abortable: boolean, results: [{ confidence: number, transcript: string }], type: 'progress' }) => void` | Emitted for interim results; the array contains every segment of recognized text |
| `onRawEvent` | `(event: SpeechRecognitionEvent) => void` | Emitted for handling raw events from `SpeechRecognition` |
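As a rough sketch of how the `onProgress` and `onDictate` payloads in the table above might be consumed, the helpers below turn them into display text. `interimText` and `finalText` are hypothetical names, not part of the package, and joining segments with a single space is an assumption about how you want interim text displayed.

```javascript
// Hypothetical helpers, not part of react-dictate-button.

// Joins the transcript of every interim segment reported by onProgress.
// The first progress event carries no `results`, so default to an empty array.
function interimText({ results = [] }) {
  return results.map(({ transcript }) => transcript.trim()).join(' ');
}

// Returns the final transcript from onDictate, or '' when nothing was recognized.
function finalText({ result }) {
  return result ? result.transcript : '';
}

// Handlers that could be passed as the onProgress and onDictate props.
const handleProgress = event => console.log('Interim:', interimText(event));
const handleDictate = event => console.log('Final:', finalText(event));
```

Both helpers tolerate the empty payloads (`onProgress({})` and `onDictate({})`) that can occur when recognition has just started or nothing was recognized.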

Although previous versions exported a React Context, it is recommended to use the hooks interface.

| Name | Signature | Description |
|---|---|---|
| `useAbortable` | `[boolean]` | `true` if the ongoing speech recognition has an `abort()` function and can be aborted, otherwise `false` |
| `useReadyState` | `[number]` | Returns the current state of recognition, refer to the ready state table |
| `useSupported` | `[boolean]` | `true` if speech recognition is supported, otherwise `false` |

To determine whether speech recognition is supported in the browser:

- If the `speechRecognition` prop is `undefined`
  - If `window.navigator.mediaDevices` and `window.navigator.mediaDevices.getUserMedia` are falsy, it is not supported
  - If `window.SpeechRecognition` and its vendor-prefixed version are falsy, it is not supported
  - If recognition fails with the `not-allowed` error code, it is not supported

Even when the browser is on an insecure HTTP connection, `window.SpeechRecognition` (or vendor-prefixed) will continue to be truthy. Instead, `mediaDevices.getUserMedia` is used for capability detection.
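The checks above can be sketched as a plain function. This is an illustration under stated assumptions, not the library's actual implementation: `isSpeechRecognitionSupported` and its parameters are hypothetical names, `webkitSpeechRecognition` stands in for "vendor-prefixed", and a caller-supplied `speechRecognition` is assumed to count as supported.

```javascript
// A sketch of the checks listed above; NOT the library's actual implementation.
// `win` is a window-like object, `speechRecognitionProp` is the value of the
// speechRecognition prop, and `failedWithNotAllowed` records whether an earlier
// recognition attempt ended with the "not-allowed" error code.
function isSpeechRecognitionSupported(win, speechRecognitionProp, failedWithNotAllowed = false) {
  // Assumption: a caller-supplied SpeechRecognition is trusted as-is.
  if (speechRecognitionProp !== undefined) {
    return true;
  }

  const mediaDevices = win.navigator && win.navigator.mediaDevices;

  // getUserMedia is the capability signal, because window.SpeechRecognition
  // stays truthy even on an insecure HTTP connection.
  if (!mediaDevices || !mediaDevices.getUserMedia) {
    return false;
  }

  // Check the standard constructor and the "webkit" vendor prefix.
  if (!win.SpeechRecognition && !win.webkitSpeechRecognition) {
    return false;
  }

  return !failedWithNotAllowed;
}
```

In a browser this could be called as `isSpeechRecognitionSupported(window)`. In an application, the `useSupported` hook remains the supported way to read this state.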

One design goal is to make events easy to understand and deterministic. The first rule of thumb is that `onProgress` always leads to either `onDictate` or `onError`. Here are some sample event firing sequences (tested on Chrome 67):

- `onStart`, `onProgress({})` (just started, therefore no `results`), `onProgress({ results: [] })`, `onDictate({ result: ... })`, `onEnd`
- `onStart`, `onProgress({})` (just started, therefore no `results`), `onProgress({ results: [] })`, `onDictate({ result: ... })`, `onProgress({ results: [] })`, `onDictate({ result: ... })`, `onEnd`
- `onStart`, `onProgress({})`, `onDictate({})` (nothing is recognized, therefore no `result`), `onEnd`
- `onStart`, `onProgress({})`, `onError({ error: 'no-speech' })`, `onEnd`
- `onStart`, `onProgress({})`, `onProgress({ results: [] })`, `props.disabled` set to `true` (which aborts recognition), `onError({ error: 'aborted' })`, `onEnd`
- `onStart`, `onError({ error: 'not-allowed' })`, `onEnd`

Instead of passing child elements, you can pass a function to render different content based on the ready state. This pattern is called function as a child.

| Ready state | Description |
|---|---|
| 0 | Not started |
| 1 | Starting recognition engine, recognition is not ready until it turns to 2 |
| 2 | Recognizing |
| 3 | Stopping |

For example,

```jsx
<DictateButton>
  {({ readyState }) =>
    readyState === 0 ? 'Start' : readyState === 1 ? 'Starting...' : readyState === 2 ? 'Listening...' : 'Stopping...'
  }
</DictateButton>
```
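If the nested conditional above becomes hard to read, the same mapping could be kept in a lookup table. This is only a sketch: `labelForReadyState` is a hypothetical helper, and the labels are the ones used in the example above.

```javascript
// Hypothetical helper, not part of react-dictate-button.
// The index is the ready state: 0 = not started, 1 = starting, 2 = recognizing, 3 = stopping.
const READY_STATE_LABELS = ['Start', 'Starting...', 'Listening...', 'Stopping...'];

function labelForReadyState(readyState) {
  const label = READY_STATE_LABELS[readyState];

  if (label === undefined) {
    throw new RangeError(`Unknown ready state: ${readyState}`);
  }

  return label;
}
```

The function-as-a-child body then reduces to `({ readyState }) => labelForReadyState(readyState)`.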

You can build your own component by copying our layout code, without touching the logic behind the scenes. For details, please refer to `DictateButton.js`, `DictateCheckbox.js`, and `DictationTextBox.js`.

In addition to `<button>`, we also ship `<input type="checkbox">` out of the box. The checkbox version is better suited to toggle-button scenarios and web accessibility. You can use the following code for the checkbox version.

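```jsx
import React from 'react';
import { DictateCheckbox } from 'react-dictate-button';

// A function component has no `this`, so the handlers are plain functions.
// These only log the payloads; replace them with your own logic.
const handleDictate = ({ result }) => console.log('dictate', result);
const handleProgress = ({ results }) => console.log('progress', results);

export default () => (
  <DictateCheckbox
    className="my-dictate-checkbox"
    grammar="#JSGF V1.0; grammar districts; public <district> = Redmond | Bellevue;"
    lang="en-US"
    onDictate={handleDictate}
    onProgress={handleProgress}
  >
    Start/stop
  </DictateCheckbox>
);
```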

We also provide a "text box with dictate button" version. But instead of shipping a full-fledged control, we make it a minimally-styled control so you can start copying the code and customize it in your own project. The sample code can be found at DictationTextBox.js.

- `onstart`, `onaudiostart`, `onsoundstart`, `onspeechstart`
- `onresult` may not fire in some cases, `onnomatch` is not fired in Chrome
- `onProgress`, then either `onDictate` or `onError`
- `onError`

Please feel free to file suggestions.

- When `readyState` is 1 or 3 (transitioning), the underlying speech engine cannot be started/stopped until the state transition is complete
- `Composer.js`, how about
  - `SpeechRecognition` into another object with simpler event model and `readyState`
  - `Composer.js` to bridge the new `SimpleSpeechRecognition` model and React Context
  - `SimpleSpeechRecognition` so people not on React can still benefit from the simpler event model

Like us? Star us.

Want to make it better? File us an issue.

Don't like something you see? Submit a pull request.

[4.0.0] - 2025-02-13

- `resultIndex` in `SpeechRecognitionResultEvent`, by @compulim, in PR #86
- (`SpeechRecognition.abort()` is undefined) will warn instead of throw, by @compulim, in PR #88
- `dictate` event should dispatch before `end` event, by @compulim, in PR #87
- `SpeechRecognition.continuous` property than `continuous` props, by @compulim, in PR #87
- `end` event should only be dispatched after `SpeechRecognition.error` event, instead of always emit on stop/unmount, by @compulim, in PR #88

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Product

Socket’s new Tier 1 Reachability filters out up to 80% of irrelevant CVEs, so security teams can focus on the vulnerabilities that matter.

Research

/Security News

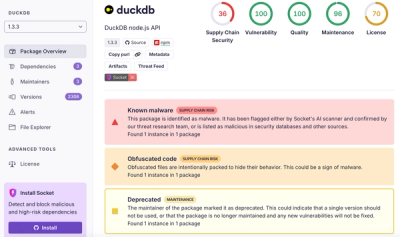

Ongoing npm supply chain attack spreads to DuckDB: multiple packages compromised with the same wallet-drainer malware.

Security News

The MCP Steering Committee has launched the official MCP Registry in preview, a central hub for discovering and publishing MCP servers.