
npm install sg-chat

import { SgChat } from 'sg-chat'

import 'sg-chat/sg-chat.css'

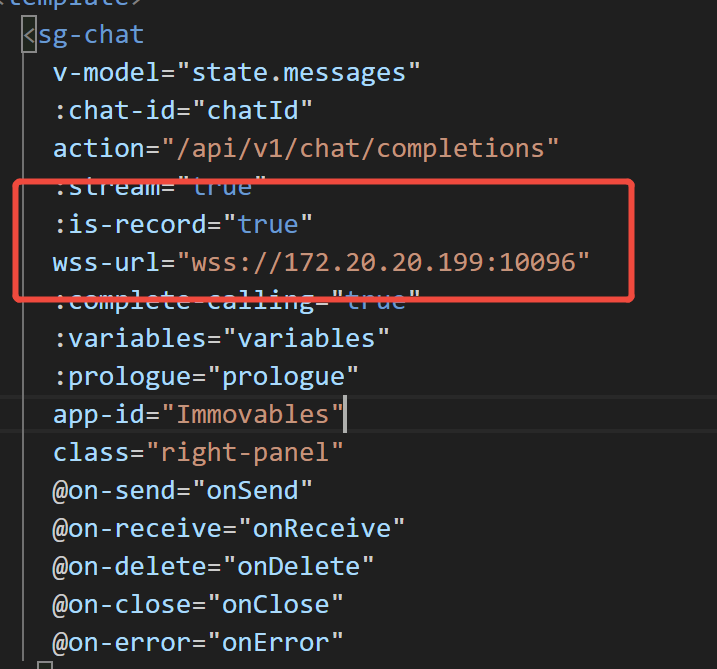

<sg-chat

:chatId="chatId"

action="/api/v1/chat/completions"

v-model="state.messages"

:stream="false"

:isRecord="true"

wssUrl="wss://172.20.20.199:10096"

:completeCalling="true"

:variables="variables"

appId=""

theme="dark"

@on-send="onSend"

@on-receive="onReceive"

@on-delete="onDelete"

@on-close="onClose"

@on-error="onError"

class="right-panel"

/>

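| Name | Description | Type | Required |
|---|---|---|---|
| chatId | Conversation ID | String | Yes |
| isSupportFile | Whether files are supported; default false | Boolean | No |
| action | Service URL | String | Yes |
| stream | Streaming response; default true | Boolean | No |
| value | Message list | Array | Yes |
| theme | Theme: light or dark; default light | String | No |
| completeCalling | Whether completeness mode is enabled | Boolean | No |
| variables | Parameters | Object | No |
| appId | Agent ID | String | Yes |
| isRecord | Whether speech-to-text is supported; default false | Boolean | No |
| wssUrl | ASR server address; required when isRecord is true | String | No |
| prologue | Opening message, e.g. {text:'Opening message',problems:['Question 1','Question 2']} | Object | No |
| aiAvatarUrl | Agent avatar URL | String | No |
| aiAvatarStyle | Agent avatar style, e.g. {width:'30px',height:'30px'} | Object | No |
| userAvatarUrl | User avatar URL | String | No |
| userAvatarStyle | User avatar style, e.g. {width:'30px',height:'30px'} | Object | No |
| isConnectDigit | Whether to connect to the digital human; default false | Boolean | No |
| on-send | Callback invoked when a message is sent | Function | No |
| on-receive | Callback invoked when a message is received | Function | No |
| on-delete | Callback invoked when a message is deleted | Function | No |
| on-close | Callback invoked when message reception completes | Function | No |
| on-love | Callback for like/dislike; userFeedback values: default (none), upvote (like), downvote (dislike) | Function | No |
| on-jump | Callback invoked on page navigation | Function | No |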
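The required/optional rules in the table above can be checked before mounting the component. The following is a minimal sketch, not part of sg-chat: `validateChatProps` and `REQUIRED_PROPS` are hypothetical names, and the rules encoded are only those stated in the table (required props, `value` as an array, `wssUrl` required when `isRecord` is true, `theme` limited to light/dark).

```javascript
// Hypothetical helper (not an sg-chat API): checks a props object
// against the rules listed in the props table.
const REQUIRED_PROPS = ['chatId', 'action', 'value', 'appId'];

function validateChatProps(props) {
  const errors = [];
  for (const name of REQUIRED_PROPS) {
    if (props[name] === undefined || props[name] === null) {
      errors.push(`missing required prop: ${name}`);
    }
  }
  if (props.value !== undefined && !Array.isArray(props.value)) {
    errors.push('value must be an array of messages');
  }
  // wssUrl is only required when speech-to-text is enabled.
  if (props.isRecord === true && !props.wssUrl) {
    errors.push('wssUrl is required when isRecord is true');
  }
  if (props.theme !== undefined && !['light', 'dark'].includes(props.theme)) {
    errors.push('theme must be "light" or "dark"');
  }
  return errors;
}

const errors = validateChatProps({
  chatId: 'demo-chat',
  action: '/api/v1/chat/completions',
  value: [],
  appId: 'demo-app',
  isRecord: true, // wssUrl intentionally omitted
});
console.log(errors); // [ 'wssUrl is required when isRecord is true' ]
```

Returning a list of errors rather than throwing lets the caller decide whether to log, disable recording, or block rendering.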

| Name | Description | Required |
|---|---|---|
| question | User message content | Yes |
| dataId | Message ID | Yes |
| status | Message status: waiting, running, finished, or failed | Yes |
| completeDetails | Agent message content | Yes |
| userFeedback | Like/dislike state: default (none), upvote (like), downvote (dislike) | No |
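As an illustration of the message fields above, the sketch below builds a new message and checks its shape. `createMessage` and `isValidMessage` are hypothetical helpers, and treating `question` and `dataId` as strings and `completeDetails` as an array is an assumption, since the table does not list types.

```javascript
// Allowed values as listed in the message field table.
const STATUSES = ['waiting', 'running', 'finished', 'failed'];
const FEEDBACK = ['default', 'upvote', 'downvote'];

// Hypothetical factory: a freshly sent message with no agent output yet.
function createMessage(question, dataId) {
  return {
    question,
    dataId,
    status: 'waiting',
    completeDetails: [],
    userFeedback: 'default',
  };
}

// Hypothetical shape check; field types are assumptions.
function isValidMessage(msg) {
  return (
    typeof msg.question === 'string' &&
    typeof msg.dataId === 'string' &&
    STATUSES.includes(msg.status) &&
    Array.isArray(msg.completeDetails) &&
    (msg.userFeedback === undefined || FEEDBACK.includes(msg.userFeedback))
  );
}

const msg = createMessage('Hello', 'demo-data-id');
console.log(isValidMessage(msg)); // true
console.log(isValidMessage({ ...msg, status: 'done' })); // false
```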

{

"chatId": "9762b3a5-509f-4745-9984-2f79c57e176f",

"dataId": "BU5NxZjGkNlMG6ZEUFRa",

"question": "aaaa",

"completeDetails": [

{

"event": "TextMessage",

"data": {

"id": "9762b3a5-509f-4745-9984-2f79c57e176f",

"dataId": "BU5NxZjGkNlMG6ZEUFRa",

"object": "southgis.chat.completion",

"created": 1750817401514,

"model": "Qwen3-30B-A3B-Int4-W4A16",

"choices": [

{

"message": {

"role": "user",

"content": "aaaa"

},

"finish_reason": null,

"index": 0

}

],

"usage": null,

"type": "OtherResponse"

}

},

{

"event": "ThoughtEvent",

"data": {

"id": "9762b3a5-509f-4745-9984-2f79c57e176f",

"dataId": "BU5NxZjGkNlMG6ZEUFRa",

"object": "southgis.chat.completion",

"created": 1750817401514,

"model": "Qwen3-30B-A3B-Int4-W4A16",

"choices": [

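{

"message": {

"role": "Assistant",

"content": "Okay, the user sent “aaaa”. First, I need to work out what the user needs. There are many possibilities: the user may be testing the system, may have sent several a's by mistake, or may have some other intent.\n\nNext, I should consider how to respond. If the user is just typing at random, I may need to ask in a friendly way whether they need help with anything. At the same time, I should keep it natural and avoid making the user feel questioned.\n\nI also need to check for potential issues, such as whether the user is trying to trigger a specific response or has made a typo. But “aaaa” looks like a repeated letter and probably has no real meaning.\n\nI should also consider the user's possible background. A new user may need more detailed guidance; a returning user may already know how to interact with the system. But there is not enough information to tell.\n\nFinally, I should make sure the response follows the company's policies and values, stays professional and friendly, and encourages the user to provide more information so I can help them better."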

},

"finish_reason": null,

"index": 0

}

],

"usage": null,

"type": "FinalResponse"

}

},

{

"event": "TextMessage",

"data": {

"id": "9762b3a5-509f-4745-9984-2f79c57e176f",

"dataId": "BU5NxZjGkNlMG6ZEUFRa",

"object": "southgis.chat.completion",

"created": 1750817401514,

"model": "Qwen3-30B-A3B-Int4-W4A16",

"choices": [

{

"message": {

"role": "Assistant",

"content": "Hello! It looks like you may be testing or typed some random characters. If you have any questions or need help, just let me know! 😊"

},

"finish_reason": "stop",

"index": 0

}

],

"usage": {

"prompt_tokens": 0,

"completion_tokens": 0,

"total_tokens": 0

},

"type": "FinalResponse"

}

}

],

"status": "finished",

"userFeedback": "default"

}
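To show how a message like the sample above might be consumed, the sketch below separates the agent's reasoning from its answer. The helper names (`collectContent`, `getAnswer`, `getThoughts`) are hypothetical; the event names `TextMessage` and `ThoughtEvent` and the `choices[].message` layout are taken from the sample, and skipping entries whose role is not `Assistant` is an assumption based on the first event echoing the user's question.

```javascript
// Hypothetical helper: concatenates assistant content from all events
// of the given type inside message.completeDetails.
function collectContent(message, eventName) {
  const parts = [];
  for (const { event, data } of message.completeDetails || []) {
    if (event !== eventName || !data || !Array.isArray(data.choices)) continue;
    for (const choice of data.choices) {
      const role = choice.message && String(choice.message.role).toLowerCase();
      // Skip the echoed user question; keep assistant output only.
      if (role === 'assistant') parts.push(choice.message.content);
    }
  }
  return parts.join('');
}

const getAnswer = (message) => collectContent(message, 'TextMessage');
const getThoughts = (message) => collectContent(message, 'ThoughtEvent');

// Trimmed-down message with the same structure as the sample.
const sample = {
  question: 'aaaa',
  status: 'finished',
  completeDetails: [
    { event: 'TextMessage', data: { choices: [{ message: { role: 'user', content: 'aaaa' } }] } },
    { event: 'ThoughtEvent', data: { choices: [{ message: { role: 'Assistant', content: 'The user typed random characters.' } }] } },
    { event: 'TextMessage', data: { choices: [{ message: { role: 'Assistant', content: 'Hello!' }, finish_reason: 'stop' }] } },
  ],
};

console.log(getAnswer(sample)); // Hello!
console.log(getThoughts(sample)); // The user typed random characters.
```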

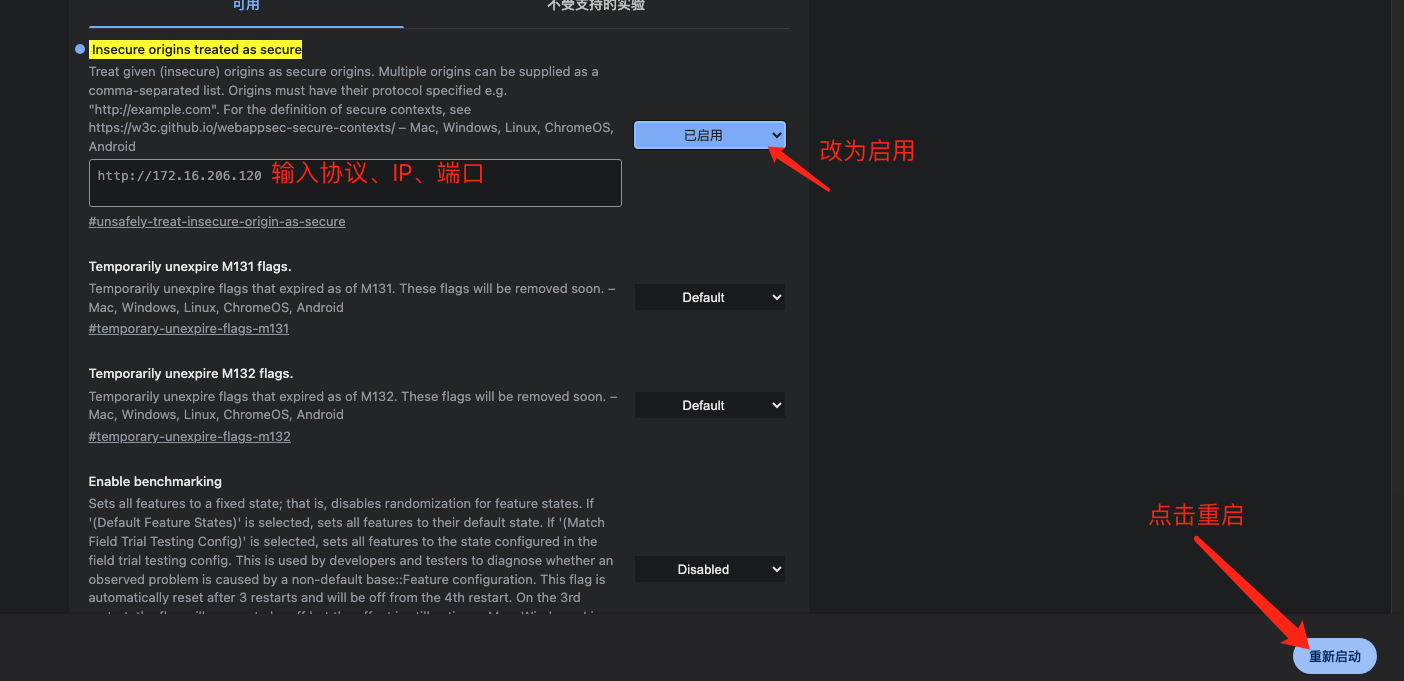

Set isRecord to true and set wssUrl to the address of the recording (ASR) service, as shown below:

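Because APIs such as the camera and microphone can raise significant privacy concerns, the getUserMedia() specification requires browsers to meet a set of privacy and security requirements. This powerful method can only be used in a secure context; in an insecure context, navigator.mediaDevices is undefined. The allowed secure contexts are:

- Pages served over HTTPS

- Pages loaded with the file:// URL scheme

- localhost or 127.0.0.1, for local development and testing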
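A page can check these conditions before enabling isRecord. The sketch below is an illustration only: `canUseMicrophone` is a hypothetical helper, and it takes the environment as a parameter (defaulting to `globalThis`) so it can be exercised outside a browser. `isSecureContext` and `navigator.mediaDevices.getUserMedia` are standard browser APIs.

```javascript
// Hypothetical helper: reports whether microphone capture is available.
// `env` defaults to the global object (window in a browser).
function canUseMicrophone(env = globalThis) {
  const secure = env.isSecureContext === true;
  const hasApi = Boolean(
    env.navigator &&
      env.navigator.mediaDevices &&
      typeof env.navigator.mediaDevices.getUserMedia === 'function'
  );
  return { secure, hasApi, ok: secure && hasApi };
}

// Simulated environments, for illustration.
const insecurePage = { isSecureContext: false, navigator: {} };
const securePage = {
  isSecureContext: true,
  navigator: { mediaDevices: { getUserMedia: async () => ({}) } },
};

console.log(canUseMicrophone(insecurePage).ok); // false
console.log(canUseMicrophone(securePage).ok); // true
```

In a component, the result could be used to pass `:isRecord="false"` when `ok` is false instead of letting recording fail at runtime.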

To use a recording device on a page served from a server, there are two approaches:

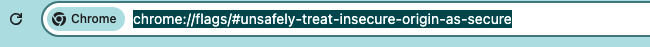

Open Google Chrome and enter the following in the address bar:

chrome://flags/#unsafely-treat-insecure-origin-as-secure

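Press Enter, then enter the origins that should be treated as secure and set the flag to Enabled; separate multiple addresses with commas.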

<video ref="digitalHumanVideo" autoplay playsinline @canplay="computeFrame" style="opacity: 0" width="400" height="400"></video>

<div class="bg-content"><canvas id="canvas" ref="videoCanvas" v-if="isConnected" width="400" height="400"/></div>

/**

* WebRTC service class that handles the WebRTC connection and media streaming

*/

class WebRTCService {

constructor() {

// WebRTC connection object

this.peerConnection = null;

// Remote media stream

this.remoteStream = null;

}

/**

* Initialize the WebRTC connection

* @param {Object} configuration - WebRTC configuration, including ICE servers and related settings

* @returns {Promise<boolean>} Whether initialization succeeded

*/

async initializeConnection(configuration = {

iceServers: [

{ urls: 'stun:stun.l.google.com:19302' }

]

}) {

try {

this.peerConnection = new RTCPeerConnection(configuration);

// Add video and audio transceivers in receive-only mode

this.peerConnection.addTransceiver('video', { direction: 'recvonly' });

this.peerConnection.addTransceiver('audio', { direction: 'recvonly' });

this.setupPeerConnectionListeners();

return true;

} catch (error) {

console.error('Failed to initialize WebRTC connection:', error);

return false;

}

}

/**

* Set up event listeners on the WebRTC connection,

* handling ICE candidates and incoming remote streams

*/

setupPeerConnectionListeners() {

// Handle ICE candidates

this.peerConnection.onicecandidate = (event) => {

if (event.candidate) {

this.onIceCandidate(event.candidate);

}

};

// Handle the incoming remote media stream

this.peerConnection.ontrack = (event) => {

this.remoteStream = event.streams[0];

this.onRemoteStreamReceived(this.remoteStream);

};

}

/**

* Create the local SDP offer and set it as the local description

* @returns {Promise<RTCSessionDescription>} The local SDP description

*/

async createOffer() {

try {

const offer = await this.peerConnection.createOffer();

await this.peerConnection.setLocalDescription(offer);

return this.peerConnection.localDescription;

} catch (error) {

console.error('Error creating offer:', error);

throw error;

}

}

/**

* Handle the remote SDP answer

* @param {RTCSessionDescriptionInit} answer - The remote SDP answer

*/

async handleAnswer(answer) {

try {

await this.peerConnection.setRemoteDescription(new RTCSessionDescription(answer));

} catch (error) {

console.error('Error handling answer:', error);

throw error;

}

}

// Callback hooks (override these on the instance)

onIceCandidate(candidate) {}

onRemoteStreamReceived(stream) {}

/**

* Close and clean up the WebRTC connection

*/

closeConnection() {

if (this.peerConnection) {

this.peerConnection.close();

}

this.remoteStream = null;

this.peerConnection = null;

}

}

export default WebRTCService;

// webRTC, sessionId, digitalHumanVideo, isConnected, isConnecting and message

// are assumed to be defined in the enclosing component.

const connectToDigitalHuman = async () => {

try {

webRTC.value = new WebRTCService()

await webRTC.value.initializeConnection({

sdpSemantics: 'unified-plan',

iceServers: [{ urls: ['stun:stun.l.google.com:19302'] }]

})

// Attach the remote stream to the <video> element once it arrives

webRTC.value.onRemoteStreamReceived = (stream) => {

if (digitalHumanVideo.value) {

digitalHumanVideo.value.srcObject = stream

}

}

// Forward each local ICE candidate to the signaling server

webRTC.value.onIceCandidate = async (candidate) => {

if (!sessionId.value) return

try {

const response = await fetch(`/webrtcApi2/ice`, {

method: 'POST',

headers: {

'Content-Type': 'application/json'

},

body: JSON.stringify({

candidate: candidate,

sessionid: sessionId.value

})

})

if (!response.ok) {

throw new Error('Failed to send ICE candidate')

}

} catch (error) {

console.error('Error sending ICE candidate:', error)

}

}

// Create the SDP offer and send it to the server

const offer = await webRTC.value.createOffer()

console.log('Creating offer:', offer)

const response = await fetch(`/webrtcApi2/offer`, {

method: 'POST',

headers: {

'Content-Type': 'application/json'

},

body: JSON.stringify({

sdp: offer.sdp,

type: offer.type

})

})

if (!response.ok) {

// Retry, and stop here so the failed response is not parsed

return connectToDigitalHuman()

}

const data = await response.json()

console.log('Server response:', data)

if (!data || !data.sdp) {

throw new Error('Invalid response from server')

}

const answerDescription = {

type: 'answer',

sdp: data.sdp

}

sessionId.value = data.sessionid

await webRTC.value.handleAnswer(answerDescription)

isConnected.value = true

isConnecting.value = false

message.success('Connected successfully')

} catch (error) {

console.error('Error connecting to digital human:', error)

message.error('Connection failed. Please contact the administrator.')

isConnected.value = false

isConnecting.value = false

}

}
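The connection code above retries by calling connectToDigitalHuman() again whenever the offer request returns a non-OK response, which can repeat indefinitely if the server stays unavailable. A bounded retry could be sketched as follows; `withRetry` and its `retries`/`delayMs` options are hypothetical and not part of sg-chat.

```javascript
// Hypothetical helper: runs an async task, retrying on failure up to
// `retries` additional times with a fixed delay between attempts.
async function withRetry(task, { retries = 3, delayMs = 500 } = {}) {
  let lastError;
  for (let attempt = 0; attempt <= retries; attempt++) {
    try {
      return await task(attempt);
    } catch (error) {
      lastError = error;
      if (attempt < retries) {
        await new Promise((resolve) => setTimeout(resolve, delayMs));
      }
    }
  }
  // All attempts failed: surface the last error to the caller.
  throw lastError;
}

// Usage sketch (browser only; the endpoint is the one used above):
// const data = await withRetry(async () => {
//   const response = await fetch('/webrtcApi2/offer', {
//     method: 'POST',
//     headers: { 'Content-Type': 'application/json' },
//     body: JSON.stringify({ sdp: offer.sdp, type: offer.type }),
//   });
//   if (!response.ok) throw new Error(`Offer failed: ${response.status}`);
//   return response.json();
// }, { retries: 2, delayMs: 1000 });
```

Because the final failure is rethrown, the surrounding catch block can still show the "connection failed" message once the attempts are exhausted.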

Remove the green screen with fabric (supports fabric 5.3.0).

import { fabric } from 'fabric';

fabric.Image.filters.RemoveGreen = fabric.util.createClass(fabric.Image.filters.BaseFilter, /** @lends fabric.Image.filters.RemoveGreen.prototype */ {

/**

* Filter type

* @param {String} type

* @default

*/

type: 'RemoveGreen',

/**

* Color to remove, in any format understood by fabric.Color.

* @param {String} color

* @default

*/

color: '#00FF00',

/**

* Fragment source for the RemoveGreen (chroma key) program

*/

fragmentSource: `precision highp float;

varying vec2 vTexCoord;

uniform sampler2D uTexture;

uniform vec3 keyColor;

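// Similarity threshold for the chroma distance

uniform float similarity;

// Smoothness of the alpha (transparency) falloff

uniform float smoothness;

// Desaturates green spill to improve keying accuracy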

uniform float spill;

vec2 RGBtoUV(vec3 rgb) {

return vec2(

rgb.r * -0.169 + rgb.g * -0.331 + rgb.b * 0.5 + 0.5,

rgb.r * 0.5 + rgb.g * -0.419 + rgb.b * -0.081 + 0.5

);

}

void main() {

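// Read the RGBA value of the current pixel

vec4 rgba = texture2D(uTexture, vTexCoord);

// Chroma difference between the current pixel and the key (green-screen) color

vec2 chromaVec = RGBtoUV(rgba.rgb) - RGBtoUV(keyColor);

// Chroma distance (vector length); the more similar the colors, the smaller the distance

float chromaDist = sqrt(dot(chromaVec, chromaVec));

// Apply the similarity threshold: a negative baseMask means green screen, a positive one means foreground

float baseMask = chromaDist - similarity;

// If baseMask is negative, fullMask is 0; if positive, the larger it is, the more opaque the pixel

float fullMask = pow(clamp(baseMask / smoothness, 0., 1.), 1.5);

rgba.a = fullMask; // set the alpha channel

// If baseMask is negative, spillVal is 0; if positive, the smaller it is, the lower the saturation

float spillVal = pow(clamp(baseMask / spill, 0., 1.), 1.5);

float desat = clamp(rgba.r * 0.2126 + rgba.g * 0.7152 + rgba.b * 0.0722, 0., 1.); // luminance (grayscale value) of the current pixel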

rgba.rgb = mix(vec3(desat, desat, desat), rgba.rgb, spillVal);

gl_FragColor = rgba;

}

`,

similarity: 0.02,

smoothness: 0.02,

spill: 0.02,

/**

* distance to actual color, as value up or down from each r,g,b

* between 0 and 1

**/

distance: 0.02,

/**

* For color to remove inside distance, use alpha channel for a smoother deletion

* NOT IMPLEMENTED YET

**/

useAlpha: false,

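/**

* Constructor options (handled by the inherited BaseFilter constructor)

* @memberOf fabric.Image.filters.RemoveGreen.prototype

* @param {Object} [options] Options object

* @param {String} [options.color='#00FF00'] Key color to remove

* @param {Number} [options.similarity=0.02] Chroma similarity threshold

* @param {Number} [options.smoothness=0.02] Alpha falloff smoothness

* @param {Number} [options.spill=0.02] Spill suppression range

* @param {Number} [options.distance=0.02] Distance value used by the 2D fallback

*/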

/**

* Applies filter to canvas element

* @param {Object} canvasEl Canvas element to apply filter to

*/

applyTo2d: function(options) {

var imageData = options.imageData,

data = imageData.data, i,

distance = this.distance * 255,

r, g, b,

source = new fabric.Color(this.color).getSource(),

lowC = [

source[0] - distance,

source[1] - distance,

source[2] - distance,

],

highC = [

source[0] + distance,

source[1] + distance,

source[2] + distance,

];

for (i = 0; i < data.length; i += 4) {

r = data[i];

g = data[i + 1];

b = data[i + 2];

if (r > lowC[0] &&

g > lowC[1] &&

b > lowC[2] &&

r < highC[0] &&

g < highC[1] &&

b < highC[2]) {

data[i + 3] = 0;

}

}

},

/**

* Return WebGL uniform locations for this filter's shader.

*

* @param {WebGLRenderingContext} gl The GL canvas context used to compile this filter's shader.

* @param {WebGLShaderProgram} program This filter's compiled shader program.

*/

getUniformLocations: function(gl, program) {

return {

similarity: gl.getUniformLocation(program, 'similarity'),

smoothness: gl.getUniformLocation(program, 'smoothness'),

spill: gl.getUniformLocation(program, 'spill'),

keyColor: gl.getUniformLocation(program, 'keyColor'),

};

},

/**

* Send data from this filter to its shader program's uniforms.

*

* @param {WebGLRenderingContext} gl The GL canvas context used to compile this filter's shader.

* @param {Object} uniformLocations A map of string uniform names to WebGLUniformLocation objects

*/

sendUniformData: function(gl, uniformLocations) {

// var source = new fabric.Color(this.color).getSource(),

// distance = parseFloat(this.distance),

// lowC = [

// 0 + source[0] / 255 - distance,

// 0 + source[1] / 255 - distance,

// 0 + source[2] / 255 - distance,

// 1

// ],

// highC = [

// source[0] / 255 + distance,

// source[1] / 255 + distance,

// source[2] / 255 + distance,

// 1

// ];

gl.uniform3fv(

uniformLocations.keyColor,

(new fabric.Color(this.color).getSource()).slice(0, 3).map((v) => v / 255),

);

gl.uniform1f(uniformLocations.similarity, this.similarity);

gl.uniform1f(uniformLocations.smoothness, this.smoothness);

gl.uniform1f(uniformLocations.spill, this.spill);

},

/**

* Returns object representation of an instance

* @return {Object} Object representation of an instance

*/

toObject: function() {

return fabric.util.object.extend(this.callSuper('toObject'), {

color: this.color,

similarity: this.similarity,

smoothness: this.smoothness,

spill: this.spill,

});

}

});

/**

* Returns filter instance from an object representation

* @static

* @param {Object} object Object to create an instance from

* @param {Function} [callback] to be invoked after filter creation

* @return {fabric.Image.filters.RemoveGreen} Instance of fabric.Image.filters.RemoveGreen

*/

fabric.Image.filters.RemoveGreen.fromObject = fabric.Image.filters.BaseFilter.fromObject;
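To make the shader's math easier to follow, here is a CPU port of the per-pixel computation in plain JavaScript. It is a sketch for experimenting with the `similarity`, `smoothness`, and `spill` values, not a replacement for the WebGL filter; `chromaKeyPixel`, `rgbToUV`, and `clamp01` are hypothetical names, and colors are assumed to be normalized to the 0..1 range as they are inside the shader.

```javascript
// Same RGB -> chroma (UV) conversion as RGBtoUV in the fragment shader.
function rgbToUV([r, g, b]) {
  return [
    r * -0.169 + g * -0.331 + b * 0.5 + 0.5,
    r * 0.5 + g * -0.419 + b * -0.081 + 0.5,
  ];
}

const clamp01 = (x) => Math.min(1, Math.max(0, x));

// Returns [r, g, b, a] for one pixel; all channels are in 0..1.
function chromaKeyPixel(rgb, keyColor, { similarity, smoothness, spill }) {
  const [u, v] = rgbToUV(rgb);
  const [ku, kv] = rgbToUV(keyColor);
  // Distance in chroma space; small means "close to the key color".
  const chromaDist = Math.hypot(u - ku, v - kv);
  const baseMask = chromaDist - similarity;
  // Alpha: 0 inside the similarity threshold, ramping up to 1.
  const alpha = Math.pow(clamp01(baseMask / smoothness), 1.5);
  // Spill suppression: blend toward grayscale near the key color.
  const spillVal = Math.pow(clamp01(baseMask / spill), 1.5);
  const gray = clamp01(rgb[0] * 0.2126 + rgb[1] * 0.7152 + rgb[2] * 0.0722);
  const out = rgb.map((c) => gray + (c - gray) * spillVal); // GLSL mix(gray, c, spillVal)
  return [...out, alpha];
}

const params = { similarity: 0.44, smoothness: 0.06, spill: 0.02 };
const green = [0, 1, 0];
console.log(chromaKeyPixel(green, green, params)[3]); // 0 (fully transparent)
console.log(chromaKeyPixel([1, 0, 0], green, params)[3]); // 1 (fully opaque)
```

The parameter values in the example are the ones passed to the filter in the usage code that follows.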

const { width, height } = digitalHumanVideo.value.getBoundingClientRect()

ctx.value = videoCanvas.value.getContext('2d')

videoCanvas.value.setAttribute('width', width)

videoCanvas.value.setAttribute('height', height)

const canvas = new fabric.Canvas('canvas');

if (digitalHumanVideo.value) {

if (digitalHumanVideo.value.paused || digitalHumanVideo.value.ended) return

}

let video = new fabric.Image(digitalHumanVideo.value);

video.filters.push(

new fabric.Image.filters.RemoveGreen({

similarity: 0.44,

smoothness: 0.06,

spill: 0.02

}),

)

video.applyFilters()

// Alternatively, use setElement() to pass in a video element that has already loaded

canvas.add(video);

fabric.util.requestAnimFrame(function render() {

fabric.filterBackend.evictCachesForKey(video.cacheKey)

// Apply the filters

video.applyFilters()

// console.log('renderAll')

canvas.renderAll();

fabric.util.requestAnimFrame(render);

});

// Create the fabric.Image object only after the video has loaded successfully

