
symspell-ex


Spelling correction & Fuzzy search based on Symmetric Delete Spelling Correction algorithm (SymSpell)

Work in progress; needs more optimization.

npm install symspell-ex --save

Changes v1.1.1

- Tokenization support

- Term frequency should be provided for training and terms should be unique

- Correct function returns a different object (Correction object)

- Hash table implemented in Redis store instead of a normal list structure

- Enhanced testing code and coverage

- Fixed bugs in lookup

For single-term training, use the add function:

import {SymSpellEx, MemoryStore} from 'symspell-ex';

const LANGUAGE = 'en';

// Create a SymSpellEx instance and inject a new store instance

const symSpellEx = new SymSpellEx(new MemoryStore());

await symSpellEx.initialize();

// Train data

await symSpellEx.add("argument", LANGUAGE);

await symSpellEx.add("computer", LANGUAGE);

For multiple terms (Array), use the train function:

const terms = ['argument', 'computer'];

await symSpellEx.train(terms, 1, LANGUAGE);

The search function returns multiple suggestions, if available, up to the maxSuggestions value.

Arguments:

- input String (Wrong/Invalid word we need to correct)

- language String (Language to be used in search)

- maxDistance Number, optional, default = 2 (Maximum distance for suggestions)

- maxSuggestions Number, optional, default = 5 (Maximum number of suggestions to return)

Return: Array<Suggestion> Array of suggestions

Example

await symSpellEx.search('argoments', 'en');

Example Suggestion Object:

{

"term": "argoments",

"suggestion": "arguments",

"distance": 2,

"frequency": 155

}

The correct function returns the best suggestion for an input word or sentence, ranked by edit distance and frequency.

Arguments:

- input String (Wrong/Invalid word we need to correct)

- language String (Language to be used in search)

- maxDistance Number, optional, default = 2 (Maximum distance for suggestions)

Return: Correction object which contains the original input and the corrected output string, with an array of suggestions

Example

await symSpellEx.correct('Special relatvity was orignally proposed by Albert Einstein', 'en');

Returns this Correction object:

This output depends entirely on the quality of the training data that was pushed into the store.

{

"suggestions": [],

"input": "Special relatvity was orignally proposed by Albert Einstein",

"output": "Special relativity was originally proposed by Albert Einstein"

}
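The phrase "best suggestion in terms of edit distance and frequency" can be pictured as a simple ordering. The following is a minimal sketch, assuming that a lower distance wins first and a higher frequency breaks ties; the library's actual ranking may differ. The Suggestion shape follows the example object shown earlier, while pickBest and the sample data are hypothetical.

```typescript
// Shape taken from the example Suggestion object in this README.
interface Suggestion {
  term: string;
  suggestion: string;
  distance: number;
  frequency: number;
}

// Hypothetical helper (not part of symspell-ex): choose the suggestion with
// the smallest edit distance, preferring the higher frequency on ties.
function pickBest(suggestions: Suggestion[]): Suggestion | undefined {
  const ranked = suggestions.slice().sort(
    (a, b) => a.distance - b.distance || b.frequency - a.frequency,
  );
  return ranked[0];
}

// Made-up sample data, for illustration only.
const sample: Suggestion[] = [
  { term: 'argoments', suggestion: 'armaments', distance: 2, frequency: 40 },
  { term: 'argoments', suggestion: 'arguments', distance: 1, frequency: 155 },
  { term: 'argoments', suggestion: 'argument', distance: 2, frequency: 300 },
];

const best = pickBest(sample);
console.log(best ? best.suggestion : 'no suggestion');
```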

Lookup runs in constant O(1) time with respect to the dictionary size; it depends only on the average term length and the maximum edit distance. A hash table stores all search entries and has an average search time complexity of O(1).

In the training phase, all possible spelling error variants are generated (deletes only) and stored in the hash table.

This makes the algorithm very fast, but it also requires a large memory footprint, and the training phase takes a considerable amount of time to build the dictionary the first time. (Using RedisStore makes it easy to train and build once, then search and correct from any external source.)

The symmetric delete approach tremendously reduces the number of spelling error candidates that must be pre-calculated (generated and added to the hash table), which in turn allows O(1) search when getting spelling suggestions.
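To make the deletes-only idea concrete, here is a small self-contained sketch. It is not the symspell-ex implementation: the function names (generateDeletes, buildIndex, findCandidates) are hypothetical, and a plain Map stands in for the store. Training indexes every term under itself and its delete variants; lookup generates the deletes of the input and probes the same table.

```typescript
// Illustrative sketch only; names are hypothetical, not the library's API.

// All strings reachable from `term` by deleting 1..maxDistance characters.
function generateDeletes(term: string, maxDistance: number): Set<string> {
  const deletes = new Set<string>();
  let frontier: string[] = [term];
  for (let depth = 0; depth < maxDistance; depth++) {
    const next: string[] = [];
    for (const word of frontier) {
      for (let i = 0; i < word.length; i++) {
        const variant = word.slice(0, i) + word.slice(i + 1);
        if (!deletes.has(variant)) {
          deletes.add(variant);
          next.push(variant);
        }
      }
    }
    frontier = next;
  }
  return deletes;
}

// Training: map each term and each of its delete variants back to the term.
function buildIndex(terms: string[], maxDistance: number): Map<string, Set<string>> {
  const index = new Map<string, Set<string>>();
  for (const term of terms) {
    const keys = [term].concat(Array.from(generateDeletes(term, maxDistance)));
    for (const key of keys) {
      let bucket = index.get(key);
      if (!bucket) {
        bucket = new Set<string>();
        index.set(key, bucket);
      }
      bucket.add(term);
    }
  }
  return index;
}

// Lookup: probe the table with the input and its delete variants.
// A real implementation would still verify each candidate with an
// edit distance function before returning it.
function findCandidates(
  input: string,
  index: Map<string, Set<string>>,
  maxDistance: number,
): Set<string> {
  const found = new Set<string>();
  const keys = [input].concat(Array.from(generateDeletes(input, maxDistance)));
  for (const key of keys) {
    const bucket = index.get(key);
    if (bucket) bucket.forEach((term) => found.add(term));
  }
  return found;
}

const deleteIndex = buildIndex(['argument', 'computer'], 2);
const matches = findCandidates('argoment', deleteIndex, 2);
console.log(Array.from(matches));
```

The lookup touches only the deletes of the input, whose count depends on the input length and the maximum distance rather than on how many terms were trained.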

This interface can be implemented to provide a different tokenizer for the library

Interface type

export interface Tokenizer {

tokenize(input: string): Array<Token>;

}
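As an illustration, a custom tokenizer might split the input on word characters with a regular expression. The sketch below is hedged: the fields of the library's Token type are not shown in this README, so it uses a hypothetical SketchToken shape and a mirrored SketchTokenizer interface instead of the real types. Adapting it to symspell-ex would mean returning the library's own Token objects.

```typescript
// Hypothetical token shape; the real Token type in symspell-ex may differ.
interface SketchToken {
  value: string;    // token text
  position: number; // index of the token's first character in the input
}

// Mirrors the Tokenizer interface above, using the hypothetical SketchToken.
interface SketchTokenizer {
  tokenize(input: string): Array<SketchToken>;
}

// Splits on runs of ASCII letters, digits, and apostrophes.
class WordTokenizer implements SketchTokenizer {
  tokenize(input: string): Array<SketchToken> {
    const tokens: Array<SketchToken> = [];
    const pattern = /[A-Za-z0-9']+/g;
    let match: RegExpExecArray | null = pattern.exec(input);
    while (match !== null) {
      tokens.push({ value: match[0], position: match.index });
      match = pattern.exec(input);
    }
    return tokens;
  }
}

const wordTokens = new WordTokenizer().tokenize('Special relatvity, was');
console.log(wordTokens.map((token) => token.value));
```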

This interface can be implemented to provide more algorithms to use to calculate edit distance between two words

Edit Distance is a way of quantifying how dissimilar two strings (e.g., words) are to one another by counting the minimum number of operations required to transform one string into the other

Interface type

interface EditDistance {

name: String;

calculateDistance(source: string, target: string): number;

}
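For example, a plain Levenshtein distance could be supplied through this interface. The following is a minimal sketch: the interface is copied from above so that the block is self-contained, the class name LevenshteinDistance is hypothetical, and how an implementation is registered with SymSpellEx is not covered in this README, so that step is left out.

```typescript
// Interface copied from the text above so that the sketch is self-contained.
interface EditDistance {
  name: String;
  calculateDistance(source: string, target: string): number;
}

// Hypothetical implementation: Levenshtein distance (insert, delete,
// substitute), computed with two rolling rows of the dynamic-programming table.
class LevenshteinDistance implements EditDistance {
  name = 'levenshtein';

  calculateDistance(source: string, target: string): number {
    if (source === target) return 0;
    if (source.length === 0) return target.length;
    if (target.length === 0) return source.length;

    // previous[j] = distance between the first i-1 chars of source
    // and the first j chars of target.
    let previous: number[] = [];
    for (let j = 0; j <= target.length; j++) previous.push(j);

    for (let i = 1; i <= source.length; i++) {
      const current: number[] = [i];
      for (let j = 1; j <= target.length; j++) {
        const cost = source[i - 1] === target[j - 1] ? 0 : 1;
        current.push(Math.min(
          previous[j] + 1,        // deletion
          current[j - 1] + 1,     // insertion
          previous[j - 1] + cost, // substitution or match
        ));
      }
      previous = current;
    }
    return previous[target.length];
  }
}

const levenshtein = new LevenshteinDistance();
console.log(levenshtein.calculateDistance('relatvity', 'relativity'));
```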

This interface can be implemented to provide an additional way to store data other than the built-in stores (Memory, Redis)

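Data store should handle storage for these 2 data types:

- Terms: a list of strings addressed by index (pushTerm, getTermAt, getTermsAt)

- Entries: a hash table mapping a string key to an array of numbers (getEntry, getEntries, setEntry, hasEntry)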

Data store should also handle storage for multiple languages and switch between them

Interface type

export interface DataStore {

name: string;

initialize(): Promise<void>;

isInitialized(): boolean;

setLanguage(language: string): Promise<void>;

pushTerm(value: string): Promise<number>;

getTermAt(index: number): Promise<string>;

getTermsAt(indexes: Array<number>): Promise<Array<string>>;

getEntry(key: string): Promise<Array<number>>;

getEntries(keys: Array<string>): Promise<Array<Array<number>>>;

setEntry(key: string, value: Array<number>): Promise<boolean>;

hasEntry(key: string): Promise<boolean>;

maxEntryLength(): Promise<number>;

clear(): Promise<void>;

}
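A custom store can be quite small. The sketch below implements the interface with in-memory maps, one term list and one entry table per language. Several behaviors are assumptions, since this README does not specify them: pushTerm returns the index of the new term, missing entries come back as empty arrays, maxEntryLength is the length of the longest entry key, and clear wipes every language. The class name SketchStore is hypothetical.

```typescript
// Interface copied from the text above so that the sketch is self-contained.
interface DataStore {
  name: string;
  initialize(): Promise<void>;
  isInitialized(): boolean;
  setLanguage(language: string): Promise<void>;
  pushTerm(value: string): Promise<number>;
  getTermAt(index: number): Promise<string>;
  getTermsAt(indexes: Array<number>): Promise<Array<string>>;
  getEntry(key: string): Promise<Array<number>>;
  getEntries(keys: Array<string>): Promise<Array<Array<number>>>;
  setEntry(key: string, value: Array<number>): Promise<boolean>;
  hasEntry(key: string): Promise<boolean>;
  maxEntryLength(): Promise<number>;
  clear(): Promise<void>;
}

// Hypothetical in-memory store; see the assumptions in the lead-in.
class SketchStore implements DataStore {
  name = 'sketch';
  private initialized = false;
  private language = 'en';
  private termsByLanguage = new Map<string, string[]>();
  private entriesByLanguage = new Map<string, Map<string, number[]>>();

  // Term list for the current language, created on first use.
  private terms(): string[] {
    let list = this.termsByLanguage.get(this.language);
    if (!list) {
      list = [];
      this.termsByLanguage.set(this.language, list);
    }
    return list;
  }

  // Entry table for the current language, created on first use.
  private entries(): Map<string, number[]> {
    let table = this.entriesByLanguage.get(this.language);
    if (!table) {
      table = new Map<string, number[]>();
      this.entriesByLanguage.set(this.language, table);
    }
    return table;
  }

  async initialize(): Promise<void> { this.initialized = true; }
  isInitialized(): boolean { return this.initialized; }
  async setLanguage(language: string): Promise<void> { this.language = language; }

  // Assumption: returns the index of the newly stored term.
  async pushTerm(value: string): Promise<number> { return this.terms().push(value) - 1; }
  async getTermAt(index: number): Promise<string> { return this.terms()[index]; }
  async getTermsAt(indexes: Array<number>): Promise<Array<string>> {
    const list = this.terms();
    return indexes.map((i) => list[i]);
  }

  // Assumption: a missing key yields an empty array.
  async getEntry(key: string): Promise<Array<number>> { return this.entries().get(key) || []; }
  async getEntries(keys: Array<string>): Promise<Array<Array<number>>> {
    const table = this.entries();
    return keys.map((key) => table.get(key) || []);
  }
  async setEntry(key: string, value: Array<number>): Promise<boolean> {
    this.entries().set(key, value);
    return true;
  }
  async hasEntry(key: string): Promise<boolean> { return this.entries().has(key); }

  // Assumption: the length of the longest entry key in the current language.
  async maxEntryLength(): Promise<number> {
    let max = 0;
    this.entries().forEach((_value, key) => { max = Math.max(max, key.length); });
    return max;
  }

  // Assumption: clears data for all languages.
  async clear(): Promise<void> {
    this.termsByLanguage.clear();
    this.entriesByLanguage.clear();
  }
}
```

Keeping separate maps per language is one simple way to satisfy the multi-language requirement; a persistent store could instead prefix keys with the language code.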

MemoryStore: may be limited by Node process memory limits, which can be overridden.

RedisStore: a very efficient way to train and store data. It allows access by multiple processes and/or machines, and it makes dumping and migrating data easy.

TODO

MIT

FAQs

Spelling correction & Fuzzy search based on symmetric delete spelling correction algorithm

The npm package symspell-ex receives a total of 232 weekly downloads. As such, symspell-ex popularity was classified as not popular.

We found that symspell-ex demonstrated a not healthy version release cadence and project activity because the last version was released a year ago. It has 1 open source maintainer collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Product

Socket Fix 2.0 brings targeted CVE remediation, smarter upgrade planning, and broader ecosystem support to help developers get to zero alerts.

Security News

Socket CEO Feross Aboukhadijeh joins Risky Business Weekly to unpack recent npm phishing attacks, their limited impact, and the risks if attackers get smarter.

Product

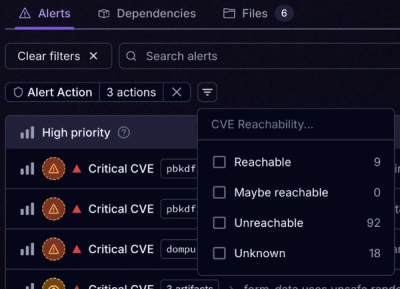

Socket’s new Tier 1 Reachability filters out up to 80% of irrelevant CVEs, so security teams can focus on the vulnerabilities that matter.