Security News

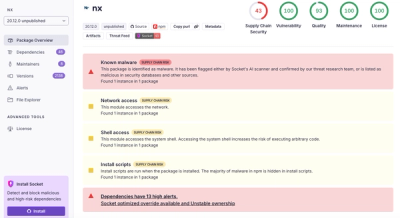

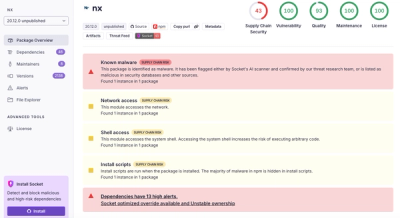

Nx npm Packages Compromised in Supply Chain Attack Weaponizing AI CLI Tools

Malicious Nx npm versions stole secrets and wallet info using AI CLI tools; Socket’s AI scanner detected the supply chain attack and flagged the malware.

The unzipper npm package is a module that provides streaming APIs for unzipping .zip files. It allows for extracting the contents of zip files, parsing zip file structures, and more, all while being memory efficient and fast.

Extracting zip files

This feature allows you to extract the contents of a zip file to a specified directory. The code sample demonstrates how to read a zip file as a stream and pipe it to the unzipper's Extract method, which will extract the files to the given path.

const unzipper = require('unzipper');

const fs = require('fs');

fs.createReadStream('path/to/archive.zip')

.pipe(unzipper.Extract({ path: 'output/path' }));

Parsing zip file entries

This feature allows you to parse the contents of a zip file and work with each entry individually. The code sample shows how to read entries from a zip file and handle them based on their type, either extracting files or draining directories.

const unzipper = require('unzipper');

const fs = require('fs');

fs.createReadStream('path/to/archive.zip')

.pipe(unzipper.Parse())

.on('entry', function (entry) {

const fileName = entry.path;

const type = entry.type; // 'Directory' or 'File'

if (type === 'File') {

entry.pipe(fs.createWriteStream('output/path/' + fileName));

} else {

entry.autodrain();

}

});

Buffer-based extraction

This feature allows you to extract files from a zip file that is already loaded into a buffer. The code sample demonstrates how to open a zip file from a buffer and then extract the contents of the first file into another buffer.

const unzipper = require('unzipper');

unzipper.Open.buffer(buffer)

.then(function (directory) {

return directory.files[0].buffer();

})

.then(function (contentBuffer) {

// Use the contentBuffer

});

adm-zip is a JavaScript implementation for zip data compression for NodeJS. It provides functionalities similar to unzipper, such as reading and extracting zip files. Unlike unzipper, which is stream-based, adm-zip works with in-memory buffers, which can be less efficient for large files.

jszip is a library for creating, reading, and editing .zip files with JavaScript, with a focus on client-side use. It can be used in NodeJS as well. It offers a more comprehensive API for handling zip files compared to unzipper, including the ability to generate zip files, but it might not be as optimized for streaming large zip files.

yauzl is another NodeJS library for reading zip files. It focuses on low-level zip file parsing and decompression, providing a minimal API. It's designed to be more memory efficient than adm-zip by using lazy parsing, but it doesn't provide the high-level convenience methods that unzipper does.

$ npm install unzipper

The open methods allow random access to the underlying files of a zip archive, from disk or from the web, s3 or a custom source.

The open methods return a promise on the contents of the central directory of a zip file, with individual files listed in an array.

Each file record has the following methods, providing random access to the underlying files:

stream([password]) - returns a stream of the unzipped content which can be piped to any destination

buffer([password]) - returns a promise on the buffered content of the file.

If the file is encrypted you will have to supply a password to decrypt, otherwise you can leave blank.

Unlike adm-zip, the Open methods will never read the entire zipfile into a buffer.

The last argument to the Open methods is an optional options object where you can specify tailSize (default 80 bytes), i.e. how many bytes should we read at the end of the zipfile to locate the endOfCentralDirectory. This location can be variable depending on zip64 extensible data sector size. Additionally you can supply option crx: true which will check for a crx header and parse the file accordingly by shifting all file offsets by the length of the crx header.
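
As a rough sketch of how these options might be passed, the helper below opens a Chrome extension with crx: true and a larger tailSize. The helper name openCrx, the 4096-byte value, and the choice to pass the unzipper module in as a parameter (so the sketch can be exercised with a stub) are assumptions made for illustration only.

```javascript
// Hypothetical helper: opens a .crx archive through the Open.file method.
// `unzipper` is the required module, passed in so the function can be
// exercised with a stub; `tailSize: 4096` is an arbitrary illustrative value.
function openCrx(unzipper, crxPath) {
  const options = {
    crx: true,      // look for a crx header and shift file offsets past it
    tailSize: 4096  // read more than the default 80 bytes from the end
  };
  return unzipper.Open.file(crxPath, options);
}

// Usage (assumes `npm install unzipper`):
// const unzipper = require('unzipper');
// openCrx(unzipper, 'path/to/extension.crx')
//   .then(directory => console.log(directory.files.map(f => f.path)));
```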

Returns a Promise to the central directory information with methods to extract individual files. start and end options are used to avoid reading the whole file.

Here is a simple example of opening up a zip file, printing out the directory information and then extracting the first file inside the zipfile to disk:

async function main() {

const directory = await unzipper.Open.file('path/to/archive.zip');

console.log('directory', directory);

return new Promise( (resolve, reject) => {

directory.files[0]

.stream()

.pipe(fs.createWriteStream('firstFile'))

.on('error',reject)

.on('finish',resolve)

});

}

main();
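
A variation on the example above, sketched under the assumption of Node.js 15 or later (for stream/promises): instead of taking the first file, it looks an entry up by path and fails loudly when the archive does not contain it. The helper names findEntry and extractEntry are hypothetical and not part of unzipper.

```javascript
const fs = require('fs');
const { pipeline } = require('stream/promises');

// Hypothetical helper: looks up a file record by its path in the central
// directory, throwing a descriptive error when the archive has no such entry.
function findEntry(directory, entryPath) {
  const file = directory.files.find(f => f.path === entryPath);
  if (!file) {
    throw new Error(`Entry not found in archive: ${entryPath}`);
  }
  return file;
}

// Streams one entry to disk; pipeline() rejects if either stream fails.
async function extractEntry(directory, entryPath, destination) {
  const file = findEntry(directory, entryPath);
  await pipeline(file.stream(), fs.createWriteStream(destination));
}

// Usage (assumes `directory` came from one of the Open methods):
// await extractEntry(directory, 'docs/readme.txt', 'readme.txt');
```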

If you want to extract all files from the zip file, the directory object supplies an extract method. Here is a quick example:

async function main() {

const directory = await unzipper.Open.file('path/to/archive.zip');

await directory.extract({ path: '/path/to/destination' })

}

This function will return a Promise to the central directory information from a URL pointing to a zipfile. Range-headers are used to avoid reading the whole file. Unzipper does not ship with a request library, so you will have to provide it as the first argument.

Live Example: (extracts a tiny xml file from the middle of a 500MB zipfile)

const request = require('request');

const unzipper = require('unzipper');

async function main() {

const directory = await unzipper.Open.url(request,'http://www2.census.gov/geo/tiger/TIGER2015/ZCTA5/tl_2015_us_zcta510.zip');

const file = directory.files.find(d => d.path === 'tl_2015_us_zcta510.shp.iso.xml');

const content = await file.buffer();

console.log(content.toString());

}

main();

This function takes a second parameter which can either be a string containing the url to request, or an options object to invoke the supplied request library with. This can be used when other request options are required, such as custom headers or authentication to a third party service.

const request = require('google-oauth-jwt').requestWithJWT();

const googleStorageOptions = {

url: `https://www.googleapis.com/storage/v1/b/m-bucket-name/o/my-object-name`,

qs: { alt: 'media' },

jwt: {

email: google.storage.credentials.client_email,

key: google.storage.credentials.private_key,

scopes: ['https://www.googleapis.com/auth/devstorage.read_only']

}

};

async function getFile(req, res, next) {

const directory = await unzipper.Open.url(request, googleStorageOptions);

const file = directory.files.find((file) => file.path === 'my-filename');

return file.stream().pipe(res);

}

This function will return a Promise to the central directory information from a zipfile on S3. Range-headers are used to avoid reading the whole file. Unzipper does not ship with the aws-sdk, so you have to provide an instantiated client as the first argument. The params object requires Bucket and Key to fetch the correct file.

Example:

const unzipper = require('unzipper');

const fs = require('fs');

const AWS = require('aws-sdk');

const s3Client = new AWS.S3(config);

async function main() {

const directory = await unzipper.Open.s3(s3Client,{Bucket: 'unzipper', Key: 'archive.zip'});

return new Promise( (resolve, reject) => {

directory.files[0]

.stream()

.pipe(fs.createWriteStream('firstFile'))

.on('error',reject)

.on('finish',resolve)

});

}

main();

If you already have the zip file in-memory as a buffer, you can open the contents directly.

Example:

// never use readFileSync - only used here to simplify the example

const buffer = fs.readFileSync('path/to/archive.zip');

async function main() {

const directory = await unzipper.Open.buffer(buffer);

console.log('directory',directory);

// ...

}

main();

This function can be used to provide a custom source implementation. The source parameter expects a stream and a size function to be implemented. The size function should return a Promise that resolves the total size of the file. The stream function should return a Readable stream according to the supplied offset and length parameters.

Example:

// Custom source implementation for reading a zip file from Google Cloud Storage

const { Storage } = require('@google-cloud/storage');

async function main() {

const storage = new Storage();

const bucket = storage.bucket('my-bucket');

const zipFile = bucket.file('my-zip-file.zip');

const customSource = {

stream: function(offset, length) {

return zipFile.createReadStream({

start: offset,

end: length && offset + length

})

},

size: async function() {

const objMetadata = (await zipFile.getMetadata())[0];

return objMetadata.size;

}

};

const directory = await unzipper.Open.custom(customSource);

console.log('directory', directory);

// ...

}

main();
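
To make the stream/size contract concrete without any cloud dependency, here is a minimal sketch of a custom source backed by an in-memory Buffer. The helper name bufferSource is hypothetical; since unzipper already provides Open.buffer for this case, the sketch is only meant to show the shape that a custom source needs to have.

```javascript
const { PassThrough } = require('stream');

// Hypothetical helper: wraps an in-memory Buffer in the { stream, size }
// shape described above, so it could be handed to unzipper.Open.custom().
function bufferSource(buffer) {
  return {
    // Returns a Readable over `length` bytes starting at `offset`;
    // when no length is given, reads to the end of the buffer.
    stream(offset, length) {
      const end = length ? offset + length : buffer.length;
      const out = new PassThrough();
      out.end(buffer.subarray(offset, end));
      return out;
    },
    // Resolves with the total size of the underlying "file" in bytes.
    size() {
      return Promise.resolve(buffer.length);
    }
  };
}

// Usage (assumes `npm install unzipper` and a zip already held in `buffer`):
// const directory = await unzipper.Open.custom(bufferSource(buffer));
```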

The directory object returned from Open.[method] provides an extract method which extracts all the files to a specified path, with an optional concurrency (default: 1).

Example (with concurrency of 5):

unzipper.Open.file('path/to/archive.zip')

.then(d => d.extract({path: '/extraction/path', concurrency: 5}));

Please note: Methods that use the Central Directory instead of parsing the entire file can be found under Open.

Chrome extension files (.crx) are zipfiles with an extra header at the start of the file. Unzipper will parse .crx files with the streaming methods (Parse and ParseOne).

This library began as an active fork and drop-in replacement of the node-unzip to address the following issues:

Originally the only way to use the library was to stream the entire zip file. This method is inefficient if you are only interested in selected files from the zip files. Additionally this method can be error prone since it relies on the local file headers which could be wrong.

The structure of this fork is similar to the original, but uses Promises and the inherent guarantees provided by node streams to ensure a low memory footprint, and emits finish/close events at the end of processing. The new Parser will push any parsed entries downstream if you pipe from it, while still supporting the legacy entry event as well.

Breaking changes: The new Parser will not automatically drain entries if there are no listeners or pipes in place.

Unzipper provides simple APIs similar to node-tar for parsing and extracting zip files. There are no added compiled dependencies - inflation is handled by node.js's built in zlib support.

fs.createReadStream('path/to/archive.zip')

.pipe(unzipper.Extract({ path: 'output/path' }));

Extract emits the 'close' event once the zip's contents have been fully extracted to disk. Extract uses fstream.Writer and therefore needs an absolute path to the destination directory. This directory will be automatically created if it doesn't already exist.

Process each zip file entry or pipe entries to another stream.

Important: If you do not intend to consume an entry stream's raw data, call autodrain() to dispose of the entry's contents. Otherwise the stream will halt. .autodrain() returns an empty stream that provides error and finish events.

Additionally you can call .autodrain().promise() to get the promisified version of success or failure of the autodrain.

// If you want to handle autodrain errors you can either:

entry.autodrain().promise().catch(handleError);

// or

entry.autodrain().on('error', handleError);

Here is a quick example:

fs.createReadStream('path/to/archive.zip')

.pipe(unzipper.Parse())

.on('entry', function (entry) {

const fileName = entry.path;

const type = entry.type; // 'Directory' or 'File'

const size = entry.vars.uncompressedSize; // There is also compressedSize;

if (fileName === "this IS the file I'm looking for") {

entry.pipe(fs.createWriteStream('output/path'));

} else {

entry.autodrain();

}

});

and the same example using async iterators:

const zip = fs.createReadStream('path/to/archive.zip').pipe(unzipper.Parse({forceStream: true}));

for await (const entry of zip) {

const fileName = entry.path;

const type = entry.type; // 'Directory' or 'File'

const size = entry.vars.uncompressedSize; // There is also compressedSize;

if (fileName === "this IS the file I'm looking for") {

entry.pipe(fs.createWriteStream('output/path'));

} else {

entry.autodrain();

}

}
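
The async-iterator form also lends itself to small reusable helpers. The sketch below lists the paths of all file entries; the name listFilePaths is hypothetical, and the function accepts any async iterable of entries, so it can be tried with a stub as well as with the stream returned by unzipper.Parse({forceStream: true}).

```javascript
// Hypothetical helper: walks an async iterable of entries and collects the
// paths of the file entries. Every entry is drained, because an unconsumed
// entry would stall the parser.
async function listFilePaths(entries) {
  const paths = [];
  for await (const entry of entries) {
    if (entry.type === 'File') {
      paths.push(entry.path);
    }
    entry.autodrain();
  }
  return paths;
}

// Usage (assumes `npm install unzipper`):
// const zip = fs.createReadStream('path/to/archive.zip')
//   .pipe(unzipper.Parse({ forceStream: true }));
// const paths = await listFilePaths(zip);
```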

If you pipe from unzipper the downstream components will receive each entry for further processing. This allows for clean pipelines transforming zipfiles into unzipped data.

Example using stream.Transform:

fs.createReadStream('path/to/archive.zip')

.pipe(unzipper.Parse())

.pipe(stream.Transform({

objectMode: true,

transform: function(entry,e,cb) {

const fileName = entry.path;

const type = entry.type; // 'Directory' or 'File'

const size = entry.vars.uncompressedSize; // There is also compressedSize;

if (fileName === "this IS the file I'm looking for") {

entry.pipe(fs.createWriteStream('output/path'))

.on('finish',cb);

} else {

entry.autodrain();

cb();

}

}

}));

Example using etl:

fs.createReadStream('path/to/archive.zip')

.pipe(unzipper.Parse())

.pipe(etl.map(entry => {

if (entry.path == "this IS the file I'm looking for")

return entry

.pipe(etl.toFile('output/path'))

.promise();

else

entry.autodrain();

}))

unzipper.ParseOne([regex]) is a convenience method that unzips only one file from the archive and pipes the contents down (not the entry itself). If no search criterion is specified, the first file in the archive will be unzipped. Otherwise, each filename will be compared to the regex and the first one to match will be unzipped and piped down. If no file matches, the stream will end without any content.

Example:

fs.createReadStream('path/to/archive.zip')

.pipe(unzipper.ParseOne())

.pipe(fs.createWriteStream('firstFile.txt'));

While the recommended strategy for consuming the unzipped contents is using streams, it is sometimes convenient to be able to get the full buffered contents of each file. Each entry provides a .buffer function that consumes the entry by buffering the contents into memory and returning a promise to the complete buffer.

fs.createReadStream('path/to/archive.zip')

.pipe(unzipper.Parse())

.pipe(etl.map(async entry => {

if (entry.path == "this IS the file I'm looking for") {

const content = await entry.buffer();

await fs.promises.writeFile('output/path',content);

}

else {

entry.autodrain();

}

}))
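
Conceptually, buffering an entry amounts to collecting every chunk of a stream and concatenating them. The sketch below illustrates that idea with plain Node.js streams; the collect function is a hypothetical stand-in and is not unzipper's actual implementation of .buffer().

```javascript
const { Readable } = require('stream');

// Illustrative only: collects every chunk of a readable stream and resolves
// with one concatenated Buffer. The whole content is held in memory, which
// is why streaming is preferred for large entries.
function collect(readable) {
  return new Promise((resolve, reject) => {
    const chunks = [];
    readable.on('data', chunk => chunks.push(chunk));
    readable.on('error', reject);
    readable.on('end', () => resolve(Buffer.concat(chunks)));
  });
}

// Usage with a small in-memory stream standing in for a zip entry:
collect(Readable.from([Buffer.from('hello '), Buffer.from('world')]))
  .then(content => console.log(content.toString())); // prints "hello world"
```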

The parser emits finish and error events like any other stream. The parser additionally provides a promise wrapper around those two events to allow easy folding into existing Promise-based structures.

Example:

fs.createReadStream('path/to/archive.zip')

.pipe(unzipper.Parse())

.on('entry', entry => entry.autodrain())

.promise()

.then( () => console.log('done'), e => console.log('error',e));

Archives created by legacy tools usually have filenames encoded with the IBM PC (Windows OEM) character set. You can decode filenames with your preferred character set:

const il = require('iconv-lite');

fs.createReadStream('path/to/archive.zip')

.pipe(unzipper.Parse())

.on('entry', function (entry) {

// this flag will be set if the tool that created the zip follows the ZIP spec

const isUnicode = entry.props.flags.isUnicode;

// decode "non-unicode" filename from OEM Cyrillic character set

const fileName = isUnicode ? entry.path : il.decode(entry.props.pathBuffer, 'cp866');

const type = entry.type; // 'Directory' or 'File'

const size = entry.vars.uncompressedSize; // There is also compressedSize;

if (fileName === "Текстовый файл.txt") {

entry.pipe(fs.createWriteStream(fileName));

} else {

entry.autodrain();

}

});
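
Whenever an output path is derived from an entry name, as in the earlier parsing example that writes to 'output/path/' + fileName, it is worth checking that the decoded name cannot escape the destination directory (names such as ../../etc/passwd). The following is a minimal sketch of such a check using only Node's path module; safeDestination is a hypothetical helper, not something unzipper provides.

```javascript
const path = require('path');

// Hypothetical helper: resolves an entry name against the output directory
// and refuses names that would land on or outside that directory.
function safeDestination(outputDir, entryPath) {
  const root = path.resolve(outputDir);
  const target = path.resolve(root, entryPath);
  const relative = path.relative(root, target);
  const escapes =
    relative === '' ||                      // resolves to the directory itself
    relative === '..' ||                    // resolves to the parent directory
    relative.startsWith('..' + path.sep) || // climbs above the root
    path.isAbsolute(relative);              // e.g. a different drive on Windows
  if (escapes) {
    throw new Error(`Refusing to write outside ${root}: ${entryPath}`);
  }
  return target;
}

// Usage inside an 'entry' handler:
// entry.pipe(fs.createWriteStream(safeDestination('output/path', fileName)));
```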

See LICENCE

FAQs

Unzip cross-platform streaming API

The npm package unzipper receives a total of 2,915,756 weekly downloads. As such, unzipper popularity was classified as popular.

We found that unzipper demonstrated an unhealthy version release cadence and project activity because the last version was released a year ago. It has 1 open source maintainer collaborating on the project.
