agent-framework-devui

A lightweight, standalone sample app interface for running entities (agents/workflows) in the Microsoft Agent Framework. It supports directory-based discovery, in-memory entity registration, and a sample entity gallery.

[!IMPORTANT] DevUI is a sample app to help you get started with the Agent Framework. It is not intended for production use. For production, or for features beyond what is provided in this sample app, it is recommended that you build your own custom interface and API server using the Agent Framework SDK.

# Install

pip install agent-framework-devui --pre

You can also launch it programmatically:

from agent_framework import Agent
from agent_framework.openai import OpenAIChatClient
from agent_framework.devui import serve

def get_weather(location: str) -> str:
    """Get weather for a location."""
    return f"Weather in {location}: 72°F and sunny"

# Create your agent
agent = Agent(
    name="WeatherAgent",
    client=OpenAIChatClient(),
    tools=[get_weather]
)

# Launch debug UI - that's it!
serve(entities=[agent], auto_open=True)
# → Opens browser to http://localhost:8080

In addition, if you have agents/workflows defined in a specific directory structure (see below), you can launch DevUI from the CLI to discover and run them.

# Launch web UI + API server

devui ./agents --port 8080

# → Web UI: http://localhost:8080

# → API: http://localhost:8080/v1/*

When DevUI starts with no discovered entities, it displays a sample entity gallery with curated examples from the Agent Framework repository. You can download these samples, review them, and run them locally to get started quickly.

Important: Don't use async with context managers when creating agents with MCP tools for DevUI - connections will close before execution.

# ✅ Correct - DevUI handles cleanup automatically

mcp_tool = MCPStreamableHTTPTool(url="http://localhost:8011/mcp", client=client)

agent = Agent(tools=mcp_tool)

serve(entities=[agent])

MCP tools use lazy initialization and connect automatically on first use. DevUI attempts to clean up connections on shutdown.
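To make the failure mode concrete, here is a minimal offline sketch. `FakeTool` is a hypothetical stand-in written for this illustration, not the real `MCPStreamableHTTPTool`; it only mimics a connection that closes when an `async with` block exits and that otherwise connects lazily on first use.

```python
import asyncio


class FakeTool:
    """Hypothetical stand-in for an MCP tool; not the real MCPStreamableHTTPTool."""

    def __init__(self) -> None:
        self.connected = False
        self.closed = False

    async def connect(self) -> None:
        self.connected = True

    async def close(self) -> None:
        self.connected = False
        self.closed = True

    async def __aenter__(self) -> "FakeTool":
        await self.connect()
        return self

    async def __aexit__(self, *exc_info: object) -> None:
        await self.close()

    async def call(self, query: str) -> str:
        if self.closed:
            raise RuntimeError("connection already closed")
        if not self.connected:
            # Lazy initialization: connect on first use.
            await self.connect()
        return f"result for {query}"


async def build_with_context_manager() -> FakeTool:
    # The connection is closed as soon as this block exits,
    # before a server would get the chance to run the tool.
    async with FakeTool() as tool:
        pass
    return tool


async def demo() -> tuple:
    eager = await build_with_context_manager()
    try:
        eager_result = await eager.call("weather")
    except RuntimeError as err:
        eager_result = f"error: {err}"

    lazy = FakeTool()  # no context manager; connects on first call
    lazy_result = await lazy.call("weather")
    return eager_result, lazy_result


eager_result, lazy_result = asyncio.run(demo())
print(eager_result)
print(lazy_result)
```

The tool built inside `async with` is already closed by the time it is called, while the lazily connected one works.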

Register cleanup hooks to properly close credentials and resources on shutdown:

from azure.identity.aio import DefaultAzureCredential

from agent_framework import Agent

from agent_framework.openai import OpenAIChatCompletionClient

from agent_framework_devui import register_cleanup, serve

credential = DefaultAzureCredential()

client = OpenAIChatCompletionClient()

agent = Agent(name="MyAgent", client=client)

# Register cleanup hook - credential will be closed on shutdown

register_cleanup(agent, credential.close)

serve(entities=[agent])

Works with multiple resources and file-based discovery. See tests for more examples.
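As a rough mental model of what a cleanup registry does, the sketch below keeps a list of hooks per entity and runs them at shutdown, awaiting any hook that returns an awaitable (as an async `close` would). The names `register_cleanup_sketch`, `run_cleanup_sketch`, and `FakeCredential` are hypothetical and written for this illustration; this is not how `register_cleanup` is necessarily implemented.

```python
import asyncio
import inspect
from collections import defaultdict
from typing import Any, Callable

# Hypothetical registry: id(entity) -> list of cleanup callables.
_cleanup_hooks = defaultdict(list)


def register_cleanup_sketch(entity: object, *hooks: Callable[[], Any]) -> None:
    """Remember one or more hooks to run for this entity at shutdown."""
    _cleanup_hooks[id(entity)].extend(hooks)


async def run_cleanup_sketch(entity: object) -> list:
    """Run every hook for the entity; await the result when it is awaitable."""
    errors = []
    for hook in _cleanup_hooks.pop(id(entity), []):
        try:
            result = hook()
            if inspect.isawaitable(result):
                await result
        except Exception as err:  # keep going so one failure does not block the rest
            errors.append(str(err))
    return errors


class FakeCredential:
    """Stand-in for a credential with an async close() method."""

    def __init__(self) -> None:
        self.closed = False

    async def close(self) -> None:
        self.closed = True


calls = []
entity = object()
credential = FakeCredential()
register_cleanup_sketch(entity, credential.close, lambda: calls.append("sync hook"))
errors = asyncio.run(run_cleanup_sketch(entity))
```

Both the async `credential.close` and the plain synchronous hook run, and errors are collected rather than raised so that later hooks still execute.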

For your agents to be discovered by the DevUI, they must be organized in a directory structure like below. Each agent/workflow must have an __init__.py that exports the required variable (agent or workflow).

Note: .env files are optional but will be automatically loaded if present in the agent/workflow directory or parent entities directory. Use them to store API keys, configuration variables, and other environment-specific settings.

agents/
├── weather_agent/
│   ├── __init__.py      # Must export: agent = Agent(...)
│   ├── agent.py
│   └── .env             # Optional: API keys, config vars
├── my_workflow/
│   ├── __init__.py      # Must export: workflow = WorkflowBuilder(start_executor=...)...
│   ├── workflow.py
│   └── .env             # Optional: environment variables
└── .env                 # Optional: shared environment variables
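The layout above can be scanned with a few lines of standard-library code. The following is only a sketch of the idea, assuming a hypothetical `discover_entities` helper; the real logic lives in the package's discovery module and may differ (for example in how it loads `.env` files, which this sketch ignores).

```python
import importlib.util
import sys
import tempfile
from pathlib import Path


def discover_entities(root: Path) -> dict:
    """Return {directory name: (kind, object)} for packages exporting agent/workflow."""
    found = {}
    for entry in sorted(root.iterdir()):
        init_file = entry / "__init__.py"
        if not entry.is_dir() or not init_file.exists():
            continue
        spec = importlib.util.spec_from_file_location(
            f"_discovered_{entry.name}",
            init_file,
            submodule_search_locations=[str(entry)],
        )
        module = importlib.util.module_from_spec(spec)
        sys.modules[spec.name] = module  # lets relative imports inside the package resolve
        spec.loader.exec_module(module)
        for kind in ("agent", "workflow"):
            if hasattr(module, kind):
                found[entry.name] = (kind, getattr(module, kind))
                break
    return found


# Build a throwaway directory that mirrors the structure, using strings as stand-in entities.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "weather_agent").mkdir()
    (root / "weather_agent" / "__init__.py").write_text("agent = 'stand-in agent'\n")
    (root / "my_workflow").mkdir()
    (root / "my_workflow" / "__init__.py").write_text("workflow = 'stand-in workflow'\n")
    (root / "notes").mkdir()  # no __init__.py, so it is skipped
    entities = discover_entities(root)

print(entities)
```

Directories without an `__init__.py`, and packages that export neither `agent` nor `workflow`, are simply not reported.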

If your agents import tools or utilities from sibling directories (e.g., from tools.helpers import my_tool), you must set PYTHONPATH to include the parent directory:

# Project structure:
# backend/
# ├── agents/
# │   └── my_agent/
# │       └── agent.py    # contains: from tools.helpers import my_tool
# └── tools/
#     └── helpers.py

# Run from project root with PYTHONPATH
cd backend
PYTHONPATH=. devui ./agents --port 8080

Without PYTHONPATH, Python cannot find modules in sibling directories and DevUI will report an import error.
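The effect of `PYTHONPATH` can be reproduced in isolation, since its entries are added to `sys.path` at interpreter startup. This sketch builds a temporary `backend/` directory and uses a made-up package name, `demo_tools`, in place of `tools` to avoid clashing with anything installed locally.

```python
import importlib
import sys
import tempfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    backend = Path(tmp) / "backend"
    # "demo_tools" is a made-up name standing in for the tools/ directory.
    (backend / "demo_tools").mkdir(parents=True)
    (backend / "demo_tools" / "helpers.py").write_text(
        "def my_tool():\n    return 'tool ok'\n"
    )

    # Without the parent directory on sys.path, the import fails.
    try:
        importlib.import_module("demo_tools.helpers")
        found_before = True
    except ModuleNotFoundError:
        found_before = False

    # Same effect as launching with PYTHONPATH=. from backend/.
    sys.path.insert(0, str(backend))
    importlib.invalidate_caches()
    try:
        helpers = importlib.import_module("demo_tools.helpers")
        found_after = helpers.my_tool()
    finally:
        sys.path.remove(str(backend))

print(found_before, found_after)
```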

Agent Framework emits OpenTelemetry (OTel) traces for various operations. You can view these traces in DevUI by enabling instrumentation when starting the server.

devui ./agents --instrumentation

For convenience, DevUI provides an OpenAI Responses backend API, so you can run the backend and connect to it with the OpenAI client SDK. Pass the agent or workflow name as the entity_id in metadata, and set stream=True when you want streaming.

# Simple - use your entity name as the entity_id in metadata

curl -X POST http://localhost:8080/v1/responses \
  -H "Content-Type: application/json" \
  -d @- << 'EOF'
{
  "metadata": {"entity_id": "weather_agent"},
  "input": "Hello world"
}
EOF

Or use the OpenAI Python SDK:

from openai import OpenAI

client = OpenAI(
    base_url="http://localhost:8080/v1",
    api_key="not-needed"  # API key not required for local DevUI
)

response = client.responses.create(
    metadata={"entity_id": "weather_agent"},  # Your agent/workflow name
    input="What's the weather in Seattle?"
)

# Extract text from response
print(response.output[0].content[0].text)

# Supports streaming with stream=True
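When streaming, the client receives a sequence of events rather than one response object, and text arrives in `response.output_text.delta` events. The helper below is a small sketch (the name `collect_text` is an assumption for this example) that can be exercised offline with stand-in events before pointing it at a live server.

```python
from types import SimpleNamespace
from typing import Iterable


def collect_text(events: Iterable[object]) -> str:
    """Join the text deltas from a stream of Responses API events."""
    parts = []
    for event in events:
        if getattr(event, "type", None) == "response.output_text.delta":
            parts.append(event.delta)
    return "".join(parts)


# Offline stand-ins shaped like streamed events (type and delta attributes).
fake_stream = [
    SimpleNamespace(type="response.created"),
    SimpleNamespace(type="response.output_text.delta", delta="72°F "),
    SimpleNamespace(type="response.output_text.delta", delta="and sunny"),
    SimpleNamespace(type="response.completed"),
]
streamed_text = collect_text(fake_stream)
print(streamed_text)

# Against a running DevUI server, the same helper would consume the real stream:
# stream = client.responses.create(
#     metadata={"entity_id": "weather_agent"},
#     input="What's the weather in Seattle?",
#     stream=True,
# )
# print(collect_text(stream))
```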

Use the standard OpenAI conversation parameter for multi-turn conversations:

# Create a conversation
conversation = client.conversations.create(
    metadata={"agent_id": "weather_agent"}
)

# Use it across multiple turns
response1 = client.responses.create(
    metadata={"entity_id": "weather_agent"},
    input="What's the weather in Seattle?",
    conversation=conversation.id
)

response2 = client.responses.create(
    metadata={"entity_id": "weather_agent"},
    input="How about tomorrow?",
    conversation=conversation.id  # Continues the conversation!
)

How it works: DevUI automatically retrieves the conversation's message history from the stored thread and passes it to the agent. You don't need to manually manage message history - just provide the same conversation ID for follow-up requests.
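The behaviour described here amounts to keeping an ordered message list per conversation ID and replaying it on each turn. The class below is a purely illustrative in-memory sketch; `ConversationStoreSketch` and its methods are hypothetical names, and the placeholder reply stands in for a real agent call.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class ConversationStoreSketch:
    """Hypothetical in-memory store: conversation id -> ordered messages."""

    threads: dict = field(default_factory=dict)

    def create(self) -> str:
        conversation_id = f"conv_{uuid.uuid4().hex}"
        self.threads[conversation_id] = []
        return conversation_id

    def run_turn(self, conversation_id: str, user_input: str) -> list:
        history = self.threads[conversation_id]
        history.append({"role": "user", "content": user_input})
        # What the agent would receive: every stored message so far.
        agent_input = list(history)
        reply = f"(reply to: {user_input})"  # placeholder for the agent's answer
        history.append({"role": "assistant", "content": reply})
        return agent_input


store = ConversationStoreSketch()
conversation_id = store.create()
first_input = store.run_turn(conversation_id, "What's the weather in Seattle?")
second_input = store.run_turn(conversation_id, "How about tomorrow?")
print([message["role"] for message in second_input])
```

On the second turn the agent sees the first question and its answer ahead of the follow-up, which is why the caller only needs to resend the conversation ID.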

DevUI provides an OpenAI Proxy feature for testing OpenAI models directly through the interface without creating custom agents. Enable via Settings → OpenAI Proxy tab.

How it works: The UI sends requests to the DevUI backend (with X-Proxy-Backend: openai header), which then proxies them to OpenAI's Responses API (and Conversations API for multi-turn chats). This proxy approach keeps your OPENAI_API_KEY secure on the server—never exposed in the browser or client-side code.

Example:

curl -X POST http://localhost:8080/v1/responses \
  -H "X-Proxy-Backend: openai" \
  -d '{"model": "gpt-4.1-mini", "input": "Hello"}'

Note: Requires OPENAI_API_KEY environment variable configured on the backend.
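The routing decision the proxy relies on can be pictured as a simple header check. This is a sketch only: `choose_backend` is a hypothetical function, and the return labels `"openai"` and `"entity"` are invented for the example rather than taken from the DevUI source.

```python
from typing import Mapping


def choose_backend(headers: Mapping[str, str]) -> str:
    """Return 'openai' when the proxy header asks for it, else 'entity'."""
    # HTTP header names are case-insensitive, so normalise before the lookup.
    normalised = {name.lower(): value for name, value in headers.items()}
    if normalised.get("x-proxy-backend", "").strip().lower() == "openai":
        return "openai"
    return "entity"


print(choose_backend({"X-Proxy-Backend": "openai"}))
print(choose_backend({"Content-Type": "application/json"}))
```

Because the choice is made on the server, the API key is attached only after the request has left the browser.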

devui [directory] [options]

Options:
  --port, -p         Port (default: 8080)
  --host             Host (default: 127.0.0.1)
  --headless         API only, no UI
  --no-open          Don't automatically open browser
  --instrumentation  Enable OpenTelemetry instrumentation
  --reload           Enable auto-reload
  --mode             developer|user (default: developer)
  --auth             Enable Bearer token authentication
  --auth-token       Custom authentication token

# Development

devui ./agents

# Production (user-facing)

devui ./agents --mode user --auth
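For readers who want to see how these options and defaults combine, here is an `argparse` sketch that mirrors the option list. It is not the package's actual CLI code; in particular, the `None` default for the directory is an assumption.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    """Mirror the documented options; defaults follow the list above."""
    parser = argparse.ArgumentParser(prog="devui")
    parser.add_argument("directory", nargs="?", default=None)  # default is an assumption
    parser.add_argument("--port", "-p", type=int, default=8080)
    parser.add_argument("--host", default="127.0.0.1")
    parser.add_argument("--headless", action="store_true")
    parser.add_argument("--no-open", action="store_true")
    parser.add_argument("--instrumentation", action="store_true")
    parser.add_argument("--reload", action="store_true")
    parser.add_argument("--mode", choices=["developer", "user"], default="developer")
    parser.add_argument("--auth", action="store_true")
    parser.add_argument("--auth-token", default=None)
    return parser


args = build_parser().parse_args(["./agents", "--mode", "user", "--auth"])
print(args.directory, args.mode, args.auth, args.host, args.port)
```

Note that the second invocation above leaves the host at 127.0.0.1, so the server stays bound to localhost unless `--host` is passed explicitly.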

Given that DevUI offers an OpenAI Responses API, it internally maps messages and events from Agent Framework to OpenAI Responses API events (in _mapper.py). For transparency, this mapping is shown below:

| OpenAI Event/Type | Agent Framework Content | Status |
|---|---|---|
| Lifecycle Events | | |
| response.created + response.in_progress | AgentStartedEvent | OpenAI |
| response.completed | AgentCompletedEvent | OpenAI |
| response.failed | AgentFailedEvent | OpenAI |
| response.created + response.in_progress | WorkflowEvent (type='started') | OpenAI |
| response.completed | WorkflowEvent (type='status') | OpenAI |
| response.failed | WorkflowEvent (type='failed') | OpenAI |
| Content Types | | |
| response.content_part.added + response.output_text.delta | TextContent | OpenAI |
| response.reasoning_text.delta | TextReasoningContent | OpenAI |
| response.output_item.added | FunctionCallContent (initial) | OpenAI |
| response.function_call_arguments.delta | FunctionCallContent (args) | OpenAI |
| response.function_result.complete | FunctionResultContent | DevUI |
| response.function_approval.requested | FunctionApprovalRequestContent | DevUI |
| response.function_approval.responded | FunctionApprovalResponseContent | DevUI |
| response.output_item.added (ResponseOutputImage) | DataContent (images) | DevUI |
| response.output_item.added (ResponseOutputFile) | DataContent (files) | DevUI |
| response.output_item.added (ResponseOutputData) | DataContent (other) | DevUI |
| response.output_item.added (ResponseOutputImage/File) | UriContent (images/files) | DevUI |
| error | ErrorContent | OpenAI |
| Final Response.usage field (not streamed) | UsageContent | OpenAI |
| Workflow Events | | |
| response.output_item.added (ExecutorActionItem)* | WorkflowEvent (type='executor_invoked') | OpenAI |
| response.output_item.done (ExecutorActionItem)* | WorkflowEvent (type='executor_completed') | OpenAI |
| response.output_item.done (ExecutorActionItem with error)* | WorkflowEvent (type='executor_failed') | OpenAI |
| response.output_item.added (ResponseOutputMessage) | WorkflowEvent (type='output') | OpenAI |
| response.workflow_event.complete | WorkflowEvent (other types) | DevUI |
| response.trace.complete | WorkflowEvent (type='status') | DevUI |
| response.trace.complete | WorkflowEvent (type='warning') | DevUI |
| Trace Content | | |
| response.trace.complete | DataContent (no data/errors) | DevUI |
| response.trace.complete | UriContent (unsupported MIME) | DevUI |
| response.trace.complete | HostedFileContent | DevUI |
| response.trace.complete | HostedVectorStoreContent | DevUI |

*Uses standard OpenAI event structure but carries DevUI-specific ExecutorActionItem payload
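One way to picture a mapper like this is a lookup keyed on the content class name. The sketch below copies a handful of unambiguous rows from the table into a dictionary; the helper names and the stand-in classes are hypothetical, and the real `_mapper.py` is likely more involved (several rows depend on the payload, not just the type).

```python
from typing import Optional

# Content type name -> (event type, origin), copied from rows of the table.
CONTENT_EVENT_MAP = {
    "TextReasoningContent": ("response.reasoning_text.delta", "OpenAI"),
    "FunctionResultContent": ("response.function_result.complete", "DevUI"),
    "FunctionApprovalRequestContent": ("response.function_approval.requested", "DevUI"),
    "FunctionApprovalResponseContent": ("response.function_approval.responded", "DevUI"),
    "ErrorContent": ("error", "OpenAI"),
    "HostedFileContent": ("response.trace.complete", "DevUI"),
    "HostedVectorStoreContent": ("response.trace.complete", "DevUI"),
}


def event_type_for(content: object) -> Optional[str]:
    """Look up the event type by the content object's class name."""
    entry = CONTENT_EVENT_MAP.get(type(content).__name__)
    return entry[0] if entry else None


def devui_only_event_types() -> set:
    """Event types a standard OpenAI client would not recognise."""
    return {event for event, origin in CONTENT_EVENT_MAP.values() if origin == "DevUI"}


class ErrorContent:  # stand-in class, only the name matters here
    pass


class FunctionResultContent:  # stand-in class, only the name matters here
    pass


mapped_error = event_type_for(ErrorContent())
mapped_result = event_type_for(FunctionResultContent())
unmapped = event_type_for(object())
print(mapped_error, mapped_result, unmapped)
```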

DevUI follows the OpenAI Responses API specification for maximum compatibility:

OpenAI Standard Event Types Used:

- ResponseOutputItemAddedEvent - Output item notifications (function calls, images, files, data)
- ResponseOutputItemDoneEvent - Output item completion notifications
- Response.usage - Token usage (in final response, not streamed)

Custom DevUI Extensions:

- response.output_item.added with custom item types:
  - ResponseOutputImage - Agent-generated images (inline display)
  - ResponseOutputFile - Agent-generated files (inline display)
  - ResponseOutputData - Agent-generated structured data (inline display)
- response.function_approval.requested - Function approval requests (for interactive approval workflows)
- response.function_approval.responded - Function approval responses (user approval/rejection)
- response.function_result.complete - Server-side function execution results
- response.workflow_event.complete - Agent Framework workflow events
- response.trace.complete - Execution traces and internal content (DataContent, UriContent, hosted files/stores)

These custom extensions are clearly namespaced and can be safely ignored by standard OpenAI clients. Note that DevUI also uses standard OpenAI events with custom payloads (e.g., ExecutorActionItem within response.output_item.added).

- GET /v1/entities - List discovered agents/workflows
- GET /v1/entities/{entity_id}/info - Get detailed entity information
- POST /v1/entities/{entity_id}/reload - Hot reload entity (for development)
- POST /v1/responses - Execute agent/workflow (streaming or sync)
- POST /v1/conversations - Create conversation
- GET /v1/conversations/{id} - Get conversation
- POST /v1/conversations/{id} - Update conversation metadata
- DELETE /v1/conversations/{id} - Delete conversation
- GET /v1/conversations?agent_id={id} - List conversations (DevUI extension)
- POST /v1/conversations/{id}/items - Add items to conversation
- GET /v1/conversations/{id}/items - List conversation items
- GET /v1/conversations/{id}/items/{item_id} - Get conversation item
- GET /health - Health check

DevUI is designed as a sample application for local development and should not be exposed to untrusted networks without proper authentication.

For production deployments:

# User mode with authentication (recommended)

devui ./agents --mode user --auth --host 0.0.0.0

This restricts developer APIs (reload, deployment, entity details) and requires Bearer token authentication.
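On the client side, Bearer authentication conventionally means sending an `Authorization: Bearer <token>` header with each request. The sketch below builds such a request with the standard library without sending it; the helper name and the token value are placeholders, and it assumes DevUI reads the token from that standard header.

```python
import json
import urllib.request


def build_responses_request(
    base_url: str, token: str, entity_id: str, text: str
) -> urllib.request.Request:
    """Build (but do not send) an authenticated POST to /v1/responses."""
    body = json.dumps({"metadata": {"entity_id": entity_id}, "input": text})
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/v1/responses",
        data=body.encode("utf-8"),
        method="POST",
        headers={
            "Content-Type": "application/json",
            # Assumption: the server expects the standard Bearer scheme here.
            "Authorization": f"Bearer {token}",
        },
    )


request = build_responses_request(
    "http://localhost:8080", "example-token", "weather_agent", "Hello world"
)
print(request.full_url, request.get_method())

# To send it against a running server:
# with urllib.request.urlopen(request) as reply:
#     print(json.load(reply))
```

The OpenAI Python SDK sends its `api_key` value in the same header, so passing the token as `api_key` when constructing the client should have an equivalent effect under the same assumption.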

Security features:

- Bearer token authentication via --auth

Best practices:

- Use --mode user --auth for any deployment exposed to end users
- Use .env files for sensitive credentials (never commit them)

The implementation is split across these modules:

- agent_framework_devui/_discovery.py
- agent_framework_devui/_executor.py
- agent_framework_devui/_mapper.py
- agent_framework_devui/_conversations.py
- agent_framework_devui/_server.py
- agent_framework_devui/_cli.py

See working implementations in python/samples/02-agents/devui/

MIT

FAQs

Debug UI for Microsoft Agent Framework with OpenAI-compatible API server.

We found that agent-framework-devui demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 4 open source maintainers collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Research

/Security News

Compromised intercom-client@7.0.4 npm package is tied to the ongoing Mini Shai-Hulud worm attack targeting developer and CI/CD secrets.

Research

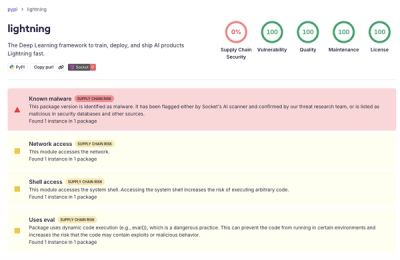

Socket detected a malicious supply chain attack on PyPI package lightning versions 2.6.2 and 2.6.3, which execute credential-stealing malware on import.

Research

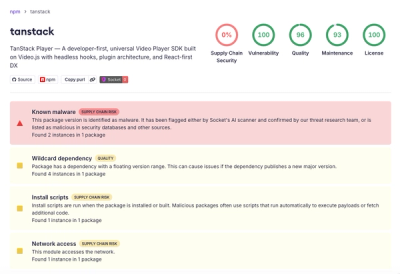

A brand-squatted TanStack npm package used postinstall scripts to steal .env files and exfiltrate developer secrets to an attacker-controlled endpoint.