Research

/Security News

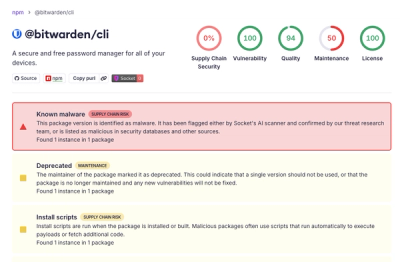

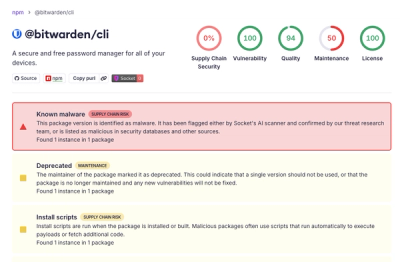

Bitwarden CLI Compromised in Ongoing Checkmarx Supply Chain Campaign

Bitwarden CLI 2026.4.0 was compromised in the Checkmarx supply chain campaign after attackers abused a GitHub Action in Bitwarden’s CI/CD pipeline.

charset-normalizer


The Real First Universal Charset Detector. Open, modern and actively maintained alternative to Chardet.

The Real First Universal Charset Detector

In other languages (unofficial ports by the community)

A library that helps you read text from an unknown charset encoding.

Motivated by chardet, I'm trying to resolve the issue by taking a new approach. All IANA character set names for which the Python core library provides codecs are supported. You can also register your own set of codecs, and yes, it would work as-is.

>>>>> 👉 Try Me Online Now, Then Adopt Me 👈 <<<<<

This project offers you an alternative to Universal Charset Encoding Detector, also known as Chardet.

| Feature | Chardet | Charset Normalizer | cChardet |
|---|---|---|---|
| Fast | ✅ | ✅ | ✅ |
| Universal[^1] | ❌ | ✅ | ❌ |
| Reliable without distinguishable standards | ✅ | ✅ | ✅ |
| Reliable with distinguishable standards | ✅ | ✅ | ✅ |
| License | Disputed[^2] (restrictive) | MIT | MPL-1.1 (restrictive) |
| Native Python | ✅ | ✅ | ❌ |
| Detect spoken language | ✅ | ✅ | N/A |
| UnicodeDecodeError Safety | ✅ | ✅ | ❌ |
| Whl Size (min) | 500 kB | 150 kB | ~200 kB |
| Supported Encoding | 99 | 99 | 40 |
| Can register custom encoding | ❌ | ✅ | ❌ |

This package offers better performance (99th and 95th percentiles) than Chardet. Here are some numbers.

| Package | Accuracy | Mean per file (ms) | File per sec (est) |
|---|---|---|---|
| chardet 7.1 | 89 % | 3 ms | 333 file/sec |
| charset-normalizer | 97 % | 3 ms | 333 file/sec |

| Package | 99th percentile | 95th percentile | 50th percentile |
|---|---|---|---|
| chardet 7.1 | 32 ms | 17 ms | < 1 ms |
| charset-normalizer | 16 ms | 10 ms | 1 ms |

Updated as of March 2026 using CPython 3.12, Charset-Normalizer 3.4.6, and Chardet 7.1.0.

Chardet's performance on larger files (1 MB+) used to be very poor, with a huge difference on large payloads. This is no longer the case since Chardet 7.0+.

Stats are generated using 400+ files with default parameters. For more details on the files used, see the GHA workflows. And yes, these results might change at any time, since the dataset can be updated to include more files. The actual delays depend heavily on your CPU capabilities, but the factors should remain the same. Chardet claims in its documentation to have greater accuracy than us, based on the dataset they trained Chardet on. Well, that's normal; the opposite would have been worrying. Charset-normalizer, by contrast, doesn't train on anything: our solution is based on a completely different algorithm, still heuristic though, and it does not need weights across every encoding table.
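For readers who want to reproduce this kind of summary on their own files, here is a minimal sketch of how a mean, an estimated files-per-second rate, and the 50th/95th/99th percentiles can be derived from per-file timings. The `summarize` helper and the sample timings are hypothetical and are not taken from the project's benchmark scripts.

```python
import statistics


def summarize(timings_ms):
    """Return mean, estimated files/sec and the 50th/95th/99th percentiles."""
    mean_ms = statistics.fmean(timings_ms)
    # quantiles(n=100) returns 99 cut points; index k-1 is the k-th percentile.
    cuts = statistics.quantiles(timings_ms, n=100, method="inclusive")
    return {
        "mean_ms": mean_ms,
        "files_per_sec": 1000.0 / mean_ms,  # e.g. 3 ms per file is about 333 files/sec
        "p50_ms": cuts[49],
        "p95_ms": cuts[94],
        "p99_ms": cuts[98],
    }


# Hypothetical timings in milliseconds, NOT the project's measurements.
sample = [1.0, 1.0, 2.0, 2.0, 3.0, 3.0, 4.0, 8.0]
stats = summarize(sample)
print(stats)
```

A single slow file barely moves the median but dominates the 99th percentile, which is why the tables report both.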

Using pip:

```sh
pip install charset-normalizer -U
```

This package comes with a CLI.

```
usage: normalizer [-h] [-v] [-a] [-n] [-m] [-r] [-f] [-t THRESHOLD]
                  file [file ...]

The Real First Universal Charset Detector. Discover originating encoding used
on text file. Normalize text to unicode.

positional arguments:
  files                 File(s) to be analysed

optional arguments:
  -h, --help            show this help message and exit
  -v, --verbose         Display complementary information about file if any.
                        Stdout will contain logs about the detection process.
  -a, --with-alternative
                        Output complementary possibilities if any. Top-level
                        JSON WILL be a list.
  -n, --normalize       Permit to normalize input file. If not set, program
                        does not write anything.
  -m, --minimal         Only output the charset detected to STDOUT. Disabling
                        JSON output.
  -r, --replace         Replace file when trying to normalize it instead of
                        creating a new one.
  -f, --force           Replace file without asking if you are sure, use this
                        flag with caution.
  -t THRESHOLD, --threshold THRESHOLD
                        Define a custom maximum amount of chaos allowed in
                        decoded content. 0. <= chaos <= 1.
  --version             Show version information and exit.
```

```sh
normalizer ./data/sample.1.fr.srt
```

or

```sh
python -m charset_normalizer ./data/sample.1.fr.srt
```

🎉 Since version 1.4.0 the CLI produces an easily usable result on stdout in JSON format.

```json
{
    "path": "/home/default/projects/charset_normalizer/data/sample.1.fr.srt",
    "encoding": "cp1252",
    "encoding_aliases": [
        "1252",
        "windows_1252"
    ],
    "alternative_encodings": [
        "cp1254",
        "cp1256",
        "cp1258",
        "iso8859_14",
        "iso8859_15",
        "iso8859_16",
        "iso8859_3",
        "iso8859_9",
        "latin_1",
        "mbcs"
    ],
    "language": "French",
    "alphabets": [
        "Basic Latin",
        "Latin-1 Supplement"
    ],
    "has_sig_or_bom": false,
    "chaos": 0.149,
    "coherence": 97.152,
    "unicode_path": null,
    "is_preferred": true
}
```
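Because the report is plain JSON, it can be consumed from a script. The sketch below parses a trimmed copy of the sample output above and picks the preferred match; `summarize_report` is a hypothetical helper, and the list handling relies only on the `--with-alternative` help text stating that the top-level JSON becomes a list.

```python
import json

# Trimmed copy of the sample CLI output shown above.
cli_output = """
{
    "path": "/home/default/projects/charset_normalizer/data/sample.1.fr.srt",
    "encoding": "cp1252",
    "alternative_encodings": ["cp1254", "latin_1"],
    "language": "French",
    "has_sig_or_bom": false,
    "chaos": 0.149,
    "coherence": 97.152,
    "unicode_path": null,
    "is_preferred": true
}
"""


def summarize_report(raw):
    """Return (encoding, language, chaos) for the preferred match in a report."""
    report = json.loads(raw)
    # With -a / --with-alternative the top-level JSON is a list of reports.
    reports = report if isinstance(report, list) else [report]
    preferred = [r for r in reports if r.get("is_preferred")]
    chosen = preferred[0] if preferred else reports[0]
    return chosen["encoding"], chosen["language"], chosen["chaos"]


encoding, language, chaos = summarize_report(cli_output)
print(encoding, language, chaos)
```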

Just print out normalized text

```python
from charset_normalizer import from_path

results = from_path('./my_subtitle.srt')

print(str(results.best()))
```

Upgrade your code without effort

```python
from charset_normalizer import detect
```

The above code will behave the same as chardet. We ensure that we offer the best (reasonable) backward-compatible result possible.

See the docs for advanced usage: readthedocs.io
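As a small illustration of the drop-in usage, the sketch below calls `detect` on a UTF-8 payload and reads the chardet-style result keys (`encoding`, `language`, `confidence`). The `detect_or_none` wrapper is a hypothetical helper added only so the snippet still runs where the package is not installed.

```python
import importlib.util


def detect_or_none(payload: bytes):
    """Call the chardet-compatible detect() if charset-normalizer is installed."""
    if importlib.util.find_spec("charset_normalizer") is None:
        return None  # package not available in this environment
    from charset_normalizer import detect

    return detect(payload)


result = detect_or_none("Bonjour, où êtes-vous allé hier soir ?".encode("utf-8"))
if result is not None:
    # Same dictionary shape as chardet's detect().
    print(result["encoding"], result["language"], result["confidence"])
```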

When I started using Chardet, I noticed that it was not suited to my expectations, and I wanted to propose a reliable alternative using a completely different method. Also! I never back down from a good challenge!

I don't care about the originating charset encoding, because two different tables can produce two identical rendered strings. What I want is to get readable text, the best I can.
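A quick way to see this with the standard library alone: the same bytes decode to the same string under `cp1252` and `latin_1` as long as no byte falls in the range where the two tables differ. The sample sentence is only an illustration.

```python
raw = "Le café est prêt.".encode("cp1252")

# Both tables map 0xE9 to "é" and 0xEA to "ê", so the rendered text is identical.
as_cp1252 = raw.decode("cp1252")
as_latin_1 = raw.decode("latin_1")
same = as_cp1252 == as_latin_1

# A byte in 0x80-0x9F tells them apart: 0x80 is the euro sign in cp1252,
# but a control character in latin_1.
differs = b"\x80".decode("cp1252") != b"\x80".decode("latin_1")

print(same, differs)
```

This is consistent with the sample JSON report, where `latin_1` is listed among the alternative encodings for a `cp1252` match.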

In a way, I'm brute forcing text decoding. How cool is that ? 😎
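To make the brute-force idea concrete, here is a deliberately simplified sketch: try a handful of candidate encodings and keep only those that decode the payload without error. It is an assumption-laden illustration, not the library's implementation; the candidate list and the `decodable_candidates` name are hypothetical, and the real detector goes on to rank survivors by noise and coherence.

```python
def decodable_candidates(payload, candidates=("ascii", "utf_8", "cp1252", "latin_1")):
    """Return {encoding: decoded_text} for every candidate that decodes cleanly."""
    survivors = {}
    for name in candidates:
        try:
            survivors[name] = payload.decode(name)
        except UnicodeDecodeError:
            continue  # this table cannot represent the payload, discard it
    return survivors


payload = "Où est passé l'été ?".encode("cp1252")
survivors = decodable_candidates(payload)
print(sorted(survivors))
```

Decoding successfully is necessary but not sufficient: several tables usually survive this first filter, which is why a further measure of how messy or coherent the decoded text looks is needed.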

Don't confuse the ftfy package with charset-normalizer or chardet. ftfy's goal is to repair Unicode strings, whereas charset-normalizer's goal is to convert a raw file in an unknown encoding to Unicode.

Wait a minute, what is noise/mess and coherence according to YOU ?

Noise: I opened hundreds of text files, written by humans, with the wrong encoding table. I observed, then I established some ground rules about what is obvious when it seems like a mess (aka defining noise in rendered text). I know that my interpretation of what counts as noise is probably incomplete; feel free to contribute in order to improve or rewrite it.
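As a toy illustration of what such ground rules could look like, the sketch below scores text by the share of characters that look out of place in prose. The three rules and the `mess_ratio` name are hypothetical assumptions made for this example; the project's actual rules are different and more numerous.

```python
import unicodedata


def mess_ratio(text):
    """Fraction of characters that look suspicious (toy heuristic, 0.0 to 1.0)."""
    if not text:
        return 0.0
    suspicious = 0
    previous = ""
    for char in text:
        category = unicodedata.category(char)
        if category.startswith("C") and char not in "\n\r\t":
            suspicious += 1  # control, unassigned or private-use character
        elif category.startswith("S"):
            suspicious += 1  # symbol in the middle of prose
        elif char.isupper() and previous.islower():
            suspicious += 1  # upper-case letter right after a lower-case one
        previous = char
    return suspicious / len(text)


clean = "très bien"
garbled = clean.encode("utf_8").decode("cp1252")  # UTF-8 bytes read as cp1252

print(mess_ratio(clean), mess_ratio(garbled))
```

A ratio like this is the kind of value the `-t THRESHOLD` option bounds: candidates whose decoded text is messier than the allowed maximum are rejected.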

Coherence: For each language there is on earth, we have computed ranked letter appearance occurrences (the best we can). I thought that this intel is worth something here, so I use those records against decoded text to check if I can detect intelligent design.
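The idea of checking decoded text against ranked letter frequencies can be sketched as follows. This is an assumption for illustration only: the short `ENGLISH_RANKED` string and the overlap formula are hypothetical and much cruder than the per-language records the project ships.

```python
from collections import Counter

# Hypothetical reference ranking, most frequent letters first.
ENGLISH_RANKED = "etaoinshrdlu"


def coherence_ratio(text, reference=ENGLISH_RANKED, top_n=8):
    """Share of the text's top_n most frequent letters found in the reference top_n."""
    letters = [c for c in text.lower() if c.isalpha()]
    if not letters:
        return 0.0
    observed = [c for c, _ in Counter(letters).most_common(top_n)]
    expected = set(reference[:top_n])
    return sum(1 for c in observed if c in expected) / top_n


english = "This is a short sentence written in the English language to test the heuristic."
junk = "zzzz qqqq xxxx jjjj kkkk"

print(coherence_ratio(english), coherence_ratio(junk))
```

Text decoded with the right table tends to score high for some language, while text decoded with the wrong table tends to score low for all of them.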

If you are running:

Upgrade your Python interpreter as soon as possible.

Contributions, issues and feature requests are very much welcome.

Feel free to check the issues page if you want to contribute.

Copyright © Ahmed TAHRI @Ousret.

This project is MIT licensed.

Characters frequencies used in this project © 2012 Denny Vrandečić

Professional support for charset-normalizer is available as part of the Tidelift Subscription. Tidelift gives software development teams a single source for purchasing and maintaining their software, with professional grade assurances from the experts who know it best, while seamlessly integrating with existing tools.

All notable changes to charset-normalizer will be documented in this file. This project adheres to Semantic Versioning. The format is based on Keep a Changelog.

- setuptools constraint to setuptools>=68,<82.1.
- charset_normalizer.md for higher performance. Removed eligible(..) and feed(...) in favor of feed_info(...).
- UNICODE_RANGES_COMBINED using Unicode blocks v17.
- --normalize writing to wrong path when passing multiple files in. (#702)
- setuptools constraint to setuptools>=68,<=82.
- query_yes_no function (inside CLI) to avoid using ambiguous licensed code.
- cd.py submodule into mypyc optional compilation to reduce further the performance impact.
- setuptools to a specific constraint setuptools>=68,<=81.
- setuptools-scm as a build dependency.
- dev-requirements.txt and created ci-requirements.txt for security purposes.
- multiple.intoto.jsonl in GitHub releases in addition to individual attestation file per wheel.
- CHARSET_NORMALIZER_USE_MYPYC isn't set to 1. (#595) (#583)
- detect output legacy function. (#391)
- argparse.FileType by backporting the target class into the package. (#591)
- pyproject.toml instead of setup.cfg using setuptools as the build backend.
- build-requirements.txt as per using pyproject.toml native build configuration.
- bin/integration.py and bin/serve.py in favor of downstream integration test (see noxfile).
- setup.cfg in favor of pyproject.toml metadata configuration.
- utils.range_scan function.
- utf_8 instead of preferred utf-8. (#572)
- --no-preemptive in the CLI to prevent the detector to search for hints.
- python -m charset_normalizer.cli or python -m charset_normalizer
- encoding.aliases as they have no alias (#323)
- from_path no longer enforce PathLike as its first argument
- is_binary that relies on main capabilities, and optimized to detect binaries
- enable_fallback argument throughout from_bytes, from_path, and from_fp that allow a deeper control over the detection (default True)
- should_rename_legacy for legacy function detect and disregard any new arguments without errors (PR #262)
- language_threshold in from_bytes, from_path and from_fp to adjust the minimum expected coherence ratio
- normalizer --version now specify if current version provide extra speedup (meaning mypyc compilation whl)
- md.py can be compiled using Mypyc to provide an extra speedup up to 4x faster than v2.1
- first() and best() from CharsetMatch
- normalize
- chaos_secondary_pass, coherence_non_latin and w_counter from CharsetMatch
- unicodedata2
- language_threshold in from_bytes, from_path and from_fp to adjust the minimum expected coherence ratio
- normalizer --version now specify if current version provide extra speedup (meaning mypyc compilation whl)
- first() and best() from CharsetMatch
- md.py can be compiled using Mypyc to provide an extra speedup up to 4x faster than v2.1
- normalize
- chaos_secondary_pass, coherence_non_latin and w_counter from CharsetMatch
- unicodedata2
- normalize scheduled for removal in 3.0
- --version (PR #194)
- unicodedata2 as Python is quickly catching up, scheduled for removal in 3.0 (PR #194)
- explain to True (PR #146)
- NullHandler by default from @nmaynes (PR #135)
- explain to True will add provisionally (bounded to function lifespan) a specific stream handler (PR #135)
- set_logging_handler to configure a specific StreamHandler from @nmaynes (PR #135)
- CHANGELOG.md entries, format is based on Keep a Changelog (PR #141)
- alphabets property. (PR #39)

MIT License

Copyright (c) 2025 TAHRI Ahmed R.

Permission is hereby granted, free of charge, to any person obtaining a copy of this software and associated documentation files (the "Software"), to deal in the Software without restriction, including without limitation the rights to use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies of the Software, and to permit persons to whom the Software is furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.

[^1]: They clearly use encoding-specific code, even if it covers most of the commonly used encodings.

[^2]: Chardet 7.0+ was relicensed from LGPL-2.1 to MIT following an AI-assisted rewrite. This relicensing is disputed on two independent grounds: (a) the original author contests that the maintainer had the right to relicense, arguing the rewrite is a derivative work of the LGPL-licensed codebase since it was not a clean room implementation; (b) the copyright claim itself is questionable given the code was primarily generated by an LLM, and AI-generated output may not be copyrightable under most jurisdictions. Either issue alone could undermine the MIT license. Beyond licensing, the rewrite raises questions about responsible use of AI in open source: key architectural ideas pioneered by charset-normalizer - notably decode-first validity filtering (our foundational approach since v1) and encoding pairwise similarity with the same algorithm and threshold - surfaced in chardet 7 without acknowledgment. The project also imported test files from charset-normalizer to train and benchmark against it, then claimed superior accuracy on those very files. Charset-normalizer has always been MIT-licensed, encoding-agnostic by design, and built on a verifiable human-authored history.

FAQs


We found that charset-normalizer demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 1 open source maintainer collaborating on the project.

Did you know?

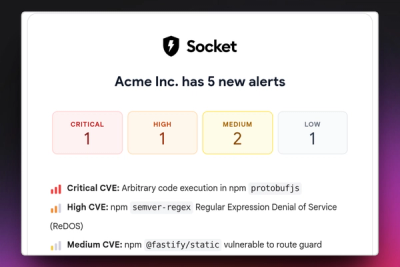

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Research

/Security News

Bitwarden CLI 2026.4.0 was compromised in the Checkmarx supply chain campaign after attackers abused a GitHub Action in Bitwarden’s CI/CD pipeline.

Research

/Security News

Docker and Socket have uncovered malicious Checkmarx KICS images and suspicious code extension releases in a broader supply chain compromise.

Product

Stay on top of alert changes with filtered subscriptions, batched summaries, and notification routing built for triage.