Research

/Security News

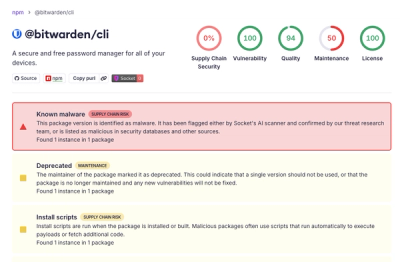

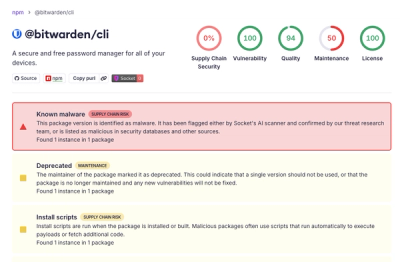

Bitwarden CLI Compromised in Ongoing Checkmarx Supply Chain Campaign

Bitwarden CLI 2026.4.0 was compromised in the Checkmarx supply chain campaign after attackers abused a GitHub Action in Bitwarden’s CI/CD pipeline.

cuga

Advanced tools

CUGA is an open-source generalist agent for the enterprise, supporting complex task execution on web and APIs, OpenAPI/MCP integrations, composable architecture, reasoning modes, and policy-aware features.

Building a domain-specific enterprise agent from scratch is complex and requires significant effort: agent and tool orchestration, planning logic, safety and alignment policies, evaluation for performance/cost tradeoffs and ongoing improvements. CUGA is a state-of-the-art generalist agent designed with enterprise needs in mind, so you can focus on configuring your domain tools, policies and workflow.

🎉 NEW: CUGA Enterprise SDK with Policy System — Build production-ready AI agents with enterprise-grade governance. Programmatically configure safety guards, workflow controls, and compliance policies via Python SDK or visual UI. Ensure consistent, secure, and compliant agent behavior across your organization.

Policy Types & Enterprise Value:

| Policy Type | Value | Use Cases |
|---|---|---|
| Intent Guard | Block unauthorized actions | Data deletion prevention, access restrictions, compliance enforcement |
| Playbook | Standardize workflows | Onboarding, audit workflows, regulatory compliance |
| Tool Approval | Human oversight | Financial transactions, data modifications |
| Tool Guide | Domain knowledge | Compliance notes, domain context |
| Output Formatter | Format, redirect, govern outputs | Report generation, response routing, output masking |

📚 Documentation: SDK Guide | Policies Guide | Quick Start →

CUGA achieves state-of-the-art performance on leading benchmarks:

High-performing generalist agent — Benchmarked on complex web and API tasks. Combines best-of-breed agentic patterns (e.g. planner-executor, code-act) with structured planning and smart variable management to prevent hallucination and handle complexity

Configurable reasoning modes — Balance performance and cost/latency with flexible modes ranging from fast heuristics to deep planning, optimizing for your specific task requirements

Flexible agent and tool integration — Seamlessly integrate tools via OpenAPI specs, MCP servers, and Langchain, enabling rapid connection to REST APIs, custom protocols, and Python functions

Integrates with Langflow — Low-code visual build experience for designing and deploying agent workflows without extensive coding

Open-source and composable — Built with modularity in mind, CUGA itself can be exposed as a tool to other agents, enabling nested reasoning and multi-agent collaboration. Evolving toward enterprise-grade reliability

Policy System — Configure agent behavior with 5 policy types (Intent Guard, Playbook, Tool Approval, Tool Guide, Output Formatter) via the Python SDK or standalone UI in demo mode. Includes human-in-the-loop approval gates for safe agent behavior in enterprise contexts. See SDK Docs and Policies Guide

Save-and-reuse capabilities (Experimental) — Capture and reuse successful execution paths (plans, code, and trajectories) for faster and consistent behavior across repeated tasks

Explore the Roadmap to see what's ahead, or join the 🤝 Call for the Community to get involved.

Watch CUGA seamlessly combine web and API operations in a single workflow:

Example Task: get top account by revenue from digital sales, then add it to current page

https://github.com/user-attachments/assets/0cef8264-8d50-46d9-871a-ab3cefe1dde5

Experience CUGA's hybrid capabilities by combining API calls with web interactions:

Switch to hybrid mode:

# Edit ./src/cuga/settings.toml and change:

mode = 'hybrid' # under [advanced_features] section

Install browser API support:

# playwright installer should already be included after installing with Quick Start

playwright install chromium

Start the demo:

cuga start demo

Enable the browser extension:

Open the test application:

Try the hybrid task:

get top account by revenue from digital sales then add it to current page

🎯 What you'll see: CUGA will fetch data from the Digital Sales API and then interact with the web page to add the account information directly to the current page - demonstrating seamless API-to-web workflow integration!

Watch CUGA pause for human approval during critical decision points:

Example Task: get best accounts

https://github.com/user-attachments/assets/d103c299-3280-495a-ba66-373e72554e78

Experience CUGA's Human-in-the-Loop capabilities where the agent pauses for human approval at key decision points:

Enable HITL mode:

# Edit ./src/cuga/settings.toml and ensure:

api_planner_hitl = true # under [advanced_features] section

Start the demo:

cuga start demo

Try the HITL task:

get best accounts

🎯 What you'll see: CUGA will pause at critical decision points, showing you the planned actions and waiting for your approval before proceeding.
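The pause-and-approve behavior described above can be pictured with a small sketch. This is not CUGA's implementation: `run_with_approval`, `approve`, and `execute` are hypothetical names used only to illustrate the idea of showing a plan to a reviewer and running it only after approval.

```python
from typing import Callable


def run_with_approval(
    plan: list[str],
    approve: Callable[[list[str]], bool],
    execute: Callable[[str], str],
) -> list[str]:
    """Show the whole plan to a reviewer and execute it only if approved."""
    if not approve(plan):
        # The reviewer rejected the plan, so no step runs.
        return []
    return [execute(step) for step in plan]


# Hypothetical plan and callbacks, for illustration only.
plan = ["fetch accounts", "rank by revenue"]
results = run_with_approval(
    plan,
    approve=lambda steps: len(steps) <= 5,   # stand-in for a human decision
    execute=lambda step: f"done: {step}",
)
```

In a real deployment the `approve` callback would be backed by a UI prompt rather than a lambda.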

# In terminal, clone the repository and navigate into it

git clone https://github.com/cuga-project/cuga-agent.git

cd cuga-agent

# 1. Create and activate virtual environment

uv venv --python=3.12 && source .venv/bin/activate

# 2. Install dependencies

uv sync

# 3. Set up environment variables

# Create .env file with your API keys

echo "OPENAI_API_KEY=your-openai-api-key-here" > .env

# 4. Start the demo

cuga start demo_crm --read-only

# Chrome will open automatically at https://localhost:7860

# then try sending your task to CUGA: 'from contacts.txt show me which users belong to the crm system'

# 5. View agent trajectories (optional)

cuga viz

# This launches a web-based dashboard for visualizing and analyzing

# agent execution trajectories, decision-making, and tool usage

Refer to: .env.example for detailed examples.

CUGA supports multiple LLM providers with flexible configuration options. You can configure models through TOML files or override specific settings using environment variables.

Setup Instructions:

.env file:

# OpenAI Configuration

OPENAI_API_KEY=sk-...your-key-here...

AGENT_SETTING_CONFIG="settings.openai.toml"

# Optional overrides

MODEL_NAME=gpt-4o # Override model name

OPENAI_BASE_URL=https://api.openai.com/v1 # Override base URL

OPENAI_API_VERSION=2024-08-06 # Override API version

Default Values:

gpt-4o

Setup Instructions:

Access IBM WatsonX

Create a project and get your credentials:

Add to your .env file:

# WatsonX Configuration

WATSONX_API_KEY=your-watsonx-api-key

WATSONX_PROJECT_ID=your-project-id

WATSONX_URL=https://us-south.ml.cloud.ibm.com # or your region

AGENT_SETTING_CONFIG="settings.watsonx.toml"

# Optional override

MODEL_NAME=meta-llama/llama-4-maverick-17b-128e-instruct-fp8 # Override model for all agents

Default Values:

meta-llama/llama-4-maverick-17b-128e-instruct-fp8

Setup Instructions:

.env file:

AGENT_SETTING_CONFIG="settings.azure.toml" # Default config uses ETE

AZURE_OPENAI_API_KEY="<your azure apikey>"

AZURE_OPENAI_ENDPOINT="<your azure endpoint>"

OPENAI_API_VERSION="2024-08-01-preview"

CUGA supports LiteLLM through the OpenAI configuration by overriding the base URL:

Add to your .env file:

# LiteLLM Configuration (using OpenAI settings)

OPENAI_API_KEY=your-api-key

AGENT_SETTING_CONFIG="settings.openai.toml"

# Override for LiteLLM

MODEL_NAME=Azure/gpt-4o # Override model name

OPENAI_BASE_URL=https://your-litellm-endpoint.com # Override base URL

OPENAI_API_VERSION=2024-08-06 # Override API version

Setup Instructions:

.env file:

# Groq Configuration

GROQ_API_KEY=your-groq-api-key-here

AGENT_SETTING_CONFIG="settings.groq.toml"

# Optional override

MODEL_NAME=llama-3.1-70b-versatile # Override model name

Default Values:

settings.groq.toml

Setup Instructions:

.env file:

# OpenRouter Configuration

OPENROUTER_API_KEY=your-openrouter-api-key

AGENT_SETTING_CONFIG="settings.openrouter.toml"

OPENROUTER_BASE_URL="https://openrouter.ai/api/v1"

# Optional override

MODEL_NAME=openai/gpt-4o # Override model name

CUGA uses TOML configuration files located in src/cuga/configurations/models/:

- settings.openai.toml - OpenAI configuration (also supports LiteLLM via base URL override)
- settings.watsonx.toml - WatsonX configuration
- settings.azure.toml - Azure OpenAI configuration
- settings.groq.toml - Groq configuration
- settings.openrouter.toml - OpenRouter configuration

Each file contains agent-specific model settings that can be overridden by environment variables.
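As a rough illustration of the override rule above (an environment variable wins over the file default), here is a minimal sketch. It does not read CUGA's TOML files: `DEFAULT_MODELS` and `effective_model` are hypothetical, and the two defaults are simply the values quoted under "Default Values" earlier in this section.

```python
# Defaults quoted from the "Default Values" entries above; other providers are omitted.
DEFAULT_MODELS = {
    "settings.openai.toml": "gpt-4o",
    "settings.watsonx.toml": "meta-llama/llama-4-maverick-17b-128e-instruct-fp8",
}


def effective_model(env: dict[str, str]) -> str:
    """Return MODEL_NAME if set, otherwise the default for the selected config file."""
    config = env["AGENT_SETTING_CONFIG"]
    return env.get("MODEL_NAME") or DEFAULT_MODELS[config]


# Without an override, the file default applies.
default_choice = effective_model({"AGENT_SETTING_CONFIG": "settings.openai.toml"})

# With MODEL_NAME set, the environment variable takes precedence.
override_choice = effective_model(
    {"AGENT_SETTING_CONFIG": "settings.openai.toml", "MODEL_NAME": "Azure/gpt-4o"}
)
```

Passing `dict(os.environ)` instead of a literal dictionary would apply the same rule to the current process environment.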

💡 Tip: Want to use your own tools or add your MCP tools? Check out src/cuga/backend/tools_env/registry/config/mcp_servers.yaml for examples of how to configure custom tools and APIs, including those for digital sales.

CUGA can be easily integrated into your Python applications as a library. The SDK provides a clean, minimal API for creating and invoking agents with custom tools.

📚 SDK Documentation: SDK Documentation

from cuga import CugaAgent
from langchain_core.tools import tool
import asyncio


@tool
def add_numbers(a: int, b: int) -> int:
    '''Add two numbers together'''
    return a + b


@tool
def multiply_numbers(a: int, b: int) -> int:
    '''Multiply two numbers together'''
    return a * b


# Create agent with tools
agent = CugaAgent(tools=[add_numbers, multiply_numbers])


async def main():
    # Add an Intent Guard to block specific operations
    await agent.policies.add_intent_guard(
        name="Block Delete Operations",
        description="Prevents deletion of critical data",
        keywords=["delete", "remove", "erase"],
        response="Deletion operations are not permitted for security reasons.",
        priority=100  # Higher priority = checked first
    )

    # Add a Playbook to provide step-by-step guidance for complex workflows
    await agent.policies.add_playbook(
        name="Budget Analysis Workflow",
        description="Multi-step process for analyzing financial budgets",
        natural_language_trigger=["When user asks to analyze their budget"],
        content="""# Budget Analysis Workflow

## Step 1: Calculate Total Expenses
- Sum all expense categories using add_numbers
- Document each category amount

## Step 2: Calculate Total Revenue
- Sum all revenue streams using add_numbers
- Include all income sources

## Step 3: Calculate Profit Margin
- Use multiply_numbers to calculate profit (revenue - expenses)
- Calculate margin percentage

## Step 4: Generate Recommendations
- Compare against target budget
- Identify areas for optimization
- Provide actionable insights""",
        priority=50
    )

    result = await agent.invoke("Analyze my budget: expenses are 5000 and 3000, revenue is 12000")
    print(result.answer)  # The agent's response


if __name__ == "__main__":
    asyncio.run(main())

- CugaAgent(tools=[...]) → await agent.invoke(message)
- agent.stream()
- thread_id

📚 Documentation: SDK Guide | Policies Guide

CUGA includes a built-in knowledge base powered by LangChain and local vector stores. Docling is integrated for document ingestion: it parses and normalizes PDFs, Office files, HTML, Markdown, images, and other supported types before chunking and embedding, so the pipeline stays self-contained with no external document services.

When enabled, the agent can search, ingest, and manage documents.

Try the knowledge demo: same as the main demo but with the knowledge engine on (upload documents and query them):

cuga start demo_knowledge

Knowledge is enabled by default via settings.toml. The SDK auto-injects knowledge tools and awareness into the agent, so it knows what documents are available and how to search them.

from cuga import CugaAgent
import asyncio

agent = CugaAgent(enable_knowledge=True)


async def main():
    # Ingest a document
    await agent.knowledge.ingest("/path/to/quarterly_report.pdf")

    # The agent now automatically knows about this document
    result = await agent.invoke("What does the report say about Q4 revenue?")
    print(result.answer)  # Agent searches knowledge base and answers

    # Direct search
    results = await agent.knowledge.search("Q4 revenue figures")
    for r in results:
        print(f"{r['filename']} (page {r['page']}): {r['text'][:100]}")

    # List documents
    docs = await agent.knowledge.list_documents()

    # Clean up
    await agent.aclose()


asyncio.run(main())

Documents can be scoped to a specific conversation thread:

thread_id = "user-session-123"

# Ingest into session scope (temporary, per-conversation)

await agent.knowledge.ingest("/path/to/file.pdf", scope="session", thread_id=thread_id)

# Search session documents

results = await agent.knowledge.search("query", scope="session", thread_id=thread_id)

# Agent scope (default) — permanent, shared across conversations

await agent.knowledge.ingest("/path/to/file.pdf", scope="agent")

agent = CugaAgent(tools=[my_tools], enable_knowledge=False)

PDF, DOCX, XLSX, PPTX, HTML, Markdown, images, and more (via Docling).

Orchestrate multiple agents with a single supervisor: delegate tasks to specialized sub-agents, mix local agents with remote A2A agents, and pass data between them.

📚 Documentation: CugaSupervisor

Try the supervisor demo: run the multi-agent demo (CRM + email sub-agents) with:

cuga start demo_supervisor

from cuga import CugaAgent, CugaSupervisor
from langchain_core.tools import tool
import asyncio


@tool
def get_customers(limit: int = 10) -> str:
    """Fetch top customers from CRM with name, email, and revenue. Returns a formatted string."""
    customers = [
        "Alice (alice@example.com, $250,000)",
        "Bob (bob@example.com, $180,000)",
        "Carol (carol@example.com, $120,000)",
        "Dave (dave@example.com, $95,000)",
        "Eve (eve@example.com, $88,000)",
    ]
    top = customers[: min(limit, len(customers))]
    return "Top customers by revenue: " + "; ".join(f"{i+1}. {c}" for i, c in enumerate(top))


@tool
def send_email(to: str, body: str) -> str:
    """Send an email. Returns confirmation."""
    return f"Email sent successfully to {to}"


async def main():
    crm_agent = CugaAgent(tools=[get_customers])
    crm_agent.description = "CRM and customer data"

    email_agent = CugaAgent(tools=[send_email])
    email_agent.description = "Sending emails and notifications"

    supervisor = CugaSupervisor(agents={
        "crm": crm_agent,
        "email": email_agent,
    })

    result = await supervisor.invoke("Get our top 5 customers by revenue, then send the top customer a thank-you email")
    print(result.answer)


asyncio.run(main())

To add a remote agent via A2A, pass an external config in agents: "analytics": {"type": "external", "description": "...", "config": {"a2a_protocol": {"endpoint": "http://localhost:9999", "transport": "http"}}}.
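The inline external config above is easier to read when built by a small helper. The sketch below only constructs the dictionary shape quoted in the previous paragraph; `external_agent` is a hypothetical helper, and the description text is a placeholder.

```python
def external_agent(description: str, endpoint: str, transport: str = "http") -> dict:
    """Build an external (A2A) agent entry in the shape shown above."""
    return {
        "type": "external",
        "description": description,
        "config": {"a2a_protocol": {"endpoint": endpoint, "transport": transport}},
    }


# Placeholder description; the endpoint is the one from the example above.
remote_agents = {
    "analytics": external_agent("Remote analytics agent", "http://localhost:9999"),
}

# The entry could then sit alongside local agents, for example:
# supervisor = CugaSupervisor(agents={"crm": crm_agent, **remote_agents})
```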

- CugaAgent instances with external agents via A2A, task-only or variables in metadata if enabled.
- variables=["var_name"] to pass previous agent outputs or context to the next agent (for internal agents, or A2A when pass_variables_a2a is enabled in settings).

You can also load agents from YAML with CugaSupervisor.from_yaml("path/to/config.yaml"). Enable the supervisor in settings.toml under [supervisor] when using the server.

CUGA supports isolated code execution using Docker/Podman containers for enhanced security.

Install container runtime: Download and install Rancher Desktop or Docker.

Install sandbox dependencies:

uv sync --group sandbox

Start with remote sandbox enabled:

cuga start demo --sandbox

This automatically configures CUGA to use Docker/Podman for code execution instead of local execution.

Test your sandbox setup (optional):

# Test local sandbox (default)

cuga test-sandbox

# Test remote sandbox with Docker/Podman

cuga test-sandbox --remote

You should see the output: ('test succeeded\n', {})

Note: Without the --sandbox flag, CUGA uses local Python execution (default), which is faster but provides less isolation.

CUGA supports E2B for cloud-based code execution in secure, ephemeral sandboxes. This provides better isolation than local execution while being faster than Docker/Podman containers.

Get an E2B API key:

Set up the E2B template:

# Install E2B CLI

npm install -g @e2b/cli

# Login with your API key

e2b auth login

# Create a template (one-time setup)

# This creates a 'cuga-langchain' template that CUGA uses

e2b template build --name cuga-langchain

Install E2B dependencies:

uv sync --group e2b

Configure environment:

Add to your .env file:

E2B_API_KEY=your-e2b-api-key-here

E2B runs in the cloud and needs to call your local API registry to execute tools. You need to expose your local registry publicly using a tunneling service like ngrok.

Best if you have multiple ports available:

# In a separate terminal, start ngrok tunnel to registry

ngrok http 8001

# You'll get a public URL like: https://abc123.ngrok.io

# Copy this URL

Then edit ./src/cuga/settings.toml:

[server_ports]

function_call_host = "https://abc123.ngrok.io" # Your ngrok URL

Best if you're restricted to 1 port - CUGA will proxy calls to the registry:

# In a separate terminal, start ngrok tunnel to CUGA

ngrok http 7860

# You'll get a public URL like: https://xyz789.ngrok.io

# Copy this URL

Then edit ./src/cuga/settings.toml:

[server_ports]

function_call_host = "https://xyz789.ngrok.io" # Your ngrok URL

CUGA automatically proxies /functions/call requests to the registry when using the CUGA port.

Edit ./src/cuga/settings.toml:

[advanced_features]

e2b_sandbox = true

e2b_sandbox_mode = "per-session" # Options: "per-session" | "single" | "per-call"

e2b_sandbox_ttl = 600 # Cache TTL in seconds (10 minutes)

- per-session (default): One sandbox per conversation thread, cached for reuse
- single: Single shared sandbox across all threads (most cost-effective)
- per-call: New sandbox for each execution (most isolated, highest cost)

# Make sure ngrok is running in another terminal

cuga start demo

E2B will automatically execute code in cloud sandboxes. You'll see logs indicating "CODE SENT TO E2B SANDBOX" when E2B is active.
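The three `e2b_sandbox_mode` values and the TTL described above can be pictured with a toy cache. This is a sketch under stated assumptions, not CUGA's or E2B's code: `SandboxPool` is hypothetical, sandboxes are represented by integer IDs, and expiry is assumed to be fixed at creation time.

```python
import itertools
import time


class SandboxPool:
    """Illustrative only: hands out sandbox IDs according to a reuse mode."""

    def __init__(self, mode: str = "per-session", ttl: float = 600.0, clock=time.monotonic):
        if mode not in {"per-session", "single", "per-call"}:
            raise ValueError(f"unknown mode: {mode}")
        self.mode = mode
        self.ttl = ttl
        self.clock = clock
        self._ids = itertools.count(1)
        self._cache: dict[str, tuple[int, float]] = {}  # key -> (sandbox id, expiry time)

    def acquire(self, thread_id: str) -> int:
        if self.mode == "per-call":
            return next(self._ids)  # never cached: a new sandbox every time
        # "single" shares one cache entry; "per-session" keys by conversation thread.
        key = "shared" if self.mode == "single" else thread_id
        now = self.clock()
        cached = self._cache.get(key)
        if cached is not None and cached[1] > now:
            return cached[0]
        sandbox_id = next(self._ids)
        self._cache[key] = (sandbox_id, now + self.ttl)
        return sandbox_id


pool = SandboxPool("per-session")
same_thread_reused = pool.acquire("thread-a") == pool.acquire("thread-a")
other_thread_separate = pool.acquire("thread-a") != pool.acquire("thread-b")
```

The trade-off follows the list above: more sharing means fewer sandboxes to pay for, while less sharing means stronger isolation between executions.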

function_call_host in settings.toml with your ngrok URL

Benefits of E2B:

Note: E2B is a paid service with a free tier. Check e2b.dev/pricing for details.

Modes under ./src/cuga:

| Mode | File | Description |
|---|---|---|
| fast | ./configurations/modes/fast.toml | Optimized for speed |
| balanced | ./configurations/modes/balanced.toml | Balance between speed and precision (default) |
| accurate | ./configurations/modes/accurate.toml | Optimized for precision |
| custom | ./configurations/modes/custom.toml | User-defined settings |

configurations/

├── modes/fast.toml

├── modes/balanced.toml

├── modes/accurate.toml

└── modes/custom.toml

Edit settings.toml:

[features]

cuga_mode = "fast" # or "balanced" or "accurate" or "custom"

Documentation: ./docs/flags.html

| Mode | Description |
|---|---|
| api | API-only mode - executes API tasks (default) |
| web | Web-only mode - executes web tasks using browser extension |
| hybrid | Hybrid mode - executes both API tasks and web tasks using browser extension |

- mode = 'api'
- mode = 'web'
- mode = 'hybrid'
- demo_mode.start_url

Edit ./src/cuga/settings.toml:

[demo_mode]

start_url = "https://opensource-demo.orangehrmlive.com/web/index.php/auth/login" # Starting URL for hybrid mode

[advanced_features]

mode = 'api' # 'api', 'web', or 'hybrid'

Each .md file contains specialized instructions that are automatically integrated into the CUGA's internal prompts when that component is active. Simply edit the markdown files to customize behavior for each node type.

Available instruction sets: answer, api_planner, code_agent, plan_controller, reflection, shortlister, task_decomposition

configurations/
└── instructions/
    ├── instructions.toml
    ├── default/
    │   ├── answer.md
    │   ├── api_planner.md
    │   ├── code_agent.md
    │   ├── plan_controller.md
    │   ├── reflection.md
    │   ├── shortlister.md
    │   └── task_decomposition.md
    └── [other instruction sets]/

Edit configurations/instructions/instructions.toml:

[instructions]

instruction_set = "default" # or any instruction set above

- uv sync --extra memory
- enable_memory = true in settings.toml
- cuga start memory

Watch CUGA with Memory enabled

[LINK]

Would you like to test this? (Advanced Demo)

- enable_memory flag to true
- cuga start memory
- cuga start demo_crm --sample-memory-data
- Identify the common cities between my cuga_workspace/cities.txt and cuga_workspace/company.txt. Here you should see the errors related to CodeAgent. Wait for a minute for tips to be generated. Tip generation can be confirmed from the terminal where cuga start memory was run.

Evolve can now be used with CugaLite to bring task-specific guidance into the prompt before execution and save completed trajectories after the run.

This flow is:

- cuga[evolve] if you want the upstream Evolve package available locally, or let uvx fetch it on demand

Choose how Evolve will be started.

Recommended for normal CUGA usage: let the CUGA MCP registry launch Evolve for you.

In the manager UI, add an MCP tool with:

- evolve
- Command (stdio)
- uvx

--from
altk-evolve
--with
setuptools<70
evolve-mcp

Then set the tool environment values in the UI. Recommended defaults:

EVOLVE_MODEL_NAME=Azure/gpt-4o

OPENAI_API_KEY=env://OPENAI_API_KEY # pragma: allowlist secret

OPENAI_BASE_URL=env://OPENAI_BASE_URL # pragma: allowlist secret

Notes:

- Azure/gpt-4o with the exact allowed model if needed.
- OPENAI_API_KEY=env://OPENAI_API_KEY means "read the real value from the CUGA process environment at runtime".
- OPENAI_BASE_URL=env://OPENAI_BASE_URL means "read the LiteLLM/OpenAI-compatible base URL from the CUGA process environment at runtime".
- setuptools<70 is included because milvus-lite still imports pkg_resources.

Important: this command starts Evolve in stdio mode through the upstream Evolve package. It is intended to be launched by the CUGA registry, not run manually in a separate terminal.

Alternative for standalone/manual debugging: run Evolve yourself as an SSE server:

# Quote the version specifier so the shell does not treat "<70" as input redirection
uvx --from altk-evolve --with "setuptools<70" evolve-mcp --transport sse --port 8201

Edit ./src/cuga/settings.toml and enable lite mode plus Evolve:

[advanced_features]

lite_mode = true

[evolve]

enabled = true

url = "http://127.0.0.1:8201/sse"

mode = "auto"

app_name = "evolve"

lite_mode_only = true

save_on_success = true

save_on_failure = true

async_save = true

timeout = 30.0

If you use the recommended registry-managed setup above, keep mode = "auto" or set mode = "registry".

If you run Evolve manually as a standalone SSE server, keep url = "http://127.0.0.1:8201/sse" and set mode = "direct" if you want to skip registry lookup entirely.
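The choice between the three `mode` values can be summarized as a small decision function. This is a sketch of the behavior described in this section, not CUGA's code: `resolve_evolve_target` is hypothetical, the string "registry" stands in for a registry-managed connection, and raising an error when `mode = "registry"` finds no registry entry is an assumption.

```python
def resolve_evolve_target(mode: str, registry_has_evolve: bool, url: str) -> str:
    """Return "registry" or the direct SSE URL, depending on the configured mode."""
    if mode == "direct":
        return url  # skip registry lookup entirely
    if mode == "registry":
        if not registry_has_evolve:
            # Assumption: registry mode has no fallback.
            raise RuntimeError("Evolve is not registered as an MCP tool")
        return "registry"
    if mode == "auto":
        # Prefer the registry-managed server, otherwise fall back to the URL.
        return "registry" if registry_has_evolve else url
    raise ValueError(f"unknown mode: {mode!r}")


sse_url = "http://127.0.0.1:8201/sse"
auto_with_registry = resolve_evolve_target("auto", True, sse_url)
auto_without_registry = resolve_evolve_target("auto", False, sse_url)
```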

If you use Evolve tip generation, make sure the environment for the Evolve MCP server includes the required Evolve model settings. Otherwise save_trajectory may fail later with a LiteLLM/OpenAI model access error even when the MCP connection itself works.

cuga start demo

- Evolve Guidelines section
- async_save = true saves trajectories in the background and avoids blocking the response
- save_on_success and save_on_failure let you control which runs are recorded
- mode = "auto" lets CUGA use a registry-managed Evolve MCP server when available and fall back to the direct SSE URL otherwise
- mode = "registry" is best when you want Evolve to be fully managed as a normal CUGA MCP tool
- mode = "direct" is best when you are manually running an SSE Evolve server outside CUGA
- cuga start memory namespace / tip workflow

• Change ./src/cuga/settings.toml: cuga_mode = "save_reuse_fast"

• Run: cuga start demo

• First run: get top account by revenue

get top 2 accounts by revenue

• Flow now will be saved:

• Verify reuse: get top 4 accounts by revenue

CUGA supports three types of tool integrations. Each approach has its own use cases and benefits:

| Tool Type | Best For | Configuration | Runtime Loading |

|---|---|---|---|

| OpenAPI | REST APIs, existing services | mcp_servers.yaml | ✅ Build |

| MCP | Custom protocols, complex integrations | mcp_servers.yaml | ✅ Build |

| LangChain | Python functions, rapid prototyping | Direct import | ✅ Runtime |

All tests are available through ./src/scripts/run_tests.sh:

Unit Tests

Policy Integration Tests (src/cuga/backend/cuga_graph/policy/tests/)

SDK Integration Tests (src/cuga/sdk_core/tests/)

Stability Tests (run_stability_tests.py)

Run all tests (unit, integration, and stability):

./src/scripts/run_tests.sh

Run unit tests only:

./src/scripts/run_tests.sh unit_tests

For information on how to evaluate, see the CUGA Evaluation Documentation

CUGA is open source because we believe trustworthy enterprise agents must be built together.

Here's how you can help:

All contributions are welcome through GitHub Issues - whether it's sharing use cases, requesting features, or reporting bugs!

Among other things, we're exploring the following directions:

Please follow the contribution guide in CONTRIBUTING.md.

FAQs

CUGA is an open-source generalist agent for the enterprise, supporting complex task execution on web and APIs, OpenAPI/MCP integrations, composable architecture, reasoning modes, and policy-aware features.

We found that cuga demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 1 open source maintainer collaborating on the project.

Did you know?

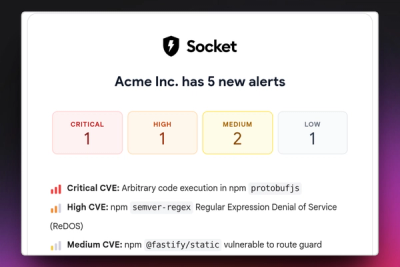

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Research

/Security News

Bitwarden CLI 2026.4.0 was compromised in the Checkmarx supply chain campaign after attackers abused a GitHub Action in Bitwarden’s CI/CD pipeline.

Research

/Security News

Docker and Socket have uncovered malicious Checkmarx KICS images and suspicious code extension releases in a broader supply chain compromise.

Product

Stay on top of alert changes with filtered subscriptions, batched summaries, and notification routing built for triage.