Research

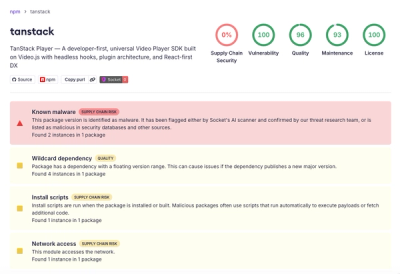

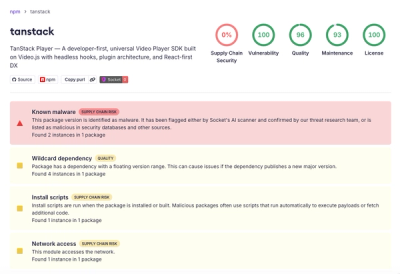

Malicious npm Package Brand-Squats TanStack to Exfiltrate Environment Variables

A brand-squatted TanStack npm package used postinstall scripts to steal .env files and exfiltrate developer secrets to an attacker-controlled endpoint.

dbl-discoverx

Your Swiss-Army-knife for Lakehouse administration.

DiscoverX automates administration tasks that require inspecting or applying operations to a large number of Lakehouse assets.

You can execute a SQL template against multiple tables by selecting them with from_tables and applying with_sql.

DiscoverX will concurrently execute the SQL template against all Delta tables matching the selection pattern and return a Spark DataFrame with the union of all results.
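As a minimal sketch of that flow, the helper below chains the documented from_tables, with_concurrency, with_sql and apply calls. It assumes `dx` is a DiscoverX `DX` instance created as in the Get started section and that it runs in a Databricks notebook. The function name `sample_row_per_table` and the default pattern `dev_*.*.sample*` are hypothetical and chosen only for illustration.

```python
def sample_row_per_table(dx, pattern="dev_*.*.sample*", concurrency=10):
    """Fetch one JSON-encoded row from every table matching `pattern`.

    `dx` is assumed to be a DiscoverX `DX` instance. The default pattern is
    a hypothetical example, not a name taken from the DiscoverX docs.
    """
    template = "SELECT to_json(struct(*)) AS row FROM {full_table_name} LIMIT 1"
    return (
        dx.from_tables(pattern)          # select tables; * acts as a wildcard
        .with_concurrency(concurrency)   # how many queries run at the same time
        .with_sql(template)              # the template is filled in once per table
        .apply()                         # run the queries and union the results
    )
```

Taking `dx` as a parameter keeps the helper free of any import, so it can be defined before the library is installed and reused with different selection patterns.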

Some useful SQL templates are

- DESCRIBE DETAIL {full_table_name}
- SHOW HISTORY {full_table_name}
- CREATE TABLE IF NOT EXISTS {table_catalog}.{table_schema}_clone.{table_name} DEEP CLONE {full_table_name}
- CREATE TABLE IF NOT EXISTS {table_catalog}.{table_schema}.{table_name}_empty_copy LIKE {full_table_name}
- ALTER TABLE {full_table_name} SET TAGS ('tag_name' = 'tag_value')
- ALTER TABLE {full_table_name} SET OWNER TO principal
- SHOW PARTITIONS {full_table_name}
- SELECT to_json(struct(*)) AS row FROM {full_table_name} LIMIT 1
- SELECT {stack_string_columns} AS (column_name, string_value) FROM {full_table_name}
- SELECT {stack_all_columns_as_string} AS (column_name, string_value) FROM {full_table_name}
- ALTER TABLE {full_table_name} CLUSTER BY (column1, column2)
- VACUUM {full_table_name}
- OPTIMIZE {full_table_name}

The available variables to use in the SQL templates are

- {full_table_name} - The full table name (catalog.schema.table)
- {table_catalog} - The catalog name
- {table_schema} - The schema name
- {table_name} - The table name
- {stack_string_columns} - A SQL expression stack(N, 'col1', `col1`, ... , 'colN', `colN`) for all N columns of type string
- {stack_all_columns_as_string} - A SQL expression stack(N, 'col1', cast(`col1` AS string), ... , 'colN', cast(`colN` AS string)) for all N columns

You can filter for tables that contain a specific column name, and then use that column name in the queries.
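The column filter can be sketched as follows, using the documented having_columns method before with_sql. The helper name `distinct_column_values` and the default pattern `*.*.*` are assumptions made for illustration, and `dx` is again assumed to be a `DX` instance.

```python
def distinct_column_values(dx, column, pattern="*.*.*"):
    """Return the distinct values of `column` across all tables that have it.

    `dx` is assumed to be a DiscoverX `DX` instance; the helper name and the
    default pattern are hypothetical.
    """
    # Doubled braces keep {full_table_name} as a literal placeholder in the
    # f-string, so DiscoverX (not Python) substitutes the table name.
    template = f"SELECT DISTINCT {column} FROM {{full_table_name}}"
    return (
        dx.from_tables(pattern)
        .having_columns(column)   # keep only tables that contain the column
        .with_sql(template)
        .apply()
    )
```

Because having_columns runs before with_sql, the query can reference the column without failing on tables that lack it.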

DiscoverX can concurrently apply Python functions to multiple assets.
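A function of this kind receives one table_info object per table. The sketch below relies only on the table_info properties described in this section (catalog, schema, table, columns) and exercises the function against a stand-in object, because the call that dispatches it across tables is not shown in this text. The function name `describe_table`, the stand-in values, and the assumption that data_type holds a type name such as "string" are all illustrative.

```python
from types import SimpleNamespace


def describe_table(table_info):
    """Summarize one table using only the documented table_info properties."""
    full_name = f"{table_info.catalog}.{table_info.schema}.{table_info.table}"
    # Assumption: data_type holds the column's type name, e.g. "string".
    string_columns = [
        column.name
        for column in table_info.columns
        if column.data_type.lower() == "string"
    ]
    return {
        "table": full_name,
        "column_count": len(table_info.columns),
        "string_columns": string_columns,
    }


# Stand-in for a table_info object; every value here is hypothetical.
example = SimpleNamespace(
    catalog="dev",
    schema="sales",
    table="orders",
    columns=[
        SimpleNamespace(name="id", data_type="bigint", partition_index=None),
        SimpleNamespace(name="email", data_type="string", partition_index=None),
    ],
)

print(describe_table(example))
```

Keeping the function free of Spark calls makes it easy to check locally before running it against real tables.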

The properties available in table_info are

- catalog - The catalog name
- schema - The schema name
- table - The table name
- columns - A list of ColumnInfo, with name, data_type, and partition_index
- tags - A list of TagsInfo, with column_tags, table_tags, schema_tags, and catalog_tags. Tags are only populated if the from_tables(...) operation is followed by .with_tags(True)

To install DiscoverX, type the following in a Databricks notebook:

%pip install dbl-discoverx

Get started

from discoverx import DX

dx = DX(locale="US")

You can now run operations across multiple tables.

The available dx functions are

- from_tables("<catalog>.<schema>.<table>") selects tables based on the specified pattern (use * as a wildcard). Returns a DataExplorer object with methods:
  - having_columns restricts the selection to tables that have the specified columns
  - with_concurrency defines how many queries are executed concurrently (10 by default)
  - with_sql applies a SQL template to all tables. After this command you can apply an action
  - unpivot_string_columns returns a melted (unpivoted) dataframe with all string columns from the selected tables. After this command you can apply an action
  - scan (experimental) scans the lakehouse with the regex expressions defined by the rules, to power the semantic classification
- intro gives an introduction to the library
- scan [deprecated] scans the lakehouse with the regex expressions defined by the rules, to power the semantic classification
- display_rules shows the rules available for semantic classification
- search [deprecated] searches the lakehouse content by leveraging the semantic classes identified with scan (e.g. email, IP address)
- select_by_class [deprecated] selects data from the lakehouse content by semantic class
- delete_by_class [deprecated] deletes from the lakehouse by semantic class

After a with_sql or unpivot_string_columns command, you can apply the following actions:

- explain explains the queries that would be executed
- display executes the queries and shows the first 1000 rows of the result in a unioned dataframe
- apply returns a unioned dataframe with the result from the queries

Please note that all projects in the /databrickslabs github account are provided for your exploration only, and are not formally supported by Databricks with Service Level Agreements (SLAs). They are provided AS-IS and we do not make any guarantees of any kind. Please do not submit a support ticket relating to any issues arising from the use of these projects.

Any issues discovered through the use of this project should be filed as GitHub Issues on the Repo. They will be reviewed as time permits, but there are no formal SLAs for support.

FAQs

DiscoverX - Map and Search your Lakehouse

We found that dbl-discoverx demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 1 open source maintainer collaborating on the project.

Research

A brand-squatted TanStack npm package used postinstall scripts to steal .env files and exfiltrate developer secrets to an attacker-controlled endpoint.

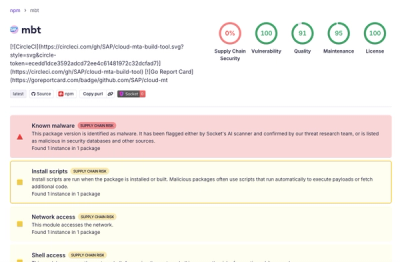

Research

Compromised SAP CAP npm packages download and execute unverified binaries, creating urgent supply chain risk for affected developers and CI/CD environments.

Company News

Socket has acquired Secure Annex to expand extension security across browsers, IDEs, and AI tools.