Research

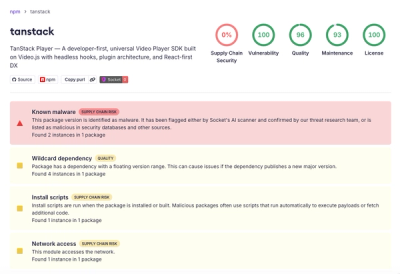

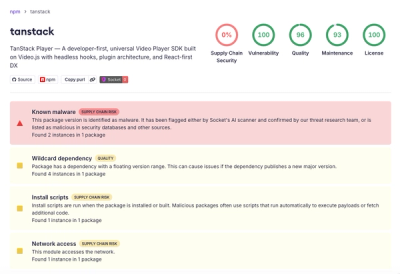

Malicious npm Package Brand-Squats TanStack to Exfiltrate Environment Variables

A brand-squatted TanStack npm package used postinstall scripts to steal .env files and exfiltrate developer secrets to an attacker-controlled endpoint.

doc2mark


Turn any document into clean Markdown -- in one line.

# Core (no OCR)
pip install doc2mark

# With OpenAI OCR
pip install doc2mark[ocr]

# With Google Gemini / Vertex AI OCR
pip install doc2mark[vertex_ai]

# Everything
pip install doc2mark[all]

from doc2mark import UnifiedDocumentLoader

loader = UnifiedDocumentLoader()
result = loader.load("document.pdf")
print(result.content)

doc2mark supports three OCR providers. Pass ocr_provider to UnifiedDocumentLoader to choose one.

The openai provider uses GPT-4.1 vision. It requires an API key.

export OPENAI_API_KEY=sk-...

loader = UnifiedDocumentLoader(ocr_provider="openai")
result = loader.load(
    "scanned_doc.pdf",
    extract_images=True,
    ocr_images=True,
)

Customize the model or use an OpenAI-compatible endpoint:

loader = UnifiedDocumentLoader(
    ocr_provider="openai",
    model="gpt-4o-mini",  # cheaper model
    base_url="http://localhost:11434/v1",  # self-hosted / Ollama
    api_key="any-string",
)

The vertex_ai provider uses Gemini models via Google Cloud. It authenticates with Application Default Credentials.

export GOOGLE_APPLICATION_CREDENTIALS=/path/to/service-account-key.json

loader = UnifiedDocumentLoader(
    ocr_provider="vertex_ai",
    project="my-gcp-project",  # or set GOOGLE_CLOUD_PROJECT
)
result = loader.load("scan.pdf", extract_images=True, ocr_images=True)

Override model and region:

loader = UnifiedDocumentLoader(
    ocr_provider="vertex_ai",
    project="my-gcp-project",
    model="gemini-2.0-flash",  # default: gemini-3.1-flash-lite-preview
    location="us-central1",  # default: global
)

The tesseract provider runs OCR locally, so no API key is needed. It requires Tesseract to be installed on your system.

from doc2mark.ocr.base import OCRConfig

loader = UnifiedDocumentLoader(
    ocr_provider="tesseract",
    ocr_config=OCRConfig(language="chinese"),  # optional language hint
)
result = loader.load("scan.png", extract_images=True, ocr_images=True)

| Provider | Requires | Best for | Install extra |
|---|---|---|---|
| openai | OPENAI_API_KEY | Highest accuracy, complex layouts | pip install doc2mark[ocr] |
| vertex_ai | GCP service account | Google Cloud workflows, Gemini models | pip install doc2mark[vertex_ai] |
| tesseract | Tesseract binary | Offline / air-gapped environments | pip install doc2mark[ocr] |
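
The "Requires" column suggests a simple way to choose a provider at runtime. The following is a minimal sketch, not part of doc2mark: `pick_ocr_provider` is a hypothetical helper, and the preference order (hosted providers first, Tesseract as the fallback) is an assumption. Application Default Credentials can also come from sources other than `GOOGLE_APPLICATION_CREDENTIALS`, which this sketch does not detect.

```python
import os
import shutil
from typing import Mapping, Optional


def pick_ocr_provider(
    env: Optional[Mapping[str, str]] = None,
    tesseract_available: Optional[bool] = None,
) -> Optional[str]:
    """Return an ocr_provider name based on which requirement is satisfied.

    Hypothetical helper: the order of preference is an assumption.
    """
    env = os.environ if env is None else env
    if tesseract_available is None:
        # Look for the Tesseract binary on PATH.
        tesseract_available = shutil.which("tesseract") is not None

    if env.get("OPENAI_API_KEY"):
        return "openai"
    if env.get("GOOGLE_APPLICATION_CREDENTIALS"):
        return "vertex_ai"
    if tesseract_available:
        return "tesseract"
    return None  # no OCR provider usable; fall back to text-only extraction
```

The returned string can be passed as `ocr_provider` to `UnifiedDocumentLoader`; a `None` result means no provider requirement was detected.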

| Category | Formats |
|---|---|
| Office | DOCX, XLSX, PPTX |
| PDF | PDF (text + scanned) |
| Images | PNG, JPG, WEBP, TIFF, BMP, GIF, HEIC, HEIF, AVIF |
| Text / Data | TXT, CSV, TSV, JSON, JSONL |
| Markup | HTML, XML, Markdown |
| Legacy | DOC, XLS, PPT, RTF, PPS (requires LibreOffice) |
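
To check in advance which category a file falls into (for example, to warn that a legacy file needs LibreOffice), the table can be mirrored as a lookup. This is an illustrative sketch only: `FORMAT_CATEGORIES` and `format_category` are hypothetical names, not doc2mark APIs, and mapping "Markdown" to the `.md` extension is an assumption. Alternate spellings such as `.jpeg` or `.htm` are not listed in the table and are left out here.

```python
from pathlib import Path
from typing import Optional

# Mirrors the format table above; extensions are lowercase without the dot.
FORMAT_CATEGORIES = {
    "Office": {"docx", "xlsx", "pptx"},
    "PDF": {"pdf"},
    "Images": {"png", "jpg", "webp", "tiff", "bmp", "gif", "heic", "heif", "avif"},
    "Text / Data": {"txt", "csv", "tsv", "json", "jsonl"},
    "Markup": {"html", "xml", "md"},  # "md" for Markdown is an assumption
    "Legacy": {"doc", "xls", "ppt", "rtf", "pps"},  # requires LibreOffice
}


def format_category(path: str) -> Optional[str]:
    """Return the table category for a file path, or None if it is not listed."""
    ext = Path(path).suffix.lower().lstrip(".")
    for category, extensions in FORMAT_CATEGORIES.items():
        if ext in extensions:
            return category
    return None
```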

from doc2mark import load

# Text-only extraction (no OCR)
md = load("report.pdf").content

# With OCR for embedded images
md = load("report.pdf", extract_images=True, ocr_images=True).content

from doc2mark import UnifiedDocumentLoader

loader = UnifiedDocumentLoader(ocr_provider="openai")
loader.batch_process(
    input_dir="documents/",
    output_dir="converted/",
    extract_images=True,
    ocr_images=True,
    save_files=True,
    show_progress=True,
)

from doc2mark import batch_process_files

results = batch_process_files(
    ["invoice.pdf", "contract.docx", "receipt.png"],
    output_dir="output/",
    extract_images=True,
    ocr_images=True,
)
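
When the file list is not known up front, it can be built from a directory tree. The sketch below uses only the standard library; `collect_files` is a hypothetical helper and is not provided by doc2mark.

```python
from pathlib import Path
from typing import Iterable, List


def collect_files(root: str, extensions: Iterable[str]) -> List[str]:
    """Recursively list files under root whose extension is in extensions.

    Extensions are matched case-insensitively, with or without a leading dot.
    """
    wanted = {"." + ext.lower().lstrip(".") for ext in extensions}
    return sorted(
        str(path)
        for path in Path(root).rglob("*")
        if path.is_file() and path.suffix.lower() in wanted
    )
```

The resulting list can be passed as the first argument to `batch_process_files` in place of a hand-written list of paths.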

doc2mark includes specialized prompts for different content types:

loader = UnifiedDocumentLoader(
    ocr_provider="openai",
    prompt_template="table_focused",  # optimized for tables
)

Available templates: default, table_focused, document_focused, multilingual, form_focused, receipt_focused, handwriting_focused, code_focused.

Control how complex tables (with merged cells) are rendered:

loader = UnifiedDocumentLoader(
    table_style="minimal_html",  # clean HTML with rowspan/colspan (default)
    # table_style="markdown_grid",  # markdown with merge annotations
    # table_style="styled_html",  # full HTML with inline styles
)

When using OpenAI or Vertex AI, each OCR result includes token usage in its metadata:

from doc2mark import UnifiedDocumentLoader

loader = UnifiedDocumentLoader(ocr_provider="openai")
result = loader.load("scan.pdf", extract_images=True, ocr_images=True)

# Token usage per OCR call is in result metadata
usage = result.metadata.get("token_usage", {})
print(usage)
# {"input_tokens": 1234, "output_tokens": 567, "total_tokens": 1801}
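
For cost tracking across many documents, the per-result usage dictionaries can be summed. This is a minimal sketch that assumes every usage dictionary has the three keys shown in the example output above; `total_token_usage` is a hypothetical helper, not a doc2mark function. Missing keys (for example, from a result that ran no OCR) are counted as zero.

```python
from typing import Dict, Iterable, Mapping

# Key names taken from the example output above.
USAGE_KEYS = ("input_tokens", "output_tokens", "total_tokens")


def total_token_usage(usages: Iterable[Mapping[str, int]]) -> Dict[str, int]:
    """Sum token counts across several token_usage dictionaries."""
    totals = dict.fromkeys(USAGE_KEYS, 0)
    for usage in usages:
        for key in USAGE_KEYS:
            totals[key] += int(usage.get(key, 0))
    return totals
```

Each input would typically come from `result.metadata.get("token_usage", {})` for one loaded document.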

# Single file to stdout
doc2mark report.pdf

# Save to file
doc2mark report.pdf -o report.md

# Batch convert a directory
doc2mark documents/ -o converted/ -r

# With OpenAI OCR
doc2mark scan.pdf --ocr openai --ocr-images

# With Tesseract OCR
doc2mark scan.pdf --ocr tesseract --ocr-images

# Disable OCR entirely
doc2mark report.pdf --ocr none --no-ocr-images

# JSON output
doc2mark report.pdf --format json
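
To drive the CLI from a script, the argument list can be assembled in Python and handed to `subprocess.run`. The sketch below uses only the flags that appear in the commands above; `build_doc2mark_command` is a hypothetical wrapper, and the flag ordering it produces is an assumption about what the CLI accepts.

```python
from typing import List, Optional


def build_doc2mark_command(
    source: str,
    output: Optional[str] = None,
    ocr: Optional[str] = None,
    ocr_images: bool = False,
    recursive: bool = False,
    output_format: Optional[str] = None,
) -> List[str]:
    """Build an argv list for the doc2mark CLI from the flags shown above."""
    cmd = ["doc2mark", source]
    if output:
        cmd += ["-o", output]
    if recursive:
        cmd.append("-r")
    if ocr:
        cmd += ["--ocr", ocr]
    if ocr_images:
        cmd.append("--ocr-images")
    if output_format:
        cmd += ["--format", output_format]
    return cmd
```

Passing the list to `subprocess.run(cmd, check=True)` avoids shell quoting issues with file names that contain spaces.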

MIT -- see LICENSE.


Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Research

A brand-squatted TanStack npm package used postinstall scripts to steal .env files and exfiltrate developer secrets to an attacker-controlled endpoint.

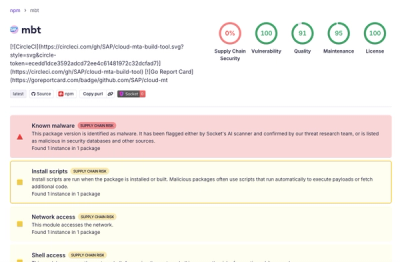

Research

Compromised SAP CAP npm packages download and execute unverified binaries, creating urgent supply chain risk for affected developers and CI/CD environments.

Company News

Socket has acquired Secure Annex to expand extension security across browsers, IDEs, and AI tools.